Beginnings of the Holodeck: AMD's DX11 GPU, Eyefinity and 6 Display Outputs

by Anand Lal Shimpi on September 10, 2009 2:30 PM EST - Posted in GPUs

Wanna see what 24.5 million pixels looks like?

That's six Dell 30" displays, each with an individual resolution of 2560 x 1600. The game is World of Warcraft and the man crouched in front of the setup is Carrell Killebrew; his name may sound familiar.

Driving all of this is AMD's next-generation GPU, which will be announced later this month. I didn't leave out any letters: there's a single GPU driving all of these panels. The actual rendered resolution is 7680 x 3200; WoW ran at over 80 fps with the details maxed. This is the successor to the RV770. We can't talk specs, but as of today's AMD press conference two details are public: 2.15 billion transistors and over 2.5 TFLOPs of performance. As expected, but nice to know regardless.
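As a quick sanity check on those numbers, here is a minimal Python sketch that works out the pixel count of the 7680 x 3200 surface and the pixel rate implied by the frame rate. The function name is illustrative, and 80 fps is treated as a floor because the demo ran at "over 80 fps".

```python
def surface_pixels(width, height):
    """Total number of pixels in a rendered surface."""
    return width * height


# Resolution of the six-panel demo as stated in the article.
pixels = surface_pixels(7680, 3200)

# "Over 80 fps" is used here as a lower bound, not a measured value.
fps_floor = 80
pixels_per_second = pixels * fps_floor

print(f"{pixels:,} pixels per frame")
print(f"at least {pixels_per_second / 1e9:.2f} gigapixels per second")
```

The per-frame figure is where the "24.5 million pixels" in the opening line comes from.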

The technology being demonstrated here is called Eyefinity and it actually all started in notebooks.

Not Multi-Monitor, but Single Large Surface

DisplayPort is gaining popularity. It's a very simple interface and you can expect to see mini-DisplayPort on notebooks and desktops alike in the very near future. Apple was the first to embrace it but others will follow.

The OEMs asked AMD for six possible DisplayPort outputs from their notebook GPUs: up to two internally for notebook panels, up to two externally for connectors on the side of the notebook and up to two for use via a docking station. To fulfill these needs, AMD had to build six lanes of DisplayPort output into its GPUs, driven by a single display engine. A single display engine could drive any two outputs, similar to how graphics cards work today.

Eventually someone looked at all of the outputs and realized that without too much effort you could drive six displays off of a single card - you just needed more display engines on the chip. AMD's DX11 GPU family does just that.

At the bare minimum, the lowest end AMD DX11 GPU can support up to 3 displays. At the high end? A single GPU will be able to drive up to 6 displays.

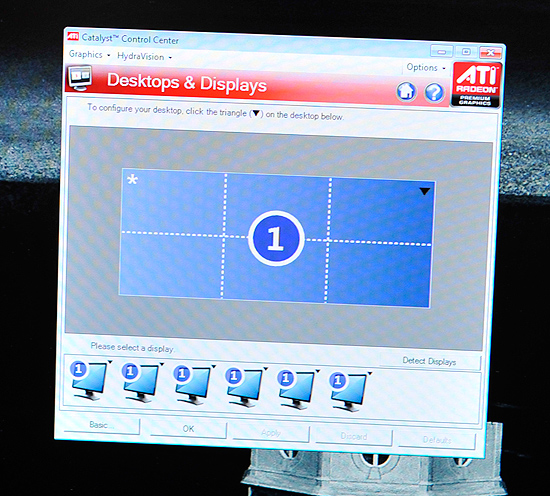

AMD's software makes the displays appear as one. This will work in Vista, Windows 7 as well as Linux.

The software layer makes it all seamless. The displays appear independent until you turn on SLS mode (Single Large Surface). With SLS on, they appear to Windows and its applications as one large, high resolution display. There's no multimonitor mess to deal with; it just works. This is the way to do multi-monitor, both for work and games.
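To make the idea of a single large surface concrete, here is a rough Python sketch of how one big surface could be carved into per-panel regions. This is purely illustrative: the `Viewport` type and `split_surface` function are hypothetical names, the layout ignores bezels, and nothing here reflects how AMD's driver actually does it.

```python
from typing import NamedTuple


class Viewport(NamedTuple):
    """Rectangle of the large surface shown on one panel (hypothetical type)."""
    x: int
    y: int
    width: int
    height: int


def split_surface(panel_w, panel_h, cols, rows):
    """Return one viewport per panel, row by row, left to right.

    Assumes identical panels arranged in a regular grid with no bezel gap.
    """
    return [
        Viewport(col * panel_w, row * panel_h, panel_w, panel_h)
        for row in range(rows)
        for col in range(cols)
    ]


# The 3x2 wall of 2560 x 1600 panels from the demo.
for index, viewport in enumerate(split_surface(2560, 1600, cols=3, rows=2)):
    print(index, viewport)
```

An application only ever sees the combined 7680 x 3200 surface; the split into panels happens underneath it.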

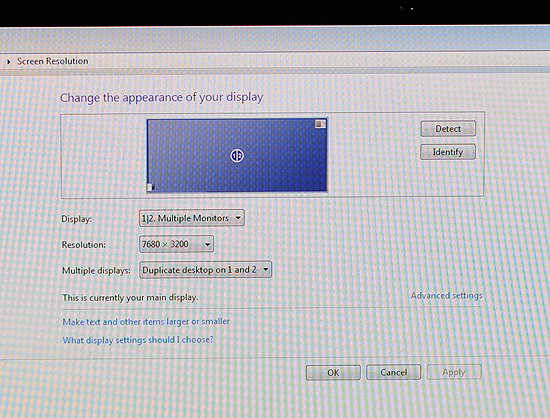

Note the desktop resolution of the 3x2 display setup

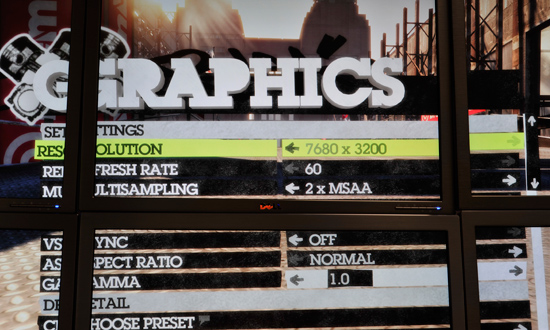

I played Dirt 2, a DX11 title, at 7680 x 3200 and saw definitely playable frame rates. I played Left 4 Dead and the experience was much better. Obviously this new GPU is powerful, although I wouldn't expect it to run everything at super high frame rates at 7680 x 3200.

Left 4 Dead in a 3 monitor configuration, 7680 x 1600

If a game pulls its resolution list from Windows, it'll work perfectly with Eyefinity.

With six 30" panels you're looking at several thousand dollars' worth of displays. That was never the ultimate intention of Eyefinity, despite its overwhelming sweetness. Instead the idea was to give gamers (and others in need of a single, high resolution display) the ability to piece together a display that offers more resolution and is more immersive than anything on the market today. The idea isn't to pick up six 30" displays but perhaps to add a third 20" panel to your existing setup, or buy five $150 displays to build the ultimate gaming setup. Even using 1680 x 1050 displays in a 5x1 arrangement (ideal for first person shooters apparently, since you get a nice wrap around effect) still nets you an 8400 x 1050 display. If you want more vertical real estate, switch over to a 3x2 setup and then you're at 5040 x 2100. That's more resolution for less money than most high end 30" panels.
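The layout math is simple enough to sketch in a few lines of Python. The helper below is illustrative only (the name `grid_resolution` is made up here) and assumes identical panels with no bezel compensation.

```python
def grid_resolution(panel_w, panel_h, cols, rows):
    """Combined resolution of a cols x rows grid of identical panels."""
    return panel_w * cols, panel_h * rows


# Layouts mentioned in the article, built from 1680 x 1050 panels.
layouts = {"5x1": (5, 1), "3x2": (3, 2)}

for name, (cols, rows) in layouts.items():
    width, height = grid_resolution(1680, 1050, cols, rows)
    print(f"{name}: {width} x {height} ({width * height:,} pixels)")
```

Plugging in 2560 x 1600 panels with a 3x2 layout gives the 7680 x 3200 of the six-panel demo.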

Any configuration is supported; you can even group displays together. So you could turn a set of six displays into a group of 4 and a group of 2.

It all just seems to work, which is arguably the most impressive part of it all. AMD has partnered up with at least one display manufacturer to sell displays with thinner bezels and without distracting LEDs on the front:

A render of what the Samsung Eyefinity optimized displays will look like

We can expect brackets and support from more monitor makers in the future. Building a wall of displays isn't exactly easy.

137 Comments

7Enigma - Friday, September 11, 2009 - link

I had said this after the last launch of gpu's but I think AMD/NVIDIA are on a very slippery slope right now. With the vast majority of people (gamers included) using 19-22" monitors there are really no games that will make last gen's cards sweat at those resolutions. Most people will start to transition to 24" displays but I do not see a significant number of people going to 30" or above in the next couple of years. This means that for the majority of people (lets face it CAD/3D modeling/etc. is a minority) there is NO GOOD REASON to actually upgrade.

We're no longer "forced" to purchase the next great thing to play the newest game well. Think back to F.E.A.R., Oblivion, Crysis (crap coding, but still); all of those games when they debuted were not able to be played even close to the max settings on >19" monitors.

I haven't seen anything yet coming out this year that will tax my 4870 at my gaming resolution (currently a 19" LCD, looking forward to a 24" upgrade in the next year). That is 2 generations back(depending on what you consider the 4890) from the 4970, and the MAINSTREAM card at that.

We are definitely in the age where the GPU, while still the limiting factor for gaming and modeling, has surpassed what is required for the majority of people.

Don't get me wrong, I love new tech and this card looks potentially incredible, but other then new computer sales and the bleeding edge crowd, who really needs these in the next 12-24 months?

Zingam - Friday, September 11, 2009 - link

Everybody is gonna buy this now and nobody will look at GF380 :D

Vision and Blindeye technoglogies by AMD are a must have ones!!!

Nvidia and Intel are doomed!

Holly - Friday, September 11, 2009 - link

Please correct me if I am wrong but it seems to me that card simply lacks enough RAM to run at that resolution...

Forgeting everything except framebuffer

7680*3200*32bits per pixel = 98,304,000 bytes

now add 4x FSAA... 98,304,000 * 4*4 = 1,572,864,000 bytes (almost 1.5 GB)

we are quite close to the roof already if the 2GB RAM on card informations are correct... and we dropped Z-Buffer, Stencil Buffer, textures, everything...

Zool - Saturday, September 12, 2009 - link

"7680*3200*32bits per pixel = 98,304,000 bytes" u are changing bits to Bytes :P. Thats only 98,304,000/8 Byts.

Holly - Sunday, September 13, 2009 - link

no, I write it in bits so people are not puzzled where I took 4 bytes multiplication.... 7680*3200*4 = 98,304,000 Bytes.

if it was in bits... 7680*3200*32 = 786,432,000 bits
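The framebuffer arithmetic in this exchange can be reproduced with a short Python sketch. It takes the commenter's assumptions at face value: 4 bytes (32 bits) per pixel and a 16x multiplier (4 x 4) for 4x FSAA. Real drivers may allocate anti-aliased buffers differently, so treat this as a check of the arithmetic only; the function name is illustrative.

```python
def framebuffer_bytes(width, height, bytes_per_pixel=4, sample_factor=1):
    """Size of one color buffer in bytes.

    sample_factor is a plain multiplier standing in for anti-aliasing
    overhead; 16 mirrors the "4*4" used in the comment above.
    """
    return width * height * bytes_per_pixel * sample_factor


base = framebuffer_bytes(7680, 3200)
with_fsaa = framebuffer_bytes(7680, 3200, sample_factor=16)

print(f"plain framebuffer: {base:,} bytes ({base * 8:,} bits)")
print(f"with 16x factor:   {with_fsaa:,} bytes ({with_fsaa / 2**30:.2f} GiB)")
```

So 98,304,000 is indeed a byte count (32 bits being 4 bytes), and the bit count is eight times larger.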

Dudler - Friday, September 11, 2009 - link

They use the Trillian(Six?) card. AFAIK no specs has been leaked about this card. The 5870 will come in 1 and 2 gig flavours, maybe the "Six" will come with 4 as an option?

poohbear - Friday, September 11, 2009 - link

why is so much attention given to its support for 6 monitors? that's cool and all, but who on earth is gonna use that feature? seriously, lets write stuff for your target middle class audience, techies that generally dont have $1600 nor the space to spend on 6 displays.

Dudler - Friday, September 11, 2009 - link

A 30" screen is more expensive than 2 24" screens. So when Samsung (And the other WILL follow suit) comes with thin bezel screens, high resolutions will become affordable.

So seriously, this is written for the "middle" class audience, you just have to understand the ramifications of this technology.

And as far as I know, OLED screens can be made without bezels entirely.. I guess the screen manufacturers is going to push that tech faster now, since it actually can make a difference.

jimhsu - Friday, September 11, 2009 - link

I remembered when 17 inch LCDs looked horrible and cost almost 2000$. This is a bargain by comparison, assuming you have the app to take advantage of it.

camylarde - Friday, September 11, 2009 - link

8th - Lynnfield article and everybody drools to death about it.

10th - 58xx blog post and everybody forgets Lynnfield and talks about AMD.

15th - Wonder what Nvidia comes up with ;-)