The SSD Relapse: Understanding and Choosing the Best SSD

by Anand Lal Shimpi on August 30, 2009 12:00 AM EST. Posted in Storage

The Cleaning Lady and Write Amplification

Imagine you’re running a cafeteria. This is the real world, and your cafeteria has a finite number of plates, say 200 for the entire cafeteria. Your cafeteria is open for dinner and over the course of the night you may serve a total of 1000 people. The guests outnumber the plates 5-to-1, but thankfully they don’t all eat at once.

You’ve got a dishwasher who cleans the dirty dishes as the tables are bussed and then puts them in a pile of clean dishes for the servers to use as new diners arrive.

Pretty basic, right? That’s how an SSD works.

Remember the rules: you can read from and write to pages, but you must erase entire blocks at a time. If a block is full of invalid pages (files that have been overwritten at the file system level for example), it must be erased before it can be written to.

All SSDs have a dishwasher of sorts, except instead of cleaning dishes, its job is to clean NAND blocks and prep them for use. The cleaning algorithms don’t really kick in when the drive is new, but put a few days, weeks or months of use on the drive and cleaning will become a regular part of its routine.

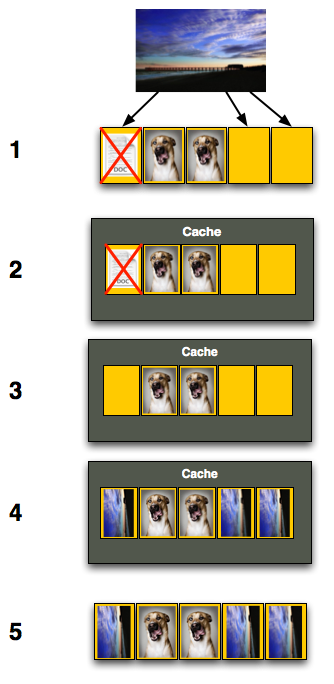

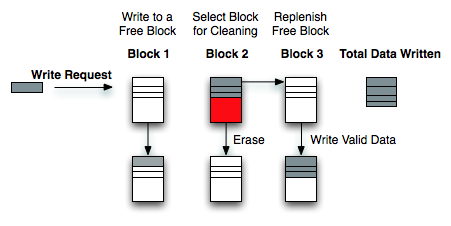

Remember this picture?

It (roughly) describes what happens when you go to write a page of data to a block that’s full of both valid and invalid pages.

In actuality the write happens more like this: a new block is allocated, valid data is copied to the new block (including the data you wish to write), and the old block is sent for cleaning and emerges completely wiped. The old block is then added to the pool of empty blocks. As the controller needs them, blocks are pulled from this pool and used, and old blocks are recycled back into it.
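To make that sequence concrete, here is a minimal Python sketch of a single page write. It is a toy model, not how any real controller is implemented: the `rewrite_block` function, the 4-page block size and the use of `None` for an invalid or empty page are all assumptions made for illustration.

```python
from collections import deque

PAGES_PER_BLOCK = 4  # assumed for illustration; real NAND blocks hold many more pages


def rewrite_block(old_block, page_index, new_data, free_pool):
    """Write one page by copying a block's valid pages into a fresh block.

    Pages are modeled as list slots; None marks a slot that is invalid or empty.
    """
    new_block = free_pool.popleft()      # pull an erased block from the pool
    for i, page in enumerate(old_block):
        if i == page_index:
            new_block[i] = new_data      # the page the host asked to write
        elif page is not None:
            new_block[i] = page          # valid data is carried over
    for i in range(len(old_block)):      # "cleaning": wipe the old block
        old_block[i] = None
    free_pool.append(old_block)          # recycle it into the pool of empty blocks
    return new_block


# Two erased blocks waiting in the pool, and one used block with two valid pages.
free_pool = deque([[None] * PAGES_PER_BLOCK for _ in range(2)])
old_block = ["A", None, "C", None]
new_block = rewrite_block(old_block, 1, "B", free_pool)
print(new_block)       # ['A', 'B', 'C', None]
print(len(free_pool))  # 2
```

The pool ends up the same size it started: one clean block was consumed and the wiped old block took its place.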

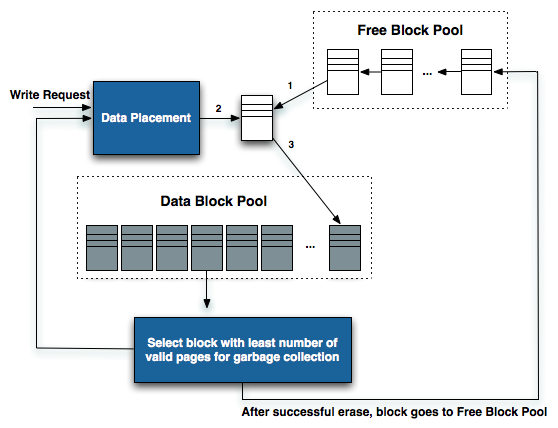

IBM's Zurich Research Laboratory actually made a wonderful diagram of how this works, but it's a bit more complicated than I need it to be for my example here today so I've remade the diagram and simplified it a bit:

The diagram explains what I just outlined above. A write request comes in, and a new block is allocated, used, then added to the list of used blocks. The blocks with the least valid data (or the most invalid data) are scheduled for garbage collection, cleaned and added to the free block pool.
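The selection step can be sketched in a few lines of Python. This is a simplified greedy policy under assumed data structures: each block is a dict with a hypothetical `valid_pages` count, and `garbage_collect` is a name invented for this example. Real controllers may weigh other factors as well.

```python
def garbage_collect(used_blocks, free_blocks):
    """Clean the used block holding the least valid data.

    Each block is a dict with a hypothetical "valid_pages" count.
    Returns the number of valid pages that had to be copied elsewhere.
    """
    victim = min(used_blocks, key=lambda block: block["valid_pages"])
    used_blocks.remove(victim)
    copied = victim["valid_pages"]   # valid data must be rewritten before the erase
    victim["valid_pages"] = 0        # the block is erased
    free_blocks.append(victim)       # and joins the free block pool
    return copied


used = [
    {"id": 0, "valid_pages": 3},
    {"id": 1, "valid_pages": 1},
    {"id": 2, "valid_pages": 4},
]
free = []
copied = garbage_collect(used, free)
print(copied, [block["id"] for block in free])  # 1 [1]
```

Picking the block with the least valid data keeps the copying to a minimum, since every valid page in the victim has to be written again somewhere else.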

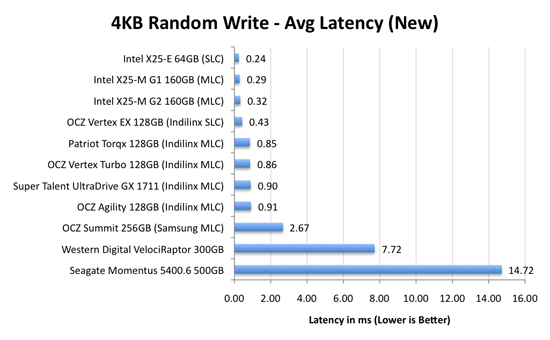

We can actually see this in action if we look at write latencies:

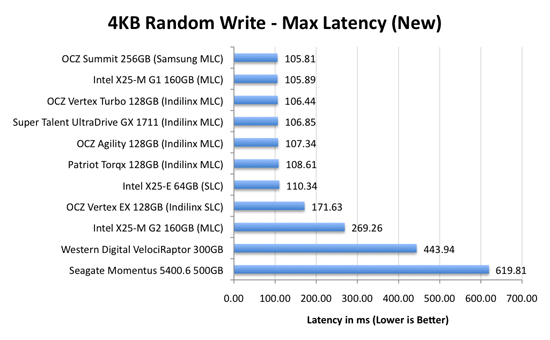

Average write latencies for writing to an SSD, even with random data, are extremely low. But take a look at the max latencies:

While average latencies are very low, the max latencies are around 350x higher. They are still low compared to a mechanical hard disk, but what's going on to make the max latency so high? All of the cleaning and reorganization I've been talking about. It rarely makes a noticeable impact on performance (hence the ultra low average latencies), but this is an example of it happening.

And this is where write amplification comes in.

In the diagram above we see another angle on what happens when a write comes in. A free block is used (when available) for the incoming write. That's not the only write that happens, however; eventually you have to perform some garbage collection so you don't run out of free blocks. The block with the most invalid data is selected for cleaning; its valid data is copied to another block, after which the previous block is erased and added to the free block pool. In the diagram above you'll see the size of our write request on the left, but on the very right you'll see how much data was actually written when you take into account garbage collection. This inequality is called write amplification.

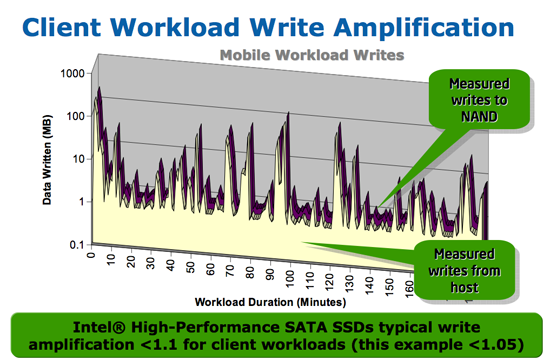

Intel claims very low write amplification on its drives, although over the lifespan of your drive a < 1.1 factor seems highly unlikely.

The write amplification factor is the amount of data the SSD controller has to write in relation to the amount of data that the host controller wants to write. A write amplification factor of 1 is perfect: it means you wanted to write 1MB and the SSD’s controller wrote 1MB. A write amplification factor greater than 1 isn't desirable, but it's an unfortunate fact of life. The higher your write amplification, the quicker your drive will die and the lower its performance will be. Write amplification, bad.
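As a quick worked example, the ratio can be computed directly. The `write_amplification` helper below is a name made up for this sketch, and the 4KB/12KB figures in the second case are hypothetical numbers chosen to show the arithmetic, not measurements from any drive.

```python
def write_amplification(host_bytes, nand_bytes):
    """Ratio of data the controller wrote to data the host asked to write."""
    if host_bytes <= 0:
        raise ValueError("host_bytes must be positive")
    return nand_bytes / host_bytes


KB = 1024
MB = 1024 * KB

# The perfect case from the text: the host wanted 1MB and 1MB was written.
perfect = write_amplification(1 * MB, 1 * MB)
print(perfect)  # 1.0

# Hypothetical case: a 4KB host write also forces 12KB of valid data to be copied.
amplified = write_amplification(4 * KB, 4 * KB + 12 * KB)
print(amplified)  # 4.0
```

In the second case the NAND absorbs four times the data the host sent, so the same host workload uses up the flash's write cycles four times as fast.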

295 Comments

sotoa - Friday, September 4, 2009

Another great article. You making me drool over these SSD's!

I can't wait till Win7 comes to my door so I can finally get an SSD for my laptop.

Hopefully prices will drop some more by then and Trim firmware will be available.

lordmetroid - Thursday, September 3, 2009

I use them both because they are damn good and explanatory suffixes. It is 2009, soon 2010 I think we can at least get the suffixes correct, if someone doesn't know what they mean, wikipedia has answers.

AnnonymousCoward - Saturday, September 5, 2009

As someone who's particular about using SI and being correct, I think it's better to stick to GB for the sake of simplicity and consistency. The tiny inaccuracy is almost always irrelevant, and as long as all storage products advertise in GB, it wouldn't make sense to speak in terms of GiB.

Touche - Thursday, September 3, 2009

Both articles emphasize Intel's performance lead, but, looking at real world tests, the difference between it and Vertex is really small. Not hardly enough to justify the price difference. I feel like the articles are giving an impression that Intel is in a league of its own when in fact it's only marginally faster.

smjohns - Tuesday, September 8, 2009

This is where I struggle. It is all very well quoting lots of stats about all these drives but what I really want to know is if I went for Intel over the OCZ Vertex (non-turbo) where would I really notice the difference in performance on a laptop?

Would it be slower start up / shut down?

Slower application response times?

Speed at opening large zipped files?

Copying / processing large video files?

If the difference is that slim then I guess it is down to just a personal preference....

morrie - Thursday, September 3, 2009

I've made it a habit of securely deleting files by using "shred" like this: shred -fuvz, and accepting the default number of passes, 25. Looks like this security practice is now out, as the "wear" on the drive would be at least 25x faster, bringing the stated life cycles closer to having an impact on drive longevity. So what's the alternative solution for securely deleting a file? Got to "delete" and forget about security? Or "shred" with a lower number of passes, say 7 or 10, and be sure to purchase a non-Intel drive with the ten year warranty and hope that the company is still in business, and in the hard drive business, should you need warranty service in the outer years...

Rasterman - Wednesday, September 16, 2009

watching too much CSI, there is an article somewhere i read by a data repair tech who works in one of the multi-million dollar data recovery labs, basically he said writing over it once is all you should do and even that is overkill 99% of the time. theoretically it is possible to even recover that _sometimes_, but the expense required is so high that unless you are committing a billion dollar fraud or are the secretary to osama bin laden no one will ever try to recover such data. chances are if you are in such circles you can afford a new drive 25x more often. and if you have such information or knowledge wouldn't be far easier and cheaper to simply beat it out of you than trying to recover a deleted drive?

iamezza - Friday, September 4, 2009

1 pass should be sufficient for most purposes. Unless you happen to be working on some _extremely_ sensitive/important data.

derkurt - Thursday, September 3, 2009

I may be wrong on this, but I'd assume that once TRIM is enabled, a file is securely deleted if it has been deleted on the filesystem level. However, it might depend on the firmware when exactly the drive is going to actually delete the flash blocks which are marked as deletable by TRIM. For performance reasons the drive should do that as soon as possible after a TRIM command, but also preferably at a time when there is not much "action" going on - after all, the whole point of TRIM is to change the time of block erasing flash cells to a point where the drive is idle.

morrie - Thursday, September 3, 2009

That's on a Linux system btw

As to aligning drives...how about an update to the article on what needs to be done/ensured, if anything, for using the drives with a Linux OS?