The SSD Relapse: Understanding and Choosing the Best SSD

by Anand Lal Shimpi on August 30, 2009 12:00 AM EST. Posted in: Storage

Intel's X25-M 34nm vs 50nm: Not as Straightforward As You'd Think

It took me a while to understand exactly what Intel did with its latest drive, mostly because there are no docs publicly available on either the flash used in the drives or on the controller itself. Intel is always purposefully vague about important details, leaving everything up to clever phrasing of questions and guesswork with tests and numbers before you truly uncover what's going on. But after weeks with the drive, I think I've got it.

| | X25-M Gen 1 | X25-M Gen 2 |
| --- | --- | --- |
| Flash Manufacturing Process | 50nm | 34nm |
| Flash Read Latency | 85 µs | 65 µs |
| Flash Write Latency | 115 µs | 85 µs |
| Random 4KB Reads | Up to 35K IOPS | Up to 35K IOPS |
| Random 4KB Writes | Up to 3.3K IOPS | Up to 6.6K IOPS (80GB), up to 8.6K IOPS (160GB) |
| Sequential Read | Up to 250MB/s | Up to 250MB/s |
| Sequential Write | Up to 70MB/s | Up to 70MB/s |
| Halogen-free | No | Yes |
| Introductory Price | $345 (80GB), $600 - $700 (160GB) | $225 (80GB), $440 (160GB) |
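To put the introductory prices in the table on a common footing, here is a minimal Python sketch that converts them to cost per gigabyte. The prices and capacities come straight from the table; the names `PRICES` and `price_per_gb` are hypothetical helpers introduced only for this illustration, and the G1 160GB drive is listed at both ends of its quoted range.

```python
# Introductory prices (USD) and capacities (GB) as listed in the table.
# The G1 160GB price is quoted as a range, so both ends are included.
PRICES = {
    "X25-M G1 80GB": (345, 80),
    "X25-M G1 160GB (low end)": (600, 160),
    "X25-M G1 160GB (high end)": (700, 160),
    "X25-M G2 80GB": (225, 80),
    "X25-M G2 160GB": (440, 160),
}


def price_per_gb(price_usd: float, capacity_gb: float) -> float:
    """Return the cost in dollars per gigabyte of advertised capacity."""
    return price_usd / capacity_gb


for drive, (price, capacity) in PRICES.items():
    print(f"{drive}: ${price_per_gb(price, capacity):.2f}/GB")
```

This uses advertised capacity only, so it ignores spare area and formatted capacity.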

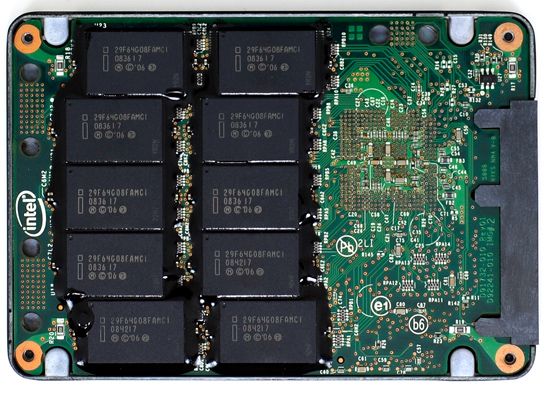

The old X25-M G1

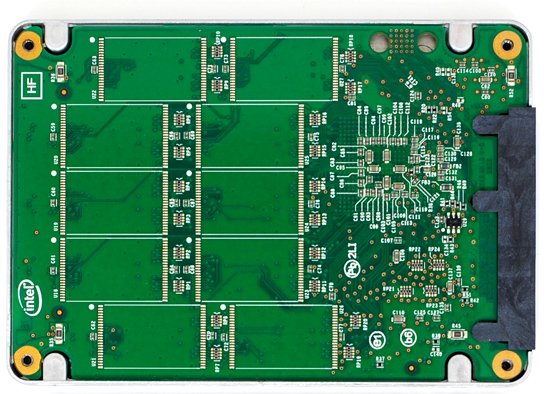

The new X25-M G2

Moving to 34nm flash let Intel drive the price of the X25-M to ultra competitive levels. It also gave Intel the opportunity to tune controller performance a bit. The architecture of the controller hasn't changed, but it is technically a different piece of silicon (that happens to be Halogen-free). What has changed is the firmware itself.

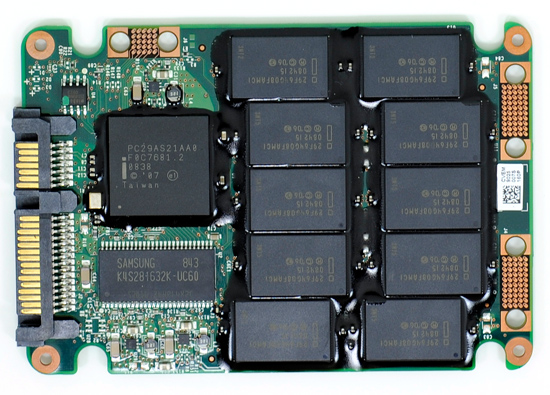

The old controller

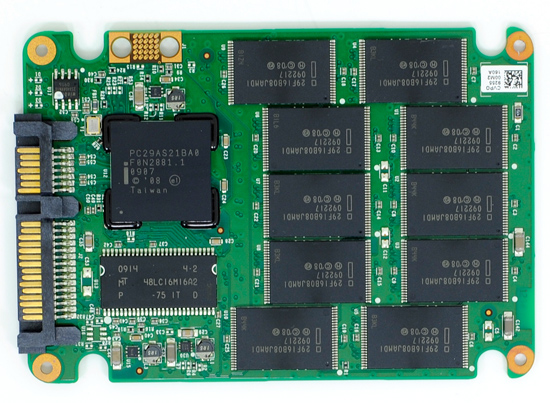

The new controller

The new X25-M G2 has twice as much DRAM on-board as the previous drive. The old 160GB drive used a 16MB Samsung 166MHz SDRAM (CAS3):

Goodbye Samsung

The new 160GB G2 drive uses a 32MB Micron 133MHz SDRAM (CAS3):

Hello Micron

More memory means that the drive can track more data and do a better job of keeping itself defragmented and well organized. We see this reflected in the "used" 4KB random write performance, which is around 50% higher than the previous drive.

Intel is now using 16GB flash packages instead of 8GB packages from the original drive. Once 34nm production really ramps up, Intel could outfit the back of the PCB with 10 more chips and deliver a 320GB drive. I wouldn't expect that anytime soon though.

The old X25-M G1

The new X25-M G2

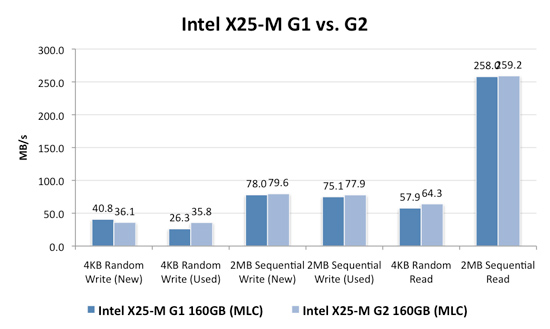

Low level performance of the new drive ranges from no improvement to a significant one, depending on the test:

Note that these results are a bit different from my initial preview. I'm using the latest build of Iometer this time around, rather than the latest version posted on iometer.org. It does a better job filling the drives and produces more reliable test data in general.

The trend however is clear: the new G2 drive isn't that much faster. In fact, the G2 is slower than the G1 in my 4KB random write test when the drive is brand new. The benefit however is that the G2 doesn't drop in performance when used...at all. Yep, you read that right. In the most strenuous case for any SSD, the new G2 doesn't even break a sweat. That's...just...awesome.

The rest of the numbers are pretty much even, with the exception of 4KB random reads where the G2 is roughly 11% faster.

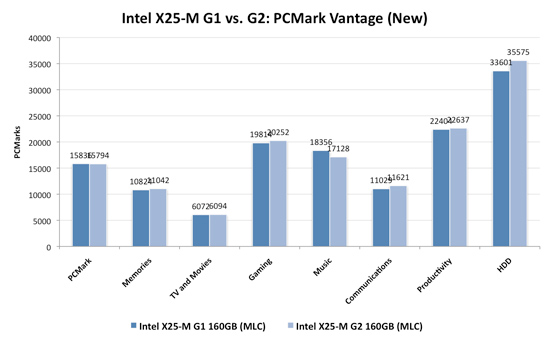

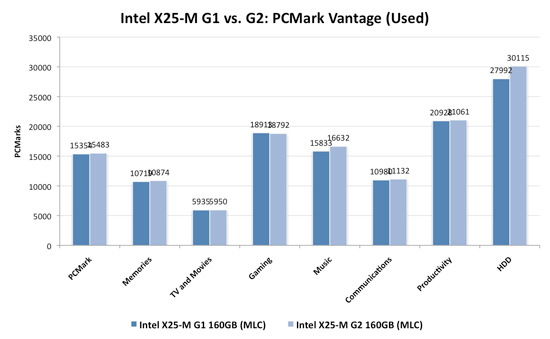

I continue to turn to PCMark Vantage as the closest indication of real world performance I can get for these SSDs, and it echoes my earlier sentiments:

When brand new, the G1 and the G2 are very close in performance. There are some tests where the G2 is faster, others where the G1 is faster. The HDD suite shows the true potential of the G2 and even there we're only looking at a 5.6% performance gain.

It's in the used state that we see the G2 pull ahead a bit more, but the gap still isn't drastic. The advantage in the HDD suite is around 7.5%, but the rest of the tests are very close. Obviously the major draw to the 34nm drives is their price, but that can't be all there is to it...can it?

The new drives come with TRIM support, albeit not out of the box. Sometime in Q4 of this year, Intel will offer a downloadable firmware that enables TRIM on only the 34nm drives. TRIM on these drives will perform much like TRIM does on the OCZ drives using Indilinx's manual TRIM tool. In other words, it restores performance to almost new.

Because it can more or less rely on being able to TRIM invalid data, the G2 firmware is noticeably different from what's used in the G1. In fact, if we slightly modify the way I tested in the Anthology I can actually get the G1 to outperform the G2 even in PCMark Vantage. In the Anthology, to test the used state of a drive I would first fill the drive then restore my test image onto it. The restore process helped to fragment the drive and make sure the spare-area got some use as well. If we take the same approach but instead of imaging the drive we perform a clean Windows install on it, we end up with a much more fragmented state; it's not a situation you should ever encounter since a fresh install of Windows should be performed on a clean, secure erased drive, but it does give me an excellent way to show exactly what I'm talking about with the G2:

| | PCMark Vantage (New) | PCMark Vantage HDD (New) | PCMark Vantage (Fragmented + Used) | PCMark Vantage HDD (Fragmented + Used) |
| --- | --- | --- | --- | --- |
| Intel X25-M G1 | 15496 | 32365 | 14921 | 26271 |
| Intel X25-M G2 | 15925 | 33166 | 14622 | 24567 |
| G2 Advantage | 2.8% | 2.5% | -2.0% | -6.5% |
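The "G2 Advantage" row is simply the relative difference between the two scores in each column. A minimal Python sketch of that calculation follows, using the scores from the table; the names `SCORES` and `advantage_pct` are hypothetical and exist only for this illustration.

```python
# PCMark Vantage scores from the table, stored as (G1 score, G2 score).
SCORES = {
    "PCMark Vantage (New)": (15496, 15925),
    "PCMark Vantage HDD (New)": (32365, 33166),
    "PCMark Vantage (Fragmented + Used)": (14921, 14622),
    "PCMark Vantage HDD (Fragmented + Used)": (26271, 24567),
}


def advantage_pct(g2_score: float, g1_score: float) -> float:
    """Return how much higher (positive) or lower (negative) the G2 scores, in percent."""
    return (g2_score / g1_score - 1.0) * 100.0


for test, (g1, g2) in SCORES.items():
    print(f"{test}: {advantage_pct(g2, g1):+.1f}%")
```

A negative result means the G1 scored higher in that test, which is the case in both fragmented runs.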

Something definitely changed with the way the G2 handles fragmentation; it doesn't deal with it as elegantly as the G1 did. I don't believe this is a step backwards though. Intel is clearly counting on TRIM to keep the drive from ever getting to the point that the G1 could get to. The tradeoff is most definitely a performance one, and it is probably what gives the G2 its ability to maintain very high random write speeds even while used. I should mention that even without TRIM it's unlikely that the G2 will get to this performance state where it's actually slower than the G1; the test just helps to highlight that there are significant differences between the drives.

Overall the G2 is the better drive, but it's support for TRIM that will ultimately ensure that. The G1 will degrade in performance over time; the G2 will only lose performance as you fill it with real data. I wonder what else Intel has decided to add to the new firmware...

I hate to say it but this is another example of Intel only delivering what it needs to in order to succeed. There's nothing that keeps the G1 from also having TRIM other than Intel being unwilling to invest the development time to make it happen. I'd be willing to assume that Intel already has TRIM working on the G1 internally and it simply chose not to validate the firmware for public release (an admittedly long process). But from Intel's perspective, why bother?

Even the G1, in its used state, is faster than the fastest Indilinx drive. In 4KB random writes the G1 is even faster than an SLC Indilinx drive. Intel doesn't need to touch the G1; the only thing faster than it is the G2. Still, I do wish that Intel would be generous to its loyal customers who shelled out $600 for the first X25-M. It just seems like the right thing to do. Sigh.

295 Comments


Anand Lal Shimpi - Monday, August 31, 2009 - link

wow I misspelled my own name :) Time to sleep for real this time :)

Take care,

Anand

IntelUser2000 - Monday, August 31, 2009 - link

Looking at pure max TDP and idle power numbers and concluding the power consumption based on those figures are wrong.

Look here: http://www.anandtech.com/cpuchipsets...px?i=3403&a...

Modern drives quickly reach idle even between times where the user don't even know and at "load". Faster drives will reach lower average power because it'll work faster to get to idle. This is why initial battery life tests showed X25-M with much higher active/idle power figures got better battery life than Samsungs with less active/idle power.

Max power is important, but unless you are running that app 24/7 its not real at all, especially the max power benchmarks are designed to reach close to TDP as possible.

Anand Lal Shimpi - Monday, August 31, 2009 - link

I agree, it's more than just max power consumption. I tried to point that out with the last paragraph on the page:

"As I alluded to before, the much higher performance of these drives than a traditional hard drive means that they spend much more time at an idle power state. The Seagate Momentus 5400.6 has roughly the same power characteristics of these two drives, but they outperform the Seagate by a factor of at least 16x. In other words, a good SSD delivers an order of magnitude better performance per watt than even a very efficient hard drive."

I didn't have time to run through some notebook tests to look at impact on battery life but it's something I plan to do in the future.

Take care,

Anand

IntelUser2000 - Monday, August 31, 2009 - link

Thanks, people pay too much attention to just the max TDP and idle power alone. Properly done, no real apps should ever reach max TDP for 100% of the duration its running at.

cristis - Monday, August 31, 2009 - link

page 6: "So we’re at approximately 36 days before I exhaust one out of my ~10,000 write cycles. Multiply that out and it would take 36,000 days" --- wait, isn't that 360,000 days = 986 years?

Anand Lal Shimpi - Monday, August 31, 2009 - link

woops, you're right :) Either way your flash will give out in about 10 years and perfectly wear leveled drives with no write amplification aren't possible regardless.

Take care,

Anand
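The correction in this exchange is easy to verify. Below is a minimal Python sketch using only the two figures quoted from page 6 (about 36 days per full write cycle and roughly 10,000 rated cycles). It assumes the idealized case of perfect wear leveling and no write amplification, which the reply above notes is not achievable in practice; the function name `days_to_exhaust` is hypothetical.

```python
# Figures quoted from page 6 of the article in the comment above.
DAYS_PER_WRITE_CYCLE = 36      # days of writes needed to use up one cycle
RATED_WRITE_CYCLES = 10_000    # approximate rated write cycles per cell


def days_to_exhaust(days_per_cycle: int, cycles: int) -> int:
    """Idealized lifetime in days: perfect wear leveling, no write amplification."""
    return days_per_cycle * cycles


total_days = days_to_exhaust(DAYS_PER_WRITE_CYCLE, RATED_WRITE_CYCLES)
total_years = total_days / 365  # leap years ignored for simplicity

print(f"{total_days:,} days, about {total_years:.0f} years")
```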

cdillon - Monday, August 31, 2009 - link

I gather that you're saying it'll give out after 10 years because a flash cell will lose its stored charge after about 10 years, not because the write-life will be surpassed after 10 years, which doesn't seem to be the case. The 10-year charge life doesn't mean they become useless after 10 years, just that you need to refresh the data before the charge is lost. This makes flash less useful for data archival purposes, but for regular use, who doesn't re-format their system (and thus re-write 100% of the data) at least once every 10 years? :-)

Zheos - Monday, August 31, 2009 - link

"This makes flash less useful for data archival purposes, but for regular use, who doesn't re-format their system (and thus re-write 100% of the data) at least once every 10 years? :-)"

I would like an input on that too, cuz thats a bit confusing.

GourdFreeMan - Tuesday, September 1, 2009 - link

Thermal energy (i.e. heat) allows the electrons trapped in the floating gate to overcome the potential well and escape, causing zeros (represented by a larger concentration of electrons in the floating gate) to eventually become ones (represented by a smaller concentration of electrons in the floating gate). Most SLC flash is rated at about 10 years of data retention at either 20C (68F) or 25C (77F). What Anand doesn't mention is that as a rule of thumb for every 9 degrees C (~16F) that the temperature is raised above that point, data retention lifespan is halved. (This rule of thumb only holds for human habitable temperatures... the exact relation is governed by the Arrhenius equation.)

Wear leveling and error correction codes can be employed to mitigate this problem, which only gets worse as you try to store more bits per cell or use a smaller lithography process without changing materials or design.
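The rule of thumb in the comment above can be sketched in a few lines of Python. This is only an illustration of the stated halving rule, not the Arrhenius relation itself. The 10-year rating, the 25C reference point and the 9 degree C halving step come from the comment; the function name `retention_years` and the choice to clamp temperatures below the reference to the rated figure are assumptions made for this sketch.

```python
def retention_years(
    temp_c: float,
    rated_years: float = 10.0,    # rated retention quoted in the comment
    rated_temp_c: float = 25.0,   # reference temperature (the comment also cites 20C)
    halving_step_c: float = 9.0,  # retention halves for every 9 C above the reference
) -> float:
    """Estimate data retention using the halving rule of thumb.

    Assumption: at or below the reference temperature the rated figure is
    returned unchanged, since the rule is only stated for higher temperatures.
    """
    excess_c = max(0.0, temp_c - rated_temp_c)
    return rated_years * 0.5 ** (excess_c / halving_step_c)


for temp in (25, 34, 43):
    print(f"{temp}C: about {retention_years(temp):.1f} years")
```

Under these assumptions, each 9 degree C step above 25C halves the estimate, so the figures drop quickly for a drive that spends its life in a warm chassis.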

Zheos - Tuesday, September 1, 2009 - link

Thank you GourdFreeMan for the additional input,

But, if we format like every year or so , doesnt the countdown on data retention restart from 0 ? or after ~10 year (seems too be less if like you said temperature affect it) the SSD will not only fail at times but become unusable ? Or if we come to that point a format/reinstall would resolve the problem ?

I dont care about losing data stored after 10 years, what i do care is if the drive become ASSURELY unsusable after 10 year maximum. For drives that comes at a premium price, i don't like this if its the case.