Overclocking Extravaganza: Radeon HD 4890 To The Max

by Derek Wilson on April 29, 2009 12:01 AM EST - Posted in GPUs

Exploring Core Overclocking

Adjusting core clock speed has a much larger impact on performance than adjusting memory speed alone. At stock clock speeds the 4890 is much more compute bound than memory bound, which explains the difference. While the 900MHz core clock variant will not offer huge performance gains over the stock card, those gains will be fairly proportional to the clock speed increase.

Although a 50MHz bump offers a maximum potential average performance improvement of only about 6%, we often see realized gains of 3% to 5% on 900MHz core clocked 4890 hardware. This is a much better return than we saw even with a 23%+ memory overclock. Even so, a 5% real-world improvement isn't the holy grail, so we decided to test core clock frequencies from 850MHz to 1000MHz in 50MHz increments. For these tests, we fixed the memory clock speed at 975MHz.
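The "maximum potential" figures used on this page are simply the percentage increase in core clock over the 850MHz stock speed, on the assumption that performance could at best scale linearly with core clock. A minimal sketch of that arithmetic (the function name is ours, purely for illustration):

```python
# Stock Radeon HD 4890 core clock in MHz, the baseline for every comparison here.
STOCK_CORE_MHZ = 850.0


def theoretical_gain_pct(core_mhz: float, stock_mhz: float = STOCK_CORE_MHZ) -> float:
    """Upper bound on the performance gain, assuming performance scales
    at most linearly with core clock (memory clock held fixed)."""
    return (core_mhz / stock_mhz - 1.0) * 100.0


if __name__ == "__main__":
    # The core clocks tested in this article, in 50MHz steps.
    for core in range(850, 1001, 50):
        print(f"{core} MHz: up to {theoretical_gain_pct(core):.1f}%")
```

Under this assumption the 50MHz steps work out to ceilings of about 5.9%, 11.8%, and 17.6%, which is where the "about 6%" and "17.6%" figures come from.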

Let's jump right in and talk about 1000MHz. Here's a look at what we get from this boost in clock speed.

1680x1050 1920x1200 2560x1600

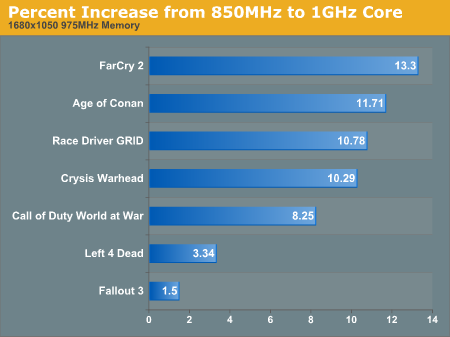

In non-CPU-limited situations, the roughly 10% to 13% performance improvement out of a potential 17.6% is nothing to sneeze at. Here's the breakdown of the percent increase in performance at each clock speed we tested, across three resolutions, in all the games we tested.
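One way to read these numbers is as a scaling efficiency: the realized gain divided by the theoretical ceiling for that clock. The sketch below is only an illustration using the 10% and 13% figures quoted above; the helper name is hypothetical.

```python
def scaling_efficiency(realized_pct: float, core_mhz: float, stock_mhz: float = 850.0) -> float:
    """Fraction of the core clock increase that shows up as a performance gain.

    realized_pct: measured performance gain in percent.
    core_mhz:     overclocked core speed; stock_mhz is the 4890's 850MHz default.
    """
    theoretical_pct = (core_mhz / stock_mhz - 1.0) * 100.0
    if theoretical_pct <= 0:
        raise ValueError("core clock must exceed the stock clock")
    return realized_pct / theoretical_pct


if __name__ == "__main__":
    # The 10% to 13% range observed at 1000MHz in non-CPU-limited tests.
    for realized in (10.0, 13.0):
        eff = scaling_efficiency(realized, 1000)
        print(f"{realized:.0f}% at 1000MHz -> {eff:.0%} of the theoretical gain")
```

By this measure, the 1000MHz results capture roughly 57% to 74% of the clock increase.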

At each speed bump we see a fairly proportional performance improvement, though we get closer to the theoretical maximum at the more modest clock speed increases than at the high end. This could mean that our core clock speed increases are creating memory bottlenecks. Even without any boost from an accompanying memory overclock, the 4890 is clearly capable of some impressive clock speeds and performance. As much as we want to be thorough, we can't test all of these core clock speeds with multiple memory clock speeds, as the testing would quickly balloon. So we compromised a bit, but the results on the next page speak for themselves.
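To see why a memory bottleneck would make efficiency drop at higher core clocks, consider a simple Amdahl-style toy model. It is our own simplification, not something we measured: it assumes a fixed share of each frame's time is bound by the 975MHz memory clock and does not speed up when the core is overclocked. The `memory_bound_fraction` value below is hypothetical.

```python
STOCK_CORE_MHZ = 850.0


def modeled_gain_pct(core_mhz: float, memory_bound_fraction: float,
                     stock_mhz: float = STOCK_CORE_MHZ) -> float:
    """Toy model: only the core-bound share of frame time shrinks with core clock."""
    r = core_mhz / stock_mhz
    m = memory_bound_fraction
    new_frame_time = (1.0 - m) / r + m  # relative to a stock frame time of 1.0
    return (1.0 / new_frame_time - 1.0) * 100.0


def modeled_efficiency(core_mhz: float, memory_bound_fraction: float,
                       stock_mhz: float = STOCK_CORE_MHZ) -> float:
    """Modeled gain divided by the linear-scaling ceiling for the same clock."""
    theoretical_pct = (core_mhz / stock_mhz - 1.0) * 100.0
    return modeled_gain_pct(core_mhz, memory_bound_fraction, stock_mhz) / theoretical_pct


if __name__ == "__main__":
    m = 0.3  # hypothetical memory-bound share; not measured in this article
    for core in (900, 950, 1000):
        print(f"{core} MHz: gain {modeled_gain_pct(core, m):.1f}%, "
              f"efficiency {modeled_efficiency(core, m):.0%}")
```

Algebraically the efficiency reduces to (1 - m) / ((1 - m) + m * r), where r is the core clock ratio. It falls as r grows for any fixed m > 0, which matches the pattern above. Real games are messier, since CPU limits and varying workloads also play a part.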

We absolutely must caution our readers once again that these are not off-the-shelf retail parts. These parts were sent directly to us by manufacturers and may well have higher overclocking potential than retail parts. From what we are hearing in the field, though, many people have been able to achieve a decent boost in clock speed with the 4890.

61 Comments


walp - Thursday, April 30, 2009

I have a gallery showing how to fit the Accelero S1 to the 4890 (in Swedish, though):

http://www.sweclockers.com/album/?id=3916

Ah, here's the translated version: =)

http://translate.google.se/translate?js=n&prev...

You can change the voltage on every 4890 card without BIOS modding, since they are all the same piece of hardware:

http://vr-zone.com/articles/increasing-voltages--e...

It's very fortunate that it's so easy, 'cause ASUS SmartDoctor sucks ass since it doesn't work on my computer anymore.

(CCCP:Crappy-Christmas-Chinese-Programmers...no pun intended ;)

\walp

kmmatney - Thursday, April 30, 2009

Cool - thanks for the guide. I ordered the Accelero S1 yesterday. Nice how you got heatsinks on all the power circuitry.

balancedthinking - Wednesday, April 29, 2009

Nice, Derek is still able to write decent articles. Bad for the somewhat stripped-down 4770 review, but good to see it does not stay that way.

DerekWilson - Wednesday, April 29, 2009

Thanks :-)

I suppose I just thought the 4770 article was straightforward enough to be stripped down: I said the 4770 was the part to buy, and the numbers backed that up well enough that I didn't need to dwell on it.

But I do appreciate all the feedback I've been getting, and I'll certainly keep it in mind. Being more in depth, and more enthusiastic when something is a clear leader, is on my agenda for similar situations in the future.

JanO - Wednesday, April 29, 2009

Hello there,

I really like the fact that you only present us with one graph at a time and let us choose the resolution we want to see in this article...

Now if only we could specify once which resolution matters to us and have AnandTech remember it, so it's presented by default every time we come back. Now wouldn't that be great?

Thanks & keep up that great work!

greylica - Wednesday, April 29, 2009

Sorry, AMD, but even with a super powerful card in DirectX, their OpenGL implementation is still bad, and Nvidia rocks in professional applications running on Linux. We saw the truth when we put a Radeon 4870 up against a GTX 280. The GTX 280 rocks in redraw mode, in interactive rendering, and in OpenGL composition. Nvidia is a clear winner in OpenGL apps. Maybe it's because of the extra transistor count, which allows the hardware to outperform any Radeon in OpenGL, whereas AMD still has driver problems (a bunch of them) in both Linux and Mac. But Windows gamers are the market niche AMD cards are targeting...

RagingDragon - Wednesday, May 13, 2009

WTF? Windows gamers aren't a niche market; they're the majority market for high-end graphics cards.

Professional OpenGL users are buying Quadro and FireGL cards, not GeForces and Radeons. Hobbyists and students using professional GL applications on non-certified GeForce and Radeon cards are a tiny niche, and it's doubtful anyone targets that market. Nvidia's advantage in that niche is probably an extension of their advantage in professional GL cards (Quadro vs. FireGL), essentially a side effect of Nvidia putting more money and effort into their professional GL cards than AMD does.

ltcommanderdata - Wednesday, April 29, 2009

I don't think nVidia having a better OpenGL implementation is necessarily true anymore, at least on Mac.

http://www.barefeats.com/harper22.html

For example, in Call of Duty 4, the 8800GT performs significantly worse in OS X than in Windows. And you can tell the problem is specific to nVidia's OS X drivers rather than the Mac port since ATI's HD3870 performs similarly whether in OS X or Windows.

http://www.barefeats.com/harper21.html

Another example is Core Image GPU acceleration. The HD3870 is still noticeably faster than the 8800GT even with the latest 10.5.6 drivers, although the 8800GT is theoretically more powerful. The situation was even worse when the 8800GT was first introduced with the 10.5.4 drivers, when even the HD2600XT outperformed the 8800GT in Core Image apps.

Supposedly, nVidia has been doing a lot of work on new Mac drivers coming in 10.5.7 now that nVidia GPUs are standard on the iMac and Mac Pro too. So perhaps the situation will change. But right now, nVidia's OpenGL drivers on OS X aren't all they are made out to be.

CrystalBay - Wednesday, April 29, 2009

I'd like to see some benches of highly clocked 4770s XFired.

entrecote - Wednesday, April 29, 2009

I can't read graphs where multiple GPU solutions are included. Since this article mostly talks about single GPU solutions, I actually processed the images and still remember what I just read.

I have an X58/Core i7 system, and I looked at the CrossFire/SLI support as a negative feature (cost without benefit).