Opening the Kimono: Intel Details Nehalem and Tempts with Larrabee

by Anand Lal Shimpi on March 17, 2008 5:00 PM EST - Posted in CPUs

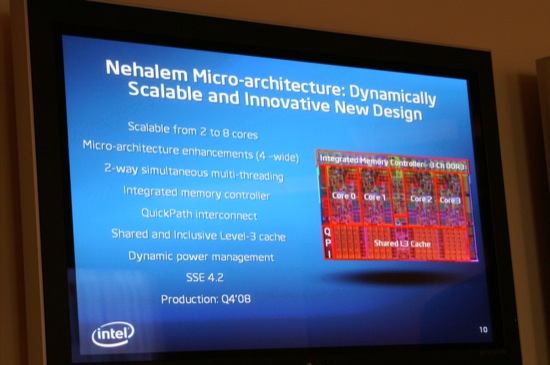

Nehalem supports QPI and features an integrated memory controller, as well as a large, shared, inclusive L3 cache.

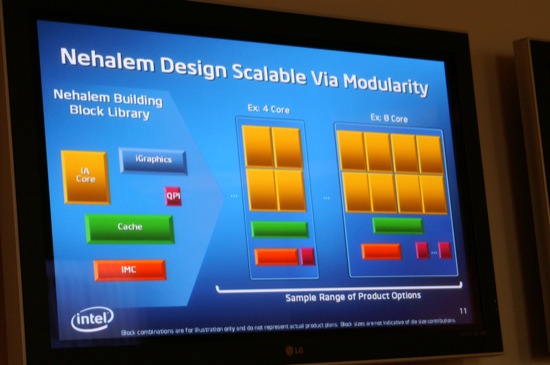

Nehalem is a modular architecture, allowing Intel to ship configurations with 2 to 8 cores, some with integrated graphics, and with various memory controller configurations.

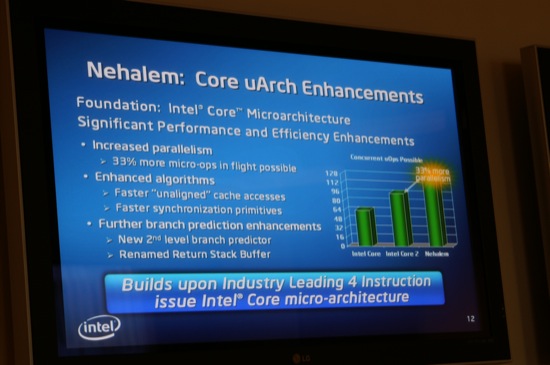

Nehalem allows for 33% more micro-ops in flight than Penryn (128 micro-ops vs. 96). This increase was achieved by simply enlarging the re-order window and other such buffers throughout the pipeline.

With more micro-ops in flight, Nehalem can extract greater instruction level parallelism (ILP). The larger window also makes room for the extra micro-ops that come from each core now handling two threads at once.

Despite the ability to keep more micro-ops in flight, there have been no significant changes to the decoder or front end of Nehalem. Nehalem is still fundamentally the same 4-issue design we saw introduced with the first Core 2 microprocessors. The next time we'll see a re-evaluation of this front end will most likely be 2 years from now with the 32nm "tock" processor, codenamed Sandy Bridge.

Nehalem also improved unaligned cache access performance. In SSE there are two types of load/store instructions: one for data aligned to a 16-byte boundary, and one for unaligned data. In current Core 2 based processors, the aligned instructions could execute faster than the unaligned instructions. Every now and then a compiler would produce code that used an unaligned instruction on data that was in fact aligned, resulting in a performance penalty. Nehalem fixes this case (through some circuit tricks): unaligned instructions running on aligned data are now fast.
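To make the aligned/unaligned distinction concrete, here is a minimal sketch in Python. The helper names `is_aligned` and `pick_sse_load` are hypothetical and exist only for illustration. MOVAPS and MOVUPS are the aligned and unaligned packed single-precision SSE moves. In real code the compiler picks the instruction at build time, which is exactly why it sometimes ends up using the unaligned form on data that happens to be aligned.

```python
def is_aligned(address: int, boundary: int = 16) -> bool:
    """Return True if address is a multiple of boundary (boundary must be a power of two)."""
    return address & (boundary - 1) == 0


def pick_sse_load(address: int) -> str:
    """Hypothetical helper: name the SSE move that matches the address alignment.

    MOVAPS requires a 16-byte aligned address; MOVUPS accepts any address.
    """
    return "movaps" if is_aligned(address) else "movups"


if __name__ == "__main__":
    for addr in (0x1000, 0x1004, 0x1010):
        print(hex(addr), is_aligned(addr), pick_sse_load(addr))
```

The case Nehalem speeds up is the one where the code uses the unaligned form even though `is_aligned` would have been true for the address.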

In many applications (e.g. video encoding) you're walking through a stream of data a few bytes at a time. If you happen to cross a cache line boundary (64-byte lines) and an instruction needs data from both sides of that boundary, you encounter a latency penalty for the unaligned cache access. Nehalem significantly reduces this latency penalty, so algorithms for things like motion estimation will be sped up significantly (hence the improvement in video encode performance).
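As a rough illustration of how often this happens, the sketch below counts how many loads straddle a 64-byte line when a 16-byte window slides through a buffer one byte at a time, roughly the access pattern of a motion estimation search. The function names and the 16-byte load size are our assumptions, not anything Intel described.

```python
CACHE_LINE = 64  # line size in bytes, as stated in the article


def crosses_line(address: int, size: int, line: int = CACHE_LINE) -> bool:
    """Return True if the bytes [address, address + size) touch two cache lines."""
    first_line = address // line
    last_line = (address + size - 1) // line
    return first_line != last_line


def count_split_loads(start: int, count: int, stride: int = 1, size: int = 16) -> int:
    """Count how many of `count` loads, spaced `stride` bytes apart, cross a line."""
    return sum(crosses_line(start + i * stride, size) for i in range(count))


if __name__ == "__main__":
    # A 16-byte window stepped one byte at a time over one full cache line.
    print(count_split_loads(start=0, count=64))
```

With a 16-byte window and a one-byte step, 15 of every 64 loads cross a line boundary, so nearly a quarter of the accesses in such a loop pay the penalty that Nehalem reduces.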

Nehalem also introduces a second level branch predictor per core. This new branch predictor augments the normal one that sits in the processor pipeline and aids it much like an L2 cache works with an L1 cache. The second level predictor has a much larger set of history data it can use to predict branches, but since its branch history table is much larger, this predictor is much slower. The first level predictor works as it always has, predicting branches as best as it can, but simultaneously the new second level predictor will also be evaluating branches. There may be cases where the first level predictor makes a prediction based on the type of branch but doesn't really have the historical data to make a highly accurate prediction, while the second level predictor does. Since the second level predictor has a larger history window to predict from, it has higher accuracy and can, on the fly, help catch mispredicts and correct them before a significant penalty is incurred.
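Intel has not disclosed how the two predictors are built, so the following is only a toy model of the general idea, with every structure in it an assumption on our part. It uses a small table of 2-bit counters with no history as the first level and a larger table indexed by branch address XOR recent outcomes as the second level, with the second level overriding the first whenever its counter is saturated. The model ignores timing entirely. It only shows why extra history helps on a branch that simply alternates between taken and not taken.

```python
class CounterTable:
    """Table of 2-bit saturating counters: 0-1 predict not taken, 2-3 predict taken."""

    def __init__(self, entries: int):
        self.counters = [1] * entries  # start every entry at "weakly not taken"

    def predict(self, index: int) -> bool:
        return self.counters[index % len(self.counters)] >= 2

    def confident(self, index: int) -> bool:
        # A saturated counter (0 or 3) means the entry has seen consistent outcomes.
        return self.counters[index % len(self.counters)] in (0, 3)

    def update(self, index: int, taken: bool) -> None:
        i = index % len(self.counters)
        step = 1 if taken else -1
        self.counters[i] = min(3, max(0, self.counters[i] + step))


def count_mispredicts(outcomes, pc=0x400, use_second_level=True, history_bits=4):
    """Replay branch outcomes for one branch at `pc` and count wrong guesses."""
    first = CounterTable(64)      # small, indexed by branch address only
    second = CounterTable(1024)   # larger, indexed by address XOR recent history
    history = 0
    misses = 0
    for taken in outcomes:
        second_index = pc ^ history
        guess = first.predict(pc)
        if use_second_level and second.confident(second_index):
            guess = second.predict(second_index)  # second level overrides
        misses += guess != taken
        first.update(pc, taken)
        second.update(second_index, taken)
        history = ((history << 1) | int(taken)) & ((1 << history_bits) - 1)
    return misses


if __name__ == "__main__":
    alternating = [i % 2 == 0 for i in range(200)]  # taken, not taken, taken, ...
    print("first level only:", count_mispredicts(alternating, use_second_level=False))
    print("with second level:", count_mispredicts(alternating, use_second_level=True))
```

In this model the history-free first level never locks onto the alternating pattern, while the combined predictor stops mispredicting after a short warm-up once the second level's counters saturate.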

The renamed return stack buffer is also a very important enhancement to Nehalem. Mispredicts in the pipeline can result in incorrect data being populated into Penryn's return stack (a data structure that keeps track of where in memory the CPU should resume executing after returning from a function). A return stack with renaming support prevents corruption in the stack, so as long as the calls/returns are properly paired you'll always get the right data out of Nehalem's stack even in the event of a mispredict.
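The corruption problem is easy to reproduce in a toy model. The sketch below is not how Nehalem implements renaming, which Intel has not detailed. It assumes a simple snapshot taken at a predicted branch and restored when the mispredict is detected, purely to show the difference between a return stack that recovers and one that doesn't. The class, function, and address values are all made up for illustration.

```python
class ReturnStack:
    """Toy return stack: calls push a return address, returns pop one."""

    def __init__(self):
        self.entries = []

    def call(self, return_address: int) -> None:
        self.entries.append(return_address)

    def ret(self):
        return self.entries.pop() if self.entries else None

    def checkpoint(self):
        return list(self.entries)  # copy of the current state

    def restore(self, snapshot) -> None:
        self.entries = list(snapshot)


def simulate_mispredict(recover: bool):
    """Run two real calls, a wrong-path return and call, then two real returns."""
    rsb = ReturnStack()
    rsb.call(0x100)  # main calls f, which should return to 0x100
    rsb.call(0x200)  # f calls g, which should return to 0x200

    snapshot = rsb.checkpoint()  # state at the branch that will be mispredicted

    # Wrong-path (speculative) work that should never have happened:
    rsb.ret()        # pops the entry for 0x200
    rsb.call(0xBAD)  # pushes a bogus return address

    if recover:
        rsb.restore(snapshot)  # mispredict detected, speculative changes undone

    return [rsb.ret(), rsb.ret()]  # the two real returns, innermost first


if __name__ == "__main__":
    print([hex(a) for a in simulate_mispredict(recover=False)])
    print([hex(a) for a in simulate_mispredict(recover=True)])
```

Without recovery, the first real return is steered to the bogus address left behind by the wrong path. With recovery, the properly paired calls and returns get the right addresses back.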

53 Comments

Yongsta - Tuesday, March 18, 2008 - link

Anime Characters don't count.

RamIt - Monday, March 17, 2008 - link

Yep, you are the only one that cares :)

teko - Monday, March 17, 2008 - link

I also ponder on the title and image. Actually, I clicked and read the whole article trying to look for a connection.

Yea, I think it's bad taste.

Owls - Tuesday, March 18, 2008 - link

Who cares. If only Elliot Spitzer paid his hookers in Penryns no one would have known.

Deville - Monday, March 17, 2008 - link

Wow! Exciting stuff!

AMD? Hello???

InternetGeek - Monday, March 17, 2008 - link

But would you rather buy a Tick processor or a Tock processor?

In any case you have to accept that the following generation (Tick or Tock) will perform faster. It's how Intel makes money.

The scenario is keeping your computer for 3-4 years. I reckon that's still the average time. Basically when your GPU can no longer play the new games decently. On the CPU side, I think buying a Tock processor might be a better deal because you're getting the refined version of your generation.

Problem is the way Intel introduces their SIMD extensions. I've seen that done randomly (Ticks or Tocks) and sometimes you do want to have those extensions.

Is there a way to correlate Ticks/Tocks and SIMD extensions?

ocyl - Monday, March 17, 2008 - link

For some unknown reasons, Intel's "tick-tock" terminology is used inversely of the same phrase's common understanding, per Page 4 of the article. With Intel, "tock" refers to a brand new generation, while "tick" refers to refinement of the current generation. Why this is the case, I have no idea.

InternetGeek - Monday, March 17, 2008 - link

I think you got it wrong. It is perfectly explained at http://www.intel.com/technology/tick-tock/index.ht...

Tick is a new silicon process, Tock is an upgrade.

---

Year 1: "Tick"

Intel delivers a new silicon process technology that dramatically increases transistor density versus the previous generation. This technology is used to enhance performance and energy efficiency by shrinking and refining the existing microarchitecture.

Year 2: "Tock"

Intel delivers an entirely new processor microarchitecture to optimize the value of the increased number of transistor and technology updates that are now available

---

InternetGeek - Monday, March 17, 2008 - link

Ehm, You're actually correct. Tick is the upgrade. Tock is the new thing.

masher2 - Monday, March 17, 2008 - link

Not quite. "Tick" is the new silicon process (the shrink). "Tock" is the new uCore architecture. They're both "new things".

From a refinement perspective, the Tick generation will also typically include uCore refinements, and the Tock likewise includes process refinements.