The Road to Acquisition

The CPU and the GPU have been on this collision course for quite some time. Although we often refer to the CPU as a general purpose processor and the GPU as a graphics processor, the reality is that they are both general purpose. The GPU is merely a highly parallel general purpose processor, one that is particularly well suited to certain applications such as 3D gaming. As the GPU became more programmable and thus more general purpose, its highly parallel nature became interesting to new classes of applications: things like scientific computing are now within the realm of possibility for execution on a GPU.

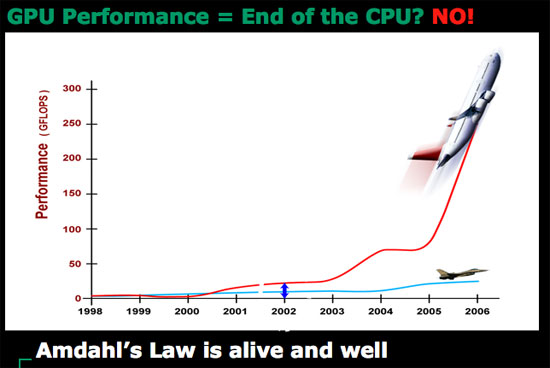

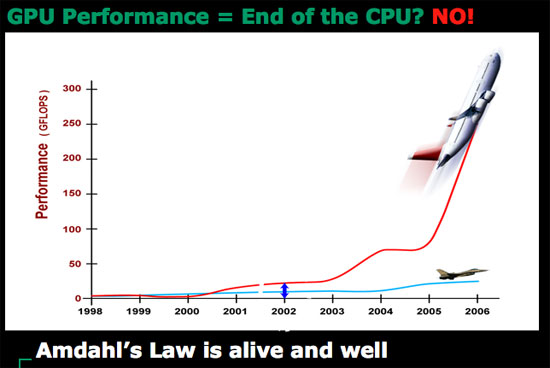

Today's GPUs are vastly superior to what we currently call desktop CPUs when it comes to things like 3D gaming, video decoding and a lot of HPC applications. The problem is that a GPU is fairly worthless at sequential tasks, meaning that it relies on a fast host CPU to handle everything it isn't good at.

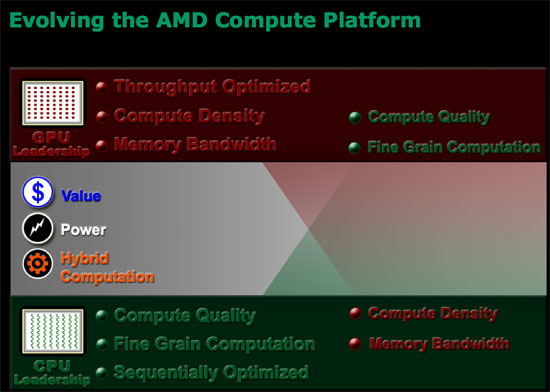

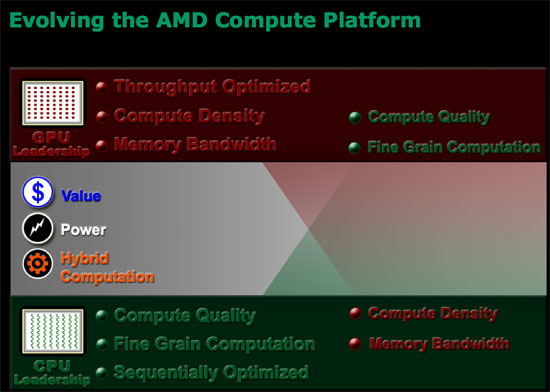

ATI discovered that in the long term, as the GPU grows in power, it will eventually be bottlenecked by its ability to do high speed sequential processing. In the same vein, the CPU will eventually be bottlenecked by its ability to do highly parallel processing. In other words, GPUs need CPUs and CPUs need GPUs for all workloads going forward. Neither approach alone will solve every problem or run every program out there optimally; the combination of the two is what is necessary.
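The bottleneck argument can be illustrated with Amdahl's law, which the article does not name but which captures the same reasoning. The sketch below is only an illustration: the function name `amdahl_speedup` and the 90% parallel fraction are assumptions chosen for the example, not figures from ATI or AMD.

```python
def amdahl_speedup(parallel_fraction: float, parallel_speedup: float) -> float:
    """Overall speedup when only part of a workload is accelerated.

    parallel_fraction: share of the original run time that can run on the
        parallel processor (hypothetical value, between 0 and 1).
    parallel_speedup: how many times faster that share runs on the GPU.
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if parallel_speedup <= 0:
        raise ValueError("parallel_speedup must be positive")
    # The sequential share still runs at the original (CPU) speed.
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / parallel_speedup)


if __name__ == "__main__":
    # Assume 90% of the work is parallel and keep making the GPU faster.
    for gpu_speedup in (10, 100, 1000):
        overall = amdahl_speedup(0.9, gpu_speedup)
        print(f"GPU {gpu_speedup}x faster -> overall {overall:.2f}x")
```

Under these assumed numbers the overall speedup can approach but never reach 10x, however fast the parallel part becomes, because the remaining 10% of sequential work sets the ceiling. That is the sense in which a stronger sequential processor matters more as the GPU grows in power.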

ATI came to this realization originally when looking at the possibilities for using its GPU for general purpose computing (GPGPU), even before AMD began talking to ATI about a potential acquisition. ATI's Bob Drebin (formerly the CTO of ATI, now CTO of AMD's Graphics Products Group) told us that as he began looking at the potential for ATI's GPUs he realized that ATI needed a strong sequential processor.

We wanted to know how Bob's team solved the problem, because obviously they had to come up with a solution other than "get acquired by AMD". Bob didn't answer the question directly, but he did say, with a smile, that ATI tried to pair its GPUs with as low-power a sequential processor as possible and always ran into the same problem: the sequential processor became the bottleneck. In the end, Bob believes that the AMD acquisition made the most sense because the new company is able to combine a strong sequential processor with a strong parallel processor, eventually integrating the two on a single die. We really wanted to know what ATI's "plan B" was, had the acquisition not worked out, because we're guessing that ATI's backup plan is probably very similar to what NVIDIA has planned for its future.

To understand the point of combining a highly sequential processor like a modern-day desktop CPU with a highly parallel GPU, you have to look beyond the gaming market, into what AMD is calling stream computing. AMD perceives a number of potential applications that will require a very GPU-like architecture to solve, and we already see some of them today. Simply watching an HD DVD can eat up almost 100% of some of the fastest dual-core processors today, while a GPU can perform the same decoding task with much better power efficiency. H.264 encoding and decoding are perfect examples of tasks that are better suited to highly parallel processor architectures than to what desktop CPUs are currently built on. But just as video processing is important, so are general productivity tasks, which is where we need the strengths of present-day out-of-order superscalar CPUs. A combined architecture that can excel at both types of applications is clearly a direction that desktop CPUs need to target in order to remain relevant in future applications.

Future applications will easily combine stream computing with more sequential tasks, and we already see some of that now with web browsers. Imagine browsing a site like YouTube, except that all of the content is much higher quality and requires far more CPU (or GPU) power to play. You need the strengths of a high-powered sequential processor to deal with everything other than the video playback, but then you need the strengths of a GPU to actually handle the video. Examples like this one are overly simple, as it is very difficult to predict the direction software will take when given even more processing power; the point is that CPUs will inevitably have to merge with GPUs in order to handle these types of applications.

55 Comments

Regs - Friday, May 11, 2007

Tight lipped does make AMD look bad right now but could be even worse for them after Intel has their way with the information alone. I'm not talking about technology or performance, I'm talking about marketing and pure business politics. Intel beat AMD to market by a huge margin and I think it would be insane for AMD to go ahead and post numbers and specifications while Intel has more than enough time to make whatever AMD is offering look bad before it hits the shelves or comes into contact with a Dell machine.

strikeback03 - Friday, May 11, 2007

Intel cut the price of all the C2D processors by one slot in the tree - the Q6600 to the former price of the E6700, the E6700 to the former price of the E6600, the E6600 to the former price of the E6400, etc. Anandtech covered this a month or so ago after AMD cut prices.

I wonder as well. Will it be relatively easy to mix and match features as needed? Or will the offerings be laid out so that most people end up paying for a feature they don't want for each feature they do?

yyrkoon - Friday, May 11, 2007

Yeah, it's hard to take this piece of 'information' without a grain of salt added. On one hand you have the good side, true integrated graphics (not this shitty thing of the past, hopefully . . .), with full bus speed communication, and whatnot, but on the other hand, you cut out discrete manufacturers like nVidia, which in the long run, we are not only talking about just discrete graphics cards, but also one of the best/competing chipset makers out there.

Regs - Friday, May 11, 2007

The new attitude Anand displays with AMD is more than enough and likely the whole point of the article. AMD is changing for a more aggressive stance. Something they should have done years ago.

Stablecannon - Friday, May 11, 2007

Aggressive? I'm sorry, could you refer me to the article that gave you that idea? I must have missed it while I was at work.

Regs - Friday, May 11, 2007

Did you skim? There were at least two whole paragraphs. Though I hate to quote so much content, I guess it's needed.

sprockkets - Friday, May 11, 2007

What is there that is getting anyone excited to upgrade to a new system? We need faster processors and GPUs? Sure, so we can play better games. That's it? Now we can do HD content. I would be much more excited about that except it is encumbered to the bone by DRM.

I just wish we had a competent processor that only needs a heatsink to be cooled.

Not sure what you are saying since over a year ago they would have been demoing perhaps 65nm cells, but whatever.

And as far as Intel reacting, they are already on overdrive with their product releases, FSB bumps, updating the CPU architecture every 2 years instead of 3, new chipsets every 6 months, etc. I guess when you told people we would have 10 GHz Pentium 4s and lost your credibility, you need to make up for it somehow.

Then again, if AMD shows off benchmarks, what good would it do? The desktop variants we can buy are many months away.

Viditor - Saturday, May 12, 2007

In April of 2006, AMD demonstrated 45nm SRAM. This was 3 months after Intel did the same...

sprockkets - Friday, May 11, 2007

To reply to myself, perhaps the Fusion project is the best thing coming. If we can have a standard set of instructions for CPU and GPU, we will no longer need video drivers, and perhaps we can have a set that works at very low power. THAT is what I want. Wish they talked more of DTX.

TA152H - Friday, May 11, 2007

I agree with you about only needing a heat sink, I still use Pentium IIIs in most of my machines for exactly that reason. I also prefer slotted processors to the lame socketed ones, but they cost more and are unnecessary so I guess they aren't going to come back. They are so much easier to work with though. I wish AMD or Intel would come out with something running around 1.4 GHz that used 10 watts or less. I bought a VIA running at 800 MHz a few years ago, but it is incredibly slow. You're better off with a K6-III+ system, you get better performance and about the same power use. Still, it looks like Intel and AMD are blind to this market, or minimally myopic, so it looks like VIA/Centaur is the best hope there. The part I don't get is why they superpipeline something for high clock speed when they are going for low power. It seems to me an upgraded K6-III would be better at something like this, since by comparison the Pentium/Athlon/Core lines offer poor performance for the power compared to the K6 line, considering it's made on old lithography. So does the VIA, and that's what it's designed for. I don't get it. Maybe AMD should bring it back as their ultra-low power design. Actually, maybe they are. On a platform with reasonable memory bandwidth, it could be a real winner.