Power Within Reach: NVIDIA's GeForce 8800 GTS 320MB

by Derek Wilson on February 12, 2007 9:00 AM EST - Posted in GPUs

The 8800 GTS 320MB and The Test

Normally, when a new part is introduced, we would spend some time talking about the number of pipelines, compute power, bandwidth, and all the other juicy bits of hardware goodness. But this time around, all we need to do is point back to our original review of the G80. The only difference between the original 8800 GTS and the new 8800 GTS 320MB is the amount of RAM on board.

The GeForce 8800 GTS 320MB uses the same number of 32-bit wide memory modules as the 640MB version (grouped in pairs to form 5 64-bit wide channels). The difference is in density: the 640MB version uses 10 64MB modules, whereas the 320MB uses 10 32MB modules. That makes it a little easier for us, as all the processing power, features, theoretical peak numbers, and the like stay the same. It also makes it very interesting, as we have a direct comparison point through which to learn just how much impact that extra 320MB of RAM has on performance.
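The arithmetic behind that memory layout is simple enough to check by hand, but a short sketch makes the comparison explicit. The function and constant names below are our own, chosen for illustration; the module counts, widths, and densities come straight from the description above.

```python
# Memory layout as described in the text: ten 32-bit modules,
# grouped in pairs to form 64-bit channels.
MODULE_COUNT = 10
MODULE_WIDTH_BITS = 32
MODULES_PER_CHANNEL = 2


def memory_config(module_size_mb):
    """Derive channel count, bus width, and capacity for a given module density."""
    return {
        "channels": MODULE_COUNT // MODULES_PER_CHANNEL,
        "channel_width_bits": MODULE_WIDTH_BITS * MODULES_PER_CHANNEL,
        "bus_width_bits": MODULE_COUNT * MODULE_WIDTH_BITS,
        "capacity_mb": MODULE_COUNT * module_size_mb,
    }


if __name__ == "__main__":
    # Only the module density differs between the two cards.
    for name, size_mb in (("8800 GTS 640MB", 64), ("8800 GTS 320MB", 32)):
        print(name, memory_config(size_mb))
```

Everything except `capacity_mb` comes out identical for the two cards, which is why the theoretical peak numbers carry over unchanged.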

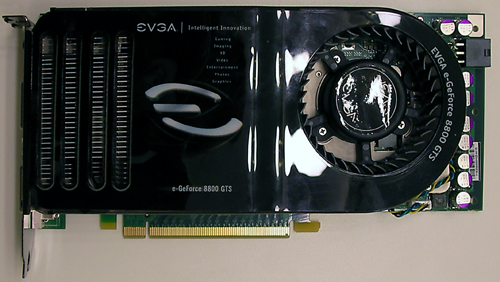

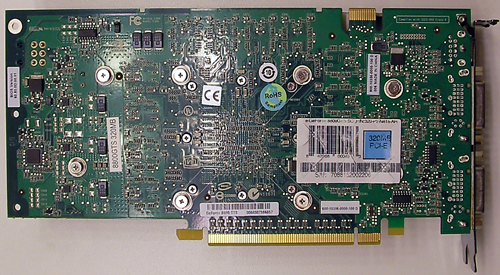

Here's a look at the card itself. There really aren't any visible differences in the layout or design of the hardware. The only major difference is the use of the traditional green PCB rather than the black of the recent 8800 parts we've seen.

Interestingly, our EVGA sample shipped with a hefty factory overclock. Core and shader speeds were at 8800 GTX levels, and memory weighed in at 850MHz. In order to test the stock speeds of the 8800 GTS 320MB, we made use of software to edit and flash the BIOS on the card. The 576MHz core and 1350MHz shader clocks were set down to 500MHz and 1200MHz respectively, and memory was adjusted down to 800MHz as well. This isn't something we recommend people run out and try, as we almost trashed our card a couple of times, but it got the job done.
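To put the factory overclock in perspective, here is a rough sketch that works out how far each clock sat above stock, along with the theoretical peak memory bandwidth at each memory speed. The helper names are hypothetical. The bandwidth figure assumes the 320-bit bus implied by ten 32-bit modules and two transfers per clock (GDDR3 is double data rate); that factor of two is our assumption, not something stated in this article.

```python
# Shipped (EVGA sample) vs. stock clocks in MHz, taken from the text.
CLOCKS_MHZ = {
    "core": (576, 500),
    "shader": (1350, 1200),
    "memory": (850, 800),
}


def overclock_percent(shipped_mhz, stock_mhz):
    """Percentage by which the shipped clock exceeds the stock clock."""
    return (shipped_mhz - stock_mhz) / stock_mhz * 100


def peak_bandwidth_gb_s(memory_mhz, bus_width_bits=320, transfers_per_clock=2):
    """Theoretical peak memory bandwidth in GB/s.

    Assumes a 320-bit bus (10 x 32-bit modules) and double data rate.
    """
    bytes_per_second = memory_mhz * 1e6 * transfers_per_clock * bus_width_bits / 8
    return bytes_per_second / 1e9


if __name__ == "__main__":
    for domain, (shipped, stock) in CLOCKS_MHZ.items():
        print(f"{domain}: {overclock_percent(shipped, stock):.2f}% over stock")
    for mhz in (800, 850):
        print(f"{mhz}MHz memory: {peak_bandwidth_gb_s(mhz):.1f} GB/s peak")
```

These are theoretical ceilings, not measured results; real-world differences depend on how bandwidth-bound or shader-bound a given game is.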

The test system is the same as we have used in our recent graphics hardware reviews:

| System Test Configuration | |
| --- | --- |
| CPU: | Intel Core 2 Extreme X6800 (2.93GHz/4MB) |
| Motherboard: | EVGA nForce 680i SLI |
| Chipset: | NVIDIA nForce 680i SLI |
| Chipset Drivers: | NVIDIA nForce 9.35 |
| Hard Disk: | Seagate 7200.7 160GB SATA |
| Memory: | Corsair XMS2 DDR2-800 4-4-4-12 (1GB x 2) |
| Video Card: | Various |
| Video Drivers: | ATI Catalyst 7.1; NVIDIA ForceWare 93.71 (G7x); NVIDIA ForceWare 97.92 (G80) |
| Desktop Resolution: | 2560 x 1600 - 32-bit @ 60Hz |
| OS: | Windows XP Professional SP2 |

55 Comments

Marlin1975 - Monday, February 12, 2007 - link

What's up with all the super high resolutions? Most people are running 19-inch LCDs that = 1280 x 1024. How about some comparisons at that resolution?

poohbear - Tuesday, February 13, 2007 - link

have to agree here, most people game @ 12x10, especially people looking @ this price segment. Once i saw the graphs w/ only 16x12+ i didn't even think the stuff applies to me. CPU influence isn't really a factor except for 1024x768 and below, i've seen plenty of graphs that demonstrate that w/ oblivion. a faster cpu didn't show any diff until u tested @ 1024x768, 12x10 didn't show much of a diff between an AMD 3500+ and a fx60 @ that resolution (maybe 5-10fps). please try to include at least 12x10 for most of us gamers @ that rez. :) thanks for a good review nonetheless. :)

DigitalFreak - Monday, February 12, 2007 - link

WHY didn't you test at 640x480? Waaahhh

DerekWilson - Monday, February 12, 2007 - link

Performance at 1280x1024 is pretty easily extrapolated in most cases. Not to mention CPU limited in more than one of these games. The reason we tested at 1600x1200 and up is because that's where you start to see real differences. Yes, there are games that are taxing on cards at 12x10, but both Oblivion and R6:Vegas show no difference in performance in any of our tests.

12x10 with huge levels of AA could be interesting in some of these cases, but we've also only had the card since late last week. Even though we'd love to test absolutely everything, if we don't narrow down tests we would never get reviews up.

aka1nas - Monday, February 12, 2007 - link

I completely understand your position, Derek. However, as this is a mid-range card, wouldn't it make sense to not assume that anyone looking at it will be using a monitor capable of 16x12 or higher? Realistically, people who are willing to drop that much on a display would probably be looking at the GTX (or two of them) rather than this card. The lower widescreen resolutions are pretty reasonable to show now as those are starting to become more common and affordable, but 16x12 or 19x12 capable displays are still pretty expensive.

JarredWalton - Monday, February 12, 2007 - link

1680x1050 displays are about $300, and I think they represent the best fit for a $300 (or less) GPU. 1680x1050 performance is also going to be very close - within 10% - of 1600x1200 results. For some reason, quite a few games run a bit slower in WS modes, so the net result is that 1680x1050 is usually within 3-5% of 1600x1200 performance. Lower than that, and I think people are going to be looking at midrange CPUs costing $200 or so, and at that point the CPU is definitely going to limit performance unless you want to crank up AA.

maevinj - Monday, February 12, 2007 - link

Exactly. I want to know how this card compares to a 6800gt also. I run 1280x1024 on a 19" with the 6800gt and need to know if it's worth spending 300 bucks to upgrade or just wait.

DerekWilson - Monday, February 12, 2007 - link

The 8800 GTS is much more powerful than the 6800 GT ... but at 12x10, you'll probably be so CPU limited that you won't get as much benefit out of the card as you would like. This is especially true if also running a much slower processor than our X6800. Performance of the card will be very limited.

If DX10 and all the 8 series features are what you want, you're best off waiting. There aren't any games or apps that take any real advantage of these features yet, and NVIDIA will be coming out with DX10 parts suitable for slower systems or people on more of a budget.

aka1nas - Monday, February 12, 2007 - link

It would be nice to actually have quantifiable proof of that, though. 1280x1024 is going to be the most common gamer resolution for at least another year or two until the larger panels come down in price a bit more. I for one would like to know if I should bother upgrading to a 8800GTS from my X1900XT 512MB but it's already getting hard to find direct comparisons.

Souka - Monday, February 12, 2007 - link

It's faster than a 6800gt.... what else do you want to know. :P