NVIDIA's GeForce 8800 (G80): GPUs Re-architected for DirectX 10

by Anand Lal Shimpi & Derek Wilson on November 8, 2006 6:01 PM EST

What is CSAA?

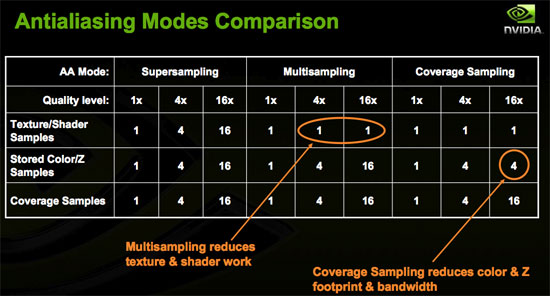

Taking another step forward in antialiasing quality and performance, NVIDIA is introducing Coverage Sample Antialiasing (CSAA) with G80. CSAA is an evolutionary advance in AA technology, designed to improve how accurately the hardware can determine the area of a pixel covered by any given surface, and it can be thought of as an extension of MSAA. NVIDIA is calling all of its AA modes CSAA, even though the common modes (2x, 4x, and now 8x, which NVIDIA calls 8xQ) are performed exactly the same way as MSAA.

To enable modes that more accurately represent each polygon's coverage of a pixel, NVIDIA has introduced an "Enhance the application" option in its driver. This option lets you select an MSAA mode in a game (either 4x or 8x) and then "enhance" it by enabling 8x, 16x, or 16xQ CSAA, making the 4xAA requested in the game look like 8xAA or 16xAA. Enhancing 8x to 16xQ gives the effect of 16x MSAA without the huge performance impact that would be associated with such a setting.

To understand how it all comes together, let's take a quick look at fragments and the evolution of AA.

We usually refer to fragments as pixels for simplicity's sake (and because Microsoft decided to use the term pixel shader rather than fragment shader in DirectX), but it helps to understand the difference between a pixel and a fragment when talking about AA methods. A pixel is simply a colored dot on the screen (or stored in a frame buffer). The different pieces of data that go into determining the color of a particular pixel are called fragments. For example, if two triangles cover the area of a single pixel, both will be processed as fragments. Texture lookups are done for each at the pixel center, a color and depth are determined, and any of this data can be manipulated by a fragment (pixel) shader. Without AA (and ignoring blending, transparency, etc.), only the fragment that is nearest the viewer and covers the pixel center determines the color of the pixel. Antialiasing techniques make the final pixel color reflect an accurate blend of the colors of all the surfaces that cover the pixel.
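To make the no-AA rule concrete, here is a minimal Python sketch of how a single pixel's color would be picked from its fragments. The `Fragment` type and `resolve_no_aa` function are hypothetical illustrations, not anything defined by NVIDIA or DirectX, and we assume smaller depth values are nearer the viewer.

```python
from dataclasses import dataclass

Color = tuple[float, float, float]

@dataclass
class Fragment:
    color: Color
    depth: float          # assumed: smaller value = nearer the viewer
    covers_center: bool   # whether the triangle covers the pixel center

def resolve_no_aa(fragments: list[Fragment], background: Color = (0.0, 0.0, 0.0)) -> Color:
    # Only fragments that cover the pixel center are candidates; the nearest one wins.
    candidates = [f for f in fragments if f.covers_center]
    if not candidates:
        return background
    return min(candidates, key=lambda f: f.depth).color

red = Fragment((1.0, 0.0, 0.0), depth=0.2, covers_center=False)  # nearer, but misses the center
blue = Fragment((0.0, 0.0, 1.0), depth=0.5, covers_center=True)
print(resolve_no_aa([red, blue]))  # (0.0, 0.0, 1.0)
```

The red triangle contributes nothing even if it covers most of the pixel, which is exactly the all-or-nothing behavior that produces jagged edges.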

A sub-pixel can be thought of as a zoomed-in look at the area a pixel covers: instead of a single pixel, it can be viewed as, for example, a 10x10 grid of sub-pixels. Currently popular FSAA (full screen AA) methods determine the final color from the calculated colors of multiple sub-pixels within the pixel's area rather than from the pixel center alone. Supersample AA (SSAA) takes each of these sub-pixels through the entire pipeline to determine texture and pixel shader output at each location. This is very accurate, but it wastes a lot of processing power without a proportional benefit, because sub-pixels that fall on the same surface don't usually end up with very different colors. MSAA only computes one textured/shaded sample per fragment: the sub-pixels a polygon covers all take the color computed at the pixel center, but each sub-pixel gets its own depth value. When two polygons cover the same pixel, we can end up with different colored sub-pixels, and blending these colors proportionally results in properly antialiased polygon edges.
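The difference in shading cost between SSAA and MSAA can be sketched in a few lines of Python. This is a toy model under our own assumptions: a regular 4x4 sample grid (real hardware uses other sample patterns), triangles described by a coverage test, one constant depth per triangle, and a shading function standing in for texturing and pixel shading. `Triangle` and `render_pixel` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Callable

Color = tuple[float, float, float]
Point = tuple[float, float]

@dataclass
class Triangle:
    covers: Callable[[Point], bool]   # does the triangle cover this sample position?
    depth: float                      # assumed constant across the pixel
    shade: Callable[[Point], Color]   # stands in for texturing + pixel shading

def sample_grid(n: int = 4) -> list[Point]:
    # Regular n x n sample positions inside the unit pixel.
    return [((i + 0.5) / n, (j + 0.5) / n) for j in range(n) for i in range(n)]

def render_pixel(tris: list[Triangle], mode: str, n: int = 4,
                 background: Color = (0.0, 0.0, 0.0)) -> tuple[Color, int]:
    samples = sample_grid(n)
    colors = [background] * len(samples)
    depths = [float("inf")] * len(samples)   # every sample keeps its own depth
    shader_calls = 0
    for tri in tris:
        covered = [k for k, p in enumerate(samples) if tri.covers(p)]
        if not covered:
            continue
        center_color = None
        if mode == "msaa":
            center_color = tri.shade((0.5, 0.5))   # shade once, at the pixel center
            shader_calls += 1
        for k in covered:
            if tri.depth < depths[k]:              # per-sample depth test in both modes
                depths[k] = tri.depth
                if mode == "ssaa":
                    colors[k] = tri.shade(samples[k])  # shade every covered sample
                    shader_calls += 1
                else:
                    colors[k] = center_color
    # Resolve: blend the sub-pixel colors in proportion to how many samples each has.
    final = tuple(sum(c[ch] for c in colors) / len(colors) for ch in range(3))
    return final, shader_calls

left_half = Triangle(covers=lambda p: p[0] < 0.5, depth=0.5, shade=lambda p: (1.0, 0.0, 0.0))
print(render_pixel([left_half], "ssaa"))  # ((0.5, 0.0, 0.0), 8)
print(render_pixel([left_half], "msaa"))  # ((0.5, 0.0, 0.0), 1)
```

For a flat red triangle covering the left half of the pixel, both modes resolve to the same 50% red edge pixel, but SSAA runs the shader eight times while MSAA runs it once.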

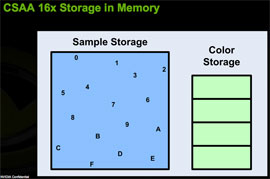

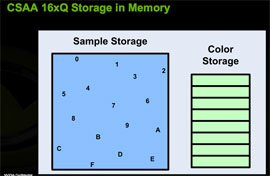

CSAA extends MSAA by decoupling the stored color and depth values from the positions of the sample points within a pixel. As with MSAA, color values are determined at the pixel center, and color and depth data are stored in a buffer. CSAA's extension is that the hardware can look at more sample points in the pixel than it stores color/Z data for. Under NVIDIA's 16x CSAA, four color values are stored, but the fragment coverage information for each of 16 sample points is retained. These coverage sample points are able to reference the appropriate color/Z data stored for the polygon that covers them.
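The resolve step can be sketched as follows, assuming the layout described above: each coverage sample holds either an index into the pixel's stored color values or nothing (no stored surface, so background). `resolve_csaa` is a hypothetical function; NVIDIA has not published how its hardware actually resolves CSAA.

```python
from typing import Optional

Color = tuple[float, float, float]

def resolve_csaa(stored_colors: list[Color], coverage: list[Optional[int]],
                 background: Color = (0.0, 0.0, 0.0)) -> Color:
    """Average over coverage samples; each one contributes the stored color it references.

    stored_colors: color values kept for the pixel (up to 4 under 16x CSAA)
    coverage: one entry per coverage sample (16 under 16x CSAA), holding an index
              into stored_colors, or None if no stored surface covers that sample
    """
    samples = [stored_colors[i] if i is not None else background for i in coverage]
    return tuple(sum(c[ch] for c in samples) / len(samples) for ch in range(3))

red, blue = (1.0, 0.0, 0.0), (0.0, 0.0, 1.0)
coverage = [0] * 5 + [1] * 11   # 5 coverage samples see red, 11 see blue
print(resolve_csaa([red, blue], coverage))  # (0.3125, 0.0, 0.6875)
```

Only two color values are stored, yet the edge is weighted in sixteenths (5/16 red, 11/16 blue); a pixel with four MSAA samples could only weight surfaces in steps of 1/4.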


While NVIDIA couldn't go into much detail on the technology behind CSAA, we can extrapolate what's going on behind the scenes. For each triangle that covers a pixel, each CSAA sample point gets a boolean value that indicates whether or not it is covered by the triangle. Color/Z data for the fragment are stored in a buffer for that pixel. For this whole thing to work, each CSAA sample point must also know which color in the buffer it refers to. If we assume sample positions are predefined, the most storage each CSAA point would need is 4 bits (one boolean coverage value plus 3 bits to index up to 8 color/Z values). With 16 coverage samples, that comes to 16 × 4 = 64 bits, or 8 bytes per pixel. The color and Z data will be significantly larger than 8 bytes per pixel, especially for floating point color data, so the memory footprint shouldn't be much larger than that of MSAA.
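The storage estimate above can be checked with a small bit-packing sketch. The layout (coverage bit at the top of each 4-bit nibble, slot index in the low 3 bits) is our own hypothetical choice, as are the per-sample sizes used for comparison: 4 bytes for RGBA8 color, 8 bytes for FP16 RGBA color, and 4 bytes for 24-bit Z plus 8-bit stencil. We also assume a 16xQ-style mode stores 8 color/Z values.

```python
# Hypothetical packing of CSAA coverage data: 4 bits per coverage sample
# (1 coverage bit + a 3-bit index into up to 8 stored color/Z values).
BITS_PER_SAMPLE = 4
NUM_COVERAGE_SAMPLES = 16

def pack_coverage(entries):
    """Pack 16 (covered, slot_index) pairs into one integer, one nibble each."""
    assert len(entries) == NUM_COVERAGE_SAMPLES
    word = 0
    for k, (covered, slot) in enumerate(entries):
        assert 0 <= slot < 8                  # 3 bits can index 8 color/Z values
        nibble = (int(covered) << 3) | slot   # top bit = coverage, low 3 bits = slot
        word |= nibble << (k * BITS_PER_SAMPLE)
    return word

def unpack_coverage(word):
    """Inverse of pack_coverage."""
    out = []
    for k in range(NUM_COVERAGE_SAMPLES):
        nibble = (word >> (k * BITS_PER_SAMPLE)) & 0xF
        out.append((bool(nibble >> 3), nibble & 0b111))
    return out

def coverage_bytes_per_pixel():
    return NUM_COVERAGE_SAMPLES * BITS_PER_SAMPLE // 8   # 64 bits -> 8 bytes

def color_z_bytes_per_pixel(stored_samples, color_bytes, z_bytes=4):
    # Assumed sizes: color_bytes = 4 (RGBA8) or 8 (FP16 RGBA); z_bytes = 4 (Z24S8).
    return stored_samples * (color_bytes + z_bytes)

print(coverage_bytes_per_pixel())        # 8
print(color_z_bytes_per_pixel(4, 4))     # 32: 4 stored values, RGBA8 color
print(color_z_bytes_per_pixel(8, 8))     # 96: 8 stored values, FP16 color
```

Even in the cheapest case, the color/Z storage is four times the coverage overhead, which is why the extra coverage samples add relatively little to the memory footprint.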

As fragments leave the pixel shader, sub-pixel data is updated based on depth tests, with coverage samples and color/Z data updated as necessary. When the scene is ready to be displayed, the coverage samples and color/Z data are used to determine the color of each pixel based on every fragment that influenced it.
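Putting the pieces together, the update-then-resolve flow might look something like the following sketch. Everything here is speculation layered on top of ours: the `CsaaPixel` class, depth-testing each coverage sample against the Z stored in the slot it references, using one flat depth per fragment, and dropping a fragment when all color/Z slots are taken are assumptions for illustration, not NVIDIA's documented behavior.

```python
from typing import Optional

Color = tuple[float, float, float]

class CsaaPixel:
    """Speculative CSAA pixel: a few (color, depth) slots plus coverage samples that reference them."""

    def __init__(self, num_slots: int = 4, num_coverage: int = 16):
        self.slots: list[Optional[tuple[Color, float]]] = [None] * num_slots
        self.ref: list[Optional[int]] = [None] * num_coverage   # slot per coverage sample

    def _free_slot(self, overwritten: set) -> Optional[int]:
        # A slot is free if no coverage sample outside this update still points at it.
        used = {r for k, r in enumerate(self.ref) if r is not None and k not in overwritten}
        return next((i for i in range(len(self.slots)) if i not in used), None)

    def add_fragment(self, color: Color, depth: float, mask: list) -> None:
        # Depth-test each covered sample against the Z of the slot it currently references.
        winners = {k for k, hit in enumerate(mask)
                   if hit and (self.ref[k] is None or depth < self.slots[self.ref[k]][1])}
        if not winners:
            return
        slot = self._free_slot(winners)
        if slot is None:
            return  # no free color/Z storage: this sketch simply drops the fragment
        self.slots[slot] = (color, depth)
        for k in winners:
            self.ref[k] = slot

    def resolve(self, background: Color = (0.0, 0.0, 0.0)) -> Color:
        # Average over coverage samples, each contributing its referenced color.
        colors = [self.slots[r][0] if r is not None else background for r in self.ref]
        return tuple(sum(c[ch] for c in colors) / len(colors) for ch in range(3))

pixel = CsaaPixel()
red, blue = (1.0, 0.0, 0.0), (0.0, 0.0, 1.0)
pixel.add_fragment(red, 0.5, [k < 8 for k in range(16)])     # farther surface, samples 0-7
pixel.add_fragment(blue, 0.3, [k >= 4 for k in range(16)])   # nearer surface, samples 4-15
print(pixel.resolve())  # (0.25, 0.0, 0.75)
```

Samples 0-3 stay red and samples 4-15 switch to the nearer blue surface, so the pixel resolves to 25% red and 75% blue while using only two of its four color/Z slots.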

So what are the downsides? We have less depth information inside the pixel, but in most cases this isn't as important as color information. We do need to know depth at different sub-pixel positions in order to handle intersecting polygons, but doing this with a different level of detail than color information shouldn't have a big impact on quality.

The other drawback is that algorithms that require stencil/Z data at sub-pixel locations will not work correctly with CSAA in modes where there are more coverage samples than colors stored. In these cases, like with the stencil shadows used in FEAR, only the coverage samples located where color values are taken are used. This effectively reverts these algorithms to MSAA quality levels. CSAA will still be applied to polygon edges, and stencil algorithms will still work with the decreased level of antialiasing applied.

At a basic level, CSAA can provide more accurate coverage information for a pixel without the storage requirements of an MSAA mode with the same number of samples. This gives gamers the option to enable higher quality AA without a large performance impact. While the explanation of how it does this may be overly complex, here's a simple table to help convey what's going on:

111 Comments


JarredWalton - Wednesday, November 8, 2006

Page 17: "The dual SLI connectors are for future applications, such as daisy chaining three G80 based GPUs, much like ATI's latest CrossFire offerings."

Using a third GPU for physics processing is another possibility, once NVIDIA begins accelerating physics on their GPUs (something that has apparently been in the works for a year or so now).

Missing Ghost - Wednesday, November 8, 2006

So it seems that by subtracting the single 8800 GTX power result from the highest 8800 GTX SLI result, we can conclude that the card can use as much as 205W. Does anybody know if this number could increase when the card is used in DX10 mode?

JarredWalton - Wednesday, November 8, 2006

Without DX10 games and an OS, we can't test it yet. Sorry.

JarredWalton - Wednesday, November 8, 2006

Incidentally, I would expect the added power draw in SLI to come from more than just the GPU. The CPU, RAM, and other components are likely pushed to a higher demand with SLI/CF than when running a single card. Look at FEAR as an example; here are the power differences (dual GPU minus single GPU) for the various cards. (Oblivion doesn't have X1950 CF numbers, unfortunately.)

X1950 XTX: 91.3W

7900 GTX: 102.7W

7950 GX2: 121.0W

8800 GTX: 164.8W

Notice how in this case, the X1950 XTX appears to use less power than the other cards, but that's clearly not the case in single GPU configurations, where it draws more than everything except the 8800 GTX. Here are the Prey results as well:

X1950 XTX: 111.4W

7900 GTX: 115.6W

7950 GX2: 70.9W

8800 GTX: 192.4W

So there, GX2 looks like it is more power efficient, mostly because QSLI isn't doing any good. Anyway, simple subtraction relative to dual GPUs isn't enough to determine the actual power draw of any card. That's why we presented the power data without a lot of commentary - we need to do further research before we come to any final conclusions.

IntelUser2000 - Wednesday, November 8, 2006

It looks like adding SLI uses about 170W more power. You can see how significant the video card is in terms of power consumption. It blows the Pentium D away by a couple of times.

JoKeRr - Wednesday, November 8, 2006

Well, keep in mind the inefficiency of the PSU, which is generally around 80% efficient, so as overall power draw increases, the power lost in the PSU increases as well. If you multiply 170W by 0.8, you get about 136W. I suppose the power draw is measured at the wall.

DerekWilson - Thursday, November 9, 2006

Max TDP of G80 is at most 185W; NVIDIA revised this to something in the 170W range, but we know it won't get over 185W in any case.

But games generally don't make a card draw max power ... 3DMark, on the other hand ...

photoguy99 - Wednesday, November 8, 2006

Isn't 1920x1440 a resolution that almost no one uses in real life?

Wouldn't 1920x1200 apply to many more people?

It seems almost all 23" and 24" monitors, and many high-end laptops, have 1920x1200.

Yes, we could interpolate benchmarks, but why, when no one uses 1440 vertical?

Frallan - Saturday, November 11, 2006

Well, I have one more suggestion for a resolution. Full HD is 1920x1080, which is sure to be found in a lot of homes in the future (after X-mas, anyone? ;0) ) on large LCDs. I believe it would be a good idea to throw that in there as well, especially right now, since loads of people will have to decide how to spend their money. The 37" Full HD is a given, but on what system will I be gaming: PS-3/X-Box/PC? Pls advise.

JarredWalton - Wednesday, November 8, 2006

This should be the last time we use that resolution. We're moving to LCD resolutions, but Derek still did a lot of testing (all the lower resolutions) on his trusty old CRT. LOL