The GPU Advances: ATI's Stream Processing & Folding@Home

by Ryan Smith on September 30, 2006 8:00 PM EST- Posted in

- GPUs

Folding@Home

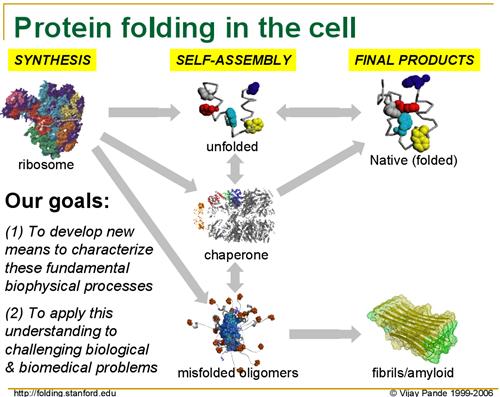

For several years now, Dr. Vijay Pande of Stanford has been leading the Folding@Home project in order to research protein folding. Without diving unnecessarily into the biology of his research, as proteins are produced from their basic building blocks - amino acids - they must go through a folding process to achieve the right shape to perform their intended function. However, for numerous reasons protein folding can go wrong, and when it does it can cause various diseases as malformed proteins wreck havoc in the body.

Although Folding@Home's research involves multiple diseases, the primary disease they are focusing on at this point is Alzheimer's Disease, a brain-wasting condition affecting primarily older people where they slowly lose the ability to remember things and think clearly, eventually leading to death. As Alzheimer's is caused by malformed proteins impairing normal brain functions, understanding how exactly Alzheimer's occurs - and more importantly how to prevent and cure it - requires a better understanding on how proteins fold, why they fold incorrectly, and why malformed proteins cause even more proteins to fold incorrectly.

The biggest hurdle in this line of research is that it's very computing intensive: a single calculation can take 1 million days (that's over 2700 years) on a fast CPU. Coupled with this is the need to run multiple calculations in order to simulate the entire folding process, which can take upwards of several seconds. Even splitting this load among processors in a supercomputer, the process is still too computing intensive to complete in any reasonable amount of time; a processor will simulate 1 nanosecond of folding per day, and even if all grant money given out by the United States government was put towards buying supercomputers, it wouldn't even come close to being enough.

This is where the "@Home" portion of Folding@Home comes in. Needing even more computing power than they could hope to buy, the Folding@Home research team decided to try to spread processing to computers all throughout the world, in a process called distributed computing. Their hopes were that average computer users would be willing to donate spare/unused processor cycles to the Folding@Home project by running the Folding@Home client, which would grab small pieces of data from their central servers and return it upon completion.

The call for help was successful, as computer owners were more than willing to donate computer cycles to help with this research, and hopefully help in coming up with a way to cure diseases like Alzheimer's. Entire teams formed in a race to see who could get more processing done, including our own Team AnandTech, and the combined power of over two-hundred thousand CPUs resulted in the Folding@Home project netting over 200 Teraflops (one trillion Floating-point Operations Per Second) of sustained performance.

While this was a good enough start to do research, it was still ultimately falling short of the kind of power the Folding@Home research group needed to do the kind of long-runs they needed along side short-run research that the Folding@Home community could do. Additionally, as processors have recently hit a cap in terms of total speed in megahertz, AMD and Intel have been moving to multiple-core designs, which introduce scaling problems for the Folding@Home design and is not as effective as increasing clockspeeds.

Since CPUs were not growing at speeds satisfactory for the Folding@Home research group, and they were still well short of their goal in processing power, the focus has since returned to stream processors, and in turn GPUs. As we mentioned previously, the massive floating-point power of a GPU is well geared towards doing research work, and in the case of Folding@Home, they excel in exactly the kind of processing the project requires. To get more computing power, Folding@Home has now turned towards utilizing the power of the GPU.

For several years now, Dr. Vijay Pande of Stanford has been leading the Folding@Home project in order to research protein folding. Without diving unnecessarily into the biology of his research, as proteins are produced from their basic building blocks - amino acids - they must go through a folding process to achieve the right shape to perform their intended function. However, for numerous reasons protein folding can go wrong, and when it does it can cause various diseases as malformed proteins wreck havoc in the body.

|

| Click to enlarge |

Although Folding@Home's research involves multiple diseases, the primary disease they are focusing on at this point is Alzheimer's Disease, a brain-wasting condition affecting primarily older people where they slowly lose the ability to remember things and think clearly, eventually leading to death. As Alzheimer's is caused by malformed proteins impairing normal brain functions, understanding how exactly Alzheimer's occurs - and more importantly how to prevent and cure it - requires a better understanding on how proteins fold, why they fold incorrectly, and why malformed proteins cause even more proteins to fold incorrectly.

The biggest hurdle in this line of research is that it's very computing intensive: a single calculation can take 1 million days (that's over 2700 years) on a fast CPU. Coupled with this is the need to run multiple calculations in order to simulate the entire folding process, which can take upwards of several seconds. Even splitting this load among processors in a supercomputer, the process is still too computing intensive to complete in any reasonable amount of time; a processor will simulate 1 nanosecond of folding per day, and even if all grant money given out by the United States government was put towards buying supercomputers, it wouldn't even come close to being enough.

This is where the "@Home" portion of Folding@Home comes in. Needing even more computing power than they could hope to buy, the Folding@Home research team decided to try to spread processing to computers all throughout the world, in a process called distributed computing. Their hopes were that average computer users would be willing to donate spare/unused processor cycles to the Folding@Home project by running the Folding@Home client, which would grab small pieces of data from their central servers and return it upon completion.

The call for help was successful, as computer owners were more than willing to donate computer cycles to help with this research, and hopefully help in coming up with a way to cure diseases like Alzheimer's. Entire teams formed in a race to see who could get more processing done, including our own Team AnandTech, and the combined power of over two-hundred thousand CPUs resulted in the Folding@Home project netting over 200 Teraflops (one trillion Floating-point Operations Per Second) of sustained performance.

While this was a good enough start to do research, it was still ultimately falling short of the kind of power the Folding@Home research group needed to do the kind of long-runs they needed along side short-run research that the Folding@Home community could do. Additionally, as processors have recently hit a cap in terms of total speed in megahertz, AMD and Intel have been moving to multiple-core designs, which introduce scaling problems for the Folding@Home design and is not as effective as increasing clockspeeds.

Since CPUs were not growing at speeds satisfactory for the Folding@Home research group, and they were still well short of their goal in processing power, the focus has since returned to stream processors, and in turn GPUs. As we mentioned previously, the massive floating-point power of a GPU is well geared towards doing research work, and in the case of Folding@Home, they excel in exactly the kind of processing the project requires. To get more computing power, Folding@Home has now turned towards utilizing the power of the GPU.

43 Comments

View All Comments

ProviaFan - Sunday, October 1, 2006 - link

The problem with F@H scaling over multiple cores is not what one might first think. I've run F@H on my Athlon X2 system since I bought it when the X2's became available in mid-2005. Since each F@H client is single-threaded, you simply install a separate command line client for each core (the GUI client can't run more than one of itself at once), and once they are installed as Windows services, they distribute nicely over all of the available CPUs. The problem with this is that each client has its own work unit with the requisite memory requirements, which with the larger units can become significant if you must run four copies of the client to keep your quad-core system happy. The scalability issues mentioned actually involve in the difficulty in making a single client with a single unit of work multi-threaded. I'm hoping that the F@H group doesn't give up trying to make this possible, because the memory requirements will become a serious issue with large work units when quad and octo core systems become more readily available.highlandsun - Sunday, October 1, 2006 - link

Two thoughts - first, buy more RAM, duh. But that raises a second point - I've got 4GB of RAM in my X2 system. If resource consumption is really such a problem, how is a GPU with a measly 256MB of RAM going to have a chance? How much of the performance is coming from having superfast GDDR available, what kind of slowdown do they see from having to swap data in and out of system memory?As for Crossfire (or SLI, for that matter) why does that matter at all, these things aren't rendering a video display any more, they don't need to talk to each other at all. You should be able to plug in as many video cards as you have slots for and run them all independently.

It sounds to me like these programs are compute-bound and not memory bandwidth-bound, so you could build a machine with 32 PCIEx1 slots and toss 32 GPUs into it and have a ball.

icarus4586 - Tuesday, October 3, 2006 - link

It depends on the type of core, and the data it's working on. There's an option when you set up the client for whether or not to do "big" WUs. I've found that a "big" WU generally uses somewhere around 500MB of system memory, while "small" ones use 100MB or less. I would assume that they'd target it to graphics card memory sizes. Given that the high-end cards they're targeting have 256MB or 512MB of RAM, this should be doable.gersson - Saturday, September 30, 2006 - link

I'm sure my PC can do some good...3.5Ghz C2D and x1900 Crossfire.I've done some Folding @ home before but could never get into it. I'll give it a spin when the new client comes out.

Pastuch - Saturday, September 30, 2006 - link

I just wanted to say thanks to Anandtech for writing this article. I have been an avid reader for years and an overclocker. People always talk about folding in the OC scene but I never took the time to learn just what folding@home is. I had no idea it was research into Alzheimer's. I'm downloading the client right now.Griswold - Sunday, October 1, 2006 - link

Unfortunately, many overclocked machines that are stable by the owners standards dont meet the standards of such projects. The box may run rock stable but can you vouch for the the results to be correct due to the fact that the system is running outside of its specs?If you read the forums of these projects, you will soon see that the people running them arent too fond of overclocking. I've never seen any figures, but I bet there are many, many work units being discarded (yet you still get credit) because they're useless. However, the benefit still seems to outweight the damage. There are just so many people contributing to the project because they want to see their name on a ranking list - without caring about the actual background. I guess this can be called a win-win situation.

JarredWalton - Sunday, October 1, 2006 - link

If a WU completes - whether on an OC'ed PC or not - it is almost always a valid result. If a WU is returned as an EUE (or generates another error that apparently stems from OC'ing), then it is reassigned to other users to verify the result. Even non-OC'ed PCs will sometimes have issues on some WUs, and Standford does try to be thorough - they might even send out all WUs several times just to be safe? Anyway, if you run OC'ed and the PC gets a lot of EUEs (Early Unit Ends), it's a good indication that your OC is not stable. Memory OCs also play a significant role.nomagic - Saturday, September 30, 2006 - link

I'd like to put some of my GPU power into some use too.Griswold - Sunday, October 1, 2006 - link

Read the article.Furen - Saturday, September 30, 2006 - link

Has ATI updated it at all? I dont have an ATI video card around here so I can't go check it out but from what I've seen it's was an extremely barebone application.