Smarter Decoding

Similar to the K8 architecture, Core pre-decodes instructions that are fetched. Pre-decode information includes instruction length and decode boundaries.A first for the x86 world, the Core architecture is equipped with four x86 decoders, 3 simple decoders and 1 complex decoder. The task of the decoders - for all current x86 CPUs - is not only to decipher the incoming instruction (opcode, addresses), but also to translate the 1 to 15 byte variable length x86 instructions into - easier to schedule and execute - fixed length RISC-like instructions (called micro-ops).

The most common x86 instructions are translated into a single micro-op by the 3 simple decoders. The complex decoder is responsible for the instructions that produce up to 4 micro-ops. The really long and complex x86 instructions are handled by a microcode sequencer. This way of handling the complex most CISC-y instructions has been adopted by all modern x86 CPU designs, including the P6, Athlon (XP and 64), and Pentium 4.

There is still more to the Core decoders. The first clever technique is macro-op fusion. It makes it possible for two relatively common x86 instructions to be fused into a single instruction. For example, the x86 compare instruction (CMP) is fused with a jump (JNE TARG). These instructions are typically the assembler result of a compiled if-then-else statement.

The result is that on average in a typical x86 program, for every 10 instruction, two x86 instructions (called macro-ops by Intel) are fused together. When two x86 instructions are fused together, the 4 decoders can decode 5 instructions in one cycle. The fused instruction travels down the pipeline as a single entity, and this has other advantages: more decode bandwidth, less space taken in the Out of Order (OoO) buffers, and less scheduling overhead. If Intel's "1 out of 10" claims are accurate, macro-ops fusion alone should account for an 11% performance boost relative to architectures that lack the technology.

The second clever technique already exists in the current P-M CPUs. There are a few x86 instructions which are pretty complex to perform, but which are at the same time a very typical and common x86 instruction. We are talking for example about mathematical operations where an address is referenced instead of a register. One common example is ADD [mem], EAX . This means add the content of register EAX to the content of a certain memory location (i.e. store the result back at the memory address). Store instructions which get broken down into store address and store data are another example.

In earlier designs such as the P6 (Pentium Pro, PII, PIII) architecture, these instruction would have been broken up into two or even three micro-ops. Remember that the whole philosophy behind all modern x86 CPUs, since the P6, is to decode x86 instructions into RISC-y micro-ops which are then fed to a fast RISC backend; the backend then schedules, issues, executes and retires the instructions in a smooth RISC way.

There is no way you could feed such an instruction (ADD [mem], EAX) to RISC execution units. It violates every RISC rule. RISC designs all load their data into the registers and then perform the necessary calculation on the registers.

So ADD [mem], EAX is broken down into:

Load the contents of [mem] into a register (Since Banias, the ALU and the Load operation are kept together in one micro-op. This is called micro-op fusion. This is no small feat: in older designs keeping the load and ALU operation together would result in pipeline stages that take much longer and thus lower the maximum clock frequency. (In CPU designs, the maximum clock speed is essentially determined by the slowest possible pipeline stage execution time.) Only by using bigger, smarter circuitry that can do a lot in parallel is micro-op fusion possible without lowering the clock speed significantly.MOV EBX, [mem])

An ALU operation, ADD the two registers together (ADD EBX, EAX)

Store the result back to memory (MOV [mem], EBX)

The pre-decode stage recognizes the macro-ops (or x86) instructions that should be kept together. In the decoding phase, ADD [mem], EAX results in one micro-op. Again, this means that the CPU can stuff more instructions in the same OoO buffers, increasing efficiency and improving performance.

Core versus Hammer: Decoding

All very nice, but let us take a look at what really matters: How do the 3 simple + 1 complex decoders of Core compare to the 3 complex decoders of AMD's K8 architecture?The original Athlon ("K7") has two way of decoding, Vector and Direct Path. The Vector Path decoding results in more than two RISC-like instructions (called "macro-ops" by AMD), the Direct Path in one, sometimes two macro-ops. Each of the decoders in K7 can handle both Vector Path and Direct Path decoding, but from a performance standpoint Direct Path is preferred since it results in fewer macro-ops. If you're wondering why were discussing K7 all of a sudden, just as Core is largely based off the P6 architecture, K8 is largely based off the K7 architecture.

The 3 complex decoders are powerful and can decode most x86 instructions, with few instructions requiring the Vector Path. The only downside of the K7 decoders is that some FP instructions and SSE instructions have to pass through the Vector Path. K8 has even stronger complex decoders and almost all FP and SSE instructions are also now decoded through the Direct Path decoders. This is possible as fetching and decoding takes more stages than it did in the K7; the K8 architecture is clearly more powerful when it comes to SIMD.

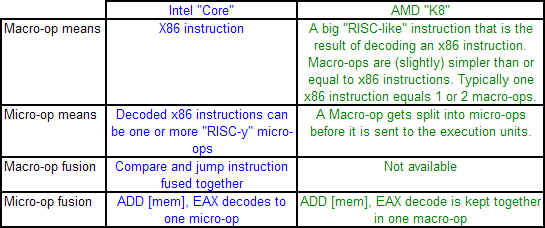

Obviously, Intel's Macro-op ( x86 instruction ) fusion does not exist in AMD's K8. However, micro-op fusion is available in another form. If we compare Intel's and AMD's macro-ops and micro-ops, it is easy to get confused. Take a look at the table below which explains the differences.

Micro-op fusion does exist in the Athlon. An ADD [mem], EAX is kept together in one macro-op as it travels through the pipeline. Therefore it will take only one place in the OoO buffers. However, the load and execute SSE/SSE2 operations can be fused on Core, while this is not the case on K8: packed SSE operations result in two macro-ops.

So how do Intel's Core and AMD's Hammer compare when it comes to decoding? It is hard to say at the moment without access to Intel's optimization manuals. However, we can get a pretty good idea. In almost every situation, the Core architecture has the advantage. It can decode 4 x86 instructions per cycle, and sometimes 5 thanks to x86 fusion. AMD's Hammer can do only 3.

The situation where AMD's 3 complex decoders can outperform Core's 1 complex + 3 simple decoders is much less likely to happen. It would happen when 3 instructions would be fetched that would have to be handled by the complex decoder of the Core CPU, but which are not too complex that the Microcode Sequencer must kick in. Since the most used x86 instructions all map to one Intel micro-op, this is pretty unlikely.

87 Comments

View All Comments

Missing Ghost - Monday, May 1, 2006 - link

hum, no. There is a 1.4gHz P6, you forgot Tualatin.JarredWalton - Monday, May 1, 2006 - link

1.26 was Tualatin as well, but that's beside the point. Basically, clock speed at launch vs. final clock speed of the architecture was a disappointment for Intel. They were hoping for 6+ GHz at launch, and even thinking 10 GHz might be possible.KayKay - Monday, May 1, 2006 - link

Someone should hire you to write textbooks because this was explained extremely well and in simple terms. Good JobJohanAnandtech - Monday, May 1, 2006 - link

Thanks! :-)Very happy to read that.

BitByBit - Monday, May 1, 2006 - link

Fantastic article.In retrospect, it is easy to conclude that this is the route Intel should have chosen for P6's retirement.

Core looks to be a very strong, all-round performer, unlike Netburst.

We can only hope that AMD has an answer in the works, as K8 will have a hard time competing with this monster.

It is unreasonable in my mind to expect a 4-5(?) year-old architecture to be able to compete with Intel's latest. AMD with K8 has had a long reign as the performance king, but is now facing something entirely different. Perhaps K8L will be able to offer serious competition.

It will, however, take more than a doubling of the FP units (if rumour is correct) to achieve this. The cumulative effect of Conroe's architectural features (memory disambiguation, macro-ops fusion etc...) mean that Core's efficiency has far exceeded K8's, not to mention the impact of its vastly superior cache system - its 8-way 32kb * 2 L1 should in theory exceed the hitrate of K8's 2-way 64kb * 2 L1.

It may not be until K10 is released that AMD takes back the performance crown.

Larso - Monday, May 1, 2006 - link

As the K8 is about 5 years old, and the current incarnations doesn't really include that many modifications, I wonder what AMD's engineers have been doing all these years. The K8 is not that different from the K7 even.Whats coming up? The AM2 version is basically the same beast with a new memory controller. The K8L, well since they didn't name it K9, I suppose its just small upgrades to the same design.

I really like to think AMD has something coming we don't know about. Or rather, they ought to have something coming... Any rumors?

Reynod - Monday, May 1, 2006 - link

I can't help but think (and pray) that Larso's comment has some validity here.Why would AMD sit back and do nothing for so long? Would they have not been tinkering with various prototypes over the last couple of years? Are we in for a surprise?? Anand, you and the review team touched on several improvements they could make, care to outline these in some detail in a future article? Someone needs to give AMD some free advice ... heh heh

Spoonbender - Monday, May 1, 2006 - link

Keep in mind that AMD doesn't have Intel's resources. Until recently, they still lost money every quarter. So they might not have been able to work on a successor to K8 until recently. (I remeber reading an interview with some AMD boss, saying that the K8 was literally a last-ditch effort to survive. If that failed, there wouldn't be an AMD, so they threw everything they had at it)So "Why would AMD sit back and do nothing for so long?" Because they had a good project, and didn't have the resources to make a new one?

Of course, it probably isn't that bad, just tossing out an alternative scenario. ;)

However, they have hinted that they were working on specific architectures for the notebook and server markets. (Unlike Intel who are moving back to a single unified architecture).

And despite its age, the K8 is still a pretty nice architecture, and it wouldn't be a huge undertaking to improve on it to get something quite a bit more efficient. Intel had to develop a new architecture because NetBurst just wouldn't cut it. AMD can probably afford to expand on K8 a bit longer, and even with K9/K10, I wouldn't expect a vastly different architecture.

Spoonbender - Monday, May 1, 2006 - link

"Because they had a good project" <- Was supposed to be product, not project... :)psychobriggsy - Monday, May 1, 2006 - link

AMD said recently that they have three times the engineers on their books as they did when they designed K8.However I suspect they're working on K10/KX, although maybe some of them worked on K8L.

Clearly it seems that some in-core work could translate into reasonable performance gains for the current K8 design. A 4-way L1 cache instead of 2-way for example, and a greater L2 to L1 bandwidth. Certainly a mechanism to reorder instructions so that loads can be performed earlier seems to be necessary. 2MB L2 per core could also help, and the 65nm die pictures that AMD showed recently did seem to show far denser cache. K8L is rumoured to include more FP resources, but I don't know about any of the other stuff - but AMD will be talking more about K8L (and beyond?) in June apparently.