Smarter Prefetching and Caching

Making sure that the right instructions and data are ready for use in the caches in the "3 GHz and beyond" era is one of the most important tasks of the architectural engineer. This helps to ensure performance increases as clockspeeds get pushed higher; otherwise, higher CPU clockspeeds will simply result in the processor spending more time waiting for data. This technique of priming the caches is knows as Prefetching; however, the current hardware prefetching algorithms don't always lead to success. There are quite a few cases where they can actually lower performance, especially in bandwidth sensitive applications.The Core architecture prefetching is without any doubt superior to what can be found in the Athlon 64 and Pentium 4. There are no less than three prefetchers - two data, one instruction - in each core, plus two prefetchers for the L2-cache. With eight prefetchers active in one dual-core Core CPU, all those prefetchers could easily get in the way of the "demand" bandwidth - the bandwidth which is needed by the load operations of the running program. In order to avoid this bottleneck, the prefetch monitor of the Core CPUs always give priority to the demand bandwidth; the prefetchers will never steal too much bandwidth away from the running program.

There is more. The data prefetch needs to perform tag lookups (tags = index of cache) in the caches frequently. To avoid this resulting in higher latency for the "normal" (caused by the program running) tag lookups, the data prefetch uses the store port for the tag lookup. If you remember, loads happen about twice as often as stores. This means the store port is used only half as much as the load port and it make sense to use that port for tag lookup by the prefetchers. Note also that stores are not critical for system performance in most cases -- once the data is "written" the processor can go on about its business. The cache/memory subsystem is in charge of replicating the data down to main memory, and as long as this happens eventually, everything works fine.

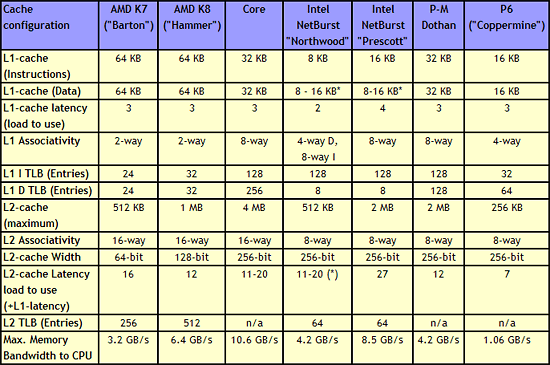

The cache system of the Core CPU is also very impressive. A massive 4 MB L2-cache is shared between the two cores and is accessed in only 12 to 14 cycles. Each core also has a 3 cycle 32 KB Instruction cache and a 32 KB data cache at its disposal. Note that the "Trace Cache" of NetBurst has been left behind with the return to shorter pipelines; the NetBurst Trace Cache basically functions as an instruction cache for pre-decoded instructions, and while this was apparently helpful for the long pipeline of NetBurst, Intel has apparently determined that a traditional L1 caching scheme makes more sense for Core.

Cache Architecture Overview

|

| Click to enlarge |

Just a quick look at the numbers in the above table make it clear that the memory subsystem of the Core architecture is impressive. It has twice as much L2 cache as current dual-core CPUs (the same amount as Presler), but the cache is still accessible with low latency. The shared L2 cache also allows one core to use more than 2MB of cache if necessary. Both L1 and L2 cache are accessed via a 256-bit wide bus, allowing the caches to deliver massive bandwidth to the core.

Core versus Hammer: Memory subsystem

Core's most important competitor, the Hammer ("K8") architecture, has two small but noteworthy advantages. The first is its bigger 2 x 64 KB L1 cache. This is only a small advantage as an 8-way 32 KB cache will have a hit rate close to that of a 2-way 64 KB cache.The second and more important advantage is the on die memory controller, which lowers the latency to the memory considerably. However, the lower clockspeeds of the Core CPUs (relative to NetBurst) and the faster FSB also lower latency significantly. With the numbers available to us now, we have reason to believe that the Athlon 64 X2's latency advantage will shrink to only 15 to 20%. For comparison, the memory subsystem of the Pentium 4 was almost twice as slow as the Athlon 64 (80-90 ns versus 45-50 ns).

However, those two small advantages are likely negated by all the other memory subsystem metrics. The Core CPUs have much bigger caches and much smarter prefetching than the competition. The Core architecture's L1 cache delivers about twice as much bandwidth (Measured by ScienceMark), while it's L2-cache is about 2.5 times faster than the Athlon 64/Opteron one.

87 Comments

View All Comments

thestain - Friday, May 5, 2006 - link

Larger Cache and an extra decoder were bound to help Conroe in the small and simple tesing done by most benchmarks.But, what about applications that are a bit larger than Conroe's cache size or those that are complex causing the simple decoders to not be able to be used that much while placing the single complex decoder on the Conroe into short supply?

Mike

IntelUser2000 - Friday, May 5, 2006 - link

LOL. You crack me up. Go see how much doubling of L2 caches help to increase performance. I guess the last 5 years of Netburst screwed people's mental abilities. Sure the caches will help Conroe, but if the CPU doesn't really need the extra cache, then it will be a waste. Kinda like how doubling L2 caches on Pentium D doesn't help a lot. Kinda like how doubling L2 caches on Athlon 64's don't help either. It's why Semprons excel.

About decoders. Guess you are still in the old ages where one of the reasons K7 was better than P6 was because it has the ability to decode complex instructions in all decoders. If you read about Conroe, more of the instructions that USED to go to the complex decoders can now go to the simple decoders.

And which benchmark would that be.

Guess there is gonna be a lot of AMD fanboys that are gonna cry when Conroe is shown.

stopkidding - Tuesday, May 2, 2006 - link

Did anyone notice that this comment thread is virtually free of the usual "Intel-this, AMD-that" comments that usually are seen on this site. The "fanbois" have nothing to bitch about as their little brains can't comprehend whats written in this article! :-)Reynod - Wednesday, May 3, 2006 - link

Which is a sigh of relief I must say. I can swallow hard facts and interpret code ... my 4400+ looks like going in as my new server box ... and my next gaming box looks like being an OC'd Conroe. I just won't buy an Intel Mobo ... heh heh.mino - Tuesday, May 2, 2006 - link

IMHO not, the article is extremely well written AND there are NO benchmarks => Intelman is happy from the text; AMD-man is hoping the real numbere won't be so bad...On topic, article is written in a very good style for general public.

On thing I'am afraid of is the moment code is optimized for Core, any other irchitecture would take a performance hit. K8 the smallest one, PM/K7 the small one, P4/P6 the big one and all older plus C7 e pretty huge hits.

That bothers me.

Except that, AMD will live for a long time (Opteron alone would survive them for 5+ yrs.) and X2's will be finally cheaper. What else to pray for :)

Best regards.

mino - Tuesday, May 2, 2006 - link

addennum:"the article is extremely well written FOR GENERAL AT AUDIENCE"

Otherwise job well done Johan.

nullpointerus - Tuesday, May 2, 2006 - link

Right, but then why do they respond to the other articles?JustAnAverageGuy - Tuesday, May 2, 2006 - link

Another top notch article, as always, Johan- JaAG

dguy6789 - Tuesday, May 2, 2006 - link

Thank you for writing this article. You have cleared up a large quantity of questions that I had in relation to the Core architecture.Betwon - Tuesday, May 2, 2006 - link

sub eax,[edi+ebx+79]There are 3 registers used: eax, edi, ebx

For Core duo, it decodes to one fusion-micro-op.

In the reservation station (RS), only one entry is needed to be allocated. There are three registers spaces in one RS entry at least. And the results of address(edi+ebx+79) can be w rited back into the same position of one register in this RS entry.(A replace method)

For K7/K8, it decodes to one macro-op?

In the reservation station (RS), only one entry is needed to be allocated? There are three registers spaces in one RS entry?

It can take one entry in ROB.

But I don't believe AMD. It may take two entrys in RS, because there are only two registers spaces in one RS entry of K8. K8 hasn't three registers spaces in one RS entry.

K8's RS is up to 8X3 macro-op, but not means that one macro-op can always take one entry in the RS.

I say that I don't believe AMD.

Of course, Other people have on need to believe me too.