ATI's New Leader in Graphics Performance: The Radeon X1900 Series

by Derek Wilson & Josh Venning on January 24, 2006 12:00 PM EST

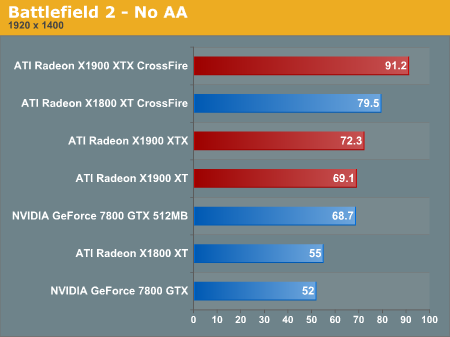

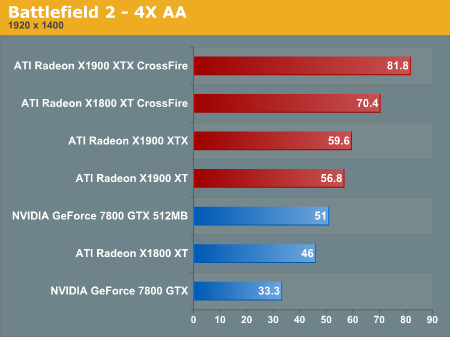

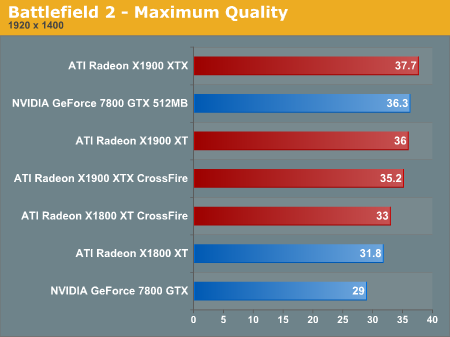

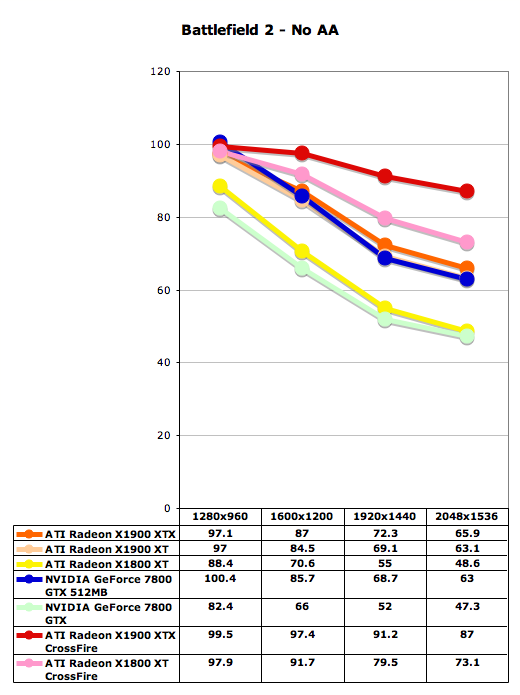

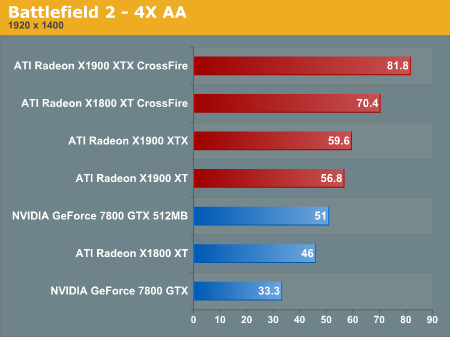

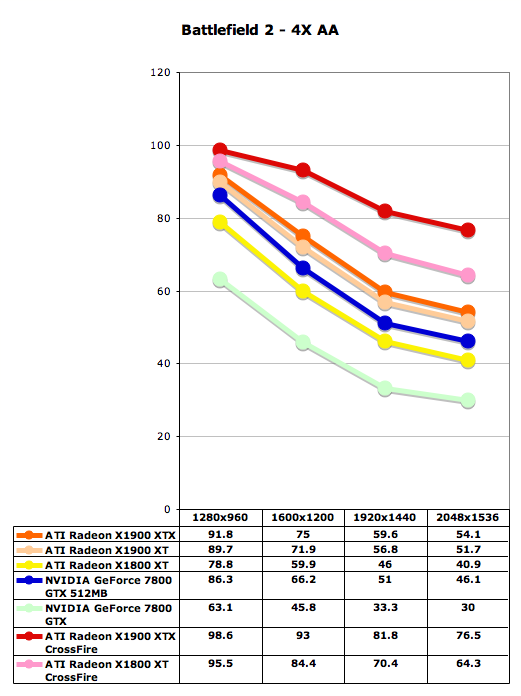

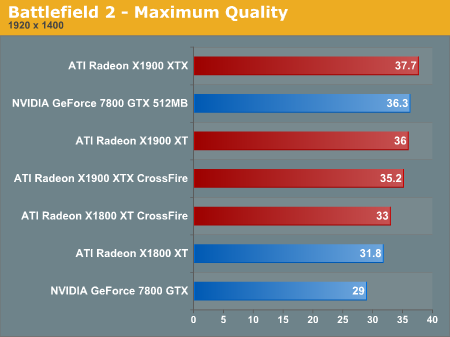

Battlefield 2 Performance

Battlefield 2 has been a standard benchmark here in the past, and it is probably one of our most important tests. Thanks to its impressive engine, it still stands out as one of the games that make the best use of the latest graphics hardware available right now.

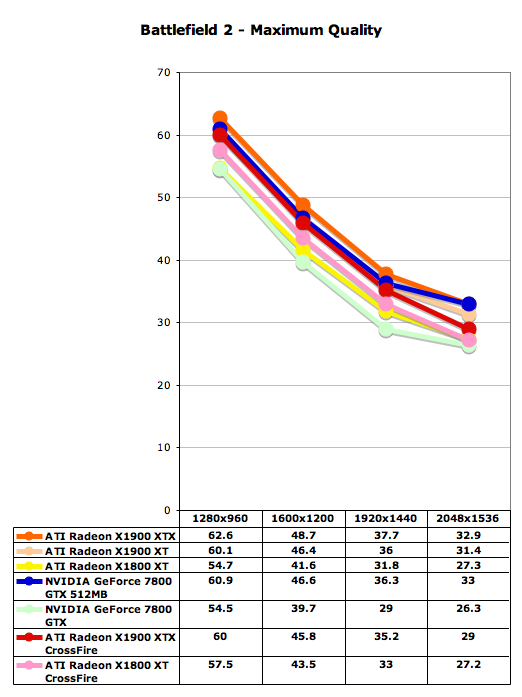

One of the first things to note here is a theme that runs through all of the performance tests in this review. In every test, the X1900 XTX and X1900 XT perform very similarly, and in some places they differ by only a couple of frames per second. This is significant considering that the X1900 XTX costs about $100 more than the X1900 XT.

Below we have two sets of graphs covering three different settings: no AA, 4xAA/8xAF, and maximum quality (higher AA and AF settings enabled in the driver). Note that our BF2 benchmark had problems with NVIDIA's SLI, so we were forced to omit those numbers. With and without AA, the ATI cards perform very similarly to one another, as do the NVIDIA cards. Generally, though, ATI tends to handle AA a little better than NVIDIA, so it holds a slight edge here. With the maximum quality settings, we see a large drop in performance, which is expected. Keep in mind that in the driver options NVIDIA can enable AA up to 8X, while ATI can only go up to 6X, so these numbers aren't directly comparable.


120 Comments


blahoink01 - Wednesday, January 25, 2006

Considering the average framerate on a 6800 Ultra at 1600x1200 is a little above 50 fps without AA, I'd say this is a perfectly relevant app to benchmark. I want to know what will run this game at 4x or 6x AA with 8x AF at 1600x1200 at 60+ fps. If you think WoW shouldn't be benchmarked, why use Far Cry, Quake 4 or Day of Defeat? At the very least, WoW has a much wider impact as far as customers go. I doubt the total sales for all three games listed above can equal the current number of WoW subscribers.

And your $3000 monitor comment is completely ridiculous. It isn't hard to get a 24-inch widescreen for 800 to 900 bucks. Also, finding a good CRT that can display more than 1600x1200 isn't hard, and that will run you $400 or so.

DerekWilson - Tuesday, January 24, 2006

we have looked at World of Warcraft in the past, and it is possible we may explore it again in the future.

Phiro - Tuesday, January 24, 2006

"The launch of the X1900 series no only puts ATI back on top, " should say:

"The launch of the X1900 series not only puts ATI back on top, "

GTMan - Tuesday, January 24, 2006

That's how Scotty would say it. Beam me up...

DerekWilson - Tuesday, January 24, 2006

thanks, fixed

DrDisconnect - Tuesday, January 24, 2006

It's amusing how the years have changed everyone's perception of what a reasonable price for a component is. Hard drives, memory, monitors and even CPUs have become so cheap that many have lost perspective on what being on the leading edge costs. I paid $750 for a 100 MB drive for my Amiga, $500 for a 4x CD-ROM, and remember spending $500 on a 720 x 400 Epson colour inkjet. (Yeah, I'm in my 50s.) As long as games continue to challenge the capabilities of video cards and the drive to increase performance continues, the top end will be expensive. Unlike other hardware (printers, memory, hard drives), there are still performance improvements to be made that the user will perceive. If someday a card can render so fast that all games play like reality, then video cards will become like hard drives are now.

finbarqs - Tuesday, January 24, 2006

Everyone gets this wrong! It uses 16 PIXEL-PIPELINES with 48 PIXEL SHADER PROCESSORS in it! The pipelines are STILL THE SAME as the X1800XT! 16!!!!!!!!!! Oh yeah, if you're wondering, in 3DMark 2005 it reached 11,100 on just a single X1900XTX...

DerekWilson - Tuesday, January 24, 2006

semantics -- we are saying the same things with different words. fill rate as the main focus of graphics performance is long dead. doing as much as possible at a time to as many pixels as possible at a time is the most important thing moving forward. sure, both the 1900xt and 1800xt will run glquake at the same speed, but the idea of the pixel (fragment) pipeline is tied more closely to lighting, texturing and coloring than to rasterization.

actually this would all be less ambiguous if opengl were more popular and we had always called pixel shaders fragment shaders ... but that's a whole other issue.

DragonReborn - Tuesday, January 24, 2006

I'd love to see how the noise output compares to the 7800 series...

slatr - Tuesday, January 24, 2006

How about some Lock On: Modern Air Combat tests? I know not everyone plays it, but it would be nice to have you guys run your tests with it, especially when we are shopping for $500-plus video cards.