Arm Unveils 2023 Mobile CPU Core Designs: Cortex-X4, A720, and A520 - the Armv9.2 Family

by Gavin Bonshor on May 28, 2023 8:30 PM EST

Arm Cortex-X4: Fastest Arm Core Ever Built (Again)

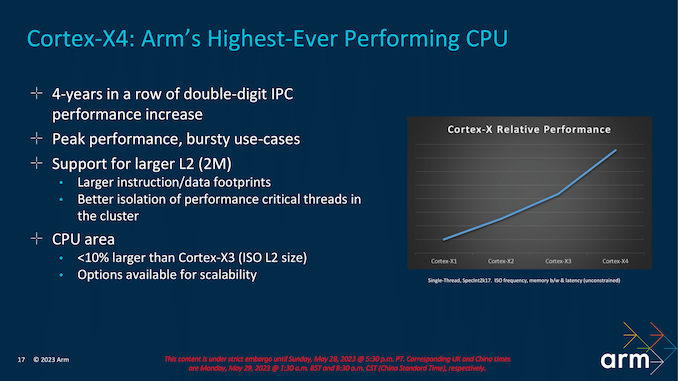

Diving further into Arm's new CPU core microarchitectures, we'll start with the Cortex-X4, which is the most substantial advancement of the three. Arm has delivered significant double-digit improvements in instructions per cycle (IPC) with each iteration, from the original Cortex-X1 through the Cortex-X2 and last year's Cortex-X3, and it is promising the same again for the Cortex-X4 in 2023. The Cortex-X4 is designed for cutting-edge flagship Android smartphones and other leading mobile devices built on Arm IP-based System-on-Chips (SoCs). Rather than a ground-up redesign, it is a refinement of the Cortex-X3 core, albeit an impactful one.

The Cortex-X4 is designed to deliver top-tier compute performance in mobile SoCs, particularly for demanding workloads like AAA gaming and bursty operations. It is Arm's highest-performing core to date, with an anticipated core clock speed of 3.4 GHz and an L2 cache per core that doubles from last year's 1 MB on the Cortex-X3 to 2 MB. Despite these enhancements, Arm has kept the growth in physical size minimal, with the more complex X4 CPU core coming in at under a 10% die size increase (excluding the additional L2 cache).

As for power efficiency, Arm claims a notable improvement in power savings of approximately 40% compared to previous generations. Don't expect to see too many CPU vendors take advantage of that, since the primary job of the X-series is to run fast, but it goes to show what the X4 can accomplish in conjunction with the latest fab nodes.

Arm Cortex-X4: Front End Reshuffle, Redesigning Instruction Fetching

In terms of architecture, the Cortex-X4 exhibits similarities to its predecessor, the Cortex-X3, with the primary focus being on refining the existing architecture and optimizing efficiency across various core components.

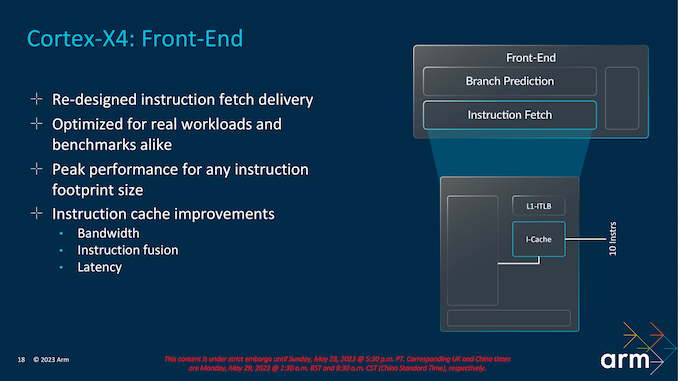

Now, while things haven't changed all too much architecturally from the Cortex-X3 to the Cortex-X4, the Cortex-X4 front end has had a reshuffle and a tweak of the instruction fetching block. Arm's aim has been to keep latencies low while offering peak bandwidth throughout the Cortex-X4 core and within the entire TCS23 core cluster.

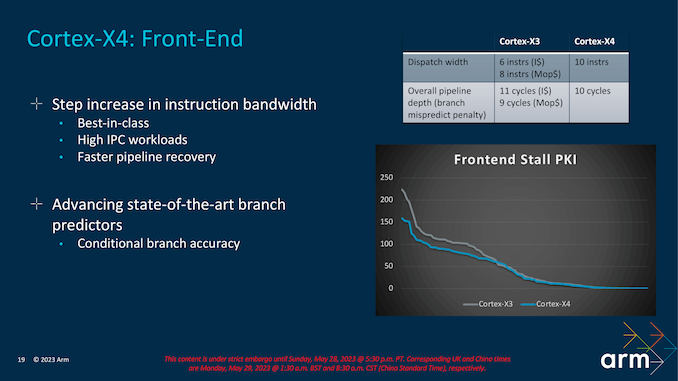

With regards to the Cortex-X4's front end, the big architectural change is its dispatch width. The Cortex-X4 now has a 10-wide dispatch, up from the 6/8-wide dispatch of the X3. That said, while the front end has gotten wider, the effective pipeline length has actually shrunk ever so slightly; the branch mispredict penalty is down from 11 cycles to 10.

The other big front-end focus has been on the instruction fetching process itself; Arm has essentially redesigned the entire instruction fetch delivery system to ensure better efficiency throughout the pipeline when compared to the Cortex-X3.

The latest architecture also takes another pass on improving Arm's branch prediction units, further improving their prediction accuracy. Arm isn't saying much about how they accomplished this, though we do know that they've targeted conditional branch accuracy in particular. None of this comes for free, though; Arm was quick to note that the improved predictors were more expensive to implement. Still, Arm believes this is worth it in keeping the beast (Cortex-X4) fed, so to speak.
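As a rough way to reason about the two front-end changes above, the cycles lost to branch mispredictions scale with both how often the predictor misses and how much each miss costs. The sketch below is a first-order model, not Arm's methodology: the function name and the rate of 5 mispredictions per 1,000 instructions are hypothetical, and only the 11-cycle and 10-cycle penalties come from the figures quoted above.

```python
def mispredict_cycles_per_kilo_instr(mpki: float, penalty_cycles: int) -> float:
    """Cycles lost to branch mispredictions per 1,000 instructions.

    mpki is mispredictions per 1,000 instructions (an assumed input);
    penalty_cycles is the pipeline refill cost of each misprediction.
    """
    return mpki * penalty_cycles


# Hypothetical workload: 5 mispredictions per 1,000 instructions.
ASSUMED_MPKI = 5.0

x3_cost = mispredict_cycles_per_kilo_instr(ASSUMED_MPKI, 11)  # X3 penalty
x4_cost = mispredict_cycles_per_kilo_instr(ASSUMED_MPKI, 10)  # X4 penalty

print(f"Cortex-X3: {x3_cost:.0f} cycles lost per 1,000 instructions")
print(f"Cortex-X4: {x4_cost:.0f} cycles lost per 1,000 instructions")
```

Any accuracy gain from the improved predictors would lower the MPKI term as well, so the shorter penalty and the better prediction compound rather than simply add.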

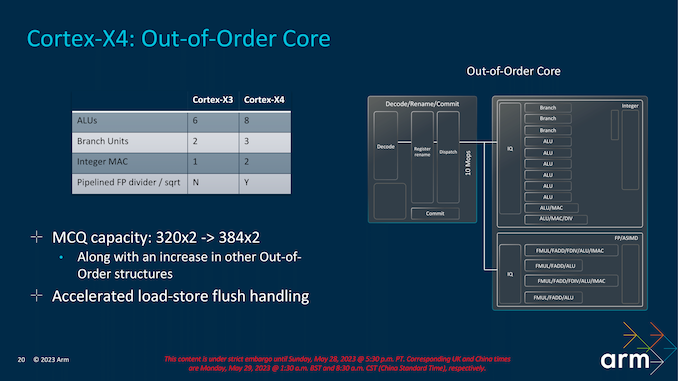

Shifting to the back end of the CPU core, Arm has focused on execution bandwidth. Among other changes, Arm has increased the number of ALUs from 6 to 8. Of these, six are simple ALUs for processing single-cycle µops, while two are complex ALUs for processing dual- and multi-cycle instructions. Arm has also squeezed in another branch unit, giving the Cortex-X4 a total of 3, up from 2, and added an extra integer MAC. Meanwhile, on the floating point side of matters, the Cortex-X4 upgrades to a pipelined FP divider.

So to some extent, the X4's performance improvements come from a brute-force increase in resources throughout the core, which is able to dispatch and retire more instructions in a single clock. The goal for the Cortex-X4 is to offer peak performance in both benchmarks and real-world workloads, along with more fetch bandwidth for instructions moving through the pipeline. Workloads with larger instruction footprints benefit in particular, through reduced latency and instruction fusion.

Increasing the Micro-op Commit Queue (MCQ) capacity – and thus the size of the window for instruction re-ordering – is another refinement in Arm's toolbox for Cortex-X4. As with previous increases in Arm's re-order buffers, the larger queue affords more opportunities to look for instruction re-ordering, to hide memory stalls and otherwise extract more opportunities for the rest of the CPU back-end to get some work done. And with CPU performance continuing to outpace memory bandwidth, the need for larger buffers only grows with each generation.
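To illustrate why a larger re-order window helps hide memory stalls, here is a deliberately simplified toy model. It is not a description of Arm's MCQ: it assumes every instruction is independent, in-order retirement, and a fixed latency per instruction, and the window sizes (64 and 256), the width of 8, and the 200-cycle miss latency are all hypothetical values chosen only to make the effect visible.

```python
from collections import deque


def simulate(latencies, window, width):
    """Return the cycles a toy out-of-order core needs to retire a stream.

    latencies: per-instruction completion latency in cycles (assumed >= 1).
    window:    max instructions in flight (stand-in for the re-order window).
    width:     max instructions dispatched and retired per cycle.
    """
    if window < 1 or width < 1:
        raise ValueError("window and width must be at least 1")

    in_flight = deque()  # completion cycle of each instruction, program order
    next_idx = 0
    cycle = 0
    while next_idx < len(latencies) or in_flight:
        # Retire in program order: only finished instructions at the head.
        retired = 0
        while in_flight and retired < width and in_flight[0] <= cycle:
            in_flight.popleft()
            retired += 1
        # Dispatch new work while the window has free entries.
        dispatched = 0
        while (next_idx < len(latencies) and dispatched < width
               and len(in_flight) < window):
            in_flight.append(cycle + latencies[next_idx])
            next_idx += 1
            dispatched += 1
        cycle += 1
    return cycle


# Hypothetical stream: one 200-cycle cache miss followed by 49 one-cycle ops,
# repeated 20 times.
stream = ([200] + [1] * 49) * 20
for window in (64, 256):
    print(f"window={window}: {simulate(stream, window=window, width=8)} cycles")
```

With the small window, the core fills up behind each miss and sits idle until it returns; the larger window lets several misses overlap, which is the effect the paragraph above describes.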

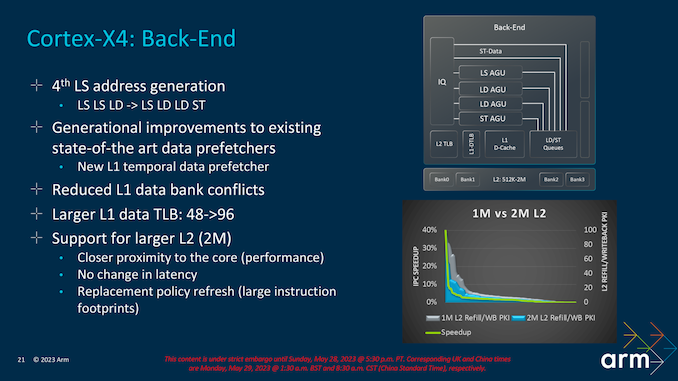

Finally, at the far back end of the X4 CPU core, Arm has added a fourth address generation unit. Interestingly, this one is just for stores; Arm already had a load-only unit, but opted for a store-only unit rather than converting it to a full mixed LS unit.

The L1 cache subsystem of the Cortex-X4 has also received a lot of work. The L1's translation lookaside buffer (TLB) has been doubled to 96 entries, and there's a new L1 temporal data prefetcher. Finally, Arm has taken steps to reduce the number of L1 data bank conflicts on the X4.
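To put the TLB change in perspective, a TLB's "reach" is the amount of memory it can map without missing: the number of entries multiplied by the page size. The sketch below assumes 4 KiB pages (a common configuration, though none is stated here) and takes "doubled to 96" to imply 48 entries previously; the function name is illustrative.

```python
def tlb_reach_kib(entries: int, page_size_kib: int = 4) -> int:
    """Memory covered by a fully populated TLB, in KiB."""
    return entries * page_size_kib


previous_reach = tlb_reach_kib(48)  # implied by "doubled to 96 entries"
current_reach = tlb_reach_kib(96)   # Cortex-X4 L1 TLB size quoted above

print(f"48 entries x 4 KiB pages = {previous_reach} KiB")
print(f"96 entries x 4 KiB pages = {current_reach} KiB")
```

Larger page sizes raise the reach proportionally, so the real-world benefit depends on how the operating system is configured.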

There have also been some changes made to better support the larger L2 cache size of the Cortex-X4 that we previously discussed. The L2 has been moved physically closer to the CPU core for performance reasons, and Arm has been able to expand the L2 size without any resulting increase in latency. So there is less of a trade-off here than is often the case when increasing cache sizes.

Cortex-X4: IPC Uplift, Scalable up to 14-Cores, Up to 32 MB L3 Cache

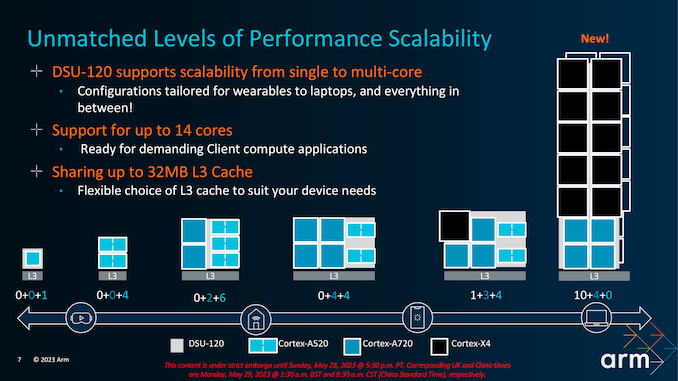

One of the primary benefits of Arm's v9.2 architecture shift is increased scalability. The TCS23 core cluster now supports up to 14 cores, which gives SoC vendors more flexibility in their latest designs. Perhaps one of the biggest changes is support for up to 32 MB of shared L3 cache within the cluster. How much L3 cache is actually implemented is of course down to the SoC manufacturer, but the 32 MB ceiling improves support for higher-end mobile devices such as tablets and notebooks, where applicable.

The TCS23 core cluster tops out at 14 cores in total, in a mixture of big and little cores, giving SoC vendors multiple avenues to explore when balancing performance gains against efficiency. All of this flexibility lets SoC vendors design their own variations depending on the class of device. So a flagship mobile device will leverage different combinations of Cortex-X4, Cortex-A720, and Cortex-A520 depending on factors such as cost, power budget, and expected performance levels.
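The cluster limits described above (14 cores, 32 MB of shared L3) can be expressed as a small configuration check. This is an illustrative sketch only: the class, its fields, and the example core mixes are hypothetical and do not correspond to any Arm tool or announced SoC.

```python
from dataclasses import dataclass

MAX_CORES = 14  # cluster core limit quoted above
MAX_L3_MB = 32  # shared L3 limit quoted above


@dataclass(frozen=True)
class ClusterConfig:
    """Hypothetical description of one core cluster."""
    x4: int
    a720: int
    a520: int
    l3_mb: int

    @property
    def total_cores(self) -> int:
        return self.x4 + self.a720 + self.a520

    def problems(self) -> list:
        """Return human-readable violations; an empty list means valid."""
        issues = []
        if min(self.x4, self.a720, self.a520) < 0:
            issues.append("core counts must be non-negative")
        if self.total_cores > MAX_CORES:
            issues.append(f"{self.total_cores} cores exceeds {MAX_CORES}")
        if self.l3_mb > MAX_L3_MB:
            issues.append(f"{self.l3_mb} MB L3 exceeds {MAX_L3_MB} MB")
        return issues


# Hypothetical examples: a phone-style mix, a laptop-style mix, an invalid one.
phone = ClusterConfig(x4=1, a720=5, a520=2, l3_mb=8)
laptop = ClusterConfig(x4=10, a720=4, a520=0, l3_mb=32)
too_big = ClusterConfig(x4=12, a720=4, a520=0, l3_mb=64)

for name, cfg in (("phone", phone), ("laptop", laptop), ("too_big", too_big)):
    print(name, cfg.total_cores, cfg.problems() or "ok")
```

Returning a list of problems rather than raising on the first one makes it easy to report every limit a proposed configuration breaks at once.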

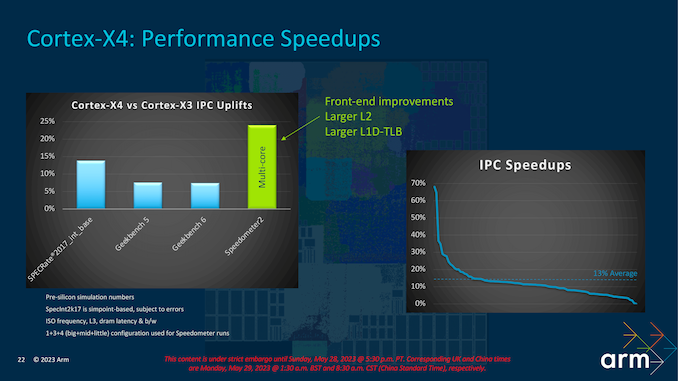

A bigger core and optimizations of existing processes typically come with a performance benefit. Arm is claiming that, based on its pre-silicon simulation numbers, the Cortex-X4 will deliver a 15% IPC uplift at iso-frequency and iso-bandwidth versus the Cortex-X3 used in last year's flagship Android SoCs. There are a number of factors at play here in delivering that total performance improvement, including front-end optimizations and improvements, as well as a larger per-core L2 cache of 2 MB and a larger L1D-TLB, which is a cache of recently used page translations.
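Since the 15% figure is quoted at iso-frequency, it can be combined with clock speed in a first-order estimate: performance scales roughly with IPC multiplied by frequency. The sketch below makes that arithmetic explicit. It ignores memory and thermal limits, the function name is illustrative, and the 3.2 GHz baseline clock is a hypothetical value rather than a quoted Cortex-X3 specification.

```python
def relative_performance(ipc_uplift: float, new_ghz: float, old_ghz: float) -> float:
    """First-order speedup: (1 + IPC uplift) x (clock ratio)."""
    return (1.0 + ipc_uplift) * (new_ghz / old_ghz)


# Iso-frequency case: only the claimed 15% IPC uplift contributes.
iso_frequency = relative_performance(0.15, new_ghz=3.4, old_ghz=3.4)

# Hypothetical case: the previous core ran at 3.2 GHz (assumed, not quoted).
with_clock_gain = relative_performance(0.15, new_ghz=3.4, old_ghz=3.2)

print(f"Iso-frequency speedup:   {iso_frequency:.3f}x")
print(f"With assumed clock bump: {with_clock_gain:.3f}x")
```

Real devices will land below such estimates whenever a workload is limited by memory bandwidth or sustained power rather than by the core itself.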

52 Comments

Silver5urfer - Monday, May 29, 2023 - link

It is not related to the UI, it is related to the worst practices in ARM, Apple. Disposable goods, non compute focused, rather a simplistic tool for the Technological dependency rather than using it like a computer and most importantly, owning your own data in the case of an ARM powered smartphone - Filesystem, Applications control Etc. None of these are present in iOS. And they are now incorporated into the Android heavily from the UI, Design philosophy, Technology.

Axing 32Bit OS / Applications and forcing everyone to be on the Playstore mandated policies gives an edge to Android on axing the power user features, i.e targeting latest OS SDK means you are restricted heavily to an OS and its jail. Also they are hiding applications now on Playstore. That means old apps are now hard to find, and good apps do not work on latest OS (Timey app for eg), and lot of examples. Plus now modern Android blocks you even on Sideload notifying the SDK target version in normal terms such as this app won't work properly because Android 14 and up do not allow Android 6 below apps.

Windows enjoys the superior user retention and proper computing because of it's legacy support, A Windows 3.1 .exe will work on Windows 10. But on Apple it's all outdated and even hardware, any x86 processor from Core2Quad which lacks SSE4 and AVX2 still runs modern games which utilize these features but can be made to work because of the power of x86 and Windows. That's how a superior computer is born but not guardrails and heavy restriction and placing consumer in the dark in the name of technology BS.

Eliadbu - Monday, May 29, 2023 - link

Legacy support is overrated for vast majority of the user base, even on windows. its also a thing that can be achieved with emulation for the niche use cases. Most of the argument you gave had little to non to do with 32 bit support. This legacy support costs in silicon space, complexity and software upkeep - all of those resources can be used for actually useful things that will benefit most users.

TheinsanegamerN - Wednesday, May 31, 2023 - link

LMAO legacy support is the only reason windows still exists.

iAPX - Monday, May 29, 2023 - link

Intel is thinking about being 64bit-only too, with the X86S project. This is an interesting way, as 16bit and 32bit compatibility could be offered through software emulation in a VM (their proposal), naturally with impact on performances.

Silver5urfer - Monday, May 29, 2023 - link

I hope that project doesn't fly but looking at modern Intel with their ARM clone of P+E to worst now P+E+LPE cores they may break the whole 32Bit Application world. Only HPC market can stop it but looking at how Windows 10 is now being retired by 2030 max (LTSC 1809 maximum lifecycle) add maybe ESU channel like Win7 to 2033 at best, after that I think Windows will also copy Apple hard they are already doing it hardcore as Windows 11 is the Win10S branched out because those internal designers are cultists of the Applesque systems lock down and uber simplification of power user nature, this makes entire generations of young population being dumbed down by the basic structure of the OS + Technology rather than innovative and explorative thinking process of the older era (XP, 7 etc)

Windows 10 is the last Microsoft OS that has real support of all the older Windows applications, 11 discarded a lot of Shell32 / Win32 systems and ruined the NTKernel in the process and the CPU schedulers. They sabotaged the entire explorer.exe too, and with the modern fad AI introduction into the OS the telemetry will explode into exponential factor and with the complete dumbing down of the OS and the process, Atomization of the human thinking will lead to regressive computation. Really unfortunate.

Emulation means there will be a performance penalty.

stephenbrooks - Monday, May 29, 2023 - link

I'm interested by the ARM laptop direction (the 10 X4 plus 4 mid-core design). That could run a full OS like Windows or Linux.

eastcoast_pete - Monday, May 29, 2023 - link

At least Gavin addressed the mini-elephant in the room for the small cores (thanks!): still no out-of-order design. Instead, an ALU is removed "for greater efficiency". By now, I am suspecting that ARM and Apple have some kind of understanding that ARM little cores won't, under any circumstance, be allowed to come anywhere close to challenging Apple's efficiency cores in Perf/W. Apple's efficiency cores have about twice the IPC of the little ARM cores and all at about the same power draw. Which made the impossible come true: I am now rooting for Qualcomm to kick ARM's butt, both in court and in SoCs.

name99 - Monday, May 29, 2023 - link

Oh FFS, always the conspiracy theories! It’s really much simpler — Apple’s small cores are much larger than ARM’s small cores. ARM seems to be thinking that their mid cores (A720) can play the role of Apple’s small cores, and that may be to some extent true in that Apple can split work between big and small in a way that Android cannot, given that Apple knows much more about what each app is doing (better API for specifying this, and much more control of the OS and drivers).

Much more interesting is how this is all about essentially catching up to Apple’s M series. Which is fine, but if you look at what Apple is doing, the action is all at the high end. I’ve said it before and will say it again; Apple has IBM-level performance in its sights. The most active work over the past three years is all about “scalability” in all its forms, how to design and connect together multiple Max class devices to various ends. The next year or two will be wild at the Apple high end!

Kangal - Monday, May 29, 2023 - link

Thank you! However, I still welcome the development of a smaller and slower ARM core, if it means small power draw and small silicon area. There is a market for that outside of phones; in embedded devices, watches, wearables, and ultra low power gadgets.

We used to have something like Cortex-A7 (tiny), Cortex-A9 (small), Cortex-A17 (medium). Then we had Cortex-A35 (tiny), Cortex-A53 (small), Cortex-A73 (medium). But we never got a successor for the Cortex-A35, so perhaps a very undervolted Cortex-A520 will work. Just like how ARM justified using an overclocked Cortex-A515 as a legitimate successor to the Cortex-A53 range.

Almost all the attention goes to the (Medium) cores. It's their bread and butter. From the development of (2016) Cortex-A73, A75, A76, A77, A78, A710, A720 (2023).

But as you said, the exciting things are happening at the high-end (LARGE) cores. It's started with the creation of a new category in the X1, X2, X3, X4 designs. They seem unfit in phones, okay in tablets, and necessary for ARMbooks. Even then, their performance is somewhere far from Apple's M1/M2/M3 and unfit to tackle AMD Zen3 / Intel 11th-gen x86 cores. Let alone their newest variants.

back2future - Tuesday, May 30, 2023 - link

"Even then, their performance is somewhere far from Apple's M1/M2/M3 and unfit to tackle AMD Zen3 / Intel 11th-gen x86 cores."without sufficient support for desktop OS on desktop performance CPUs it reduces possibilities to binary translation/multiarch binaries and ISA specific OS from vendors, but not on ARM generally having a free choice for Windows/Linux/Unix variants that suit individual needs (work&development/media/gaming)