AMD Zen 4 Ryzen 9 7950X and Ryzen 5 7600X Review: Retaking The High-End

by Ryan Smith & Gavin Bonshor on September 26, 2022 9:00 AM ESTZen 4 Execution Pipeline: Familiar Pipes With More Caching

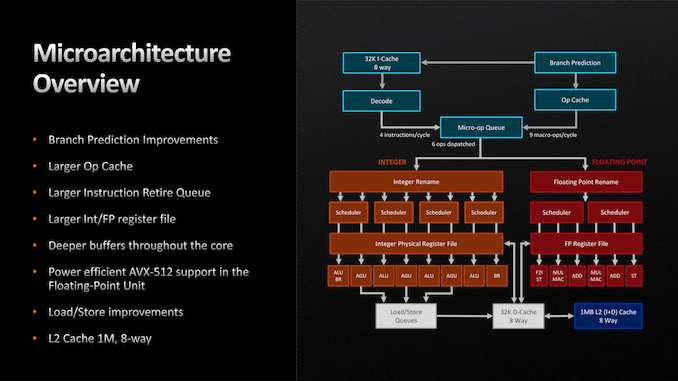

Finally, let’s take a look at the Zen 4 microarchitecture’s execution flow in-depth. As we noted before, AMD is seeing a 13% IPC improvement over Zen 3. So how did they do it?

Throughout the Zen 4 architecture, there is not any single radical change. Zen 4 does make a few notable changes, but the basics of the instruction flow are unchanged, especially on the back-end execution pipelines. Rather, many (if not most) of the IPC improvements in Zen 4 come from improving cache and buffer sizes in some respect.

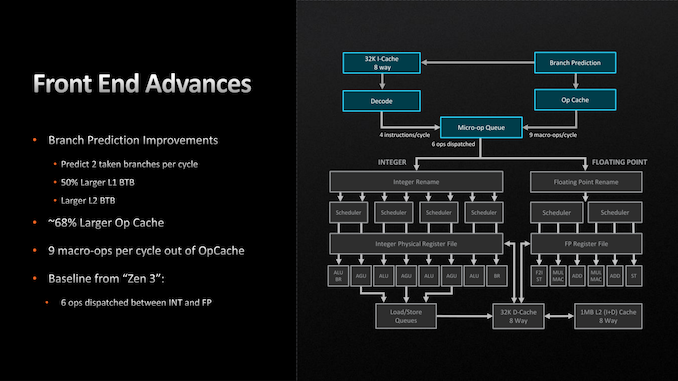

Starting with the front end, AMD has made a few important improvements here. The branch predictor, a common target for improvements given the payoffs of correct predictions, has been further iterated upon for Zen 4. While still predicting 2 branches per cycle (the same as Zen 3), AMD has increased the L1 Branch Target Buffer (BTB) cache size by 50%, to 2 x 1.5k entries. And similarly, the L2 BTB has been increased to 2 x 7k entries (though this is just an ~8% capacity increase). The net result being that the branch predictor’s accuracy is improved by being able to look over a longer history of branch targets.

Meanwhile the branch predictor’s op cache has been more significantly improved. The op cache is not only 68% larger than before (now storing 6.75k ops), but it can now spit out up to 9 macro-ops per cycle, up from 6 on Zen 3. So in scenarios where the branch predictor is doing especially well at its job and the micro-op queue can consume additional instructions, it’s possible to get up to 50% more ops out of the op cache. Besides the performance improvement, this has a positive benefit to power efficiency since tapping cached ops requires a lot less power than decoding new ones.

With that said, the output of the micro-op queue itself has not changed. The final stage of the front-end can still only spit out 6 micro-ops per clock, so the improved op cache transfer rate is only particularly useful in scenarios where the micro-op queue would otherwise be running low on ops to dispatch.

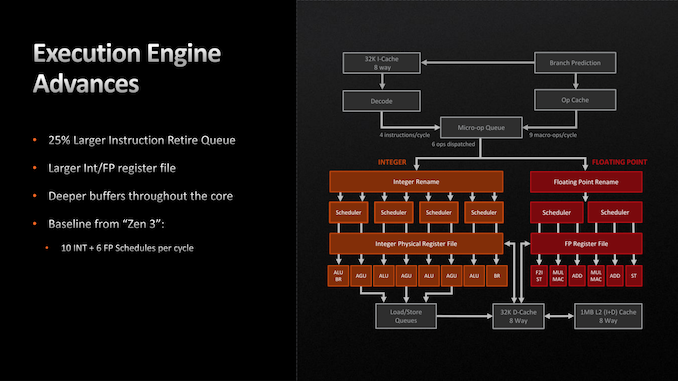

Switching to the back-end of the Zen 4 execution pipeline, things are once again relatively unchanged. There are no pipeline or port changes to speak of; Zen 4 still can (only) schedule up to 10 Integer and 6 Floating Point operations per clock. Similarly, the fundamental floating point op latency rates remain unchanged as 3 cycles for FADD and FMUL, and 4 cycles for FMA.

Instead, AMD’s improvements to the back-end of Zen 4 have here too focused on larger caches and buffers. Of note, the retire queue/reorder buffer is 25% larger, and is now 320 instructions deep, giving the CPU a wider window of instructions to look through to extract performance via out-of-order execution. Similarly, the Integer and FP register files have been increased in size by about 20% each, to 224 registers and 192 registers respectively, in order to accommodate the larger number of instructions that are now in flight.

The only other notable change here is AVX-512 support, which we touched upon earlier. AVX execution takes place in AMD’s floating point ports, and as such, those have been beefed up to support the new instructions.

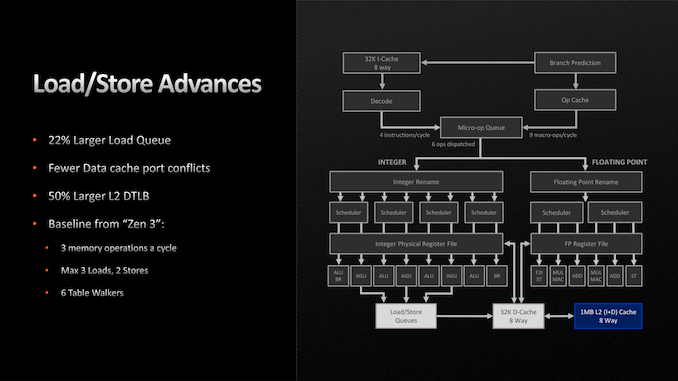

Moving on, the load/store units within each CPU core have also been given a buffer enlargement. The load queue is 22% deeper, now storing 88 loads. And according to AMD, they’ve made some unspecified changes to reduce port conflicts with their L1 data cache. Otherwise the load/store throughput remains unchanged at 3 loads and 2 stores per cycle.

Finally, let’s talk about AMD’s L2 cache. As previously disclosed by the company, the Zen 4 architecture is doubling the size of the L2 cache on each CPU core, taking it from 512KB to a full 1MB. As with AMD’s lower-level buffer improvements, the larger L2 cache is designed to further improve performance/IPC by keeping more relevant data closer to the CPU cores, as opposed to ending up in the L3 cache, or worse, main memory. Beyond that, the L3 cache remains unchanged at 32MB for an 8 core CCX, functioning as a victim cache for each CPU core’s L2 cache.

All told, we aren’t seeing very many major changes in the Zen 4 execution pipeline, and that’s okay. Increasing cache and buffer sizes is another tried and true way to improve the performance of an architecture by keeping an existing design filled and working more often, and that’s what AMD has opted to do for Zen 4. Especially coming in conjunction with the jump from TSMC 7nm to 5nm and the resulting increase in transistor budget, this is good way to put those additional transistors to good use while AMD works on a more significant overhaul to the Zen architecture for Zen 5.

205 Comments

View All Comments

linuxgeex - Monday, September 26, 2022 - link

All Microsoft customers are QA testers, lol. That's always how it's been. ReplyKangal - Tuesday, September 27, 2022 - link

Isn't that what goes for Linux?The only difference is that you don't pay money, you just pay in time, effort, frustration, and your soul.

Reply

Hifihedgehog - Tuesday, September 27, 2022 - link

Exactly. And you compile your own kernel for 24 hours hoping it will finish successfully. Replyat_clucks - Wednesday, October 19, 2022 - link

Not if you use the latest Ryzen 9 7950X. You may still pray it's successful at the end but God will answer a lot faster :). Replyelforeign - Monday, September 26, 2022 - link

Ah yes, the capitalistic adage of less is more. I'm sorry you guys have to deal with this, as with anyone in the workforce, where the powers that be sit on their ass with their cushy millions and say workers can do less with more and pile on with disregard.On a further note, I have been coming to Anandtech since the mid 00's. While I can understand the expectation surrounding good grammar and flawless articles, some issues are bound to come up now and then. The vitriol you guys receive for some simple grammar or syntax mistake is crazy. Reply

rarson - Wednesday, September 28, 2022 - link

"Ah yes, the capitalistic adage of less is more."This is not a thing. Reply

herozeros - Monday, September 26, 2022 - link

Kind reply, thanks. Hope your week lets you catch up.No more copy editors?! I guess my blonde is all now truly grey . . . sigh Reply

Threska - Monday, September 26, 2022 - link

Outsourced to AI. Replyemn13 - Monday, September 26, 2022 - link

I for one thoroughly enjoyed your article, and appreciate the technical content - a few editing nits don't detract from that.And hey, if I were to whine about embarrassing editing mistakes, rather than focusing on a long article written in limited time due to AMD's schedule, I'd poke fun at the 100 000 000 000 $ company's press slides touting their EXPO tech's openness in the form of public "doucments". 😀 Reply

linuxgeex - Monday, September 26, 2022 - link

So long as you're open to community feedback to correct hasty errors, there's no need for copy editors, and you can push your articles faster, which we'll all appreciate. Saying thanks is much more productive than making excuses. It shows that you appreciate your community. Reply