NVIDIA's GeForce 7800 GTX Hits The Ground Running

by Derek Wilson on June 22, 2005 9:00 AM EST. Posted in GPUs

The Pipeline Overview

First, let us take a second to run through NVIDIA's architecture in general. DirectX or OpenGL commands and HLSL and GLSL shaders are translated and compiled for the architecture. Commands and data are sent to the hardware, where we go from numbers, instructions and artwork to a rendered frame. The first major stop along the way is the vertex engine, where geometry is processed. Vertices can be manipulated using math and texture data, and the output of the vertex pipelines is passed on down the line to the fragment (or pixel) engine. Here, every pixel on the screen is processed based on input from the vertex engine. After the pixels have been processed for all the geometry, the final scene must be assembled based on the color and z data generated for each pixel. Anti-aliasing and blending are done into the framebuffer for final render output in what NVIDIA calls the render output pipeline (ROP). Now that we have a general overview, let's take a look at the G70 itself.
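As a rough illustration of the three stages described above, here is a minimal Python sketch of a toy pipeline. It is not how the hardware or any driver is implemented: the function names, the `Fragment` type, and the simplification of emitting one fragment per vertex (no triangle rasterization, no shading math) are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Fragment:
    x: int
    y: int
    z: float
    color: tuple  # (r, g, b), hypothetical representation


def vertex_stage(vertices, offset):
    """Toy vertex engine: translate each (x, y, z) vertex by an offset."""
    ox, oy, oz = offset
    return [(x + ox, y + oy, z + oz) for (x, y, z) in vertices]


def fragment_stage(vertices, color):
    """Toy fragment engine: emit one colored fragment per vertex.

    Real hardware rasterizes triangles into many fragments; that step is
    skipped here to keep the sketch short.
    """
    return [Fragment(int(x), int(y), z, color) for (x, y, z) in vertices]


def rop_stage(fragments, width, height):
    """Toy ROP: depth-test each fragment, then write its color to the framebuffer."""
    color_buf = [[None] * width for _ in range(height)]
    depth_buf = [[float("inf")] * width for _ in range(height)]
    for f in fragments:
        on_screen = 0 <= f.x < width and 0 <= f.y < height
        if on_screen and f.z < depth_buf[f.y][f.x]:
            depth_buf[f.y][f.x] = f.z  # nearer fragment wins
            color_buf[f.y][f.x] = f.color
    return color_buf


if __name__ == "__main__":
    red, blue = (255, 0, 0), (0, 0, 255)
    far = fragment_stage(vertex_stage([(0, 0, 0.5)], (1, 1, 0.0)), red)
    near = fragment_stage([(1, 1, 0.2)], blue)
    print(rop_stage(far + near, 2, 2))
```

The only point of the sketch is the ordering: geometry is transformed first, fragments carry color and z, and the final stage resolves visibility per pixel from that z data.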

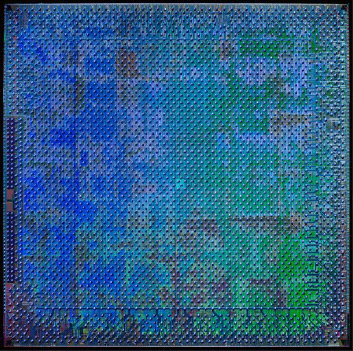

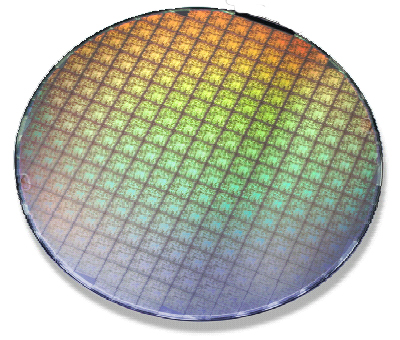

The G70 GPU is quite a large IC. At 302 million transistors, we would certainly hope that NVIDIA packed enough power into the chip to match its size. The 110nm TSMC process will certainly help with die size, but that is still quite a few transistors. The actual die area is only slightly greater than that of NV4x. In fact, NVIDIA is able to fit the same number of ICs on a single wafer.

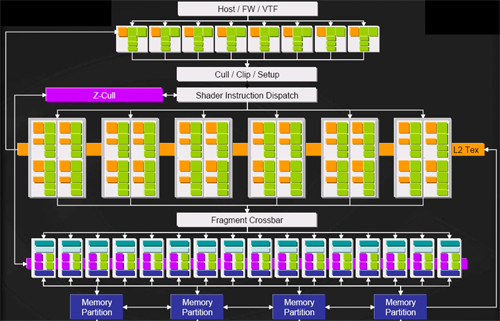

A glance at a block diagram of the hardware gives us a first look at the methods by which NVIDIA increased performance this time around.

The first thing to notice is that we now have 8 (up from 6) vertex pipelines. We still aren't vertex-processing limited (except in the workstation market), but this 33% upgrade in vertex power will help to keep the extra pixel pipelines fed as well as handle any added vertex load developers try to throw at games in the near future. There are plenty of beautiful things that can be done with vertex shaders that we aren't yet seeing in games, like parallax and relief mapping, as well as extended use of geometry instancing and vertex texturing.

Moving on to pixel pipelines, we see a 50% increase in the number of pipelines packed under the hood. Each of the 24 pixel pipes is also more powerful than those of NV4x. We will cover just why that is a little later on. For now though, it is interesting to note that we do not see an increase in the 16 ROPs. These pipelines take the output of the fragment crossbar (which aggregates all of the pixel shader output) and finalize the rendering process. It is here that MSAA is performed, as well as the color and z/stencil operations. Not matching the number of ROPs to the number of pixel pipelines indicates that NVIDIA feels its fill rate and ability to handle current and near-future resolutions is not an issue that needs to be addressed in this incarnation of the GeForce. As NVIDIA's UltraShadow II technology is driven by the hardware's ability to handle twice as many z operations per clock when a z-only pass is performed, this also means that we won't see improved performance in this area.
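The unit counts quoted so far can be checked with a few lines of arithmetic. The sketch below uses the figures from the text (6 to 8 vertex pipelines, 24 pixel pipes, 16 ROPs on both parts). The NV4x pixel pipe count of 16 is inferred from the stated 50% increase, and the dictionary layout and function names are purely illustrative.

```python
def percent_increase(old: int, new: int) -> float:
    """Relative growth from old to new, in percent."""
    return (new - old) / old * 100.0


# Figures from the article; NV4x pixel_pipes is inferred from "50% increase" to 24.
NV4X = {"vertex_pipes": 6, "pixel_pipes": 16, "rops": 16}
G70 = {"vertex_pipes": 8, "pixel_pipes": 24, "rops": 16}


def compare(old: dict, new: dict) -> dict:
    """Percent increase for every unit type present in both chips."""
    return {unit: percent_increase(old[unit], new[unit]) for unit in old}


def pipes_per_rop(chip: dict) -> float:
    """Ratio of pixel pipelines to ROPs."""
    return chip["pixel_pipes"] / chip["rops"]


if __name__ == "__main__":
    for unit, pct in compare(NV4X, G70).items():
        print(f"{unit}: {pct:.0f}% increase")
    print("pixel pipes per ROP:", pipes_per_rop(NV4X), "->", pipes_per_rop(G70))
```

Under these numbers the pixel-pipe-to-ROP ratio moves from 1:1 to 1.5:1, which is the imbalance the paragraph above is describing.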

If NVIDIA is correct in their guess (and we see no reason they should be wrong), we will see increasing amounts of processing being done per pixel in future titles. This means that each pixel will spend more time in the pixel pipeline. In order to keep the ROPs busy in light of a decreased output flow from a single pixel pipe, the ratio of pixel pipes to ROPs can be increased. This is in accord with the situation we've already described.
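The reasoning here can be made concrete with a deliberately simple throughput model. It assumes, purely for illustration, that each ROP can retire one pixel per clock and that each pixel pipe finishes one pixel every `cycles_per_pixel` clocks. Neither figure comes from NVIDIA, and real hardware is far less uniform than this.

```python
import math


def shader_output_per_clock(pixel_pipes: int, cycles_per_pixel: float) -> float:
    """Pixels per clock leaving the pixel pipes (assumed uniform shader cost)."""
    if cycles_per_pixel <= 0:
        raise ValueError("cycles_per_pixel must be positive")
    return pixel_pipes / cycles_per_pixel


def rop_utilization(pixel_pipes: int, rops: int, cycles_per_pixel: float) -> float:
    """Fraction of ROP capacity used, assuming one pixel per ROP per clock."""
    supplied = shader_output_per_clock(pixel_pipes, cycles_per_pixel)
    return min(1.0, supplied / rops)


def pipes_needed_to_saturate(rops: int, cycles_per_pixel: float) -> int:
    """Smallest pipe count that keeps every ROP busy under this model."""
    return math.ceil(rops * cycles_per_pixel)


if __name__ == "__main__":
    # Hypothetical shader cost of 1.5 clocks per pixel.
    print(rop_utilization(16, 16, 1.5))       # 16 pipes feeding 16 ROPs
    print(rop_utilization(24, 16, 1.5))       # 24 pipes feeding 16 ROPs
    print(pipes_needed_to_saturate(16, 1.5))
```

In this model, once shading takes 1.5 clocks per pixel, 16 pipes leave a third of the ROP capacity idle, while 24 pipes keep all 16 ROPs fed. Longer shaders push the balanced ratio higher still.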

The number of ROPs will need to be driven higher as common resolutions increase, although this can also be mitigated by increases in frequency. We will also need more ROPs once the number of pixel pipelines grows to the point where they are able to saturate the fragment crossbar in spite of the increased time a pixel spends being shaded.
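To see how ROP count, clock frequency and resolution trade off, here is a back-of-the-envelope sketch. It assumes one pixel written per ROP per clock and ignores anti-aliasing samples, blending cost and memory bandwidth. The 400 MHz clock, the 60 fps target and the overdraw factor are placeholder values chosen for the example, not specifications of any card.

```python
def peak_fill_rate(rops: int, clock_hz: float) -> float:
    """Peak pixels per second, assuming one pixel per ROP per clock."""
    return rops * clock_hz


def required_fill_rate(width: int, height: int, fps: float, overdraw: float = 1.0) -> float:
    """Pixels per second needed to draw every screen pixel `overdraw` times per frame."""
    return width * height * fps * overdraw


def headroom(rops: int, clock_hz: float, width: int, height: int,
             fps: float, overdraw: float = 1.0) -> float:
    """How many times over the peak rate covers the requirement."""
    return peak_fill_rate(rops, clock_hz) / required_fill_rate(width, height, fps, overdraw)


if __name__ == "__main__":
    clock = 400e6  # hypothetical core clock in Hz
    for w, h in [(1600, 1200), (2048, 1536)]:
        print(f"{w}x{h}: {headroom(16, clock, w, h, fps=60, overdraw=4.0):.1f}x headroom")
```

Because peak fill rate in this model is just ROPs times clock, doubling either one buys the same headroom, which is why a frequency bump can stand in for extra ROPs as resolutions climb.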

127 Comments

Diasper - Wednesday, June 22, 2005 - link

oops posted before i wrote anything. Some of the results are impressive, others aren't at all. In fact results seem to be all over the board - I suspect drivers are something of the culprit and are to be blamed. Hopefully, as new drivers come out we'll see some performance increases or at least a more uniform distribution of good results

Diasper - Wednesday, June 22, 2005 - link

Live - Wednesday, June 22, 2005 - link

Derek get cracking, the graphs are all messed up! And the Transparency AA Performance section could use some info on what game it is tested on and some more comments. I also think that each benchmark warrants some comments for all of us that have a hard time remembering two numbers at the same time. Keep it simple folks….

Johnmcl7 - Wednesday, June 22, 2005 - link

I agree something is wrong with these results, I thought they were odd but when I got to the Enemy Territory ones they seem completely wrong - at 2048x1536 and 4xAA the X850XT is apparently getting 104 fps, while the 6800 Ultra gets 48.3 and the SLI 6800 Ultras are only getting 34.6 fps! Especially bearing in mind it's an OpenGL game.

John

rimshot - Wednesday, June 22, 2005 - link

This has got to be an error by Anandtech, all other reviews show the 7800GTX in SLI at those same settings hammering the 6800Ultra in SLI.

Lonyo - Wednesday, June 22, 2005 - link

The benchmarks are all a load of crap it seems. Check the Wolfenstein benchmarks.

The X850XT goes from 74fps @ 1600x1200 w/4xAA to 103fps @ 2048x1536 w/4xAA

A 33% increase when the res gets turned up. Good one.

There also seem to be many other similar things which look like errors, but they could just be crappy nVidia drivers, or something wrong with SLI profiles.

Who knows, but there's definitely a lot of things which look VERY odd/suspicious here.

Dukemaster - Wednesday, June 22, 2005 - link

My Iiyama VMP 454 does 2048 no prob so i'm game :p

vanish - Wednesday, June 22, 2005 - link

oh and in several of the benchmarks, the 6800U SLI more than doubles the performance over the single 6800U. Is that normal? I thought SLI gains were generally about 45% or so.

rimshot - Wednesday, June 22, 2005 - link

Is it just me or is it a little strange that the 6800Ultra SLI outperforms the 7800GTX SLI at 1600x1200 with 4xAA in every benchmark ???

PrinceXizor - Wednesday, June 22, 2005 - link

No comment on the fact that in virtually every game it LOSES to the 6800 SLI at 1600x1200 at 4XAA. All other scores look very impressive. But, in this particular group of settings, the 6800 SLI eats it for lunch.

P-X