Apple's M1 Pro, M1 Max SoCs Investigated: New Performance and Efficiency Heights

by Andrei Frumusanu on October 25, 2021 9:00 AM EST

Posted in: Laptops, Apple, MacBook, Apple M1 Pro, Apple M1 Max

Power Behaviour: No Real TDP, but Wide Range

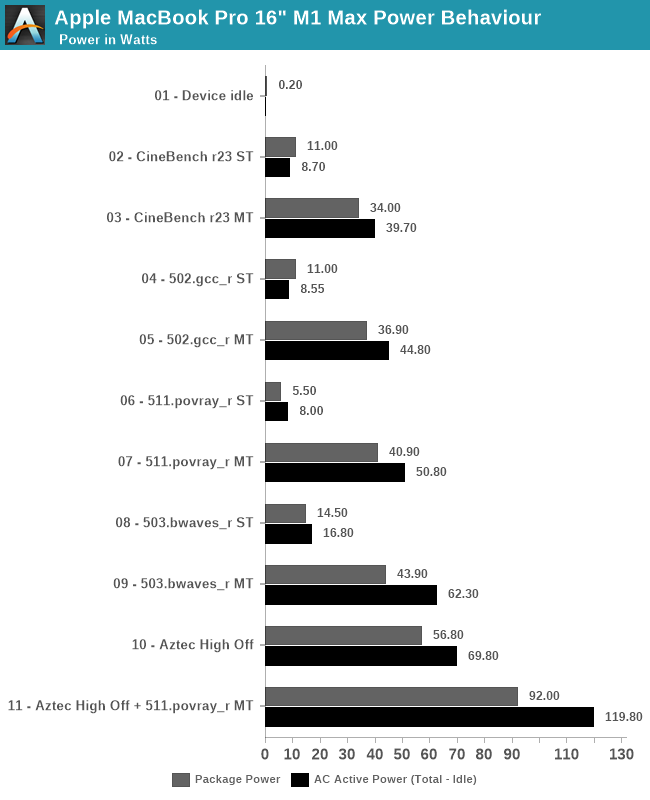

Last year when we reviewed the M1 inside the Mac mini, we did some rough power measurements based on the wall power of the machine. Since then, we have learned how to read out Apple’s individual CPU, GPU, NPU and memory controller power figures, as well as total advertised package power. We repeat the exercise here for the 16” MacBook Pro, focusing on chip package power as well as AC active wall power, meaning device load power minus idle power.

Apple doesn’t advertise any TDP for the chips in these devices. It’s our understanding that one simply doesn’t exist, and that the only limit on the power draw of the chips and laptops is thermal. As long as temperature is kept in check, the silicon will not throttle or otherwise limit its power draw. Of course, there’s still an actual average power draw figure under different scenarios, which is what we set out to test here:

Starting off with device idle, the chip reports a package power of around 200mW when doing nothing but idling on a static screen. This is extremely low compared to competitor designs, and is likely a reason Apple is able to achieve such fantastic battery life. The AC wall power under idle was 7.2W, measured on Apple’s included 140W charger with the laptop at minimum display brightness. It’s likely the actual DC battery power under this scenario is much lower, but lacking the ability to measure this, it’s the next-best thing we have. One should probably assume a 90% efficiency figure in the AC-to-DC conversion chain from 230V wall to 28V USB-C MagSafe to whatever the internal PMIC usage voltage of the device is.
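As a rough worked example of the two wall-side quantities used throughout this page: active wall power is total wall power under load minus idle wall power, and a DC-side estimate can be had by scaling a wall reading by the assumed conversion efficiency. The helper names below are hypothetical. The 127.0W total is not a separately reported reading; it is simply the 119.8W active figure for the combined CPU+GPU load plus the 7.2W idle figure.

```python
# Sketch only: helper names are hypothetical, not part of any tool used in the article.

IDLE_WALL_W = 7.2          # AC wall power at idle (from the article)
ASSUMED_EFFICIENCY = 0.90  # the article's suggested AC-to-DC efficiency assumption


def active_wall_power(load_wall_w: float, idle_wall_w: float = IDLE_WALL_W) -> float:
    """Active wall power: total wall power under load minus idle wall power."""
    return load_wall_w - idle_wall_w


def estimated_dc_power(wall_w: float, efficiency: float = ASSUMED_EFFICIENCY) -> float:
    """Estimate the power left after AC-to-DC conversion losses."""
    return wall_w * efficiency


if __name__ == "__main__":
    print(f"Estimated DC power at idle: {estimated_dc_power(IDLE_WALL_W):.2f} W")
    print(f"Active wall power at 127.0 W total: {active_wall_power(127.0):.1f} W")
```

Under the 90% assumption the idle estimate comes out a little under 6.5W, which is still a wall-derived approximation and not a measurement of battery draw.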

In single-threaded workloads such as CineBench r23 and SPEC 502.gcc_r, both of which mix pure computation with memory-demanding work, we see the chip report 11W package power, yet we measure only an 8.5-8.7W difference at the wall under load. It’s possible the software is over-reporting here. The actual CPU cluster is only using around 4-5W under this scenario, and we don’t see much of a difference to the M1 in that regard. The package and active power are higher than what we’ve seen on the M1, which could be explained by the much larger memory resources of the M1 Max. 511.povray is mostly core-bound with little memory traffic, so the reported package power is lower, although at the wall the difference is again minor.

In multi-threaded scenarios, reported package power ranges from 34 to 43W, and wall active power from 40 to 62W. 503.bwaves stands out as having a larger difference between wall power and reported package power. Although Apple’s powermetrics shows a “DRAM” power figure, I think this covers just the memory controllers, and that the actual DRAM is not accounted for in the package power figure. Because this is a massive DRAM workload, the extra wattage that we’re measuring here would be the memory of the M1 Max package.
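To make the 503.bwaves observation concrete, the gap between wall active power and reported package power can be computed for each multi-threaded test. This is a minimal sketch using the M1 Max figures from this page's results table; the variable and function names are illustrative only.

```python
# (reported package W, wall active W) for the M1 Max multi-threaded rows
# in the article's results table.
MT_ROWS = {
    "CB23 MT": (34.0, 39.7),
    "502 MT": (36.9, 44.8),
    "511 MT": (40.9, 50.8),
    "503 MT": (43.9, 62.3),
}


def wall_minus_package(rows: dict) -> dict:
    """Return wall active power minus reported package power, per test, in watts."""
    return {name: round(wall - pkg, 1) for name, (pkg, wall) in rows.items()}


gaps = wall_minus_package(MT_ROWS)
largest = max(gaps, key=gaps.get)  # test with the biggest unaccounted-for wattage

for name, gap in gaps.items():
    print(f"{name}: {gap} W not covered by reported package power")
print(f"Largest gap: {largest}")
```

The gap also includes conversion losses and everything else on the board, so it is at best an upper bound on what the DRAM itself might be drawing.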

On the GPU side, we lack notable workloads, but GFXBench Aztec High Offscreen ends up with a 56.8W package figure and a 69.8W wall active figure. The GPU block itself is reported to be running at 43W.

Finally, stressing both CPU and GPU at the same time, the SoC goes up to 92W package power and 120W wall active power. That’s quite high, and we haven’t tested how long the machine is able to sustain such loads (it’s highly environment dependent), but it very much appears that the chip and platform don’t have any practical power limit, and simply use whatever they need as long as temperatures are in check.

Power and performance: M1 Max (MacBook Pro 16") vs Intel i9-11980HK (MSI GE76 Raider)

| Workload | M1 Max Score | M1 Max Package Power (W) | M1 Max Wall Power, Total - Idle (W) | i9-11980HK Score | i9-11980HK Package Power (W) | i9-11980HK Wall Power, Total - Idle (W) |
|---|---|---|---|---|---|---|
| Idle | | 0.2 | 7.2 (Total) | | 1.08 | 13.5 (Total) |
| CB23 ST | 1529 | 11.0 | 8.7 | 1604 | 30.0 | 43.5 |
| CB23 MT | 12375 | 34.0 | 39.7 | 12830 | 82.6 | 106.5 |
| 502 ST | 11.9 | 11.0 | 9.5 | 10.7 | 25.5 | 24.5 |
| 502 MT | 74.6 | 36.9 | 44.8 | 46.2 | 72.6 | 109.5 |
| 511 ST | 10.3 | 5.5 | 8.0 | 10.7 | 17.6 | 28.5 |
| 511 MT | 82.7 | 40.9 | 50.8 | 60.1 | 79.5 | 106.5 |
| 503 ST | 57.3 | 14.5 | 16.8 | 44.2 | 19.5 | 31.5 |
| 503 MT | 295.7 | 43.9 | 62.3 | 60.4 | 58.3 | 80.5 |
| Aztec High Off | 307fps | 56.8 | 69.8 | 266fps | 35 + 144 | 200.5 |
| Aztec+511MT | | 92.0 | 119.8 | | 78 + 142 | 256.5 |

To compare the M1 Max against the competition, we turned to Intel’s 11980HK in the MSI GE76 Raider. We also wanted to include a comparison against AMD’s 5980HS, but unfortunately our test machine is dead.

In single-threaded workloads, Apple showcases massive performance and power advantages against Intel’s best CPU. CineBench is one of the rare workloads where Apple’s cores lose out in performance for some reason, yet the gap in power usage is even wider here: the M1 Max uses only 8.7W, while the comparable figure on the 11980HK is 43.5W.

In other ST workloads, the M1 Max is further ahead in performance, or at least in a similar range. The performance/W difference here is around 2.5x to 3x in favour of Apple’s silicon.

In multi-threaded tests, the 11980HK is clearly allowed to go to much higher power levels than the M1 Max, reaching package power levels of 80W, for 105-110W active wall power, significantly more than what the MacBook Pro here is drawing. The performance levels of the M1 Max are significantly higher than the Intel chip here, due to the much better scalability of the cores. The perf/W differences here are 4-6x in favour of the M1 Max, all whilst posting significantly better performance, meaning the perf/W at ISO-perf would be even higher than this.
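The perf/W comparisons in the last two paragraphs can be reproduced approximately from the results table on this page. The sketch below divides each score by a power figure and then takes the M1 Max to 11980HK ratio. It is an illustration, not the article's exact method: the text does not say which power column its quoted ranges are based on, so the function takes that as a parameter, and individual tests may land outside the quoted ranges depending on the choice. All names are hypothetical.

```python
from typing import NamedTuple


class Row(NamedTuple):
    score: float
    package_w: float      # reported package power
    wall_active_w: float  # wall power under load minus idle


# Figures copied from the article's results table.
M1_MAX = {
    "CB23 ST": Row(1529, 11.0, 8.7),
    "CB23 MT": Row(12375, 34.0, 39.7),
    "502 ST": Row(11.9, 11.0, 9.5),
    "502 MT": Row(74.6, 36.9, 44.8),
    "511 ST": Row(10.3, 5.5, 8.0),
    "511 MT": Row(82.7, 40.9, 50.8),
    "503 ST": Row(57.3, 14.5, 16.8),
    "503 MT": Row(295.7, 43.9, 62.3),
}
I9_11980HK = {
    "CB23 ST": Row(1604, 30.0, 43.5),
    "CB23 MT": Row(12830, 82.6, 106.5),
    "502 ST": Row(10.7, 25.5, 24.5),
    "502 MT": Row(46.2, 72.6, 109.5),
    "511 ST": Row(10.7, 17.6, 28.5),
    "511 MT": Row(60.1, 79.5, 106.5),
    "503 ST": Row(44.2, 19.5, 31.5),
    "503 MT": Row(60.4, 58.3, 80.5),
}


def perf_per_watt_ratio(test: str, power_field: str = "wall_active_w") -> float:
    """M1 Max perf/W divided by 11980HK perf/W for one test."""
    a, b = M1_MAX[test], I9_11980HK[test]
    return (a.score / getattr(a, power_field)) / (b.score / getattr(b, power_field))


for test in M1_MAX:
    wall = perf_per_watt_ratio(test)
    package = perf_per_watt_ratio(test, "package_w")
    print(f"{test}: {wall:.2f}x (wall active), {package:.2f}x (package)")
```

Wall active power captures the whole platform, while package power relies on each vendor's own reporting, so the two columns answer slightly different questions.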

On the GPU side, the GE76 Raider comes with an RTX 3080 mobile. On Aztec High, this uses a total of 200W of wall active power for 266fps, while the M1 Max beats it at 307fps with just 70W wall active power. The package powers for the MSI system are reported at 35+144W.

Finally, the Intel CPU and GeForce GPU go up to 256W of power draw when used together, more than double that of the MacBook Pro and its M1 Max SoC.
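For the GPU and combined-load figures, the same kind of arithmetic applies. The snippet below is a small sketch using the wall active power numbers quoted on this page; the variable and function names are illustrative.

```python
# Wall active power figures (watts) and Aztec High Offscreen frame rates
# as quoted in the article.
M1_MAX_FPS, M1_MAX_GPU_WALL_W = 307, 69.8
MSI_FPS, MSI_GPU_WALL_W = 266, 200.5

M1_MAX_COMBINED_WALL_W = 119.8  # Aztec + 511 MT on the MacBook Pro
MSI_COMBINED_WALL_W = 256.5     # Aztec + 511 MT on the GE76 Raider


def fps_per_watt(fps: float, watts: float) -> float:
    """Frames per second delivered per watt of wall active power."""
    return fps / watts


gpu_efficiency_ratio = (
    fps_per_watt(M1_MAX_FPS, M1_MAX_GPU_WALL_W) / fps_per_watt(MSI_FPS, MSI_GPU_WALL_W)
)
combined_power_ratio = MSI_COMBINED_WALL_W / M1_MAX_COMBINED_WALL_W

print(f"GPU fps/W advantage (M1 Max over MSI): {gpu_efficiency_ratio:.2f}x")
print(f"Combined-load wall power (MSI over M1 Max): {combined_power_ratio:.2f}x")
```

This covers a single GPU benchmark, so it says little about efficiency in other graphics workloads.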

The 11980HK isn’t a very efficient chip, as we noted back in our May review, and AMD’s chips should fare quite a bit better in a comparison. Even so, Apple Silicon is likely still ahead by extremely comfortable margins.

493 Comments


Speedfriend - Tuesday, October 26, 2021

This isn't their first attempt. They have been building laptop versions of the A series chips for years now for testing. There have been leaks about this for years. Assuming that the world's best SoC design team will make a significant advancement from here after 10 years of progress on A series is hoping for a bit much.

robotManThingy - Tuesday, October 26, 2021

All of the games are x86 translated by Apple's Rosetta, which means they are meaningless when it comes to determining the speed of the M1 Max or any other M1 chip.

TheinsanegamerN - Tuesday, October 26, 2021

Real-world software isn't worthless.

AshlayW - Tuesday, October 26, 2021

"The M1X is slightly slower than the RTX-3080, at least on-paper and in synthetic benchmarks."

Not quite, it matches the 3080 in mobile-focused synthetics where Apple is focusing on pretending to have best-in-class performance, and then its true colours show in actual video gaming. This GPU is for content creators (where it's excellent) but you don't just out-muscle decades of GPU IP optimisation for gaming in hardware and software that AMD/NVIDIA have. Furthermore, the M1 Max is significantly weaker in GPU resources than the GA104 chip in the mobile 3080, which here is actually limited to quite low clock speeds. It is no surprise it is faster in actual games, by a lot.

TheinsanegamerN - Tuesday, October 26, 2021

Rarely do synthetics ever line up with real-world performance, especially in games. Matching 3060 mobile performance is already pretty good.

NPPraxis - Tuesday, October 26, 2021

Where are you seeing "actual gaming performance" benchmarks that you can compare? There's very few AAA games available for Mac to begin with; most of the ones that do exist are running under Rosetta 2 or not using Metal; and Windows games using VMs or WINE + Rosetta 2 has massive overhead.

The number of actual games running is tiny and basically the only benchmark I've seen is Shadow of the Tomb Raider. I need a higher sample size to state anything definitively.

That said, I wouldn't be shocked if you're right, Apple has always targeted Workstation GPU buyers more than gaming GPU buyers.

GigaFlopped - Tuesday, October 26, 2021

The games tested were already ported over to the Metal API, it was only the CPU side that was emulated. We've seen emulated benchmarks before, the M1 and Rosetta do a pretty decent job at it, and when they ran the games at 4K, that would have pretty much removed any potential bottleneck. So what you see is pretty much what you'll get in terms of real-world rasterization performance. They might squeeze an extra 5% or so out of it, but don't expect any miracles: it's an RTX 3060 Mobile competitor in terms of rasterization, which is certainly not to be sniffed at and a very good achievement. The fact that it can match the 3060 whilst consuming less power is a feat of its own, considering this is Apple's first real attempt at a desktop-level or performance GPU.

lilkwarrior - Friday, November 5, 2021

These M1 chips aren't appropriate for serious AAA Gaming. They don't even have hardware-accelerated ray-tracing and other core DX12U/Vulkan tech for current-gen games coming up moving forward. Want to preview that? Play Metro Exodus: Enhanced Edition.

OrphanSource - Thursday, May 26, 2022

you 'premium gaming' encephalitics are the scum of the GD earth. Oh, you can only play your AAA money pit cash grabs at 108 fps instead of 145fps at FOURTEEN FORTY PEE on HIGH QUALITY SETTING? OMG, IT"S AS BAD AS THE RTX 3060? THE OBJECTIVELY MOST COST/FRAME EFFECTIVE GRAPHICS CARD OF 2021??? WOW THAT SOUNDS FUCKING AMAZING!Wait, no I, misunderstood, you are saying that's a bad thing? Oh you poor, old, blind, incontinent man... well, at least I THINK you are blind if you need 2k resolution at well over 100fps across the most graphics intensive games of 2020/2021 to see what's going on clearly enough to EVEN REMOTELY enjoy the $75 drug you pay for (the incontinence I assume because you 1. clearly wouldn't give a sh*t about these top end, graphics obsessed metrics and 2. have literally nothing else to do except shell out enough money to feed a family a small family for a week with the cost of each of your cutting edge games UNLESS you were homebound in some way?)

Maybe stop being the reason why the gaming industry only cares about improving their graphics at the cost of everything else. Maybe stop being the reason why graphics cards are so wildly expensive that scientific researchers can't get the tools they need to do the more complex processing needed to fold proteins and cure cancer, or use machine learning to push ahead in scientific problems that resist our conventional means of analysis

KYS fool

BillBear - Monday, October 25, 2021

The performance numbers would look even nicer if we had numbers for that GE76 Raider when it's unplugged from the wall and has to throttle the CPU and GPU way the hell down.

How about testing both on battery only?