Intel 12th Gen Core Alder Lake for Desktops: Top SKUs Only, Coming November 4th

by Dr. Ian Cutress on October 27, 2021 12:00 PM EST- Posted in

- CPUs

- Intel

- DDR4

- DDR5

- PCIe 5.0

- Alder Lake

- Intel 7

- 12th Gen Core

- Z690

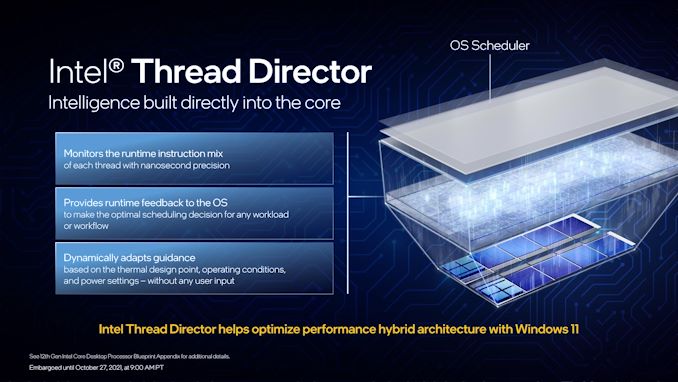

Thread Director: Windows 11 Does It Best

Every operating system runs what is called a scheduler – a low-level program that dictates where workloads should be on the processor depending on factors like performance, thermals, and priority. A naïve scheduler that only has to deal with a single core or a homogenous design has it pretty easy, managing only power and thermals. Since those single-core days, though, schedulers have grown more complex.

One of the first issues that schedulers faced in monolithic silicon designs was multi-threading, whereby a core could run more than one thread simultaneously. Running two threads on a core usually improves performance, but it is not a linear relationship. One thread on a core might run at 100%, but with two threads on that core, overall throughput might rise to 140% while each thread runs at only 70%. As a result, schedulers had to distinguish between threads and hyperthreads, prioritizing new software to execute on a new core before filling up the hyperthreads. If there is software that doesn’t need all the performance and is happy to run in the background, then the scheduler, if it knows enough about the workload, might put it on a hyperthread. This is, at a simple level, what Windows 10 does today.
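The cores-before-hyperthreads rule and the 140%/70% arithmetic can be sketched in a few lines of Python. This is only an illustration of the idea described above, not how Windows implements it: the `Core` class, `place`, `per_thread_speed`, and the two-threads-per-core limit are all hypothetical names and assumptions, and the 1.4 figure is simply the example number from the paragraph.

```python
from dataclasses import dataclass, field


@dataclass
class Core:
    core_id: int
    threads: list = field(default_factory=list)  # software threads placed here (max 2)


def place(cores, thread_name):
    """Prefer an idle core; fall back to the second hardware thread of a busy one."""
    for occupied in (0, 1):
        for core in cores:
            if len(core.threads) == occupied:
                core.threads.append(thread_name)
                return core.core_id
    raise RuntimeError("no free hardware thread")


def per_thread_speed(core, smt_throughput=1.4):
    """Relative speed of each thread on a core (1.0 = one thread running alone)."""
    n = len(core.threads)
    if n == 0:
        return 0.0
    return 1.0 if n == 1 else smt_throughput / n


cores = [Core(0), Core(1)]
placements = [place(cores, name) for name in ("a", "b", "c")]
speeds = [per_thread_speed(core) for core in cores]
print(placements, speeds)
```

With two cores and three threads, the third thread only lands on a hyperthread once both cores are busy, at which point the two threads sharing core 0 each run at roughly 70% of a lone thread.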

This way of doing things maximizes performance, but could have a negative effect on efficiency, as ‘waking up’ a core to run a workload on it may incur extra static power costs. Going beyond that, this simple view assumes each core and thread has the same performance and efficiency profile. When we move to a hybrid system, that is no longer the case.

Alder Lake has two sets of cores (P-cores and E-cores), but it actually has three levels of performance and efficiency: P-cores, E-cores, and hyperthreads on P-cores. In order to ensure that the cores are used to their maximum, Intel had to work with Microsoft to implement a new hybrid-aware scheduler, and this one interacts with an on-board microcontroller on the CPU for more information about what is actually going on.

The microcontroller on the CPU is what we call Intel Thread Director. It has a full scope view of the whole processor – what is running where, what instructions are running, and what appears to be the most important. It monitors the instructions at the nanosecond level, and communicates with the OS on the microsecond level. It takes into account thermals, power settings, and identifies which threads can be promoted to higher performance modes, or those that can be bumped if something higher priority comes along. It can also adjust recommendations based on frequency, power, thermals, and additional sensory data not immediately available to the scheduler at that resolution. All of that gets fed to the operating system.

The scheduler is Microsoft’s part of the arrangement, and as it lives in software, it’s the one that ultimately makes the decisions. The scheduler takes all of the information from Thread Director, constantly, as a guide. So if a user comes in with a more important workload, Thread Director tells the scheduler which cores are free, or which threads to demote. The scheduler can override the Thread Director, especially if the user has a specific request, such as making background tasks a higher priority.
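The division of labor described here, where the hardware suggests and the software decides, can be pictured with a very small Python sketch. Everything in it is an assumption made for illustration: the `decide` function, the `hints` dictionary, and the `"P"`/`"E"` labels are hypothetical and do not reflect the real interface between Thread Director and the Windows scheduler.

```python
def decide(hints, user_overrides=None):
    """Return the final placement per thread.

    hints: dict mapping thread name -> core type suggested by the hardware ("P" or "E").
    user_overrides: optional dict of explicit user requests, which win over the hints.
    """
    overrides = user_overrides or {}
    return {thread: overrides.get(thread, suggested)
            for thread, suggested in hints.items()}


# Hypothetical hints: the hardware suggests the game for P-cores, the backup for E-cores.
hints = {"game": "P", "backup": "E"}

default_plan = decide(hints)                   # scheduler follows the hints
boosted_plan = decide(hints, {"backup": "P"})  # user raises the background task
print(default_plan, boosted_plan)
```

The point of the sketch is only that the hints are advisory: the final mapping is produced in software, so a user request can replace any individual suggestion.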

What makes Windows 11 better than Windows 10 in this regard is that Windows 10 focuses more on the power of certain cores, whereas Windows 11 expands that to efficiency as well. While Windows 10 considers the E-cores as lower performance than P-cores, it doesn’t know how well each core does at a given frequency with a workload, whereas Windows 11 does. Combine that with an instruction prioritization model, and Intel states that under Windows 11, users should expect a lot better consistency in performance when it comes to hybrid CPU designs.

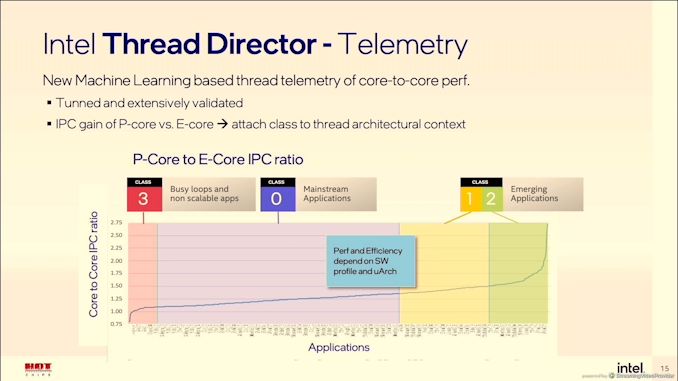

Under the hood, Thread Director is running a pre-trained algorithm based on millions of hours of data gathered during the development of the feature. It identifies the effective IPC of a given workflow, and applies that to the performance/efficiency metrics of each core variation. If there’s an obvious potential for better IPC or better efficiency, then it suggests the thread is moved. Workloads are broadly split into four classes:

- Class 3: Bottleneck is not in the compute, e.g. IO or busy loops that don’t scale

- Class 0: Most Applications

- Class 1: Workloads using AVX/AVX2 instructions

- Class 2: Workloads using AVX-VNNI instructions

Anything in Class 3 is recommended for E-cores. Anything in Class 1 or 2 is recommended for P-cores, with Class 2 having higher priority. Everything else fits in Class 0, with frequency adjustments to optimize for IPC and efficiency if placed on the P-cores. The OS may force any class of workload onto any core, depending on the user.
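As a rough illustration of the class-to-core recommendations listed above, the mapping could be written as follows in Python. The enum member names, the `recommend` and `p_core_queue` helpers, and the example thread names are hypothetical; the real classification runs in hardware and its internals are not public. Class 0 is returned as "either" because the text gives it no explicit core recommendation.

```python
from enum import IntEnum


class WorkloadClass(IntEnum):
    MOST_APPLICATIONS = 0   # Class 0
    AVX_AVX2 = 1            # Class 1
    AVX_VNNI = 2            # Class 2
    NOT_COMPUTE_BOUND = 3   # Class 3: IO, busy loops that don't scale


def recommend(cls):
    """Core type recommended for a class, following the rules in the text."""
    if cls == WorkloadClass.NOT_COMPUTE_BOUND:
        return "E"
    if cls in (WorkloadClass.AVX_AVX2, WorkloadClass.AVX_VNNI):
        return "P"
    return "either"  # Class 0: no explicit recommendation in the text


def p_core_queue(threads):
    """Threads recommended for P-cores, Class 2 ahead of Class 1.

    threads: list of (name, WorkloadClass) pairs.
    """
    wanted = [t for t in threads if recommend(t[1]) == "P"]
    return [name for name, cls in sorted(wanted, key=lambda t: t[1], reverse=True)]


threads = [
    ("browser", WorkloadClass.MOST_APPLICATIONS),
    ("encoder", WorkloadClass.AVX_AVX2),
    ("inference", WorkloadClass.AVX_VNNI),
    ("file_copy", WorkloadClass.NOT_COMPUTE_BOUND),
]
queue = p_core_queue(threads)
print(queue)
```

In this example the AVX-VNNI thread is queued for a P-core ahead of the AVX2 thread, while the IO-bound copy is steered to an E-core. As the text notes, the OS remains free to override any of these recommendations.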

There was some confusion in the press briefing as to whether Thread Director can ‘learn’ during operation, and how long it would take. To be clear, Thread Director doesn’t learn; it already knows from the pre-trained algorithm. It analyzes the instruction flow coming into a core, identifies the class as listed above, calculates where it is best placed (which takes microseconds), and communicates that to the OS. I think the confusion came from the difference between the words ‘learning’ and ‘analyzing’. In this case, it’s ‘learning’ what the instruction mix is in order to apply it to the algorithm, but the algorithm itself isn’t updated in the sense of ‘learning’ and adjusting the classes. Even if you wanted to make the algorithm self-learn your workflow, the algorithm can’t actually see which thread relates to which program or utility; that’s something at the operating system level, and down to Microsoft. Ultimately, Thread Director could suggest a series of things, and the operating system can choose to ignore them all. That’s unlikely to happen in normal operation though.

One of the situations where this might rear its head is to do with in-focus operation. As showcased by Intel, the default behavior of Windows changes depending on the power plan.

When a user is on the balanced power plan, Microsoft will move any software or window that is in focus (i.e. selected) onto the P-cores. Conversely, if you click away from one window to another, the thread for that first window will move to an E-core, and the new window now gets P-core priority. This makes perfect sense for the user that has a million windows and tabs open, and doesn’t want them taking immediate performance away.

However, this way of doing things might be a bit of a concern, or at least it is for me. The demonstration that Intel performed was where a user was exporting video content in one application, and then moved to another to do image processing. When the user moved to the image processing application, the video editing threads were moved to the E-cores, allowing the image editor to use the P-cores as needed.

Now usually when I’m dealing with video exports, it’s the video throughput that is my limiting factor. I need the video to complete, regardless of what I’m doing in the interim. By defocusing the video export window, it now moves to the slower E-cores. If I want to keep it on the P-cores in this mode, I have to keep the window in focus and not do anything else. The way that this is described also means that if you use any software that’s fronted by a GUI, but spawns a background process to do the actual work, unless the background process gets focus (which it can never do in normal operation), then it will stay on the E-cores.
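The focus rule and the background-process problem can be made concrete with a toy model in Python. This is a sketch of the behavior as described in the article, not of Windows itself: `core_preference`, the plan strings, and the process table are hypothetical. The high-performance branch reflects the article's later note that switching to that power plan stops the focus-based behavior; what Windows does instead is not stated, so the sketch returns `None` there.

```python
def core_preference(pid, focused_pid, power_plan="balanced"):
    """Core type a process is steered to under the focus rule described in the text."""
    if power_plan == "high-performance":
        return None  # focus-based steering is reported to stop on this plan
    if power_plan != "balanced":
        raise ValueError("plan not covered by the article: " + power_plan)
    return "P" if pid == focused_pid else "E"


# Hypothetical process table: a GUI front end that spawns a worker for the export.
processes = {1: "video editor (GUI)", 2: "video export worker", 3: "image editor"}

# The user keeps the video editor window in focus.
editor_focused = {pid: core_preference(pid, focused_pid=1) for pid in processes}

# The user clicks over to the image editor.
image_focused = {pid: core_preference(pid, focused_pid=3) for pid in processes}

print(editor_focused)
print(image_focused)
```

In both cases the export worker (pid 2) stays on the E-cores, because it is never the focused process. That is the concern raised above: keeping the GUI window selected does not help if the heavy lifting happens in a separate background process.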

In my mind, this is a bad oversight. I was told that this is explicitly Microsoft’s choice on how to do things.

The solution, in my mind, is for some sort of software to exist where a user can highlight programs to the OS that they want to keep on the high-performance track. Intel technically made something similar when it first introduced Turbo Boost Max 3.0; however, it was unclear whether this was something that had to come from Intel or from Microsoft to work properly. I assume the latter, given the OS has ultimate control here.

I was however told that if the user changes the Windows Power Plan to high-performance, this behavior stops. In my mind this isn’t a proper fix, but it means that we might see some users/reviews of the hardware with lower performance if the process doing the work runs in the background and the reviewer is using the default Balanced Power Plan as installed. If the same policy is going to apply to laptops, that’s a bigger issue.

395 Comments

yeeeeman - Friday, October 29, 2021 - link

Your comparisons at various price points are a good idea, but wrong, because 12600K will fight with 5800X performance wise, hence its efficiency will be judged compared to the 5800X, not with the 5600X, which is obviously more efficient than the 5800X.

Spunjji - Friday, October 29, 2021 - link

This remains to be seen - Intel rarely go low on price and high on performance. I'll be happy if they've changed that pattern!

Carmen00 - Friday, October 29, 2021 - link

Surprised that nobody's talking about the inherent scheduling problems with efficiency+performance cores and desktop workloads. This is NOT a solved problem and is, in fact, very far away from good general-purpose solutions. Your phone/tablet shows you one app at a time, which goes some way towards masking the issues. On a general-purpose desktop, the efficiency+performance split has never been successfully solved, as far as I am aware. You read it right - NEVER! (I welcome links to any peer-reviewed theoretical CS research that shows the opposite.)

In the interim, it seems to me that Intel has hitched its wagon to a problem that is theoretically unsolvable and generally unapproachable at the chip level. Scheduling with homogenous cores is unsolvable too, but an easier problem to attack. Heterogenous cores add another layer of difficulty and if Intel's approach is really just breaking things into priority classes ... oh, my. Good luck to them on real-world performance. They'll need it.

I can see why they've done it. They have the tech and they're trying to make the most of their existing tech investment. That doesn't mean it's a good idea. It is relatively easy to make schedulers behave pathologically (e.g. flipping between E/P constantly, or burning power for nothing) during normal app execution and we see this on phones already. Bringing that mess to desktops ... yeah. Not a great idea.

kwohlt - Friday, October 29, 2021 - link

"I welcome links to any peer-reviewed theoretical CS research that shows the opposite"

That's not how this works. YOU are making the claim that heterogenous scheduling has never been solved on a general-purpose desktop - the onus would be on you to provide proof of this.

"Scheduling with homogenous cores is unsolvable too"

So if scheduling with heterogenous and homogenous cores is both unsolvable, then what point are you trying to make?

Has apple not demonstrated functional scheduling across a heterogenous architecture with M1?

And what does "solved" look like? Because if your definition of solved is "no inefficiencies or loss", then that's not a realistic expectation. Heterogenous architecture simply needs to provide more benefit than not - a goal of zero overhead or inefficiency is unrealistic.

As long as efficiency and performance gain outpaces the inefficiencies, it's a success. Consider a scenario of 4 efficiency cores occupying the same physical die space, thermal constraints, and power consumption of 4 performant cores - Surely an 8 + 8 design would offer better performance than a 10 + 0 design, when the e cores can offer performance greater than 50% of the P core.

Consider this: a 12600 will be 6 + 0. A 12600K will be 6+4. If we downclock 12600K to match P core frequency of 12600, we can directly measure the benefit of the 4 e cores.

If we disable 4 e cores in the 12700K so it is only 8P cores, and compare that to the 6+4 of the 12600K, if the 12600K is more performant, we can directly show that 6+4 was better than 7+0 in this scenario.

Carmen00 - Wednesday, November 3, 2021 - link

Sure, take a look at this for a good recent overview: https://dl.acm.org/doi/pdf/10.1145/3387110 . (honestly, though, I think you could have found that one for yourself... the research is not hidden!)

Again, best of luck to Intel on it; deep learning models or no, they have a risk appetite that I don't share. Apple's success is due, in no small part, to the fact that it controls the entirety of the hardware and software stack. Intel has no such ability. My prediction is that you will have users whose computers sometimes simply start burning power for "no reason", and this can't be replicated in a lab environment; and you will have users whose computers are sometimes just slow, and again, this can't be replicated easily. The result will likely be an intermittently poor user experience. I wouldn't risk buying such a machine myself, and I certainly wouldn't get it for grandma or the kids.

mode_13h - Saturday, October 30, 2021 - link

Good scheduling requires an element of prediction. In the article, they mention Intel took many measurements of many different workloads, in order to train a deep learning model to recognize and classify different usage patterns.

PedroCBC - Saturday, October 30, 2021 - link

In the WAN show yesterday Linus said that some DRMs will not work with Alder Lake, most of them old ones but Denuvo also said that it will have some problems

iranterres - Friday, October 29, 2021 - link

Having "efficiency" cores in modern desktop CPU are irrelevant. For laptops is another story.

iranterres - Friday, October 29, 2021 - link

*is irrelevant

nandnandnand - Friday, October 29, 2021 - link

False. It boosts multicore performance per die area.

Intel could have given you 10 big cores instead of 8 big, 8 small. And that would have been a worse chip.