The AMD Ryzen 7 5700G, Ryzen 5 5600G, and Ryzen 3 5300G Review

by Dr. Ian Cutress on August 4, 2021 1:45 PM EST

CPU Tests: Legacy and Web

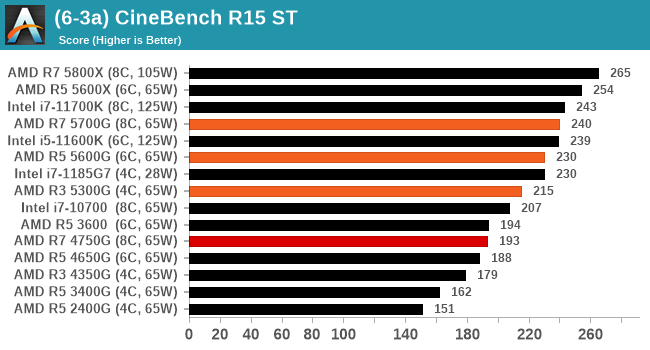

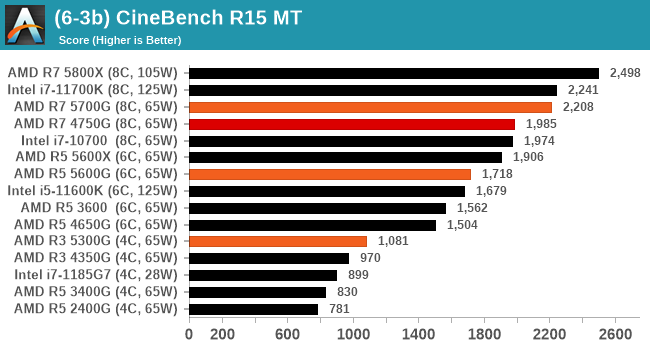

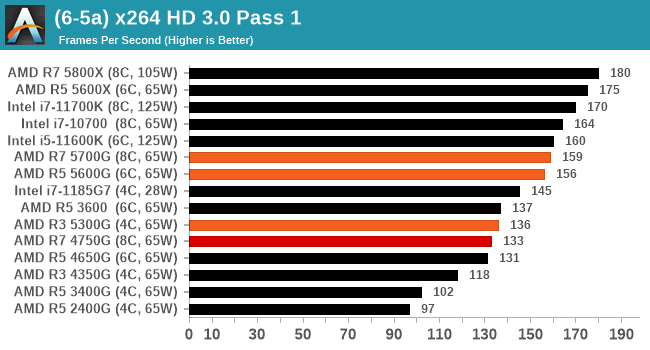

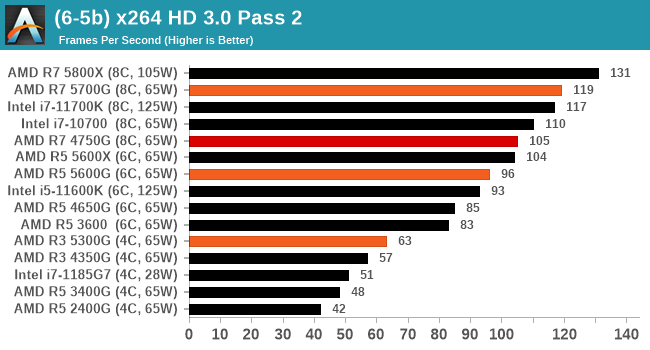

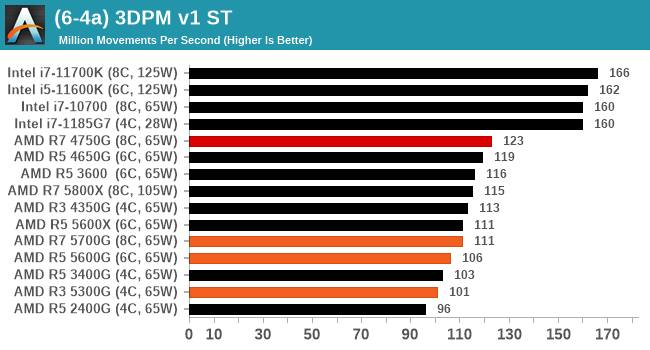

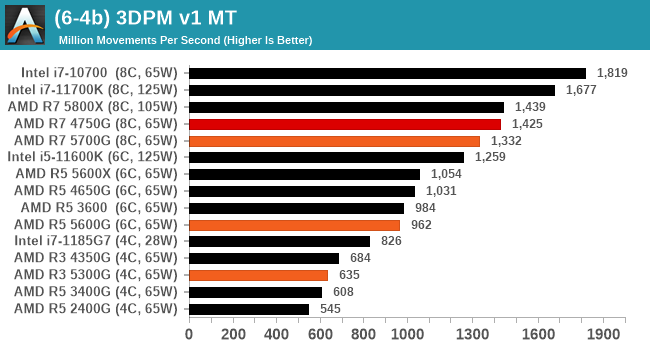

In order to gather data to compare with older benchmarks, we are still keeping a number of tests under our ‘legacy’ section. This includes all the former major versions of CineBench (R15, R11.5, R10) as well as x264 HD 3.0 and the first very naïve version of 3DPM v2.1. We won’t be transferring the data over from the old testing into Bench, as it would then be populated with 200 CPUs that each have only one data point. Instead, it will fill up as we test more CPUs, like the other tests.

The other section here is our web tests.

Web Tests: Kraken, Octane, and Speedometer

Benchmarking using web tools is always a bit difficult. Browsers change almost daily, and the way the web is used changes even quicker. While there is some scope for advanced computation-based benchmarks, most users care about responsiveness, which requires a strong back-end that works quickly to serve the front-end. The benchmarks we chose for our web tests are essentially industry standards – at least once upon a time.

It should be noted that for each test, the browser is closed and re-opened anew with a fresh cache. We use a fixed Chromium version for our tests with the update capabilities removed to ensure consistency.

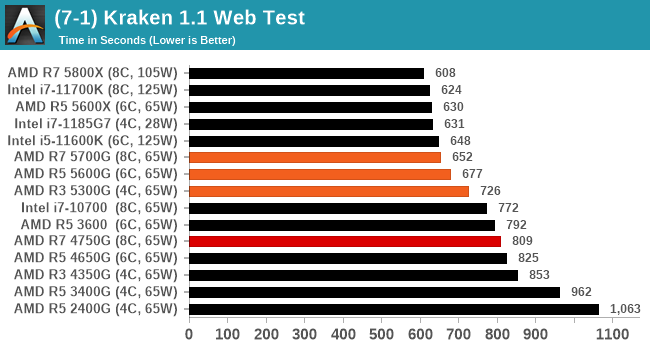

Mozilla Kraken 1.1

Kraken is a 2010 benchmark from Mozilla and does a series of JavaScript tests. These tests are a little more involved than previous tests, looking at artificial intelligence, audio manipulation, image manipulation, JSON parsing, and cryptographic functions. The benchmark starts with an initial download of data for the audio and imaging, and then runs through 10 times, giving a timed result.

We loop through the 10-run test four times (so that’s a total of 40 runs), and average the four end-results. The result is given as time to complete the test, and we’re reaching a slow asymptotic limit with regard to the highest-IPC processors.
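As a rough illustration of the averaging described above, the sketch below takes the four end-results (one per 10-run loop) and returns their mean. The function name `kraken_score` and the example timings are hypothetical, and it assumes each end-result is a time in milliseconds; it is not the actual test harness.

```python
from statistics import mean


def kraken_score(loop_results_ms):
    """Average the end-results of the four 10-run loops.

    loop_results_ms: one timed end-result per loop (assumed to be in
    milliseconds; lower is better).
    """
    if len(loop_results_ms) != 4:
        raise ValueError("expected four end-results, one per 10-run loop")
    return mean(loop_results_ms)


# Hypothetical timings, purely for illustration.
example_loops_ms = [602.0, 598.0, 600.0, 600.0]
print(kraken_score(example_loops_ms))
```

Because the metric is a completion time, a lower averaged value indicates a faster processor.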

Sizeable single thread improvements.

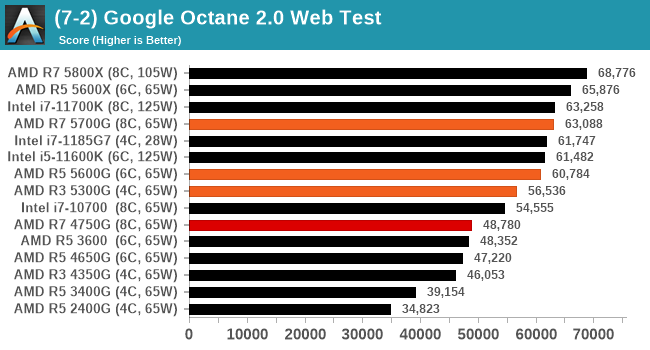

Google Octane 2.0

Our second test is also JavaScript based, but uses a lot more variation of newer JS techniques, such as object-oriented programming, kernel simulation, object creation/destruction, garbage collection, array manipulations, compiler latency and code execution.

Octane was developed after the discontinuation of other tests, with the goal of being more web-like than previous tests. It has been a popular benchmark, making it an obvious target for optimizations in the JavaScript engines. Ultimately it was retired in early 2017 due to this, although it is still widely used as a tool to determine general CPU performance in a number of web tasks.

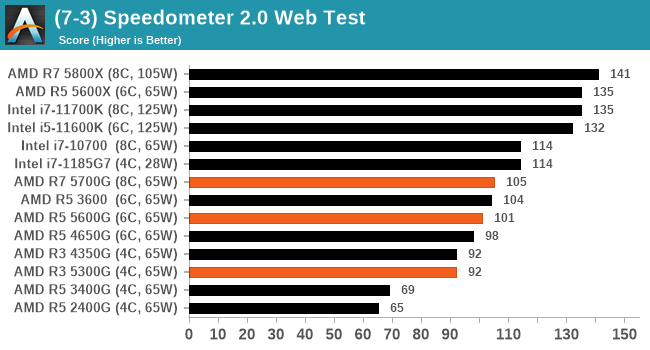

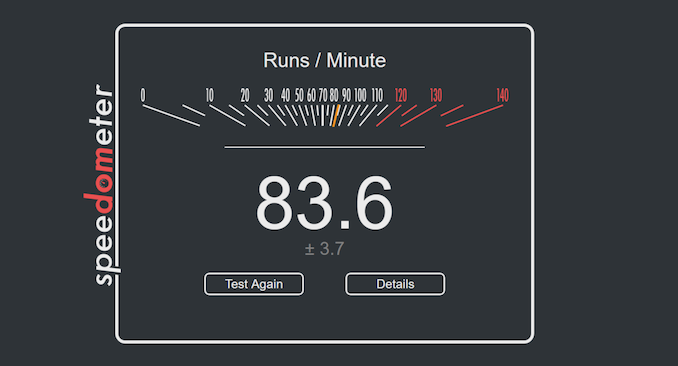

Speedometer 2: JavaScript Frameworks

Our newest web test is Speedometer 2, which is a test over a series of JavaScript frameworks to do three simple things: build a list, enable each item in the list, and remove the list. All the frameworks implement the same visual cues, but obviously apply them from different coding angles.

Our test goes through the list of frameworks, and produces a final score indicative of ‘rpm’, one of the benchmark’s internal metrics.

We repeat the benchmark for a dozen loops, taking the average of the last five.
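The reduction described above can be sketched as follows: collect one score per loop, discard the first seven, and average the final five. The function name `speedometer_score`, its parameters, and the sample scores are hypothetical and only illustrate the arithmetic, not the actual automation used for the review.

```python
from statistics import mean


def speedometer_score(loop_scores, total_loops=12, keep_last=5):
    """Average the last `keep_last` scores from `total_loops` benchmark loops."""
    if len(loop_scores) != total_loops:
        raise ValueError(f"expected {total_loops} loop scores")
    # Only the trailing loops contribute to the reported figure.
    return mean(loop_scores[-keep_last:])


# Hypothetical per-loop scores, purely for illustration.
example_scores = [90.0, 95.0, 98.0, 99.0, 100.0, 100.0, 100.0,
                  101.0, 99.0, 100.0, 102.0, 98.0]
print(speedometer_score(example_scores))
```

Unlike Kraken, this score is a rate, so a higher averaged value is better.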

Legacy Tests

135 Comments


GeoffreyA - Friday, August 6, 2021 - link

Okay, that makes sense.

19434949 - Friday, August 6, 2021 - link

Do you know if 5600G or 5700G can output 4k 120fps movie/video playback?

GeoffreyA - Saturday, August 7, 2021 - link

Tested this now on a 2200G. Taking the Elysium trailer, I encoded a 10-second clip in H.264, H.265, and AV1 using FFmpeg. The original frame rate was 23.976, so using the -r switch, got it up to 120. Also, scaled the video from 3840x1606 to 3840x2160, and kept the audio (DTS-MA, 8 ch). On MPC-HC, they all ran smoothly. Rough CPU/GPU usage:

H.264: 25% | 20%

H.265: 6% | 21%

AV1: 60% | 20%

So if Raven Ridge can hold up at 4K/120, Cezanne should have no problem. Note that the video was downscaled during playback, owing to the screen not being 4K. Not sure if that made it easier. 8K, choppy. And VLC, lower GPU usage but got stuck on the AV1 clip.

GeoffreyA - Sunday, August 8, 2021 - link

Something else I found. 10-bit H.264 seems to be going through software/CPU decoding, whereas 8-bit is running through the hardware, dropping CPU usage down to 6/8%. H.265 hasn't got the same problem. It could be the driver, the hardware, the player, or my computer.

mode_13h - Monday, August 9, 2021 - link

> 10-bit H.264 seems to be going through software/CPU decoding...

> It could be the driver, the hardware, the player

It's quite likely the driver or a GPU hardware limitation. There's some nonzero chance it's the player, but I'd bet the player tries to use acceleration and then only falls back on software when that fails.

GeoffreyA - Tuesday, August 10, 2021 - link

Yes, quite likely.

mode_13h - Monday, August 9, 2021 - link

> the video was downscaled during playback, owing to the screen not being 4K.

> Not sure if that made it easier.

Not usually. I only have detailed knowledge of H.264, where hierarchical encoding is mostly aimed at adapting to different bitrates. That would never be enabled by default, because not only is it more work for the encoder, but it's also poorly supported by decoders and generates slightly less efficient bitstreams.

GeoffreyA - Tuesday, August 10, 2021 - link

"hierarchical encoding"

That could be x264's b-pyramid, which, I think, is enabled most of the time. Apparently, allows B-frames to be used as references, saving bits. The stricter setting limits it to the Bluray spec.

GeoffreyA - Tuesday, August 10, 2021 - link

By the way, looking forward to x266 coming out. AV1 is excellent, but VVC appears to be slightly ahead of it.

mode_13h - Wednesday, August 11, 2021 - link

> That could be x264's b-pyramid

No, I meant specifically Scalable Video Coding, which is what I thought, but I didn't want to cite the wrong term.

https://en.wikipedia.org/wiki/Advanced_Video_Codin...

The only way the decoder can opt to do less work is by discarding some layers of a SVC-encoded H.264 stream. Under any other circumstance, the decoder can't take any shortcuts without introducing a cascade of errors in all of the frames which reference the one being decoded.

> The stricter setting limits it to the Bluray spec.

I think blu-ray mainly limits which profiles (which are a collection of compression techniques) and levels (i.e. bitrates & resolutions) can be used, so that the bitstream can be decoded by all players and can be streamed off the disc fast enough.

I once tried authoring valid blu-ray video discs, but the consumer-grade tools were too limiting and the free tools were a mess to figure out and use. In the end, I found that simply copying MPEG-2 .ts files onto a BD-R would play in every player I tested. I was mainly interested in using it for a few videos shot on a phone, plus a few things I recorded from broadcast TV.