NVMe 2.0 Specification Released: Major Reorganization

by Billy Tallis on June 3, 2021 11:00 AM EST

Version 2.0 of the NVM Express specification has been released, keeping up the roughly two-year cadence for the storage interface that is now a decade old. Like other NVMe spec updates, version 2.0 comes with a variety of new features and functionality for drives to implement (usually as optional features). But the most significant change, and the reason this is called version 2.0 instead of 1.5, is that the spec has been drastically reorganized to better fit the broad scope of features that NVMe now encompasses. From its humble beginnings as a block storage protocol operating over PCI Express, NVMe has grown to become one of the most important networked storage protocols, and it now also supports storage paradigms that are entirely different from the hard drive-like block storage abstraction it originally provided.

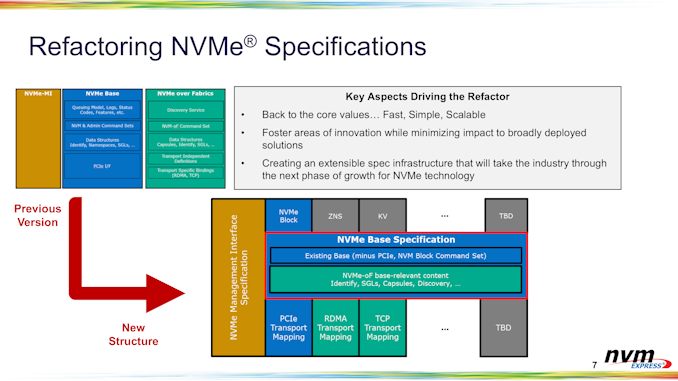

Instead of a base specification for typical PCIe SSDs and a separate NVMe over Fabrics spec, version 2.0 is designed to be a more modular specification and has been split into several documents. The base specification now covers both locally-attached devices and NVMeoF, but more abstractly—enough has been moved out of the base spec that it is no longer sufficient to define all of the functionality needed to implement a simple SSD. Real devices will also need to refer to at least one Transport spec and at least one Command Set spec. For typical consumer SSDs, that means using the PCIe transport spec and the block storage command set. Other transport options currently include networked NVMe over Fabrics using either TCP or RDMA. Other command set options include Zoned Namespace and Key-Value command sets. We already covered Zoned Namespaces in depth when it was approved for inclusion last year. The three standardized command sets (block, zoned, key-value) cover different points along the spectrum from simple SSDs with thin abstractions over the underlying flash, to relatively complicated, smart drives that take on some of the storage management tasks that would have traditionally been handled by software on the host system.

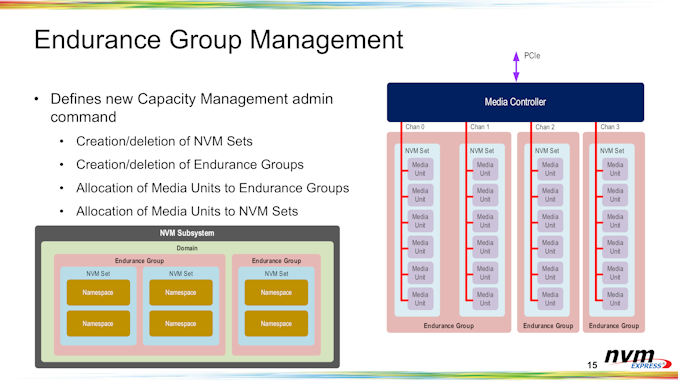

Many of the new features in NVMe 2.0 are minor extensions to existing functionality, making those features more useful and more broadly usable. For example, partitioning a device's storage into NVM Sets and Endurance Groups was introduced in NVMe 1.4, but the spec didn't say how those divisions would be created; that configuration would either need to be hard-coded by the drive's firmware, or handled with vendor-specific commands. NVMe 2.0 adds a standard capacity management mechanism for endurance groups and NVM sets to be allocated, and also adds another layer of partitioning (Domains) for the sake of massive NVMeoF storage appliances that needed more tools for slicing up their pool of available storage, or isolating the performance impacts of different users on shared drives or arrays.

The NVMe spec originally anticipated the possibility of multiple command sets beyond the base block storage command set. But the original mechanism included for supporting multiple command sets is not adequate for today's use cases: a handful of reserved bits in the controller capabilities data structure are not enough to encompass all the possibilities for what today's SSDs might implement. In particular, the new system for handling multiple command sets now makes it possible for different namespaces behind the same controller to support different command sets, rather than requiring all namespaces to support all of the command sets their parent controller supports.
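The per-namespace arrangement described above can be sketched in a few lines of Python. This is only an illustrative model: the names `CommandSet`, `Namespace` and `Controller` are assumptions made for this example rather than structures from the NVMe specification, and it assumes for simplicity that each namespace uses exactly one command set.

```python
from dataclasses import dataclass, field
from enum import Enum


class CommandSet(Enum):
    # The three command sets the article names as standardized in NVMe 2.0.
    BLOCK = "block"
    ZONED = "zoned"
    KEY_VALUE = "key-value"


@dataclass(frozen=True)
class Namespace:
    nsid: int                 # hypothetical namespace identifier
    command_set: CommandSet   # assumption: one command set per namespace


@dataclass
class Controller:
    supported: frozenset                      # command sets the controller implements
    namespaces: dict = field(default_factory=dict)

    def attach(self, ns: Namespace) -> None:
        # A namespace may use any command set its controller supports,
        # but it does not have to support all of them.
        if ns.command_set not in self.supported:
            raise ValueError(f"controller does not support {ns.command_set.value}")
        self.namespaces[ns.nsid] = ns

    def accepts(self, nsid: int, command_set: CommandSet) -> bool:
        # A command is only valid for a namespace that uses its command set.
        ns = self.namespaces.get(nsid)
        return ns is not None and ns.command_set is command_set


ctrl = Controller(frozenset({CommandSet.BLOCK, CommandSet.ZONED}))
ctrl.attach(Namespace(1, CommandSet.BLOCK))
ctrl.attach(Namespace(2, CommandSet.ZONED))
```

In this model, namespace 1 and namespace 2 sit behind the same controller yet answer to different command sets, which is the flexibility the older reserved-bits approach could not express.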

Zoned and key-value command sets were already on the radar when NVMe 1.4 was completed, and now those technologies have been incorporated into 2.0 with equal status to the original block storage command set. Future command sets, such as one for computational storage drives, are still works in progress and not ready for standardization, but the NVMe spec is now able to incorporate such new developments more easily. NVMe could in principle also add an Open Channel command set that exposes most or all of the raw details of managing NAND flash memory (pages, erase blocks, defect management, etc.), but the general industry consensus is that the zoned storage paradigm strikes a more reasonable balance, and interest in Open Channel SSDs is waning in favor of Zoned Namespaces.

For enterprise use cases, NVMe inherited Protection Information support from SCSI/SAS: some extra information is associated with each logical block and used to verify end-to-end data integrity. NVMe 2.0 extends the existing Protection Information support from 16-bit CRCs to also cover 32-bit and 64-bit CRCs, allowing for more robust data protection in large-scale storage systems.
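As a rough illustration of what a 16-bit guard looks like, the following Python sketch computes a CRC over a logical block and checks it again on the receiving side. It assumes the T10 DIF polynomial (0x8BB7) used by SCSI protection information, which the article does not quote, and the function names and the 512-byte block are made up for this example. The wider 32-bit and 64-bit guards are not shown.

```python
# Assumption: the 16-bit guard uses the T10 DIF polynomial from SCSI.
T10_DIF_POLY = 0x8BB7


def crc16_t10dif(data: bytes) -> int:
    """Bitwise, MSB-first CRC-16 with initial value 0 and no final XOR."""
    crc = 0
    for byte in data:
        crc ^= byte << 8
        for _ in range(8):
            if crc & 0x8000:
                crc = ((crc << 1) ^ T10_DIF_POLY) & 0xFFFF
            else:
                crc = (crc << 1) & 0xFFFF
    return crc


def guard_matches(block: bytes, guard: int) -> bool:
    """Recompute the CRC and compare it with the guard that travelled with the block."""
    return crc16_t10dif(block) == guard


# A hypothetical 512-byte logical block and the guard computed when it was written.
block = bytes(range(256)) * 2
guard = crc16_t10dif(block)

# Flip a single bit to simulate corruption somewhere along the data path.
corrupted = bytes([block[0] ^ 0x01]) + block[1:]

print(guard_matches(block, guard))      # intact block passes the check
print(guard_matches(corrupted, guard))  # corrupted block fails the check
```

A wider CRC follows the same pattern with a longer check value, which lowers the chance that a corrupted block happens to produce a matching guard.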

NVMe 2.0 introduces a significant new security feature: command group control, configured using a new Lockdown command. NVMe 1.4 added a namespace write protect capability that allows the host system to put namespaces into a write-protect mode until explicitly unlocked or until the drive is power cycled. NVMe 2.0's Lockdown allows similar control to disallow other commands. This can be used to put a drive in a state where both ordinary reads and writes are allowed, but various admin commands are locked out so the drive's other features cannot be reconfigured. As with the previous write protect feature, this command group control supports setting these restrictions until they are explicitly removed, or until a power cycle.
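The behavior described above can be modeled as a small state machine. The Python sketch below is a toy model under stated assumptions, not the actual Lockdown command interface: the `Drive` class, the `Scope` values and the command names are all hypothetical, and letting `unlock` clear either kind of restriction is a simplification.

```python
from enum import Enum


class Scope(Enum):
    # The two lifetimes the article describes for a restriction.
    UNTIL_POWER_CYCLE = "until power cycle"
    UNTIL_REMOVED = "until explicitly removed"


class Drive:
    """Toy model of a drive that can prohibit selected commands."""

    def __init__(self) -> None:
        self._locked = {}  # hypothetical command name -> Scope

    def lockdown(self, command: str, scope: Scope) -> None:
        self._locked[command] = scope

    def unlock(self, command: str) -> None:
        # Simplification: any restriction can be removed explicitly here.
        self._locked.pop(command, None)

    def power_cycle(self) -> None:
        # Only restrictions meant to survive a power cycle are kept.
        self._locked = {
            cmd: scope
            for cmd, scope in self._locked.items()
            if scope is Scope.UNTIL_REMOVED
        }

    def submit(self, command: str) -> str:
        return "rejected" if command in self._locked else "accepted"


drive = Drive()
drive.lockdown("format", Scope.UNTIL_REMOVED)
drive.lockdown("firmware-commit", Scope.UNTIL_POWER_CYCLE)

before = [drive.submit(c) for c in ("read", "write", "format", "firmware-commit")]
drive.power_cycle()
after = [drive.submit(c) for c in ("read", "write", "format", "firmware-commit")]
```

In this model, ordinary reads and writes are accepted throughout, both admin commands are rejected before the power cycle, and only the `format` restriction remains afterwards.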

For NVMe over Fabrics use cases, NVMe 2.0 clarifies how to handle firmware updates and safe device shutdown in scenarios where the shared storage is accessible through multiple controllers. There's also now explicit support for hard drives. Even though it's unlikely that hard drives will switch anytime soon to natively use PCIe connections instead of SAS or SATA, supporting rotational media means enterprises can unify their storage networking with NVMe over Fabrics and drop older protocols like iSCSI.

Overall, NVMe 2.0 doesn't bring as much in the way of new functionality as some of the previous updates. In particular, nothing in this update stands out as being relevant to client/consumer SSDs. But the spec reorganization should make it easier to iterate and experiment with new functionality, and the next several years will hopefully see more frequent updates with smaller changes rather than bundling up two or three years of work for big spec updates.

Source: NVM Express

24 Comments

Cooperdale - Friday, June 4, 2021 - link

"fades out"? Can you explain that or point me to some article? I'm just ignorant, interested and worried, no sarcasm here.

sheh - Friday, June 4, 2021 - link

Search for: flash data retention, P/E cycles, temperature.

Cooperdale - Saturday, June 5, 2021 - link

Thanks.

mode_13h - Sunday, June 6, 2021 - link

In terms of P/E cycles, HDDs certainly aren't better than SSD. The strengths of HDDs lie in near-line/off-line storage and GB/$.

Search for: power-off data retention.

mode_13h - Sunday, June 6, 2021 - link

One data point: I managed to recover the data off a TLC drive that had been powered off for 3 years, but several of the blocks that hadn't been touched since it left the factory had read errors. That puts the data retention limit somewhere near 4 years.

Another data point: I had five 1 TB WD Black HDDs I put in service in 2010. 10 years later, I checked them and got no unrecoverable reads. However, they only spent about 10% - 20% of their time spun up.

sheh - Friday, June 4, 2021 - link

Seagate's dual-actuator drives already sort of hit SATA's 6Gbps limits.

Lord of the Bored - Friday, June 4, 2021 - link

"No, I mean... how do you store data on glass and rust? There's no transistors!"

mode_13h - Monday, June 7, 2021 - link

> A spinning platter of glass or rust attached to a multi-gigabit NVMe port.

Multi-gigabit isn't too fast for a HDD. The largest-capacity HDDs can certainly exceed x1 PCIe 1.0.

I can easily imagine a RAID backplane with x1 PCIe 2.0 slots for the disk drives. It would save money on SAS controllers and let the drives offer some features only found in NVMe. You could even do away with a hardware RAID controller and just do it all in software (which is what I've been doing on my small SATA RAIDs for a decade, anyhow).

FLORIDAMAN85 - Monday, June 14, 2021 - link

I remember asking a Tiger direct employee if I could store a ramdisk image in a x6 flash drive RAID 0 array. His head exploded.

edzieba - Monday, June 7, 2021 - link

Regardless of HDD performance, having your HDDs and SSDs operating on the same protocol makes creating heterogeneous storage pools much easier than juggling NVMe SSDs and SATA or SAS HDDs.