Arm Announces Mobile Armv9 CPU Microarchitectures: Cortex-X2, Cortex-A710 & Cortex-A510

by Andrei Frumusanu on May 25, 2021 9:00 AM EST- Posted in

- SoCs

- CPUs

- Arm

- Smartphones

- Mobile

- Cortex

- ARMv9

- Cortex-X2

- Cortex-A710

- Cortex-A510

The Cortex-A510: Brand-new Little Design Comes in Pairs

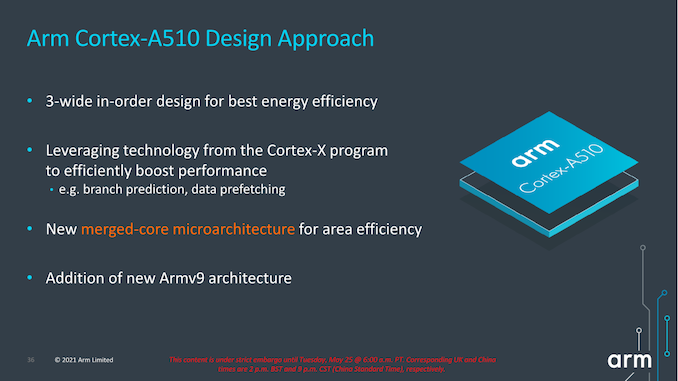

Moving on from the larger cores, this year we also have the pleasure to cover Arm’s newest little core, the new Cortex-A510. The new design if a clean-sheet microarchitecture from Arm’s Cambridge team which the engineers had been working on the past 4 years, and marks a quite different approach when it comes to how the little cores are built into the SoC.

First of all, Arm made explicit note of the design’s continued use of an in-order execution flow, mentioning that in their view that this is still the most power-efficient way to design a core for such workloads. This remains a relatively controversial topic and point of discussion when put into context of Apple’s own out-of-order efficiency cores, a topic I’ll return on later.

Secondly, the Cortex-A510 introduces something called a “merged-core” microarchitecture, there’s some very high-level comparisons and similarities to what AMD had done with CMT in their Bulldozer cores a decade ago, but differs quite significantly in some important aspects in terms of the details and design.

Of course, like the X2 and A710, the A510 is an Armv9 design, and all three cores share the same architectural features with each other, allowing them to be integrated together into the same SoC. It’s to be noted that the A510, much like the X2, is a 64-bit only AArch64 core.

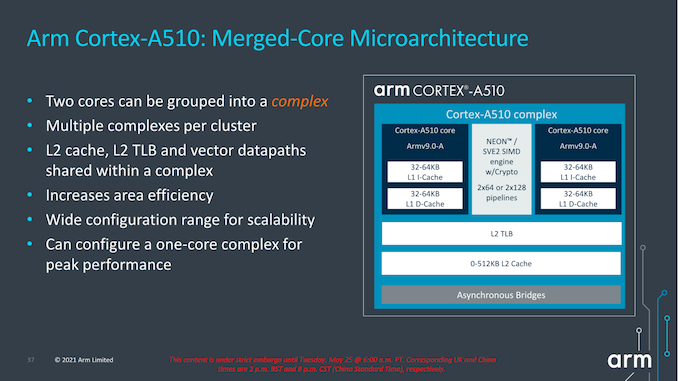

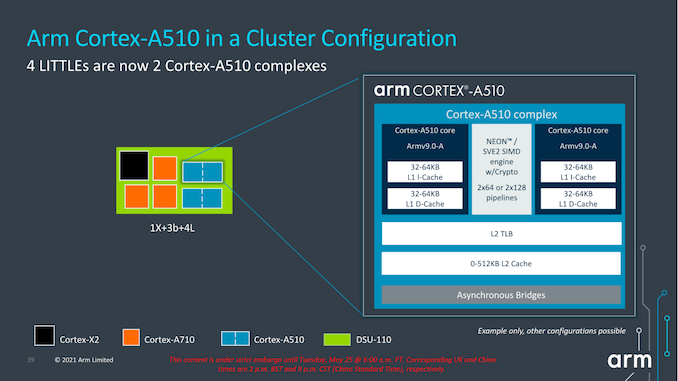

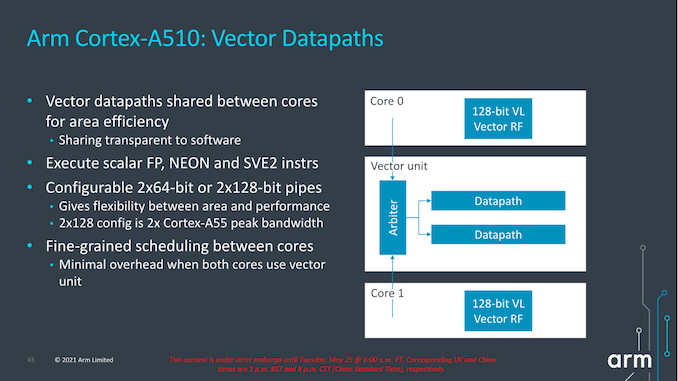

The most interesting aspect of the Cortex-A510 is the new merged-core approach. What Arm is doing here, is creating a new “complex” of up to two core pairs, which share the L2 cache system as well as the FP/NEON/SVE pipelines between them.

At first glance this will sound extremely similar to what AMD had done with Bulldozer and the CMT (Clustered Multithreading) approach, however Arm’s design is much more disaggregated in terms of what the actual cores are sharing. While AMD’s CMT module consisted essentially of a shared front-end between two integer back-ends and a single FP/SIMD back-end, Arm’s “merged cores” are actually full cores with their individual front-ends, mid-core, integer back-ends, and L1 cache hierarchies. The only thing being shared between the “cores” is the actual FP/SIMD back-end, as well as the L2 cache hierarchy.

Furthermore, while in the mobile market we expect vendors to use this new two merged cores per complex approach, it’s actually possible to simply just have a single core per complex. In which case the solo core would essentially have its own dedicated (non-shared) resources, but would be notably less area efficient than the intended merged core approach.

Generally, given the adoption of SVE2 and the relatively larger area footprint that the new execution backend requires, it makes sense to actually share these resources for these tiny new cores. Their typical workloads are also mostly only integer-bound background workloads which put less pressure on such units.

From a higher-level SoC standpoint, nothing really changes when it comes to the core-count, with the details being that we’ll be seeing two pairs or little cores now share a larger L2 cache between each other. This L2 can be configured up to 512KB, but as always, what we’ll actually see in products will very much depend on what vendors will want to implement into their designs. Because the new complex also only takes up a single interface on the DSU, it also opens up the possibility of designs larger than 8 “cores”, something I hope won’t happen, or hopefully only happens through more middle or big cores.

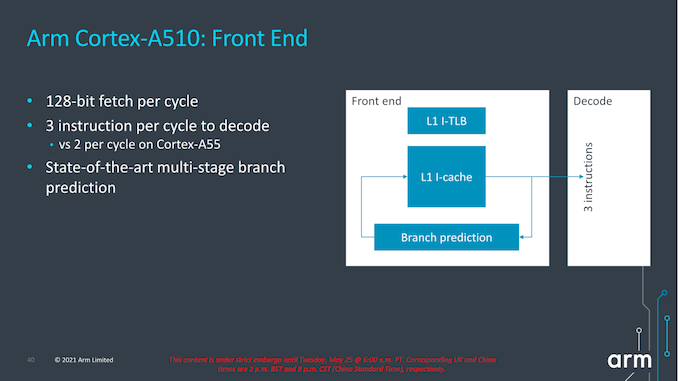

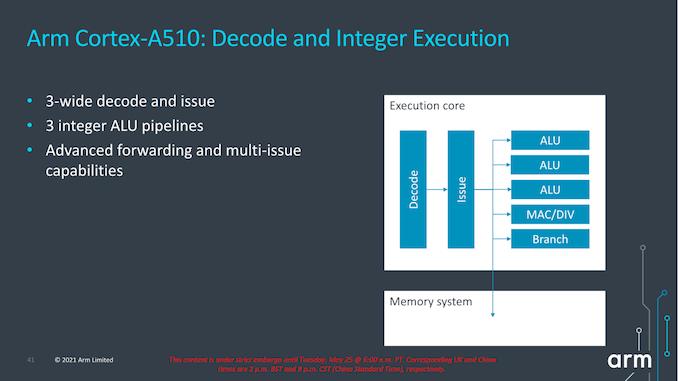

In terms of the front-end of the new A510, we’re seeing a 128-bit fetch pipeline which means it can fetch up to 4 instructions per cycle, giving the front-end a bit of leeway to close branch bubbles. The actual width of the decoder has increased from 2-wide to 3-wide.

In terms of branch prediction, as always Arm doesn’t disclose too many details, however the company did note that it used the same state-of-the-art approaches and techniques it uses on its larger cores. The L1 instruction cache can be either 32 or 64KB.

In terms of the shared vector execution back-end, it’s actually quite interesting here as Arm gives the option of either configuring the complex with smaller 2x64-bit pipelines or with 2x128b pipelines, the latter whose throughput would be 2x that of a Cortex-A55. I’m not too sure what mobile vendors will go with; we always hope for the larger configuration but as always, we’ll have to wait and see what will be employed in the actual products. In both configurations, the vector length is 128b as that’s the requirements for interoperability for the larger core microarchitectures.

Arm states that the shared pipelines are completely transparent to hardware, and that it’s also using fine-grained hardware scheduling. In actual multi-threaded workloads using both cores, the performance impact and deficit is said to only a few percent versus having a pipeline dedicated for each core. This is basically the cornerstone argument for why Arm has decided to use this more area-efficient merged-core approach.

Although it’s an in-order architecture, Arm has still widened the back-end of the Cortex-A510 which now includes 3 ALUs, one complex MAC/DIV unit and a branch forwarding port. The explanation here is simply that there’s more opportunities to execute a wider variety of code blocks in fewer cycles when there’s a fitting sequence of instructions coming in to be executed.

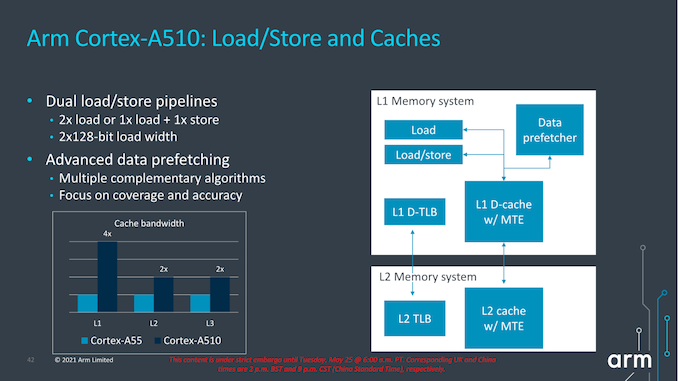

Finally, in the load-store system, the new structure is massively improved in comparison to the Cortex-A55 as we’re seeing a move from a load and store pipeline towards a load and a load/store pipeline, effectively doubling up on the number of loads executed per cycle. The width of the pipelines has also been increased with a doubling from 64b width to 128b width, so essentially load bandwidth compared to the Cortex-A55 is quadrupled.

The A510 also employs the similar very advanced prefetcher designs that we’ve seen in other recent big Arm cores, and adds to the large performance improvements that the core is able to achieve.

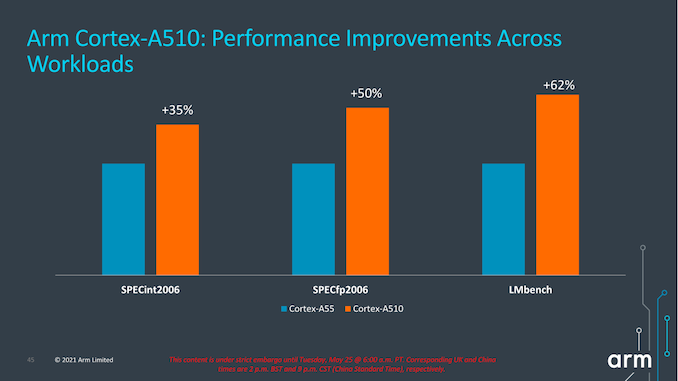

In terms of performance metrics, much like on the X2 and A710 presentation slides, the figures for the A510 aren’t very apples-to-apples as we’re comparing a Cortex-A55 with 32KB L1, 128KB L2 and 4MB L3 versus a Cortex-A510 with 32KB L1, 256KB L2 and 8MB L3. Frequency between the two cores is said to be the same. Under that scenario, we’re seeing +35% in SPECint2006 and +50% in SPECfp2006, which are seemingly very solid generational improvements, however given the cache hierarchy discrepancy as well as the fact that we’re comparing scores to a 4+ year old core, the actual improvements, especially from a compound annual growth rate (CAGR), doesn’t seem to be all that impressive.

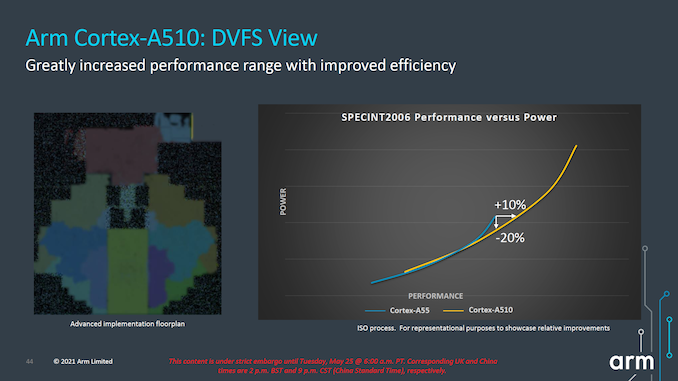

Looking at the projected performance and power curves on an ISO-process comparison, the new A510 seems rather lackluster from an efficiency standpoint. The ISO-power and ISO-performance gains are respectively +10% performance and -20% power, but the latter is really only valid for the high-end of the A55’s frequency curve, all the while the A510 pretty much overlaps the A55’s curve at lower operating points. While the A510 offers overall better performance, this seems to mostly be a product of extending the efficiency curve to higher power levels, and I was frankly disappointed to see this.

We’ll have to wait for the new generation SoCs to actually hit the market for us to test the new A510 cores, but if indeed they come with larger power consumption operating points to achieve higher performance, then Arm won't be much nearer in catching up to what Apple has been doing with their efficiency cores. As of the latest generation of SoCs, Apple’s efficiency cores were around 4x faster than any Cortex-A55 based SoC. Which, running at roughly the same system active power, also made them 3-4x more efficient in the traditional benchmarks. As presented, a theoretical A510 SoC won't be able to close that efficiency gap at all.

Arm is still adamant that for the kind of general use-cases in which the little cores are used in mobile phones – such as very light UI workloads – that their little core approach is still the most power-efficient way to achieve the best “DoU” or days of use figures. This is based in part on their internal projections as well as their partners', all of which indicate that the the triple issue in-order design they've developed is the most efficient option.

As the team explains it, it’s actually extremely hard to reproduce these more real-world workloads in any more structured benchmark (such as the typical test suites we tend to employ), but admitted there’s no real alternative that one could use to isolate performance and efficiency in such tasks. Generally, my counter-argument here is that iPhones still have outstanding battery life, so I’m still extremely skeptical on the whole lower-performance in-order core approach versus a more efficiency focused OOO core as demonstrated by Apple. Especially since we don’t really have an independent way to really test Arm’s claims, and the only data points we do have paint a very different picture.

Arm does note that this generation also had a lot of work done in regards to architectural features, and that the A510 is merely a starting point in a series of generations that are planned to be updated in a more regular fashion versus the large 4–5-year gap we’ve seen with the Cortex-A55 (just to be explicit, Arm doesn’t promise yearly updates, but we’ll be seeing successors in a much faster timeline). Those successors will see continued improvements in performance and power efficiency.

For this upcoming generation, the one benefit of the new little cores will come in more mixed-load workloads. Due to the DVFS nature of the various cores in an SoC, most core groups of a given core type share the same voltage rail and operate at the same frequencies. If there’s a primary thread workload that is resident on the middle cores at higher (less efficient) frequency operating points, any further medium-performance demand secondary threads that in the past couldn’t be serviced by A55 cores would have had to be migrated over to that much less efficient operating point on another middle core. On the A510 with its higher peak performance points, the workload would now be still be resident on the newer little cores, greatly increasing execution efficiency than if they were to be scheduled on the middle cores.

Overall, I’m still feeling a bit underwhelmed by the new A510 core, particularly given the 4 years it took to design it. Let’s hope that the upcoming mobile SoCs will have more apparent efficiency to them when we're testing them in 2022 devices.

181 Comments

View All Comments

Thala - Tuesday, May 25, 2021 - link

You compare only peak performance. ARM has demonstrated that SVE2 can have big advantages over NEON, in particular for computational kernel, which does not parallelize well for NEON.WorBlux - Thursday, May 27, 2021 - link

>If they are sticking with in-order, I hoped the A510 could’ve done something more over four years.In order is hard. The A55 was pretty cool in allowing certain instruction dependencies to be issued together. The traditional way to get more IPC out of in-order is VLIW, but would require an ABI break or at least a special sort of compiler optimization and quasi-long-words that in the end wouldn't do any better than the A55/A510 on legacy and non-optimized code.

mode_13h - Tuesday, May 25, 2021 - link

x86 is indeed on the way out, but your analysis is too facile.SarahKerrigan - Tuesday, May 25, 2021 - link

Essentially agreed.yeeeeman - Tuesday, May 25, 2021 - link

x86 maybe dead if you don't understand how and why things stand like they do.First of all, Apple is in a very very special situation where they control everything. Hardware, software, product. Plus they use the best process there is at the moment. All of this, contributes to their results. Which are very good, but they stem from what I told you.

Now, a better picture of what ARM is actually capable of in ... real life is the snapdragon 8cx, which for all intents and purposes is still alive only because qualcomm has a ton of money and can throw it away for projects that don't really sell.

Apple is using just ARM ISA. If Apple has great performance and great efficiency, it doesn't mean automatically that ARM and the companies that work with them will also reach that point. The truth is, Apple has put a LOT of money and R&D and got the best talents there are to get where they are today. Their cores are not exactly suited for the plethora of android devices that range from 50 bucks to 2000+.

Now, regarding x86, if you compare amd's zen 3 with m1, you'll see that they are not that far off, in perf and in efficiency. And AMD is using 7nm, not 5nm! Also, nowdays, all the cpus are risc inside, so x86 cpus are very similar inside to arm cpus, with the addition of the extra decoding and micro ops.

x86 main weakness is also its greatest advantage. Backwards compatibility is very important and needs to stay. ARM cpus lose compatibility totally once in a while, which is not something that will work in the long run.

Also, don't forget that Intel hasn't introduced anything major since 2015! Ice Lake/Tigerlake are just a bump in execution units over skylake, which on its own brings 20% better IPC. But Intel has stayed still for so many years, that is why ARM has got the chance to close the gap.

SarahKerrigan - Tuesday, May 25, 2021 - link

What? SNC is not merely a bump in execution units from SKL at all. It's a new, wider, more aggressive uarch across the board. SNC is a larger change than SKL itself was, and not by a small margin.boredsysadmin - Tuesday, May 25, 2021 - link

@yeeeeman - "Also, nowdays, all the cpus are risc inside, so x86 cpus are very similar inside to arm cpus, with the addition of the extra decoding and micro ops."Excuse, where did you get this BS? Only Arm, Risk-V, MIPS, and PowerPC are using RISC. x86 from both Intel and AMD are very much still CISC. So, no they aren't very similar in any share and form.

Drumsticks - Tuesday, May 25, 2021 - link

All x86 CPUs crack CISC macro instructions into smaller RISC like operations. The actual execution of the CPU operates on these smaller micro ops. Beyond the initial decode/cracking stage, it's pretty much a RISC operation.They are CISC from an architectural perspective, but they've been RISC in execution for some time.

vvid - Tuesday, May 25, 2021 - link

>> All x86 CPUs crack CISC macro instructions into smaller RISC like operations.RISC-like is not RISC. It is like saying that a woman with pear-like figure shape is actually a pear.

x86 uops are pretty much corresponding to CISC ISA now.

>> but they've been RISC in execution for some time

RISC-like.

mode_13h - Wednesday, May 26, 2021 - link

> they've been RISC in execution for some time.And sadly, Internet Oversimplification Syndrome claims another victim.