Intel 3rd Gen Xeon Scalable (Ice Lake SP) Review: Generationally Big, Competitively Small

by Andrei Frumusanu on April 6, 2021 11:00 AM EST

Posted in: Servers, CPUs, Intel, Xeon, Enterprise, Xeon Scalable, Ice Lake-SP

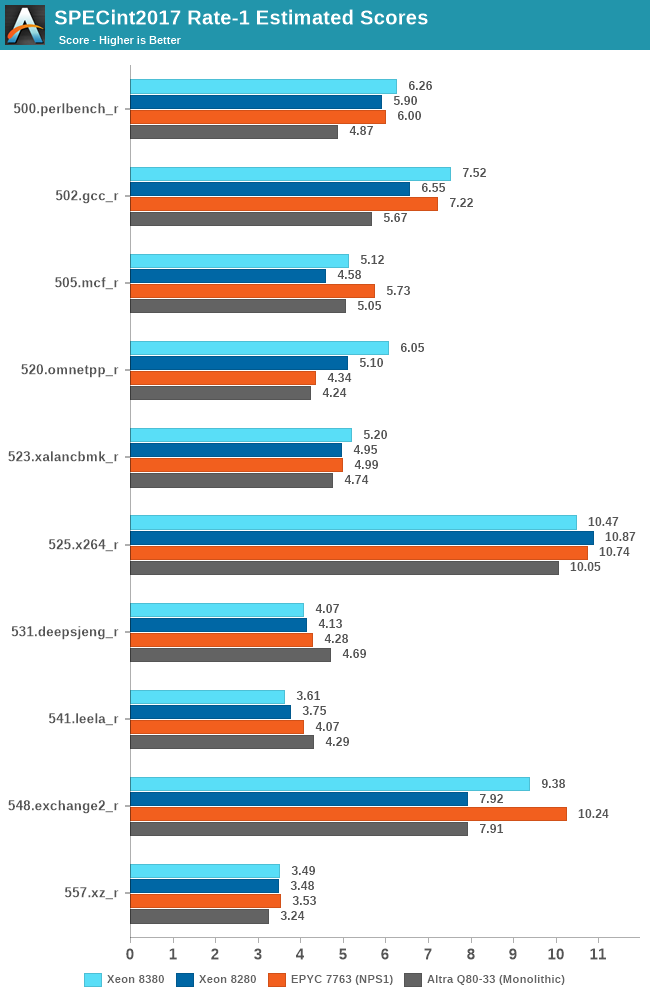

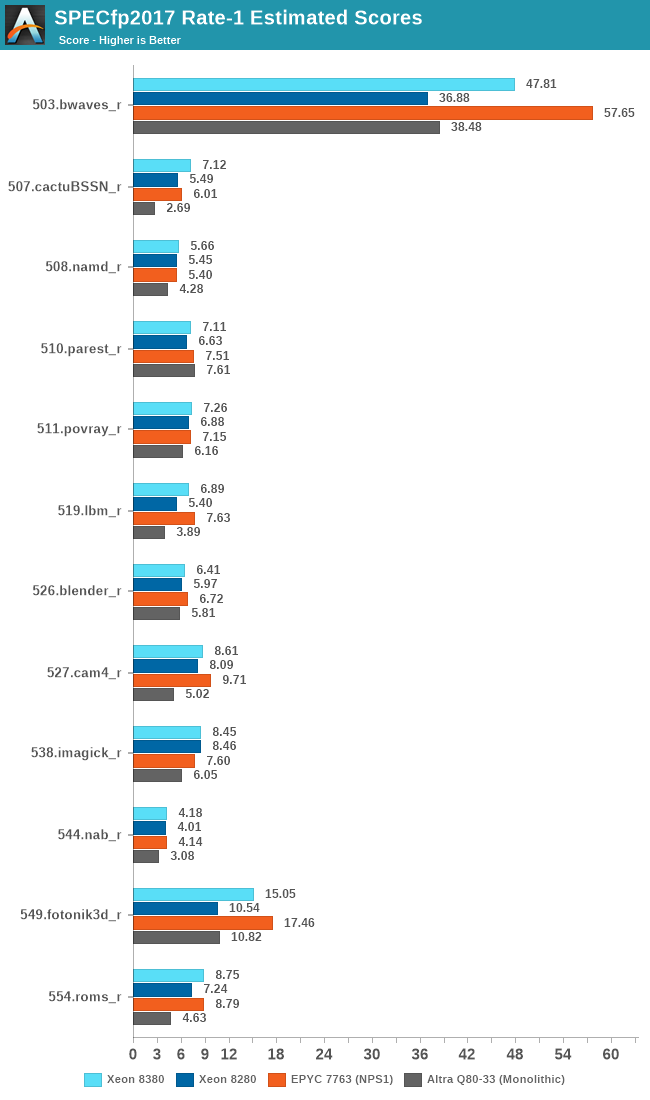

SPEC - Single-Threaded Performance

Single-thread performance of server CPUs usually isn’t the most important metric for most scale-out workloads, but there are use-cases such as EDA tools which are pretty much single-thread performance bound.

Power envelopes usually don’t matter here; the factors that determine performance are simply the CPUs’ boost clocks, their IPC improvements, and the memory latency of the cores.

The one hiccup for the Xeon 8380 this generation is that, although there are plenty of IPC gains to be had compared to previous microarchitectures, the new SKU only boosts up to 3.4GHz, whereas the 8280 was able to boost up to 4GHz, a 15% deficit.
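To put the clock deficit in perspective, here is a minimal sketch of the arithmetic. It assumes the simplified model that single-threaded performance scales as IPC times frequency, which ignores memory effects; the function names are illustrative and not from the review.

```python
# Boost clocks quoted in the text (GHz).
XEON_8280_BOOST_GHZ = 4.0  # Cascade Lake
XEON_8380_BOOST_GHZ = 3.4  # Ice Lake SP


def clock_deficit(new_ghz: float, old_ghz: float) -> float:
    """Fractional frequency shortfall of the new part versus the old one."""
    return 1.0 - new_ghz / old_ghz


def break_even_ipc_gain(new_ghz: float, old_ghz: float) -> float:
    """IPC uplift the new part needs to match the old one.

    Assumes performance = IPC x frequency, a deliberate simplification.
    """
    return old_ghz / new_ghz - 1.0


deficit = clock_deficit(XEON_8380_BOOST_GHZ, XEON_8280_BOOST_GHZ)
needed = break_even_ipc_gain(XEON_8380_BOOST_GHZ, XEON_8280_BOOST_GHZ)

print(f"Clock deficit: {deficit:.1%}")
print(f"Break-even IPC gain: {needed:.1%}")
```

Under that assumption, the 8380 needs roughly a 17.6% IPC uplift just to match the 8280 at peak boost, which is why purely compute-bound tests are where it is most likely to fall behind.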

Even with the clock frequency disadvantage, thanks to the IPC gains, much improved memory bandwidth, and the much larger L3 cache, the new Ice Lake part beats the Cascade Lake part most of the time, falling behind in only a couple of compute-bound core workloads.

The floating-point figures are more favourable to the ICX architecture due to the stronger memory performance.

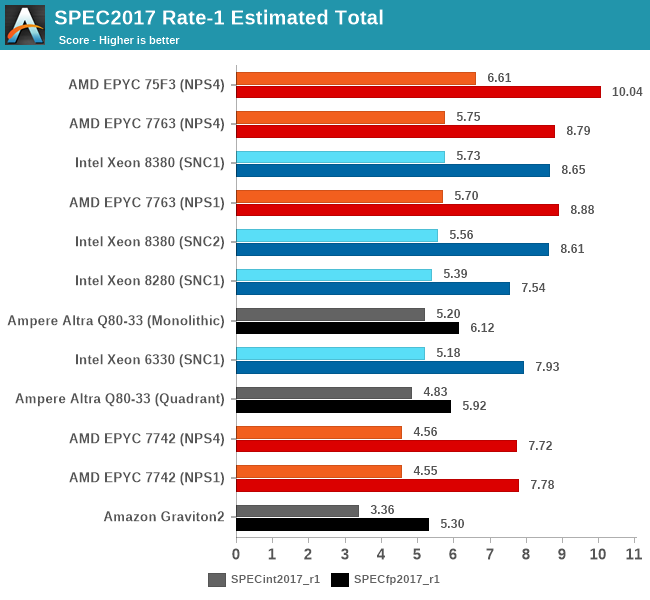

Overall, the new Xeon 8380 at least manages to post slight single-threaded performance increases this generation, with larger gains in memory-bound workloads. The 8380 is essentially on par with AMD’s 7763, and loses out to the higher frequency optimised parts.

Intel has a few SKUs which offer slightly higher ST boost clocks. The highest reaches 3.7GHz (300MHz, or 8.8%, higher than the 8380), but that part has only 8 cores and only 18MB of cache. Other SKUs offer 3.5-3.6GHz boosts, but again with less cache. So while the ST figures here could improve a bit on those parts, the improvement is unlikely to be significant.
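As a rough upper bound on what those higher-boost SKUs could gain, the sketch below computes the frequency uplift over the 8380 for each boost clock mentioned. It assumes perfect frequency scaling and ignores the smaller caches, so real gains would likely be lower; the names are illustrative.

```python
BASELINE_BOOST_GHZ = 3.4  # Xeon 8380 boost clock, from the text
OTHER_BOOSTS_GHZ = [3.5, 3.6, 3.7]  # boost clocks of the other SKUs mentioned


def max_st_uplift(boost_ghz: float, baseline_ghz: float = BASELINE_BOOST_GHZ) -> float:
    """Best-case single-thread gain if performance scaled linearly with clock."""
    return boost_ghz / baseline_ghz - 1.0


uplifts = {ghz: max_st_uplift(ghz) for ghz in OTHER_BOOSTS_GHZ}

for ghz, gain in uplifts.items():
    print(f"{ghz:.1f} GHz boost: at most {gain:.1%} over the 8380")
```

Even in this best case the gains range from about 2.9% to 8.8%, before accounting for the reduced cache.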

169 Comments

Drazick - Wednesday, April 7, 2021 - link

The ICC compiler has a much better vectorization engine than the one in GCC. It will usually generate better vectorized code, especially numerical code.

But the real benefit of ICC is its companion libraries: VSML, MKL, IPP.

Oxford Guy - Wednesday, April 7, 2021 - link

I remember that custom builds of Blender done with ICC scored better on Piledriver as well as on Intel hardware. So, even an architecture that was very different was faster with ICC.

mode_13h - Thursday, April 8, 2021 - link

And when was this? Like 10 years ago? How do we know the point is still relevant?

Oxford Guy - Sunday, April 11, 2021 - link

How do we know it isn't?

Instead of whinging, why not investigate the issue if you're actually interested?

Bottom line is that, just before the time of Zen's release, I tested three builds of Blender done with ICC and all were faster on both Intel and Piledriver (a very different architecture from Haswell).

I asked why the Blender team wasn't releasing its builds with ICC since performance was being left on the table but only heard vague suggestions about code stability.

Wilco1 - Sunday, April 11, 2021 - link

This thread has a similar comment about quality and support in ICC: https://twitter.com/andreif7/status/13808945639975...

KurtL - Wednesday, April 7, 2021 - link

This is absolutely untrue. There is not much special about AOCC; it is just an AMD-packaged Clang/LLVM with a few extras, so it is not a SPEC compiler at all. Neither is it true for Intel. Sites that are concerned about getting the most performance out of their investments often use the Intel compilers. It is a very good compiler for any code with good potential for vectorization, and I have seen it do miracles on badly written code that no version of GCC could do.

Wilco1 - Wednesday, April 7, 2021 - link

And those closed-source "extras" in AOCC magically improve the SPEC score compared to standard LLVM. How is it not a SPEC compiler just like ICC has been for decades?

JoeDuarte - Wednesday, April 7, 2021 - link

It's strange to tell people who use the Intel compiler that it's not used much in the real world, as though that carries some substantive point.

The Intel compiler has always been better than gcc in terms of the performance of compiled code. You asserted that that is no longer true, but I'm not clear on what evidence you're basing that on. ICC is moving to clang and LLVM, so we'll see what happens there. clang and gcc appear to be a wash at this point.

It's true that lots of open source Linux-world projects use gcc, but I wouldn't know the percentage. Those projects tend to be lazy or untrained when it comes to optimization. They hardly use any compiler flags relevant to performance, like those stipulating modern CPU baselines, or link time optimization / whole program optimization. Nor do they exploit SIMD and vectorization much, or PGO, or parallelization. So they leave a lot of performance on the table. More rigorous environments like HPC or just performance-aware teams are more likely to use ICC or at least lots of good flags and testing.

And yes, I would definitely support using optimized assembly in benchmarks, especially if it surfaced significant differences in CPU performance. And probably, if the workload was realistic or broadly applicable. Anything that's going to execute thousands, millions, or billions of times is worth optimizing. Inner loops are a common focus, so I don't know what you're objecting to there. Benchmarks should be about realizable optimal performance, and optimization in general should be a much bigger priority for serious software developers – today's software and OSes are absurdly slow, and in many cases desktop applications are slower in user-time than their late 1980s counterparts. Servers are also far too slow to do simple things like parse an HTTP request header.

pSupaNova - Wednesday, April 7, 2021 - link

"today's software and OSes are absurdly slow, and in many cases desktop applications are slower in user-time than their late 1980s counterparts." a late 1980's desktop could not even play a video let alone edit one, your average mid range smartphone is much more capable. My four year old can do basic computing with just her voice. People like you forget how far software and hardware has come.

GeoffreyA - Wednesday, April 7, 2021 - link

Sure, computers and devices are far more capable these days, from a hardware point of view, but applications, relying too much on GUI frameworks and modern languages, are more sluggish today than, say, a bare Win32 application of yore.