Sabrent Rocket Q4 and Corsair MP600 CORE NVMe SSDs Reviewed: PCIe 4.0 with QLC

by Billy Tallis on April 9, 2021 12:45 PM EST

Mixed IO Performance

For details on our mixed IO tests, please see the overview of our 2021 Consumer SSD Benchmark Suite.

Charts: Mixed Random IO and Mixed Sequential IO (Throughput, Power, Efficiency)

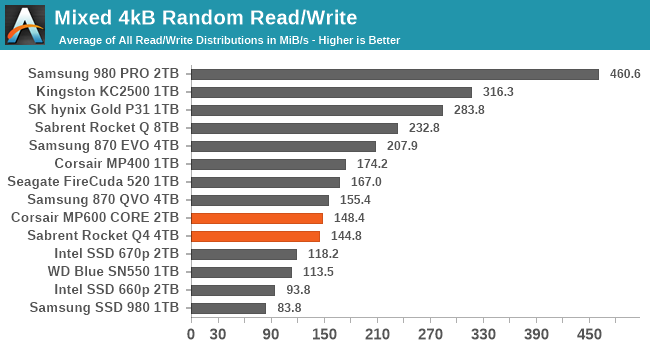

The Sabrent Rocket Q4 and Corsair MP600 CORE deliver excellent performance on the mixed sequential IO test, leading to above-average power efficiency as well. Their performance on the mixed random IO test is not great, and is actually slower overall than what we saw with Phison E12 QLC drives like the original Rocket Q and the MP400.

Charts: Mixed Random IO and Mixed Sequential IO

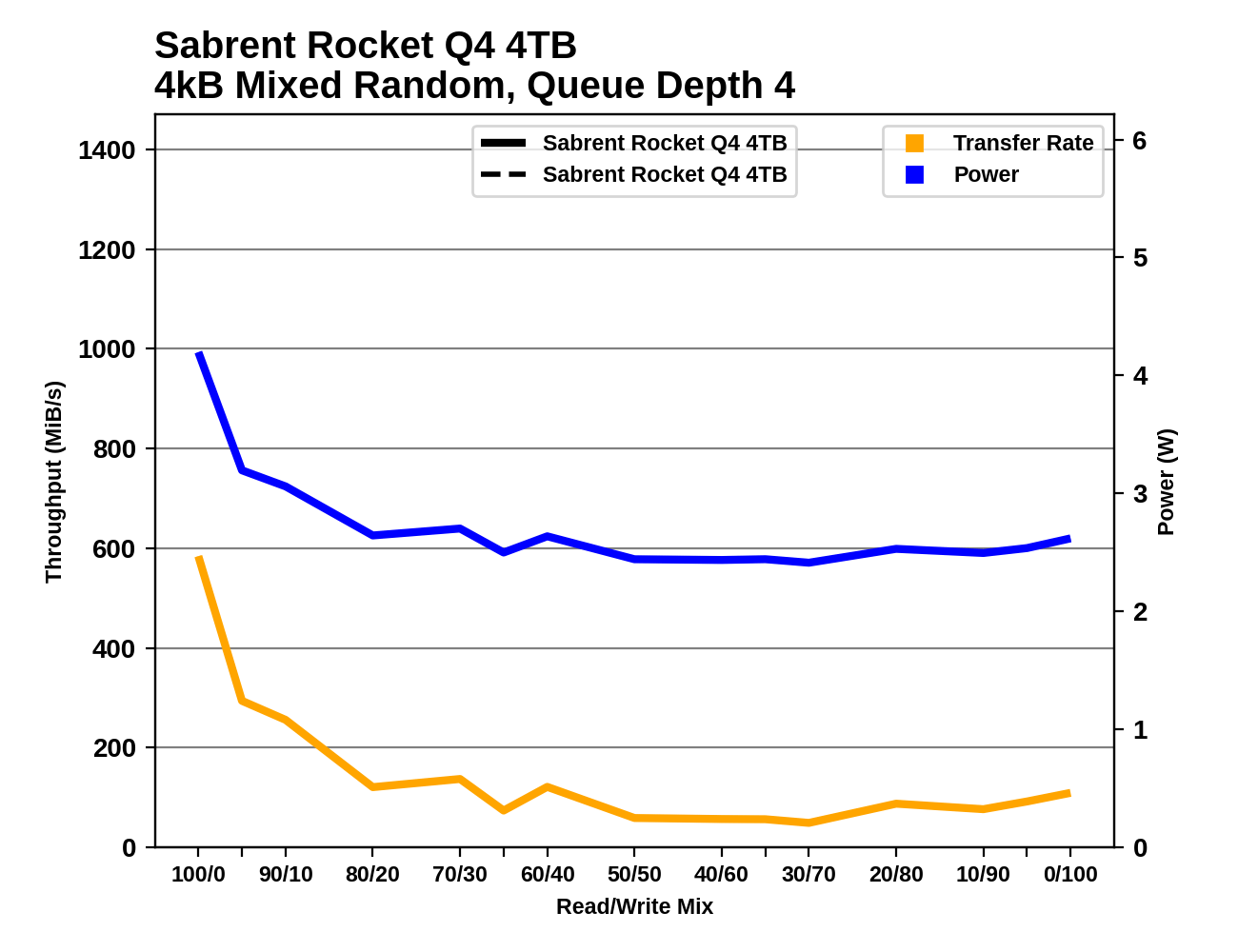

The earlier E12+QLC drives outperform these new E16+QLC drives across almost all phases of the mixed random IO test, despite using the same Micron 96L QLC NAND. On the other hand, the newer QLC drives turn in surprisingly fast and steady results throughout the mixed sequential IO test, though the 2TB MP600 CORE does get off to a bit of a slow start.

Power Management Features

Real-world client storage workloads leave SSDs idle most of the time, so the active power measurements presented earlier in this review only account for a small part of what determines a drive's suitability for battery-powered use. Especially under light use, the power efficiency of an SSD is determined mostly by how well it can save power when idle.
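To make the point about idle power concrete, here is a minimal sketch of the time-weighted average power of a drive that is busy only a small fraction of the time. The function name and all of the numbers (5 W active, 1% duty cycle, 1 W versus 50 mW idle) are hypothetical values chosen for illustration, not measurements from this review.

```python
def average_power_mw(active_mw: float, idle_mw: float, active_fraction: float) -> float:
    """Time-weighted average power for a drive that is active for
    `active_fraction` of the time and idle for the rest."""
    if not 0.0 <= active_fraction <= 1.0:
        raise ValueError("active_fraction must be between 0 and 1")
    return active_fraction * active_mw + (1.0 - active_fraction) * idle_mw


# Hypothetical light workload: the drive is busy 1% of the time at 5 W.
ACTIVE_MW = 5000.0
DUTY_CYCLE = 0.01

# Same active power, two assumed idle behaviours.
poor_idle = average_power_mw(ACTIVE_MW, idle_mw=1000.0, active_fraction=DUTY_CYCLE)
good_idle = average_power_mw(ACTIVE_MW, idle_mw=50.0, active_fraction=DUTY_CYCLE)

print(f"poor idle: {poor_idle:.1f} mW")  # about 1040 mW
print(f"good idle: {good_idle:.1f} mW")  # about 99.5 mW
```

Under these assumed numbers the active phase contributes the same 50 mW to both averages, and the roughly tenfold difference comes entirely from the idle state.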

For many NVMe SSDs, the closely related matter of thermal management can also be important. M.2 SSDs can concentrate a lot of power in a very small space. They may also be used in locations with high ambient temperatures and poor cooling, such as tucked under a GPU on a desktop motherboard, or in a poorly-ventilated notebook.

Sabrent Rocket Q4 4TB NVMe Power and Thermal Management Features

Controller: Phison E16

Firmware: RKT40Q.2 (EGFM52.3)

| NVMe Version | Feature | Status |
| --- | --- | --- |
| 1.0 | Number of operational (active) power states | 3 |
| 1.1 | Number of non-operational (idle) power states | 2 |
| 1.1 | Autonomous Power State Transition (APST) | Supported |
| 1.2 | Warning Temperature | 75 °C |
| 1.2 | Critical Temperature | 80 °C |
| 1.3 | Host Controlled Thermal Management | Supported |
| 1.3 | Non-Operational Power State Permissive Mode | Supported |

Our samples of the Sabrent Rocket Q4 and Corsair MP600 CORE use the same firmware from Phison (though Sabrent has re-branded the version numbering). As a result, they support the same full range of power management features. The 4TB Rocket Q4 reports higher maximum power draws for its active power states than the 2TB MP600 CORE, but both drives report the same idle behaviors.

The advertised maximum of 10.58 W for the 4TB Rocket Q4 is alarming and definitely supports Sabrent's suggestion that the drive not be used without a heatsink. However, during our testing the drive never went much above 7 W for sustained power draw, which is more in line with the maximum power claimed by the 2TB MP600 CORE (which also tended to stay well below its supposed maximum). In practice, these drives can get by just fine without a big heatsink as long as they have some decent airflow, because real-world workloads will almost never push these drives to their maximum power levels for long.

Sabrent Rocket Q4 4TB NVMe Power States

Controller: Phison E16

Firmware: RKT40Q.2 (EGFM52.3)

| Power State | Maximum Power | Active/Idle | Entry Latency | Exit Latency |
| --- | --- | --- | --- | --- |
| PS 0 | 10.58 W | Active | - | - |
| PS 1 | 7.14 W | Active | - | - |
| PS 2 | 5.43 W | Active | - | - |
| PS 3 | 49 mW | Idle | 2 ms | 2 ms |
| PS 4 | 1.8 mW | Idle | 25 ms | 25 ms |

Note that the above tables reflect only the information provided by the drive to the OS. The power and latency numbers are often very conservative estimates, but they are what the OS uses to determine which idle states to use and how long to wait before dropping to a deeper idle state.
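As a rough illustration of how a host could turn these advertised latencies into an idle policy, the sketch below filters the Rocket Q4's reported power states by a latency budget and derives an idle timeout for each usable state. The power and latency figures come from the table above; the `max_latency_us` budget, the `idle_multiplier` factor and the function name are hypothetical choices for this example and are not taken from any particular operating system or driver. The table lists no latencies for the active states, so they are entered as 0 here as a placeholder.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PowerState:
    index: int
    max_power_mw: float
    operational: bool       # True for active states, False for idle states
    entry_latency_us: int
    exit_latency_us: int


# Values reported by the 4TB Rocket Q4 (see the power states table).
# Active-state latencies are shown as "-" in the table; 0 is a placeholder.
ROCKET_Q4_STATES = [
    PowerState(0, 10580.0, True, 0, 0),
    PowerState(1, 7140.0, True, 0, 0),
    PowerState(2, 5430.0, True, 0, 0),
    PowerState(3, 49.0, False, 2_000, 2_000),
    PowerState(4, 1.8, False, 25_000, 25_000),
]


def plan_idle_transitions(states, max_latency_us=100_000, idle_multiplier=50):
    """Return (state index, idle time in ms before entering it) for each
    idle state whose entry + exit latency fits the latency budget.

    Both parameters are assumed policy knobs, not values from the drive.
    """
    plan = []
    for state in states:
        if state.operational:
            continue  # only non-operational states are idle targets
        total_us = state.entry_latency_us + state.exit_latency_us
        if total_us > max_latency_us:
            continue  # waking up would take longer than the host tolerates
        plan.append((state.index, total_us * idle_multiplier / 1000))
    return plan


# Generous budget: both idle states are usable.
print(plan_idle_transitions(ROCKET_Q4_STATES))
# → [(3, 200.0), (4, 2500.0)]

# Tight 10 ms budget: the deepest state (PS 4) is skipped.
print(plan_idle_transitions(ROCKET_Q4_STATES, max_latency_us=10_000))
# → [(3, 200.0)]
```

The design choice being illustrated is simply that a slower state must be idle for proportionally longer before it is worth entering, and that a latency-sensitive host can exclude the deepest state altogether.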

Idle Power Measurement

SATA SSDs are tested with SATA link power management disabled to measure their active idle power draw, and with it enabled for the deeper idle power consumption score and the idle wake-up latency test. Our testbed, like any ordinary desktop system, cannot trigger the deepest DevSleep idle state.

Idle power management for NVMe SSDs is far more complicated than for SATA SSDs. NVMe SSDs can support several different idle power states, and through the Autonomous Power State Transition (APST) feature the operating system can set a drive's policy for when to drop down to a lower power state. There is typically a tradeoff in that lower-power states take longer to enter and wake up from, so the choice about what power states to use may differ for desktops and notebooks, and depending on which NVMe driver is in use. Additionally, there are multiple degrees of PCIe link power savings possible through Active State Power Management (ASPM).

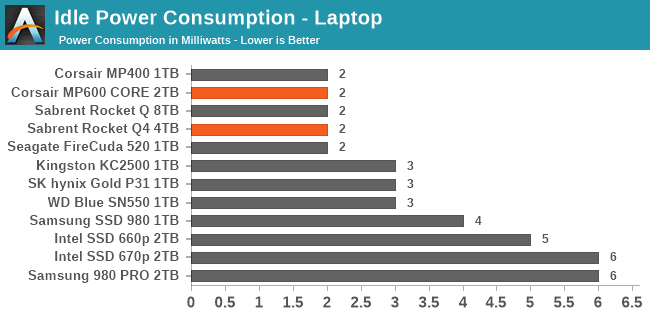

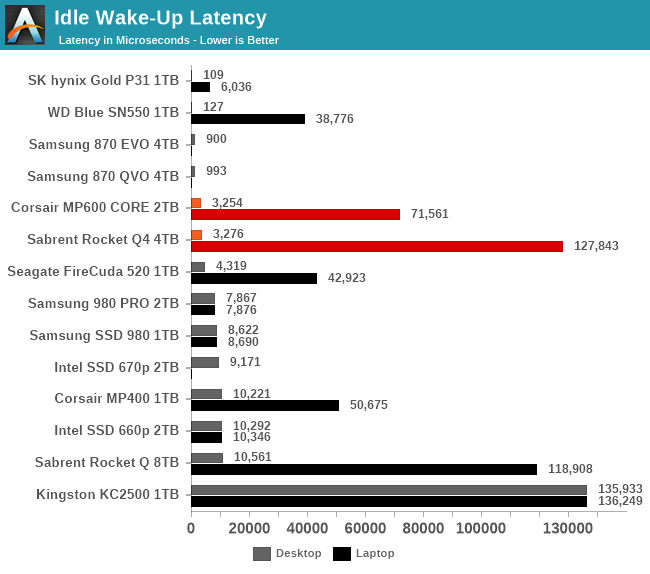

We report three idle power measurements. Active idle is representative of a typical desktop, where none of the advanced PCIe link or NVMe power saving features are enabled and the drive is immediately ready to process new commands. Our Desktop Idle number represents what can usually be expected from a desktop system that is configured to enable SATA link power management, PCIe ASPM and NVMe APST, but where the lowest PCIe L1.2 link power states are not available. The Laptop Idle number represents the maximum power savings possible with all the NVMe and PCIe power management features in use—usually the default for a battery-powered system but rarely achievable on a desktop even after changing BIOS and OS settings. Since we don't have a way to enable SATA DevSleep on any of our testbeds, SATA drives are omitted from the Laptop Idle charts.

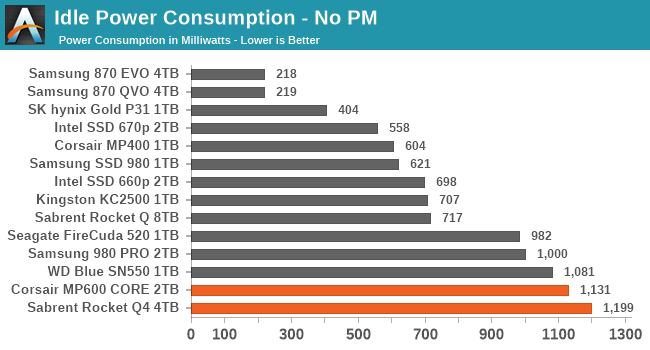

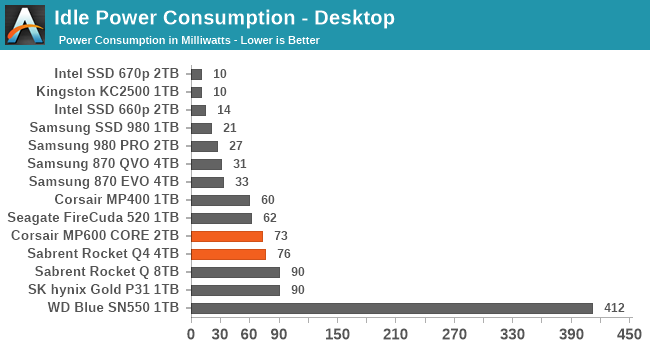

Both the Sabrent Rocket Q4 and Corsair MP600 CORE show quite high active idle power draw, a consequence of their use of a PCIe Gen4 controller made on 28nm rather than something newer like 12nm. However, there's no problem with the low-power idle states except for Phison's usual sluggish wake-up from the deepest sleep. This seems to be worse for higher capacity drives, with the 4TB Rocket Q4 taking about an eighth of a second to wake up.

60 Comments


allenb - Sunday, April 11, 2021 - link

This whining about QLC is an amazing mix of comedy and incompetence. Who cares about the storage medium? If your data is written to petrified goose excrement, what does it matter if the delivered performance meets your needs?

Faceless corporations screw us over in plenty of legitimate ways. Why make up new things to get pissed off about?

Do the math or look at your actual usage data. Very few individuals are generating enough write traffic for endurance to be a concern. As others have stated here.

JoeDuarte - Sunday, April 11, 2021 - link

Bugs and bad software developers can cause significant degradation of SSD endurance. There was a bug in the Spotify desktop app a couple of years ago that caused massive writes, way beyond normal use, which affected SSD owners the most.

And there are apparently issues with Apple's M1 Macbooks, though I haven't kept up. It might have been related to their stingy RAM allotment (8 GB), causing excessive SSD swap.

Endurance will matter when it's as bad as these drives, which is the worst I've ever seen.

Oxford Guy - Tuesday, April 13, 2021 - link

Probably nanosecond timestamps in APFS with various spyware running amok.

edzieba - Monday, April 12, 2021 - link

Oh shush, next you'll be telling them that bus interface speed or heatsink presence/size is not an useful indicator of drive performance!

ZolaIII - Friday, April 16, 2021 - link

Sure take an example of guy who likes to watch the movies on it's new TV. He whatches 50 movie a month. He takes 4K Blue-ray discs but wants to convert them to 4K HDR + HLG (H265 10 Bit HLG+) format to match the display capabilities as good as possible. In order to do that in real time he uses GPU conversion and as it sucks regarding quality he puts 2x higher bit rate doing so. Of course he first copy them to SSD. That's (50x50) GB x3 = 7.32 TB per month or almost 88 TB per year for one or two muvies every day. When you add rest it comes to at least 100 TB a year. Example is realistic with not to much or extensive usage and illustration of how QLC endurance simply is concerning.

FunBunny2 - Saturday, April 17, 2021 - link

"almost 88 TB per year for one or two muvies every day. When you add rest it comes to at least 100 TB a year."

well... such a knucklehead obviously doesn't have any time devoted to work, so s/he's either a rich real estate developer or a welfare queen/king. :)

ZolaIII - Sunday, April 18, 2021 - link

Why? If you work from 9 to 5 you don't have time to watch a movie or two (especially if you like watching movies)?

DracoDan - Monday, April 19, 2021 - link

They should sell a single SSD that can be user configured as a 4TB QLC, 2TB TLC, 1TB MLC, or 512GB SLC SSD. That way the end user has the ability to decide the tradeoff for space vs performance!

Billy Tallis - Tuesday, April 20, 2021 - link

Your math is off, possibly because of the common misunderstanding of the difference between the number of bits stored per memory cell and the number of possible voltage states required to represent those bits.

It would be 4TB as QLC, 3TB as TLC, 2TB as MLC or 1TB as SLC. See the Whole-Drive Fill test which illustrates that the 4TB drive has up to 1TB of SLC cache.

xJumpManx - Monday, May 3, 2021 - link

If you have a X570 Taichi do not buy sabrent ssd the mobo does not detect the drive.