Sabrent Rocket Q4 and Corsair MP600 CORE NVMe SSDs Reviewed: PCIe 4.0 with QLC

by Billy Tallis on April 9, 2021 12:45 PM EST

Advanced Synthetic Tests

Our benchmark suite includes a variety of tests that are less about replicating any real-world IO patterns, and more about exposing the inner workings of a drive with narrowly-focused tests. Many of these tests will show exaggerated differences between drives, and for the most part that should not be taken as a sign that one drive will be drastically faster for real-world usage. These tests are about satisfying curiosity, and are not good measures of overall drive performance. For more details, please see the overview of our 2021 Consumer SSD Benchmark Suite.

Whole-Drive Fill

Charts: Pass 1, Pass 2

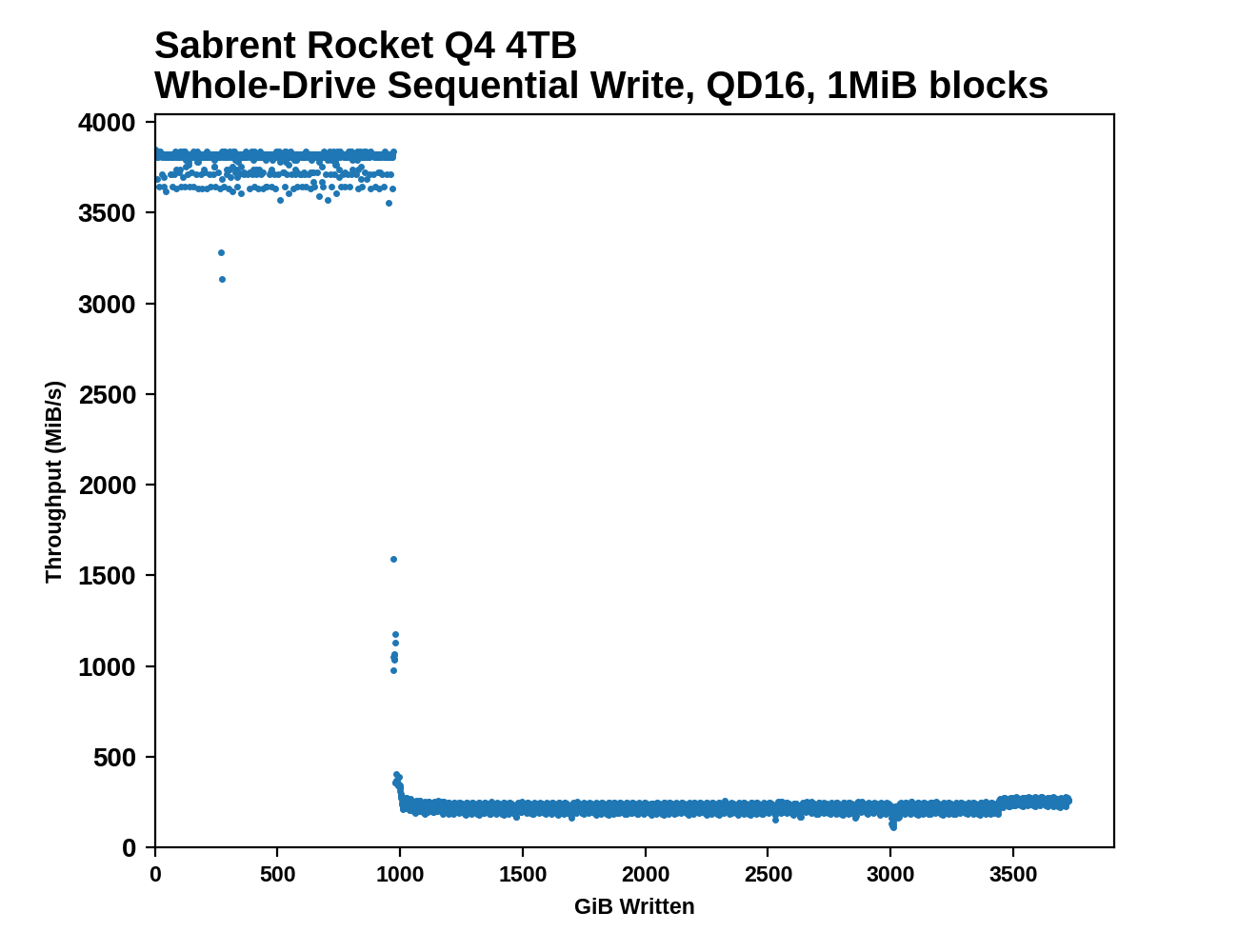

As is typical for QLC drives based on Phison controllers, the SLC caches on the Rocket Q4 and MP600 CORE are as large as possible: about 1/4 of the drive's usable capacity. Write speeds to the cache hover around or just above the limit of what a PCIe 3.0 x4 link could handle, and once the cache is full, performance drops to well below the limit of what a SATA link could handle.
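Both reference points in that paragraph can be checked with a little arithmetic. The sketch below is an illustration, not part of our test suite: it computes the payload bandwidth of a PCIe 3.0 x4 link (8 GT/s per lane, 128b/130b encoding) and a SATA 6Gb/s link (8b/10b encoding), and the largest possible SLC cache for a QLC drive, assuming every cell can be run at 1 bit instead of 4. Only line-encoding overhead is counted, so real-world ceilings are somewhat lower, and the function names are our own.

```python
# Back-of-the-envelope link and SLC-cache arithmetic (simplified: only
# line-encoding overhead is counted, so real-world ceilings are lower).

def link_payload_gb_per_s(gt_per_s: float, lanes: int,
                          payload_bits: int, encoded_bits: int) -> float:
    """Payload bandwidth in decimal GB/s after line encoding."""
    bits_per_s = gt_per_s * 1e9 * lanes * payload_bits / encoded_bits
    return bits_per_s / 8 / 1e9


def max_slc_cache_gb(usable_gb: float, bits_per_cell: int = 4) -> float:
    """Upper bound on SLC cache if every cell stores 1 bit instead of bits_per_cell."""
    return usable_gb / bits_per_cell


pcie3_x4 = link_payload_gb_per_s(8.0, 4, 128, 130)  # 128b/130b encoding
sata3 = link_payload_gb_per_s(6.0, 1, 8, 10)        # 8b/10b encoding

for name, usable_gb in [("MP600 CORE 2TB", 2000), ("Rocket Q4 4TB", 4000)]:
    print(f"{name}: SLC cache of at most ~{max_slc_cache_gb(usable_gb):.0f} GB")
print(f"PCIe 3.0 x4: {pcie3_x4:.2f} GB/s, SATA 6Gb/s: {sata3:.2f} GB/s")
```

The roughly 3.9 GB/s and 0.6 GB/s ceilings bracket the two regimes described above: cache writes near the first, post-cache writes well under the second.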

Charts: Average Throughput for Last 16 GB, Overall Average Throughput

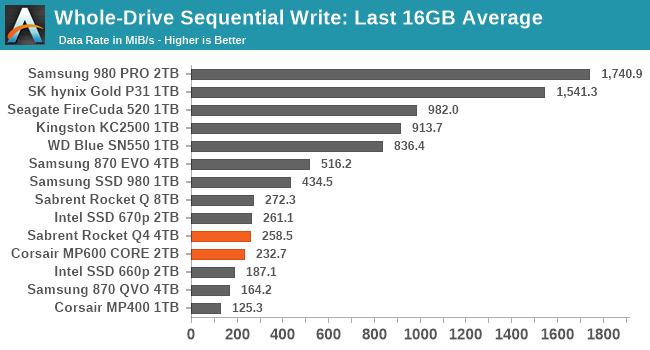

Both the 2TB MP600 CORE and the 4TB Rocket Q4 have about the same overall write speed, and they're in the middle of the pack among QLC drives: the older 8TB Rocket Q and the more recent Intel 670p both outperform the Phison E16 drives. All of the TLC drives naturally sustain higher write speeds than any of these QLC drives.
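Why does the overall average sit so much closer to the post-cache speed than to the cache speed? A simplified two-phase model helps: the first part of the fill runs at SLC-cache speed, the remainder at post-cache speed, and the overall average is total bytes divided by total time, not the arithmetic mean of the two speeds. The speeds and cache size below are hypothetical round numbers, not measurements from these charts, and the model ignores the cache shrinking or being flushed during the fill.

```python
def overall_average_mb_s(capacity_gb: float, cache_gb: float,
                         cache_speed_mb_s: float, post_cache_speed_mb_s: float) -> float:
    """Total data written divided by total time for a two-phase fill."""
    cache_mb = cache_gb * 1000
    rest_mb = (capacity_gb - cache_gb) * 1000
    seconds = cache_mb / cache_speed_mb_s + rest_mb / post_cache_speed_mb_s
    return capacity_gb * 1000 / seconds


# Hypothetical 2TB drive: 500 GB cache at 4000 MB/s, then 250 MB/s.
avg = overall_average_mb_s(2000, 500, 4000, 250)
print(f"overall average: {avg:.0f} MB/s")  # prints: overall average: 327 MB/s
```

Even with a cache covering a quarter of the drive, the slow phase takes about 98% of the total time, which is why post-cache speed largely decides the overall ranking.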

Working Set Size

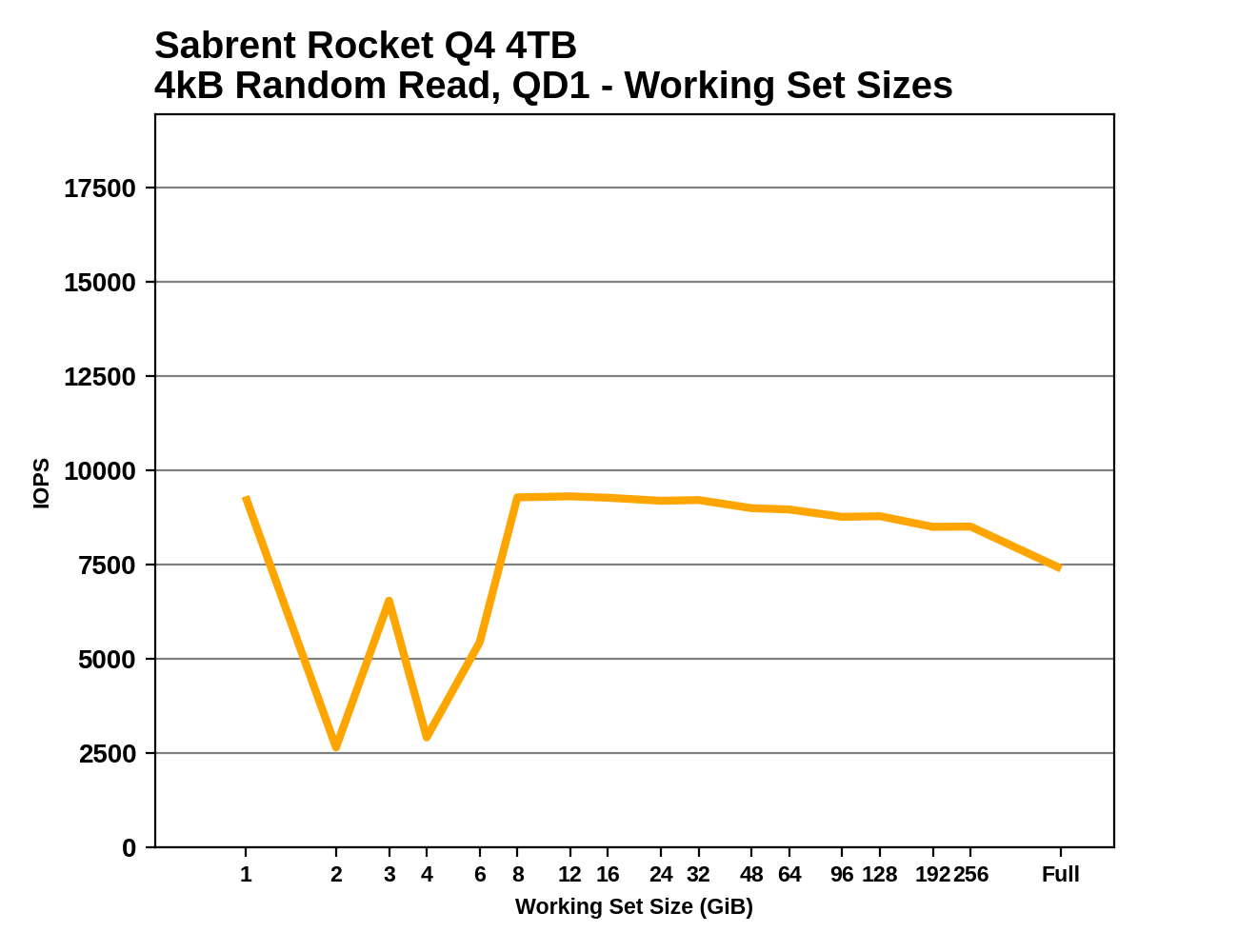

Both the Rocket Q4 and MP600 CORE have some disappointing performance drops during the working set size test, suggesting there's background work keeping the drives busy despite all the idle time they get before the test and between phases of the test. But aside from that, we see the expected trends: the MP600 CORE has flat overall performance on account of having 2GB of DRAM for its 2TB of NAND, while the Rocket Q4 shows a slight performance decline for large working sets because it's managing 4TB of NAND with the same 2GB of DRAM.
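The DRAM argument follows a common rule of thumb for flash translation layers: a flat page-level mapping table with one 4-byte entry per 4 KiB of flash needs roughly 1 GB of DRAM per 1 TB of NAND. The entry size and mapping granularity below are assumptions, not the E16's documented internals; the sketch only shows why 2 GB of DRAM can hold the whole table for 2TB but only about half of it for 4TB.

```python
GIB = 2**30


def mapping_table_bytes(capacity_bytes: int, page_bytes: int = 4096,
                        entry_bytes: int = 4) -> int:
    """Size of a flat logical-to-physical map (assumed 4 B per 4 KiB page)."""
    return capacity_bytes // page_bytes * entry_bytes


def dram_coverage(capacity_tb: float, dram_gib: float) -> float:
    """Fraction of the mapping table that fits in DRAM, capped at 1.0."""
    table = mapping_table_bytes(int(capacity_tb * 10**12))
    return min(1.0, dram_gib * GIB / table)


for name, capacity_tb in [("MP600 CORE", 2), ("Rocket Q4", 4)]:
    table_gib = mapping_table_bytes(capacity_tb * 10**12) / GIB
    print(f"{name}: table ~{table_gib:.2f} GiB, "
          f"2 GiB DRAM covers {dram_coverage(capacity_tb, 2):.0%}")
```

Under these assumptions, large working sets on the 4TB drive will sometimes miss in the cached portion of the map and need an extra NAND read, consistent with the slight decline seen above.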

Performance vs Block Size

Charts: Random Read, Random Write, Sequential Read, Sequential Write

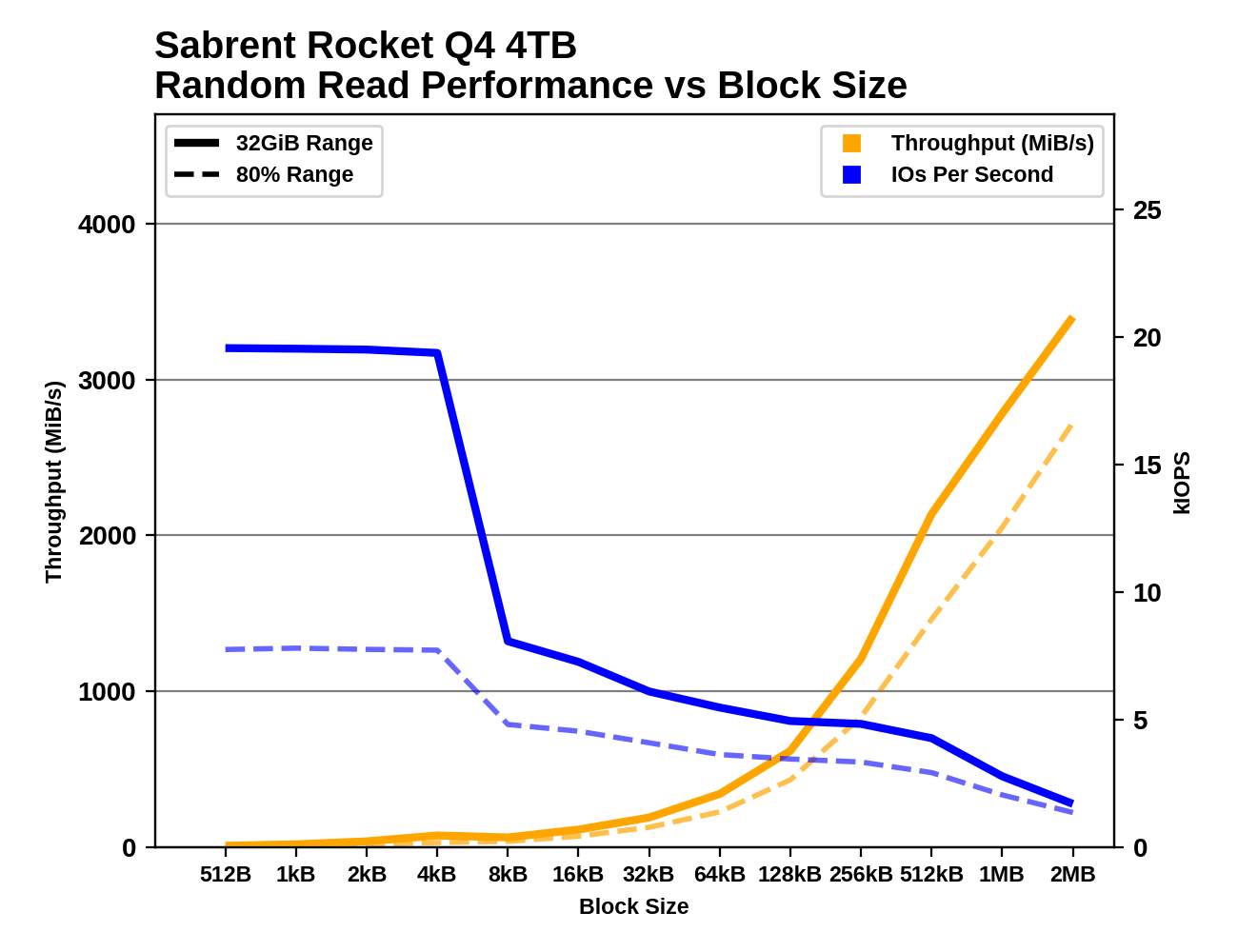

The two Phison E16 drives with QLC show similar patterns to the E12 QLC drives, but with substantial performance improvements in several places, most notably for random reads. These drives don't have any issues with block sizes smaller than 4kB, but during the random write test, performance drops at larger block sizes, where the SLC cache runs out.
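One way to see why cache exhaustion shows up only at the larger block sizes: if a random-write sweep runs from small to large blocks and spends a fixed time on each, throughput (IOPS × block size) rises with block size, so most of the data lands in the later phases and the cumulative total crosses the SLC cache size there. The sweep order, the 60-second phase length, the IOPS figures and the 500 GB cache below are all hypothetical, chosen only to illustrate the mechanism; they are not our test parameters or measured results.

```python
def first_block_size_past_cache(phases, phase_seconds: float, cache_gb: float):
    """Return the block size (KiB) during which cumulative writes exceed the cache.

    phases: (block_size_kib, iops) pairs in the order the sweep runs them.
    Returns None if the cache never fills.
    """
    written_gb = 0.0
    for block_kib, iops in phases:
        mb_per_s = iops * block_kib * 1024 / 1e6  # throughput = IOPS x block size
        written_gb += mb_per_s * phase_seconds / 1000
        if written_gb > cache_gb:
            return block_kib
    return None


# Hypothetical sweep: IOPS falls as blocks grow, but throughput still rises.
sweep = [(4, 300_000), (8, 200_000), (16, 120_000), (32, 70_000),
         (64, 40_000), (128, 22_000), (256, 12_000), (512, 6_500), (1024, 3_400)]
print(first_block_size_past_cache(sweep, phase_seconds=60, cache_gb=500))
```

With these made-up numbers the cache fills during the 64 KiB phase; a drive with a smaller cache (for example, one already partly full) would hit the wall earlier in the sweep.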

60 Comments

ZolaIII - Friday, April 9, 2021

Actually it's 5.6 years, but the TLC MP600 at the same capacity is rated for 8x that, or 44.8 years, for just a little more money. But seriously, buying a 1TB MP600, which will be enough capacity and will last 22.4 years by the same reasoning (vs 2.8 years for the CORE), makes a hell of a difference.

WaltC - Saturday, April 10, 2021

In far less than 22 years your entire system will have been replaced...;) I.e., for the useful life of the drive, you will never wear it out. The importance some people place on "endurance" is really weird. I have a 960 EVO NVMe with an endurance estimate of 75TB: the drive is three years old this month and served as my boot drive for two of those three years, and I've used 19.6TB of writes as of today. Rounding off, I have 55TB of write endurance remaining. That makes for an average of 6.5TB written per year, but the drive is no longer my boot/Win10-build install drive, so an average of 5TB per year as strictly a data drive is probably an overestimate; just for fun, let's call it 5TB of writes per year. That means I have *at least* 11 years of write endurance remaining for this drive, which would mean the drive would have lasted at least 14 years in daily use before wearing out. Does anyone think that 11 years from now I'll still be using that drive on a daily basis? I don't...;) The fact is that people worry needlessly about write endurance unless they are using these drives in some kind of mega heavy-use commercial setting. Write endurance estimates of 20-30 years are absurd, and when choosing a drive for your personal system such estimates should be ignored, as they have no meaning: the drives will be obsolete long before they wear out. So buy the drive performance at the price you want to pay, and don't worry about write endurance, as even 75TB is plenty for personal systems.

GeoffreyA - Sunday, April 11, 2021

It would be interesting to put today's drives through an endurance experiment and see whether their actual and advertised ratings square.

ZolaIII - Sunday, April 11, 2021

I write about 2TB per month, using my PC for productivity, gaming and transcoding, and that's still not too much. If I used it professionally for video, that number would be much higher (high-bandwidth mastering codecs). Hell, transcoding a single Blu-ray movie quickly (with the GPU, for the sake of making it HLG10+) will eat up to 150GB of writes, and that's not a rocket-science task to perform. By the way, it's not like the PCIe interface is going anywhere, and you can mount an old NVMe drive in a new machine.

Oxford Guy - Sunday, April 11, 2021

One can't choose performance with QLC. It's inherently slower. It also has inherently reduced longevity.

Remember, it has twice as many voltage states (which makes drift a much bigger issue) for only about a 33% density increase.

That's diminished returns.
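The voltage-state arithmetic behind that comment is easy to tabulate: a cell storing n bits has to distinguish 2^n charge levels, while capacity per cell grows only linearly with n. This sketch compares each one-bit step (TLC to QLC is 8 to 16 states for a 4/3 capacity gain) and extends to 5-bit PLC; it says nothing about how well any particular NAND copes with the tighter margins.

```python
NAMES = {1: "SLC", 2: "MLC", 3: "TLC", 4: "QLC", 5: "PLC"}


def step_up(bits: int) -> tuple[int, int, float]:
    """Voltage states before/after going to `bits` per cell, and the capacity gain."""
    before, after = 2 ** (bits - 1), 2 ** bits
    capacity_gain = bits / (bits - 1) - 1  # e.g. 4/3 - 1 = 0.333...
    return before, after, capacity_gain


for bits in range(2, 6):
    before, after, gain = step_up(bits)
    print(f"{NAMES[bits - 1]} -> {NAMES[bits]}: {before} -> {after} states, "
          f"+{gain:.0%} capacity per cell")
```

Every step doubles the number of states the controller must tell apart, while the capacity gain shrinks from +100% (SLC to MLC) to +25% (QLC to PLC).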

haukionkannel - Friday, April 9, 2021

Well, soon QLC may be found only in high-end top models, while the midrange and low end go to PLC or whatever... For SSD manufacturers it makes a lot of sense, because they save money that way. Profit!

nandnandnand - Saturday, April 10, 2021

5/6/8 bits per cell might be OK if NAND manufacturers found some magic sauce to increase endurance. There was research to that effect going on a decade ago: https://ieeexplore.ieee.org/abstract/document/6479...

TLC is not going away just yet, and they can just increase drive capacities to make it unlikely an average user will hit the limits.
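The capacity point can be made concrete with the usual first-order endurance estimate: host terabytes written before wear-out is roughly capacity times rated program/erase cycles divided by the write amplification factor, and years of service is that budget divided by annual host writes. The P/E-cycle and write-amplification values here are placeholders, not vendor specifications; the last two lines reuse figures quoted earlier in the thread (a 75TB rating with 19.6TB used at about 5TB per year, and 2TB per month applied to a hypothetical fresh 75TB-rated drive).

```python
def estimated_tbw(capacity_tb: float, pe_cycles: int, write_amplification: float) -> float:
    """First-order estimate of host terabytes written before wear-out."""
    return capacity_tb * pe_cycles / write_amplification


def years_remaining(rated_tbw: float, used_tb: float, tb_per_year: float) -> float:
    """Years until the rated endurance is consumed at a steady write rate."""
    return max(0.0, rated_tbw - used_tb) / tb_per_year


# Doubling capacity doubles the estimate (placeholder P/E and WAF values).
for capacity_tb in (1, 2, 4):
    print(capacity_tb, "TB ->", estimated_tbw(capacity_tb, 1000, 2.0), "TBW")

# Figures quoted in the comments above.
print(f"{years_remaining(75, 19.6, 5):.1f} years left at 5TB/year")
print(f"{years_remaining(75, 0, 2 * 12):.1f} years for a fresh 75TBW drive at 2TB/month")
```

The same rating lasts about 11 years at one commenter's write rate and about 3 at the other's, which is why both sides of this thread can be right for their own workloads.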

Samus - Sunday, April 11, 2021

When you consider how well perfected TLC is now that it has gone fully 3D, with the SLC cache and overprovisioning eliminating most of the performance/endurance issues, it makes you wonder if MLC will ever come back. It has almost completely disappeared, even from enterprise.

Oxford Guy - Sunday, April 11, 2021

3D manufacturing killed MLC. It made TLC viable.

There is no such magic bullet for QLC.

FunBunny2 - Sunday, April 11, 2021

"There is no such magic bullet for QLC."

Well... the same bullet, ver. 2, might work. That would require two steps:

- moving 'back' to an even larger node, assuming there's sufficient machinery for such a node available at scale

- getting two or three times the layers as TLC currently uses

I've no idea whether either is feasible, but I'm willing to bet both gonads that both, at least, are required.