Intel Core i7-11700K Review: Blasting Off with Rocket Lake

by Dr. Ian Cutress on March 5, 2021 4:30 PM EST

Posted in: CPUs, Intel, 14nm, Xe-LP, Rocket Lake, Cypress Cove, i7-11700K

CPU Tests: Encoding

One of the interesting elements on modern processors is encoding performance. This covers two main areas: encryption/decryption for secure data transfer, and video transcoding from one video format to another.

In the encrypt/decrypt scenario, how data is transferred, and by what mechanism, is pertinent to on-the-fly encryption of sensitive data, an approach that more modern devices are leaning on for software security.

Video transcoding as a tool to adjust the quality, file size and resolution of a video file has boomed in recent years, whether to provide the optimum video for a device before consumption or for game streamers who want to upload the output from their video camera in real time. As we move into live 3D video, this task will only get more strenuous, and it turns out that the performance of certain algorithms is a function of the input/output of the content.

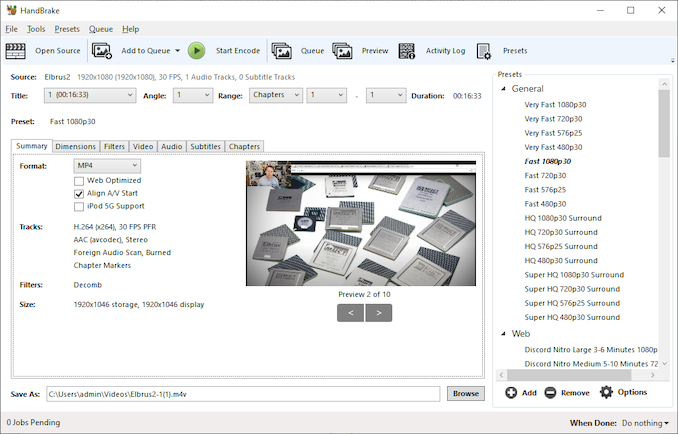

HandBrake 1.32

Video transcoding (both encode and decode) is a hot topic in performance metrics as more and more content is being created. The first consideration is the standard in which the video is encoded: it can be lossless or lossy, trade performance for file size, trade quality for file size, or do all of the above, and it can raise encoding rates to help accelerate decoding rates. Alongside Google's favorite codecs, VP9 and AV1, others are prominent: H264, the older codec, is practically everywhere and is designed to be optimized for 1080p video, while HEVC (or H.265) aims to provide the same quality as H264 at a lower file size (or better quality for the same size). HEVC is important as 4K is streamed over the air, meaning fewer bits need to be transferred for the same quality content. Other codecs designed for specific use cases are coming to market all the time.

HandBrake is a favored tool for transcoding, with later versions making heavy use of newer APIs to take advantage of co-processors such as GPUs. It is available on Windows via a graphical interface or through the command line; the latter makes our testing easier, as a redirection operator captures the console output.

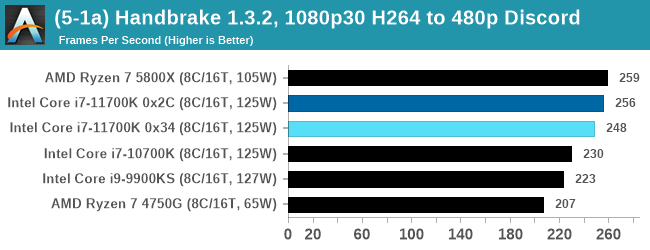

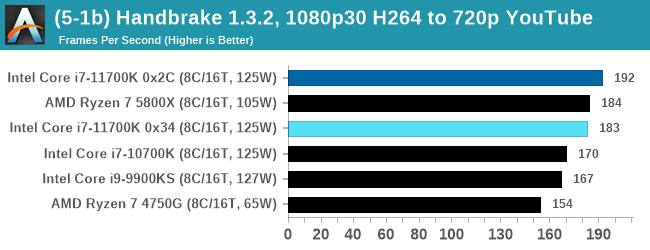

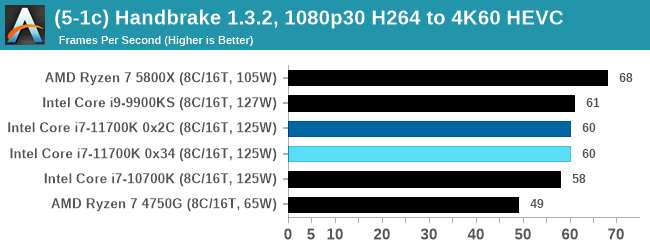

We take the compiled version of this 16-minute YouTube video about Russian CPUs at 1080p30 h264 and convert into three different files: (1) 480p30 ‘Discord’, (2) 720p30 ‘YouTube’, and (3) 4K60 HEVC.

Up to the final 4K60 HEVC, in CPU-only mode, the Intel CPU puts up some good gen-on-gen numbers.

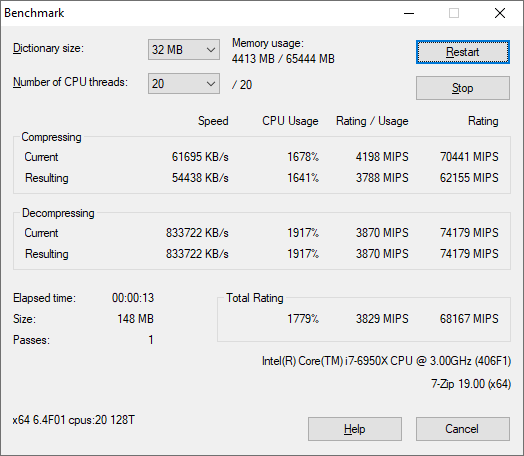

7-Zip 1900

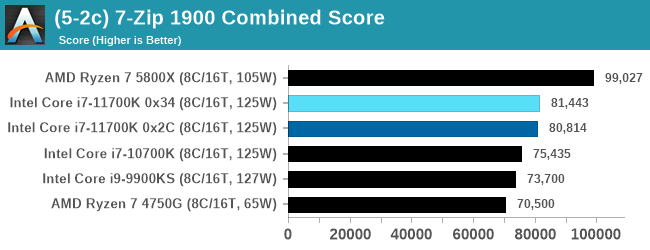

The first compression benchmark tool we use is the open-source 7-Zip, which typically offers good scaling across multiple cores. 7-Zip is the compression tool most cited by readers as one they would rather see benchmarks on, and the program includes a built-in benchmark tool for both compression and decompression.

The tool can either be run from inside the software or through the command line. We take the latter route as it is easier to automate, obtain results, and put through our process. The command line flags available offer an option for repeated runs, and the output provides the average automatically through the console. We direct this output into a text file and regex the required values for compression, decompression, and a combined score.
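The regex step described above can be sketched in Python. This is a minimal illustration, not the script used for the review: the sample text, the `Avr:`/`Tot:` column layout, and the function name `parse_scores` are assumptions made for the example, and the real console output may differ between 7-Zip versions.

```python
import re

# Hypothetical excerpt of the redirected console output. The column layout
# (usage, rating/usage, rating) is an assumption for illustration only.
SAMPLE_OUTPUT = """\
Avr:             780   4100  32000  |              790   4500  35600
Tot:             785   4300  33800
"""

# Averages line: three compression columns, a bar, three decompression columns.
AVR_PATTERN = re.compile(
    r"^Avr:\s+(\d+)\s+(\d+)\s+(\d+)\s+\|\s+(\d+)\s+(\d+)\s+(\d+)\s*$",
    re.MULTILINE,
)
# Totals line: three columns, the last being the combined rating.
TOT_PATTERN = re.compile(r"^Tot:\s+(\d+)\s+(\d+)\s+(\d+)\s*$", re.MULTILINE)


def parse_scores(text):
    """Pull compression, decompression and combined ratings out of the text."""
    avr = AVR_PATTERN.search(text)
    tot = TOT_PATTERN.search(text)
    if avr is None or tot is None:
        raise ValueError("summary lines not found in benchmark output")
    return {
        "compression": int(avr.group(3)),
        "decompression": int(avr.group(6)),
        "combined": int(tot.group(3)),
    }


if __name__ == "__main__":
    print(parse_scores(SAMPLE_OUTPUT))
```

Raising an error when the summary lines are missing, rather than returning zeros, keeps a failed run from silently entering the results.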

An increase over the previous generation, but AMD has a 25% lead.

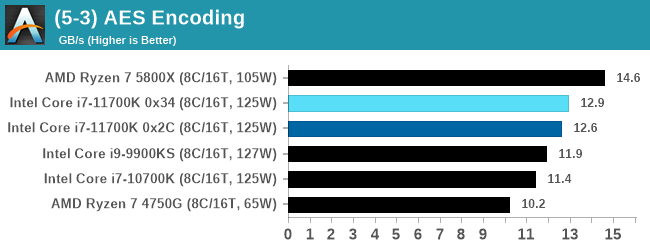

AES Encoding

Algorithms using AES coding have spread far and wide as a ubiquitous tool for encryption. This is another CPU-limited test, and modern CPUs have special AES pathways to accelerate their performance. We often see scaling in both frequency and cores with this benchmark. We use the latest version of TrueCrypt and run its benchmark mode over 1GB of in-DRAM data. Results shown are the GB/s average of encryption and decryption.

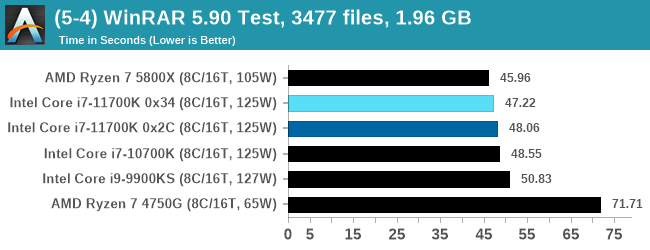

WinRAR 5.90

For the 2020 test suite, we move to the latest version of WinRAR in our compression test. WinRAR in some quarters is more user friendly than 7-Zip, hence its inclusion. Rather than use a benchmark mode as we did with 7-Zip, here we take a set of files representative of a generic stack:

- 33 video files, each 30 seconds, in 1.37 GB,

- 2834 smaller website files in 370 folders in 150 MB,

- 100 Beat Saber music tracks and input files, for 451 MB.

This is a mixture of compressible and incompressible formats. The results shown are the time taken to compress the file set. Due to DRAM caching, we run the test repeatedly for 20 minutes and take the average of the last five runs, when the benchmark is in a steady state.

For automation, we use AHK’s internal timing tools from initiating the workload until the window closes signifying the end. This means the results are contained within AHK, with an average of the last 5 results being easy enough to calculate.
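The last-five averaging is simple enough to show in a few lines. The sketch below uses Python rather than AHK purely for readability; the function name `steady_state_average` and the timings are hypothetical, not measured results.

```python
from statistics import mean


def steady_state_average(run_times, keep_last=5):
    """Average only the final `keep_last` timings, discarding warm-up runs."""
    if len(run_times) < keep_last:
        raise ValueError(f"need at least {keep_last} runs, got {len(run_times)}")
    return mean(run_times[-keep_last:])


if __name__ == "__main__":
    # Hypothetical timings in seconds: early runs are slower until the
    # file set is cached in DRAM.
    timings = [40.0, 36.0, 30.0, 30.0, 31.0, 29.0, 30.0]
    print(steady_state_average(timings))
```

Dropping the early runs matters because they include the cost of reading the file set from storage, which is not what the test is trying to measure.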

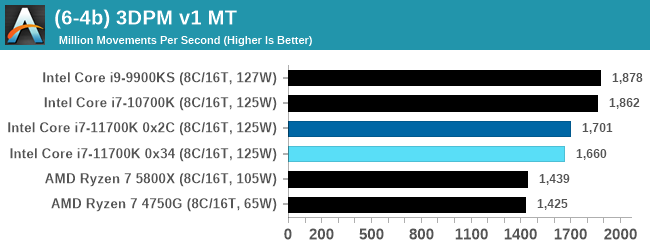

CPU Tests: Legacy and Web

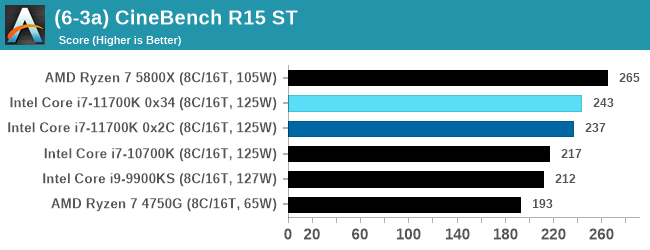

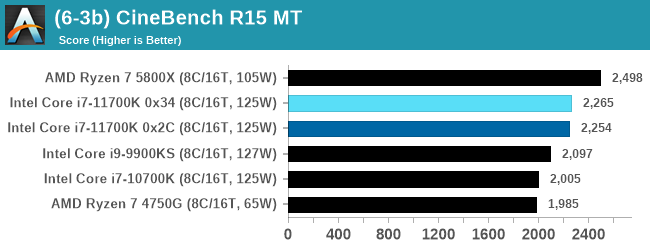

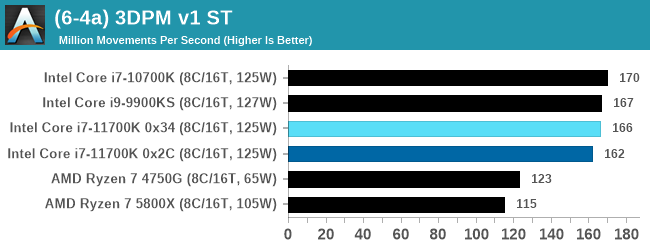

In order to gather data to compare with older benchmarks, we are still keeping a number of tests under our ‘legacy’ section. This includes all the former major versions of CineBench (R15, R11.5, R10) as well as x264 HD 3.0 and the first very naïve version of 3DPM v2.1. We won’t be transferring the data over from the old testing into Bench, as it would then be populated with 200 CPUs with only one data point each. Instead, it will fill up as we test more CPUs, like the other tests.

The other section here is our web tests.

Web Tests: Kraken, Octane, and Speedometer

Benchmarking using web tools is always a bit difficult. Browsers change almost daily, and the way the web is used changes even quicker. While there is some scope for advanced computation-based benchmarks, most users care about responsiveness, which requires a strong back-end that works quickly to deliver to the front-end. The benchmarks we chose for our web tests are essentially industry standards – or at least were once upon a time.

It should be noted that for each test, the browser is closed and reopened anew with a fresh cache. We use a fixed Chromium version for our tests with the update capabilities removed to ensure consistency.

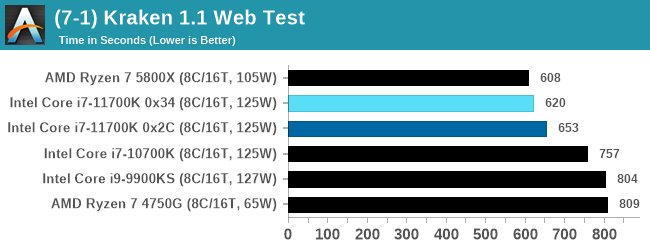

Mozilla Kraken 1.1

Kraken is a 2010 benchmark from Mozilla that runs a series of JavaScript tests. These tests are a little more involved than previous tests, looking at artificial intelligence, audio manipulation, image manipulation, JSON parsing, and cryptographic functions. The benchmark starts with an initial download of data for the audio and imaging, and then runs through 10 times, giving a timed result.

We loop through the 10-run test four times (so that’s a total of 40 runs), and average the four end-results. The result is given as time to complete the test, and we’re reaching a slow asymptotic limit with regard to the highest IPC processors.

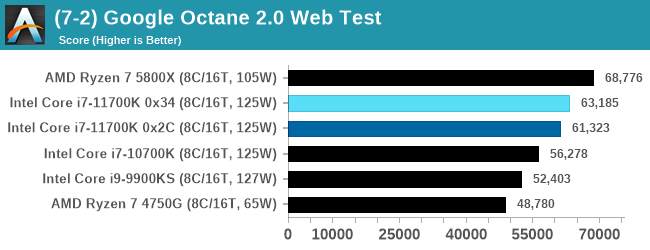

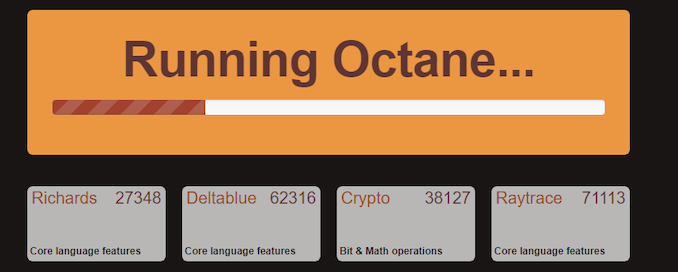

Google Octane 2.0

Our second test is also JavaScript based, but uses a lot more variation of newer JS techniques, such as object-oriented programming, kernel simulation, object creation/destruction, garbage collection, array manipulations, compiler latency and code execution.

Octane was developed after the discontinuation of other tests, with the goal of being more web-like than previous tests. It has been a popular benchmark, making it an obvious target for optimizations in the JavaScript engines. Ultimately it was retired in early 2017 due to this, although it is still widely used as a tool to determine general CPU performance in a number of web tasks.

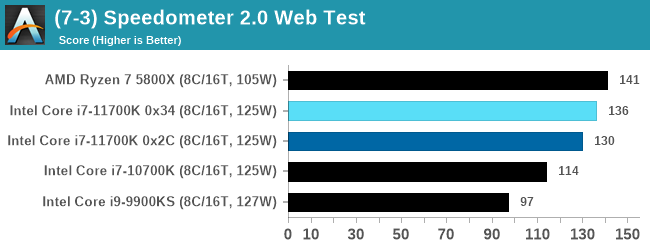

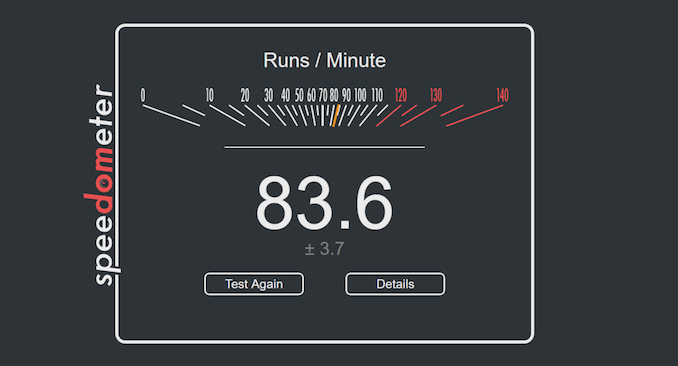

Speedometer 2: JavaScript Frameworks

Our newest web test is Speedometer 2, which is a test over a series of JavaScript frameworks to do three simple things: build a list, enable each item in the list, and remove the list. All the frameworks implement the same visual cues, but obviously apply them from different coding angles.

Our test goes through the list of frameworks, and produces a final score indicative of ‘rpm’, one of the benchmark's internal metrics.

We repeat the benchmark for a dozen loops, taking the average of the last five.

Legacy Tests

541 Comments

zzzxtreme - Sunday, March 7, 2021 - link

I wished you would have tested the XE graphics

Fman4 - Monday, March 8, 2021 - link

Am I the only one find that OP plugged 4 RAMs on an X570 ITX motherboard?

Fman4 - Monday, March 8, 2021 - link

@Dr. Ian Cutress

zodiacfml - Monday, March 8, 2021 - link

bored. just here to say this is unsurprising though this strongly reminds me of the time where AMD is releasing new, well designed CPUs but two process node generations behind intel. I think AMD was 32nm and 28nm while Intel is 22 and 14nm. most comments were really harsh with AMD but I reasoned that it is simply due to the manufacturing superiority of Intel

blppt - Monday, March 8, 2021 - link

Bulldozer and Piledriver are not the examples I would put up for "well designed".

GeoffreyA - Tuesday, March 9, 2021 - link

Still, within that mess, AMD did a pretty good job raising Bulldozer's IPC and cutting down its power each generation. But the foundation being fatally flawed, it was hopeless. I believe it taught them a lot about cutting power and so on, and when they poured that into Zen, we saw the result. Bulldozer was a fantastic training ground, if one looks at it humorously.

Oxford Guy - Tuesday, March 9, 2021 - link

No, AMD did an extremely poor job.

Firstly, Bulldozer had worse IPC than Phenom. No engineers with brains release a CPU to replace the entire line while giving it worse IPC. The trap of going for high clocks was a lesson shown to the entire industry via Netburst. AMD's engineers knew all about it, yet someone at the company decided to try Netburst 2.0.

Secondly, AMD was so sloppy and lazy that Piledriver shipped with a performance regression in AVX. It was worse to use AVX than to not use it. How incredibly incompetent can the company have been? It doesn't take a high IQ to understand that one doesn't ship broken AVX.

AMD then refused to replace Piledriver until Zen came out. It tinkered half-heartedly with APU rubbish and focused on pushing junk like Jaguar.

While it's true that the extreme failure of AMD (the construction core line) is due, to a large degree, to Intel abusing its monopoly to starve AMD of customers and cash — cash it needed to do R&D, one does not release a new chip with worse IPC and then very shortly after break AVX and refuse to stop feeding that junk to customers for many years. Just tinkering with Phenom would have been better (Phenom 3).

As for the foundation claim... we have no idea how well the CMT concept could have worked out with competent engineering. Remember, they literally broke AVX in the Piledriver revision that was supposed to fix Bulldozer enough to make it sellable. Operations caching could have been stronger. The L3 cache was almost as slow as main memory. The RAM controller was weak, just like Phenom's. Etc.

We paid for Intel's monopoly and we're still paying today. Only its monopoly and the lack of adequate competition is enabling the company to be so profitable despite failing so badly. Relying on two companies (or one 1/2, when it comes to R&D money ratio and other factors) to deliver adequate competition doesn't work.

Google and Microsoft = Google owns the clearnet. Apparently, they have some sort of cooperation agreement which helps to explain why Bing has such a tiny index and such a poor-quality search.

TSMC and Samsung = Can't meet demand.

AMD and Nvidia = Nvidia keeps breaking profit records while utterly failing to meet demand. Both companies refuse to stop making their cards attractive for mining and have for a long long time. AMD refused to adequately compete beyond the lower midrange (Polaris forever, or you can buy a 'console'!) for a long time, leaving us to pay through the nose for Nvidia's prices. AMD literally competes against the PC market by pushing the console scam. Consoles are gaming PCs in disguise and they're parasitic in multiple ways, including in terms of wafer allocations. AMD's many many years of refusal to compete with Nvidia beyond the Polaris price point caused so much pent-up demand and now the company can enjoy the artificially high price points from that. It let Nvidia keep raising prices to get consumers used to that. Now that it has finally been forced to improve the 'consoles' beyond the garbage-tier Jaguar CPU it has to offer a bit more value to the PC gaming market. And so, after all these years, we have something decent that one can't buy. I can go on about this so-called competition but why bother. People will go to the most extravagant lengths to excuse the problem of lack of adequate competition — like the person who recently said it's easier to create Google's empire from scratch than it is to make a competitive GPU and sell it as a third GPU company.

There are plenty of other areas in tech with inadequate competition, too.

blppt - Tuesday, March 9, 2021 - link

"AMD then refused to replace Piledriver until Zen came out. It tinkered half-heartedly with APU rubbish and focused on pushing junk like Jaguar."

To be fair, AMD had put a LOT of time, money and effort into Bulldozer/Piledriver, and were never a company with bottomless wells of cash to toss an architecture out immediately. Plus, Zen took a long time to design and finalize---thankfully, they made literally ALL the right moves in designing it, including hiring the brilliant Jim Keller.

I think if Zen had been another BD like failure, that would have been the almost the end of AMD in the cpu market (leaving them basically as ATI was) The consoles likely would have gone with Intel or ARM for their next iteration. AMD once again spent tons of money that they don't have as disposable income in designing Zen. Two failures in a row would have been disastrous.

Heck, the consoles might go with their own custom ARM design for PS6/Xbox(whatever) anyways.

GeoffreyA - Wednesday, March 10, 2021 - link

blppt. Agreed, that would have been the end of AMD.

Oxford Guy - Wednesday, March 10, 2021 - link

AMD did not put a lot of resources into fixing Bulldozer.

It shipped Piledriver with broken AVX and never bothered to replace Piledriver on the desktop until Zen.

Inexcusable. It shipped Steamroller and Excavator in cost-cut mode, cutting cores, cutting clocks, cutting the socket standards, and cutting cache. It used a dense library to save money by keeping the die small and used the inferior 28nm bulk process.

Pathetic in basically every respect.