AMD 3rd Gen EPYC Milan Review: A Peak vs Per Core Performance Balance

by Dr. Ian Cutress & Andrei Frumusanu on March 15, 2021 11:00 AM EST

Disclaimer June 25th: The benchmark figures in this review have been superseded by our second follow-up Milan review article, where we observe improved performance figures on a production platform compared to AMD’s reference system in this piece.

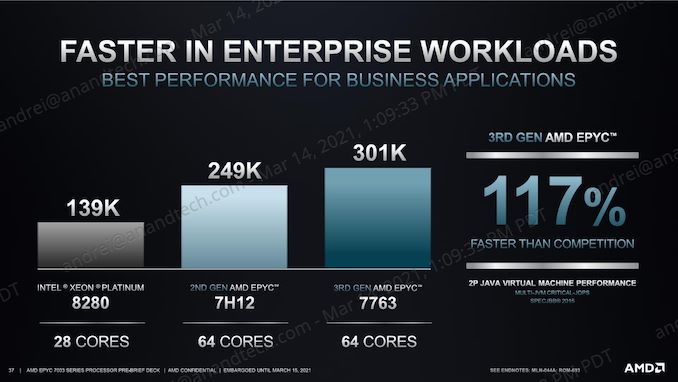

SPECjbb MultiJVM - Java Performance

Moving on from SPECCPU, we shift over to SPECjbb2015. SPECjbb is a benchmark developed from the ground up that aims to cover both Java performance and server-like workloads. From the SPEC website:

“The SPECjbb2015 benchmark is based on the usage model of a worldwide supermarket company with an IT infrastructure that handles a mix of point-of-sale requests, online purchases, and data-mining operations. It exercises Java 7 and higher features, using the latest data formats (XML), communication using compression, and secure messaging.

Performance metrics are provided for both pure throughput and critical throughput under service-level agreements (SLAs), with response times ranging from 10 to 100 milliseconds.”

The important thing to note here is that the workload is transactional in nature and mostly works on the data plane, between different Java virtual machines and thus threads.

We’re using the MultiJVM test method, in which all the benchmark components (the controller, server and client virtual machines) run on the same physical machine.

The JVM runtime we’re using is OpenJDK 15 on both x86 and Arm platforms. The sub-versions are not exactly the same, but they are the closest we could get:

EPYC & Xeon systems:

openjdk 15 2020-09-15

OpenJDK Runtime Environment (build 15+36-Ubuntu-1)

OpenJDK 64-Bit Server VM (build 15+36-Ubuntu-1, mixed mode, sharing)

Altra system:

openjdk 15.0.1 2020-10-20

OpenJDK Runtime Environment 20.9 (build 15.0.1+9)

OpenJDK 64-Bit Server VM 20.9 (build 15.0.1+9, mixed mode, sharing)

Furthermore, we’re configuring SPECjbb’s runtime settings with the following configurables:

SPEC_OPTS_C="-Dspecjbb.group.count=$GROUP_COUNT -Dspecjbb.txi.pergroup.count=$TI_JVM_COUNT -Dspecjbb.forkjoin.workers=N -Dspecjbb.forkjoin.workers.Tier1=N -Dspecjbb.forkjoin.workers.Tier2=1 -Dspecjbb.forkjoin.workers.Tier3=16"

Where N=160 for 2S Altra test runs, N=80 for 1S Altra test runs, N=112 for 2S Xeon, N=56 for 1S Xeon, and N=128 for 2S and 1S on the EPYC system. The 75F3 system had the worker count reduced to 64 and 32 for 2S/1S runs.
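As a rough illustration of how these worker counts slot into the option string, the sketch below assembles `SPEC_OPTS_C` for each configuration. The dictionary keys and the `spec_opts` helper are names invented for this example, and the group and injector counts are left as parameters because the text does not state their values.

```python
# Worker counts (N) per platform and socket count, as listed in the text.
# The platform labels used as keys are made up for this sketch.
WORKERS = {
    ("altra", 2): 160,
    ("altra", 1): 80,
    ("xeon", 2): 112,
    ("xeon", 1): 56,
    ("epyc", 2): 128,
    ("epyc", 1): 128,
    ("epyc-75f3", 2): 64,
    ("epyc-75f3", 1): 32,
}


def spec_opts(platform, sockets, group_count, ti_jvm_count):
    """Build the SPEC_OPTS_C string for one configuration (hypothetical helper)."""
    n = WORKERS[(platform, sockets)]
    return (
        f"-Dspecjbb.group.count={group_count} "
        f"-Dspecjbb.txi.pergroup.count={ti_jvm_count} "
        f"-Dspecjbb.forkjoin.workers={n} "
        f"-Dspecjbb.forkjoin.workers.Tier1={n} "
        "-Dspecjbb.forkjoin.workers.Tier2=1 "
        "-Dspecjbb.forkjoin.workers.Tier3=16"
    )


# group_count and ti_jvm_count here are placeholder values, not taken from the review.
print(spec_opts("epyc-75f3", 2, group_count=8, ti_jvm_count=1))
```

Keeping the counts in a single table makes it easy to see that only the worker count N changes between platforms, while the Tier2 and Tier3 values stay fixed.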

In terms of JVM options, we’re limiting ourselves to bare-bone options to keep things simple and straightforward:

EPYC & Altra systems:

JAVA_OPTS_C="-server -Xms2g -Xmx2g -Xmn1536m -XX:+UseParallelGC "

JAVA_OPTS_TI="-server -Xms2g -Xmx2g -Xmn1536m -XX:+UseParallelGC"

JAVA_OPTS_BE="-server -Xms48g -Xmx48g -Xmn42g -XX:+UseParallelGC -XX:+AlwaysPreTouch"

Xeon system:

JAVA_OPTS_C="-server -Xms2g -Xmx2g -Xmn1536m -XX:+UseParallelGC"

JAVA_OPTS_TI="-server -Xms2g -Xmx2g -Xmn1536m -XX:+UseParallelGC"

JAVA_OPTS_BE="-server -Xms172g -Xmx172g -Xmn156g -XX:+UseParallelGC -XX:+AlwaysPreTouch"

The reason the Xeon system is running a larger back-end heap is that we’re running a single NUMA node per socket, while for the Altra and EPYC we’re running four NUMA nodes per socket for maximised throughput. For the 2S figures this means we have 8 back-ends running for the Altra and EPYC and 2 for the Xeon, and naturally half of those numbers for the 1S benchmarks. The back-ends and transaction injectors are affinitised to their local NUMA node with numactl --cpunodebind and --membind, while the controller is called with --interleave=all.
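To make the NUMA layout concrete, here is a small sketch that derives the back-end count per configuration and the `numactl` prefix for each process. It assumes one back-end per NUMA node with node IDs numbered from 0, which matches the counts given above but is not spelled out in the text; the helper names are invented, and the actual Java launch command is deliberately left out.

```python
# NUMA nodes per socket as configured for each platform in the text.
NODES_PER_SOCKET = {"epyc": 4, "altra": 4, "xeon": 1}


def backend_count(platform, sockets):
    """One back-end per NUMA node (assumption consistent with the stated counts)."""
    return NODES_PER_SOCKET[platform] * sockets


def local_node_prefix(node):
    """numactl prefix pinning a back-end or injector to its local NUMA node."""
    return ["numactl", f"--cpunodebind={node}", f"--membind={node}"]


def controller_prefix():
    """numactl prefix for the controller, interleaving memory over all nodes."""
    return ["numactl", "--interleave=all"]


for platform in ("epyc", "altra", "xeon"):
    print(platform, backend_count(platform, 2), backend_count(platform, 1))

# Prefixes for a 2S EPYC run; node IDs 0..7 are assumed.
for node in range(backend_count("epyc", 2)):
    print(" ".join(local_node_prefix(node)))
print(" ".join(controller_prefix()))
```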

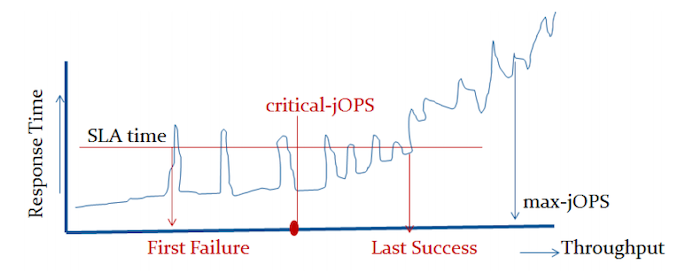

The max-jOPS and critical-jOPS result figures are defined as follows:

"The max-jOPS is the last successful injection rate before the first failing injection rate where the reattempt also fails. For example, if during the RT-curve phase the injection rate of 80000 passes, but the next injection rate of 90000 fails on two successive attempts, then the max-jOPS would be 80000."

"The overall critical-jOPS is computed by taking the geomean of the individual critical-jOPS computed at these five SLA points, namely:

• Critical-jOPSoverall = Geo-mean of (critical-jOPS@ 10ms, 25ms, 50ms, 75ms and 100ms response time SLAs)

During the RT curve building phase the Transaction Injector measures the 99th percentile response times at each step level for all the requests (see section 9) that are considered in the metrics computations. It then computes the Critical-jOPS for each of the above five SLA points using the following formula:

(first * nOver + last * nUnder) / (nOver + nUnder) "
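The two quoted definitions can be sketched in a few lines of Python. This is only an illustration, not SPEC's implementation: the tuple layout for the injection steps is invented, a rate that fails once but passes on the reattempt is assumed to count as passing, and `first`, `last`, `nOver` and `nUnder` are treated as opaque inputs because the quoted excerpt does not define them.

```python
import math

# The five response-time SLA points named in the quoted definition.
SLA_POINTS_MS = (10, 25, 50, 75, 100)


def max_jops(steps):
    """Return the last passing injection rate before the first rate that
    fails on both the initial attempt and the reattempt.

    `steps` is a list of (rate, first_ok, retry_ok) tuples in increasing
    rate order; this layout is an assumption of the sketch.
    """
    last_ok = None
    for rate, first_ok, retry_ok in steps:
        if first_ok or retry_ok:
            last_ok = rate
        else:
            break
    return last_ok


def critical_jops_at_sla(first, last, n_over, n_under):
    """Literal transcription of the quoted per-SLA formula."""
    return (first * n_over + last * n_under) / (n_over + n_under)


def overall_critical_jops(per_sla):
    """Geometric mean of the critical-jOPS values at the five SLA points."""
    values = [per_sla[ms] for ms in SLA_POINTS_MS]
    return math.exp(sum(math.log(v) for v in values) / len(values))


# The example from the quoted definition: 80000 passes, 90000 fails twice.
print(max_jops([(80000, True, False), (90000, False, False)]))  # → 80000
```

Because the overall figure is a geometric mean, a weak result at the strictest SLA point pulls it down more than an arithmetic mean would.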

That’s a lot of technicalities to explain an admittedly complex benchmark, but the gist of it is that max-jOPS represents the maximum transaction throughput of a system until further requests fail, and critical-jOPS is an aggregate geomean transaction throughput within several levels of guaranteed response times, essentially different levels of quality of service.

Beyond the result figures, the benchmark keeps detailed track of timings of responses and tracks a few important statistical data-points across a response-time curve, as follows:

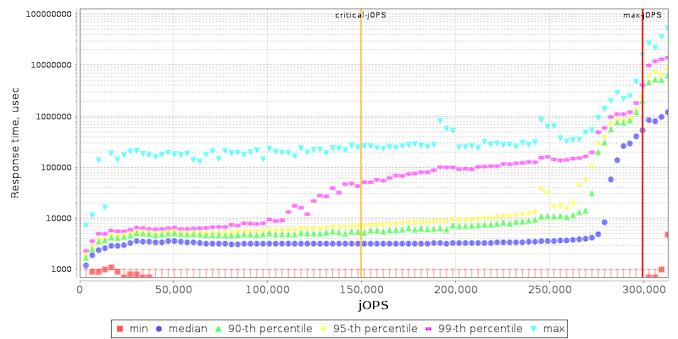

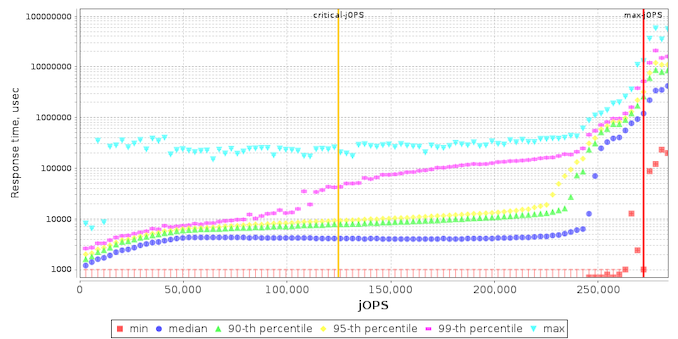

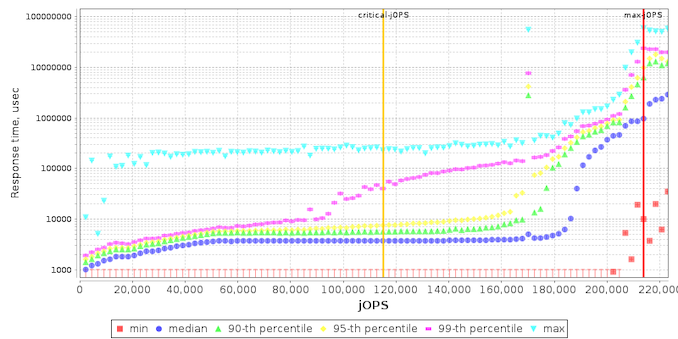

In terms of the response curves of the new Milan 7763 part, the general behaviour doesn’t look that much different to the 7742 other than a weird discrepancy at low load.

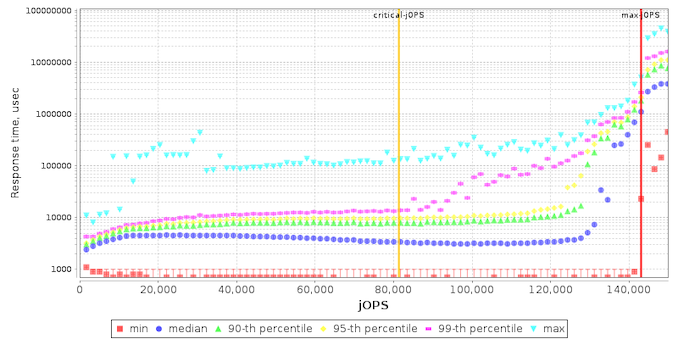

The 75F3 part is interesting: because it focuses more on per-core performance, it tightens the response curve, with the -critical performance score being closer to the -max capacity of the system.

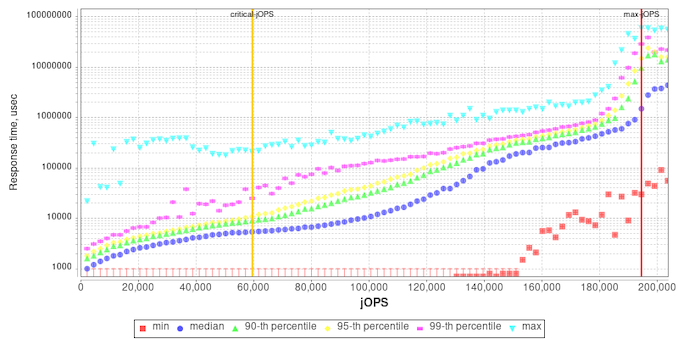

I included the Intel and Altra graphs for context.

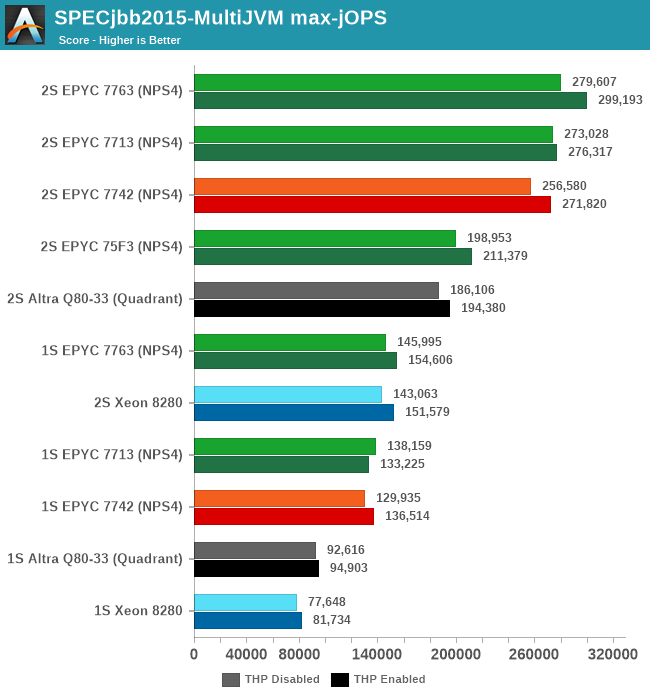

In terms of the -max-jOPS achieved by each system in our configuration, the new Zen3 parts fare quite well. The 7763 outperforms the 7742 by +9%, while the 7713 also outperforms the 7742 by +6.4%.

Again, it is very interesting to see the 75F3’s maximum throughput reach 71% of the top SKU’s performance in such scale-out workloads, even though it has only half the cores available.
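As a back-of-the-envelope check on what that implies per core, the snippet below divides the throughput fraction by the core fraction. It is simple arithmetic on the two figures quoted above, not a measured per-core result, and the function name is made up for illustration.

```python
def per_core_ratio(throughput_fraction, core_fraction):
    """Relative throughput per core versus the reference part."""
    return throughput_fraction / core_fraction


# 71% of the top SKU's max-jOPS with half of its cores.
print(f"{per_core_ratio(0.71, 0.5):.2f}x")  # → 1.42x
```

In other words, each 75F3 core is delivering roughly 1.4 times the max-jOPS throughput of a core in the top SKU in this test.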

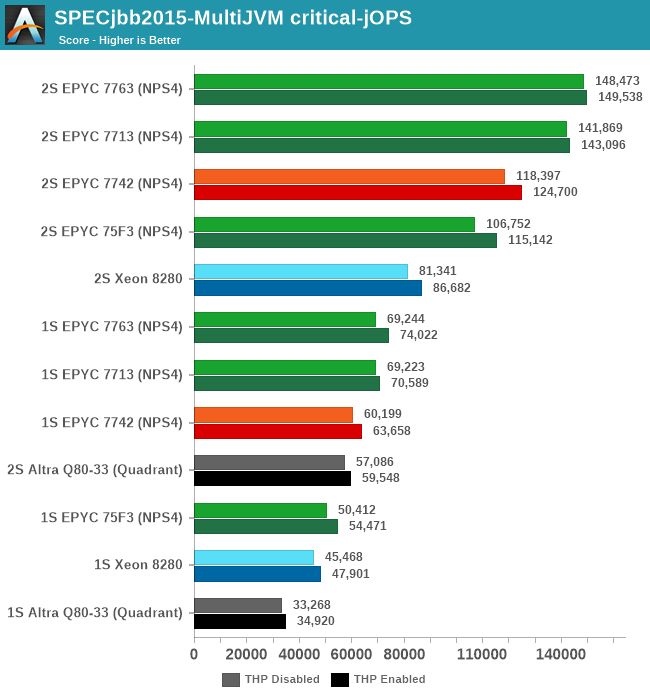

The -critical-jOPS figure is probably the more important metric for SPECjbb given that it covers SLA scenarios, and here the new Milan parts are faring extremely well. The 7763 outperforms the 7742 by +25%, and the 7713 is also not far behind with +19.7%.

The 75F3 is also doing amazingly well, keeping up with the higher core-count parts.

Against the competition, our own and AMD’s figures differ a bit due to different settings; however, we’re still seeing the new Milan top SKU outperform the 8280 by +82% in performance.

Generally speaking, the generational improvements over Rome in the -critical-jOPS figure of SPECjbb are a more reassuring result compared to the other peak full-load performance metrics we’ve seen on SPEC CPU. This actually corresponds to the power behaviour of the new chips, with the new Zen3 cores offering notably better per-core performance compared to the Rome predecessor, at least up until the new parts hit a power envelope wall where performance improvements become more limited.

120 Comments

mode_13h - Saturday, March 20, 2021 - link

Okay, thanks for confirming with them.

mode_13h - Saturday, March 20, 2021 - link

It's not the easiest thing to confirm with a test, since you'd have to come along behind the writer and observe that a write that SHOULD still be in cache isn't.

CBeddoe - Monday, March 15, 2021 - link

I'm excited by AMD's continuing design improvements. Can't wait to see what happens with the next node shrink. Intel has some catching up to do.

Ppietra - Tuesday, March 16, 2021 - link

Can someone please explain how is it possible that the power consumption of the all package is so much higher than the power consumption of the actual cores doing the work?

Spunjji - Friday, March 19, 2021 - link

Because the I/O die is running on an older 14nm process and is servicing all of the cores. In a 64-core CPU, the per-core power use of the I/O die is less than 2W. Still too much, of course, but in context not as obscene as it looks when you look at the total power.

Elstar - Tuesday, March 16, 2021 - link

Lest it go unsaid, I really appreciate the "compile a big C++ project" benchmark (i.e. LLVM). Thank you!

Spunjji - Tuesday, March 16, 2021 - link

"To that end, all we have to compare Milan to is Intel’s Cascade Lake Xeon Scalable platform, which was the same platform we compared Rome to."

Says it all, really. Good work AMD, and cheers to the team for the review!

Hifihedgehog - Tuesday, March 16, 2021 - link

Sysadmin: Ram? Rome?

AMD: Milan, darling, Milan...

Ivan Argentinski - Tuesday, March 16, 2021 - link

Congrats for going more in-depth for the per-core performance! For many enterprise buyers, this is the most (only?) important metric. I do suspect, that in this regard, the 8 core 72F3 will actually be the best 3rd gen EPYC!

But to better understand this, we need more test and per-core comparisons. I would suggest comparing:

* All current AMD fast/frequency optimized CPUs - EPYC 72F3, 73F3, ...

* Previous gen AMD fast/frequency CPUs like EPYC 7F32, ...

* Intel Frequency optimized CPUs like Xeon Gold 6250, 6244, ...

The only metric that matters is per-core performance under full *sustained* load.

Exploring the dynamic TDP of AMD EPYC 3rd gen is also an interesting option. For example, I am quite curious about configuring 72F3 with 200W instead of the default 180W.

Andrei Frumusanu - Saturday, March 20, 2021 - link

If we get more SKUs to test, I'll be sure to do so.