AMD Ryzen 9 5980HS Cezanne Review: Ryzen 5000 Mobile Tested

by Dr. Ian Cutress on January 26, 2021 9:00 AM EST

CPU Tests: Synthetic and SPEC

Most people in our industry have a love/hate relationship with synthetic tests. On the one hand, they’re easy to use and often good for quick summaries of performance; on the other, most of the time the tests aren’t related to any real software. Synthetic tests are often very good at burrowing down to a specific set of instructions and extracting the maximum performance from them. Due to requests from a number of our readers, we have the following synthetic tests.

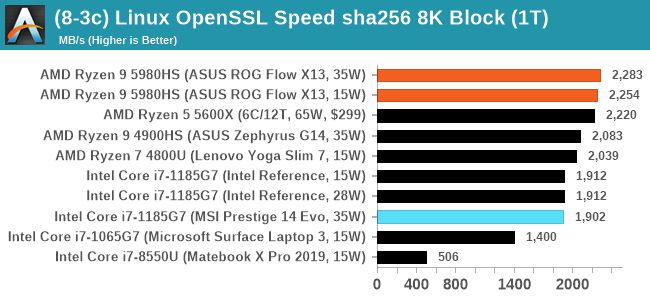

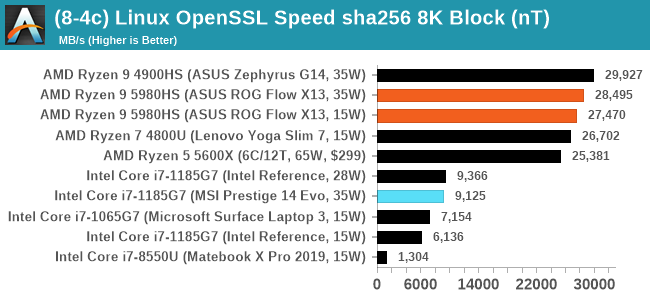

Linux OpenSSL Speed: SHA256

One of our readers reached out in early 2020 and stated that he was interested in looking at OpenSSL hashing rates in Linux. Luckily OpenSSL in Linux has a command called ‘speed’ that allows the user to determine how fast the system is for any given hashing algorithm, as well as for signing and verifying messages.

OpenSSL offers a lot of algorithms to choose from, and based on a quick Twitter poll, we narrowed it down to the following:

- rsa2048 sign and rsa2048 verify

- sha256 at 8K block size

- md5 at 8K block size

We run each of these tests in single-threaded and multithreaded modes. All the graphs are in our benchmark database, Bench, and we use the sha256 results in published reviews.
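As a rough illustration of what a hashing throughput test measures, the sketch below times SHA-256 over 8K blocks using Python's `hashlib`. It is not the OpenSSL ‘speed’ tool and will not reproduce its numbers; the function name `sha256_throughput` and the default duration are assumptions made for this example.

```python
import hashlib
import time

BLOCK_SIZE = 8192  # 8K block size, matching the test configuration above


def sha256_throughput(duration_s=1.0, block_size=BLOCK_SIZE):
    """Hash fixed-size blocks for roughly duration_s seconds; return bytes/second."""
    block = bytes(block_size)  # zero-filled buffer; contents do not change SHA-256 speed
    hashed = 0
    start = time.perf_counter()
    deadline = start + duration_s
    while time.perf_counter() < deadline:
        hashlib.sha256(block).digest()
        hashed += block_size
    elapsed = time.perf_counter() - start
    return hashed / elapsed


if __name__ == "__main__":
    rate = sha256_throughput()
    print(f"sha256 @ {BLOCK_SIZE} bytes: {rate / 1e6:.1f} MB/s (single thread)")
```

This covers the single-threaded case only; a multithreaded variant would run one such loop per worker process and sum the rates.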

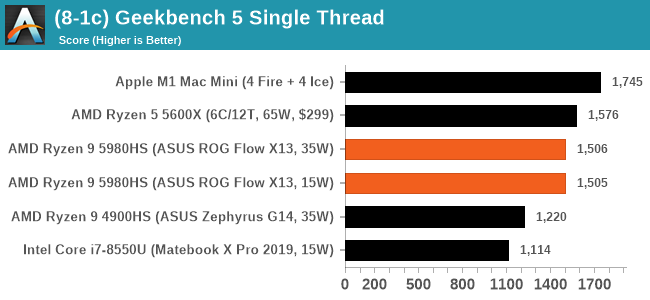

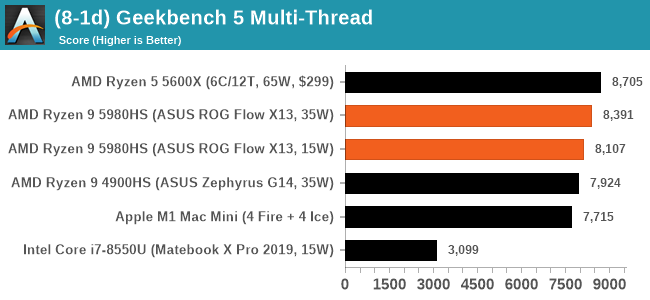

GeekBench 5

As a common tool for cross-platform testing between mobile, PC, and Mac, GeekBench is the ultimate exercise in synthetic testing across a range of algorithms looking for peak throughput. Tests include encryption, compression, fast Fourier transform, memory operations, n-body physics, matrix operations, histogram manipulation, and HTML parsing.

Unfortunately we are not going to include the Intel GB5 results in this review, although you can find them inside our benchmark database. The reason is the AVX512 acceleration of GB5's AES test: it causes such a substantial performance difference in single threaded workloads that this one sub-test completely skews any of Intel's results to the point of literal absurdity. AES is not that important of a real-world workload, so the fact that it obscures the rest of GB5's sub-tests makes overall score comparisons to Intel CPUs with AVX512 support useless for drawing any conclusions. This is also important for future comparisons of Intel CPUs, such as Rocket Lake, which will also support AVX512. Users should ask to see the sub-test scores, or a version of GB5 where the AES test is removed.

To clarify the point on AES: the Core i9-10900K scores 1878 in the AES test, while the Core i7-1185G7 scores 4149. While we're not necessarily against the use of accelerators, especially given that the future is going to depend on how many of these accelerators there are and how efficiently they work (we can argue whether AVX-512 is efficient compared to dedicated silicon), the issue stems from a combined test like GeekBench condensing several different (around 20) tests into a single number from which conclusions are meant to be drawn. If one test gets accelerated enough to skew the end result, then rather than being a representation of a set of tests, that one single test becomes the conclusion at the expense of the others, and it's at that point the test should be removed and put on its own. GeekBench 4 had memory tests that were removed for GeekBench 5 for similar reasons, and should there be a sixth GeekBench iteration, our recommendation is that the cryptography test is removed for similar reasons. There are hundreds of cryptography algorithms to optimize for, but when a popular test focuses on a single algorithm, that algorithm becomes an optimization target, and the result becomes meaningless when the broader ecosystem overwhelmingly uses other cryptography algorithms.
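To make the skew argument concrete, the sketch below compares a composite score with and without one accelerated sub-test. It assumes a plain geometric mean over equally weighted sub-tests, which is a simplification and not necessarily how GeekBench combines its results. Apart from the two AES scores quoted above, every number and name here is hypothetical.

```python
import math


def geomean(values):
    """Geometric mean of positive numbers."""
    values = list(values)
    return math.exp(sum(math.log(v) for v in values) / len(values))


def composite(scores, exclude=()):
    """Composite over sub-test scores, optionally leaving some sub-tests out."""
    return geomean(v for name, v in scores.items() if name not in exclude)


# Hypothetical sub-test scores: two chips that are identical except for AES.
# Only the AES values (1878 and 4149) come from the text above.
baseline = {"aes": 1878, "compression": 1300, "fft": 1400, "html": 1350}
accelerated = dict(baseline, aes=4149)

if __name__ == "__main__":
    for label, scores in (("baseline", baseline), ("accelerated", accelerated)):
        print(f"{label:12s} all: {composite(scores):7.1f}  "
              f"without AES: {composite(scores, exclude={'aes'}):7.1f}")
```

With AES excluded, the two hypothetical chips score identically, which is the kind of comparison that sub-test scores or an AES-free run would allow.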

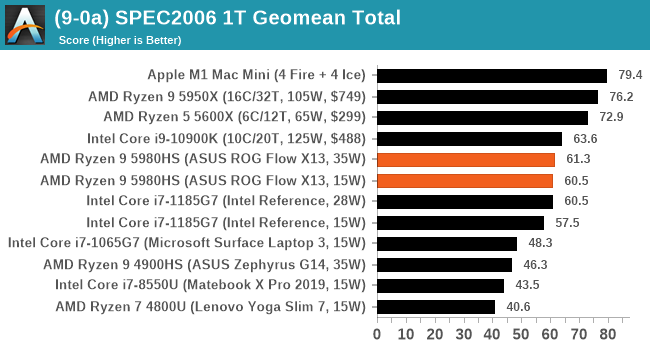

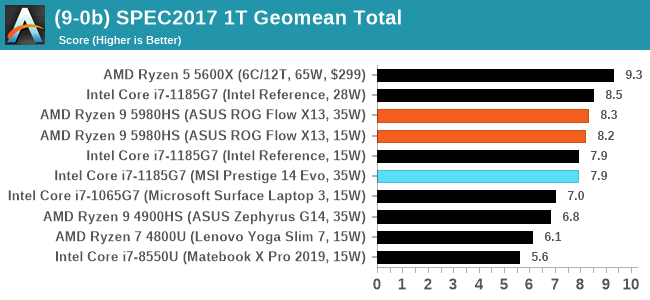

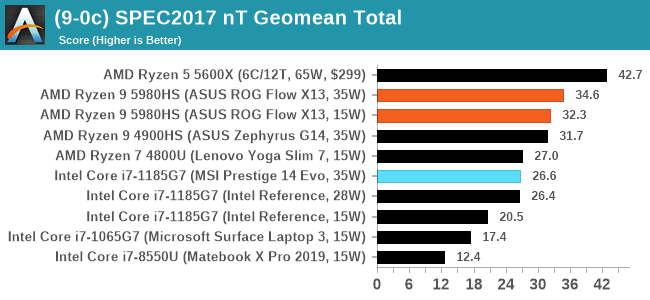

CPU Tests: SPEC

SPEC2017 and SPEC2006 are series of standardized tests used to compare overall performance across different systems, architectures, microarchitectures, and setups. The code has to be compiled, and then the results can be submitted to an online database for comparison. The suites cover a range of integer and floating point workloads, and can be heavily optimized for each CPU, so it is important to check how the benchmarks are being compiled and run.

We run the tests in a harness built through Windows Subsystem for Linux, developed by our own Andrei Frumusanu. WSL has some odd quirks, with one test not running due to a WSL fixed stack size, but it is good enough for like-for-like testing. SPEC2006 is deprecated in favor of 2017, but remains an interesting comparison point in our data. Because our scores aren’t official submissions, as per SPEC guidelines we have to declare them as internal estimates on our part.

For compilers, we use LLVM for both the C/C++ and Fortran tests, and for Fortran we’re using the Flang compiler. The rationale for using LLVM over GCC is better cross-platform comparisons to platforms that only have LLVM support, as well as future articles where we’ll investigate this aspect more. We’re not considering closed-source compilers such as MSVC or ICC.

clang version 10

clang version 7.0.1 (ssh://git@github.com/flang-compiler/flang-driver.git 24bd54da5c41af04838bbe7b68f830840d47fc03)

-Ofast -fomit-frame-pointer

-march=x86-64

-mtune=core-avx2

-mfma -mavx -mavx2

Our compiler flags are straightforward, with basic -Ofast and relevant ISA switches to allow for AVX2 instructions. We decided to build our SPEC binaries on AVX2, which makes Haswell the oldest architecture we can test before the binaries fail to run. This also means we don’t have AVX512 binaries, primarily because in order to get the best performance, the AVX-512 intrinsics should be written by a proper expert, as with our AVX-512 benchmark. All of the major vendors, AMD, Intel, and Arm, support the way in which we are testing SPEC.
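Since the binaries are built with AVX2 and FMA enabled, an x86 host has to expose those feature flags before the binaries will run. Below is a minimal sketch of such a pre-flight check under Linux or WSL, assuming the usual `flags` line in `/proc/cpuinfo`. The helper names and the `example.c` file name are hypothetical and are not part of the harness described above.

```python
# Compiler flags as listed above.
FLAGS = ["-Ofast", "-fomit-frame-pointer", "-march=x86-64",
         "-mtune=core-avx2", "-mfma", "-mavx", "-mavx2"]

# CPU features implied by -mavx, -mavx2 and -mfma.
REQUIRED_FEATURES = {"avx", "avx2", "fma"}


def cpu_features(cpuinfo_text):
    """Return the feature flags from the first 'flags' line of /proc/cpuinfo text."""
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "flags":
            return set(value.split())
    return set()


def missing_features(cpuinfo_text, required=REQUIRED_FEATURES):
    """Features the binaries need that the host does not report."""
    return set(required) - cpu_features(cpuinfo_text)


def compile_command(source, output, compiler="clang"):
    """Build an argument list for compiling one source file with the flags above."""
    return [compiler, *FLAGS, source, "-o", output]


if __name__ == "__main__":
    with open("/proc/cpuinfo") as f:
        missing = missing_features(f.read())
    if missing:
        print("host lacks:", ", ".join(sorted(missing)))
    else:
        print(" ".join(compile_command("example.c", "example")))
```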

To note, the requirements for the SPEC licence state that any benchmark results from SPEC have to be labelled ‘estimated’ until they are verified on the SPEC website as a meaningful representation of the expected performance. This is most often done by the big companies and OEMs to showcase performance to customers; however, it is quite over the top for what we do as reviewers.

For each of the SPEC targets we are doing, SPEC2006 rate-1, SPEC2017 speed-1, and SPEC2017 speed-N, rather than publish all the separate test data in our reviews, we are going to condense it down into a few interesting data points. The full per-test values are in our benchmark database.

218 Comments


Tomatotech - Thursday, January 28, 2021 - link

Wrong. Check Wikipedia - 2013 MacBook Pros were available from Apple with 1TB SSDs. They’re still good even now as you can replace that 2013 Apple SSD with a modern NVMe SSD for a huge speed up.

And yes, Apple supported the NVMe standard before it was even a standard. It wasn’t finalised by 2013, so these Macs need a $10 hardware adaptor in the M.2 bay to physically take the NVMe drive, but electronically and on the software level NVMe is fully supported.

Kuhar - Thursday, January 28, 2021 - link

Sorry, but you are wrong or don't understand what stock means. Apple's own website states clearly that the 2013 MBP had a STOCK 256 GB SSD with an OPTION to upgrade to as high as a 1 TB SSD. So maybe your Apple lies again and wiki is ofc correct. On top of that: bragging about a 1 TB SSD when in the PC world you could get a 2 TB SSD in top machines isn't really something to brag about.

GreenReaper - Saturday, January 30, 2021 - link

Stock means that they were in stock, available from the manufacturer for order. Which is fair to apply in this case. Most likely they didn't have any SSD in them until they were configured upon sale.

What you're thinking of is base. At the same time, it's fair to call out as an unfair comparison, because they are cited as the standard/base configuration of this model, where it wasn't for the MBP.

grant3 - Wednesday, January 27, 2021 - link

1. Worrying about what was standard 7 years ago as if it's relevant to what people need today is silly.

2. TB SSDs were probably about $600-$700 in 2013. If you spent that much to upgrade your MBP, good for you, that doesn't mean it's the best use of funds for everyone.

Makste - Wednesday, January 27, 2021 - link

It is a good review, thank you Dr. Ian.

My concern is, and has always been, the fact that CPU manufacturers make beefier iGPUs on higher core count CPUs, which is not right/fair in my view, because higher core count CPUs and most especially the H series are most of the time bundled with a dGPU, while lower core count CPUs may or may not be bundled with a dGPU. I think lower core count APUs would sell much better if the iGPUs on lower core count CPUs were made beefier, because they have enough die space for this, I suppose, in order to satisfy clients who can only afford lower core count CPUs which are not paired with a dGPU. It's a bit of a waste of resources in my view to give 8 Vega cores to a Ryzen 9 5980HS which is going to be paired with a dGPU and only 6 Vega cores to a Ryzen 3 5300 whose prospects of being paired with a dGPU are limited.

I don't know what you think about this, but if you agree, then it'd be helpful if you managed to get them to reconsider. Thanks.

Spunjji - Thursday, January 28, 2021 - link

I get your point here, and I agree that it would be a nice thing to have - a 15W 4-core CPU with fully-enabled iGPU would be lovely. Unfortunately it doesn't make much sense from AMD's perspective - they only have one chip design, and they want to get as much money as possible for the fully-enabled ones. It would also add a lot of complexity to their product lineup to have some models that have more CPU cores and fewer GPU CUs, and some that reversed the balance. It's easier for them just to have one line-up that goes from worst to best. :/

Makste - Thursday, January 28, 2021 - link

Yes. It could be that they are sticking with their original plan from the time they decided to introduce iGPUs to x86. But I don't see why they can't make an overhaul to their offerings now that they are also on top. They could still offer 8 Vega dies from the beginning of the series to the top most 8 core CPU offering. And those would be the high end offerings.

Then, the other mid and low end variants would be those without the fully enabled Vega dies. This way, nothing would be wasted and Cezanne would then have a multitude of offerings. I believe people, even at this moment, would like to own a piece of Cezanne, be it 3 cores or 5 cores. I think it's the customer to decide what is valuable and what is not valuable. Black and white thinking won't do (that cores will only sell if they are in even numbers). They should simply offer everything they have, especially since their design can allow them to do so and more so now that there are supply constraints.

Spunjji - Friday, January 29, 2021 - link

The problem is that it's not just about what the end-user might want. AMD's customers are the OEMs, and the OEMs don't want to build a range of laptops with several dozen CPU options in it, because then they have to keep stock of all of those processors and try to guess the right amount of laptops to build with each different option. It's just not efficient for them. Unfortunately, what you're asking for isn't likely to happen.

Makste - Friday, January 29, 2021 - link

Sigh... I realise the cold hard truth now that you've put it more bluntly...

An OEM has to fill this gap.

Spunjji - Thursday, January 28, 2021 - link

I might be in the market for a laptop later this year, and it's nice to know that unlike the jump from Zen+ to Zen 2, the newer APUs are better but not *devastatingly so*. I might be able to pick up something using a 4000 series APU on discount and not feel like I'm missing out, but if funds allow I can go for a new device with a 5000 APU and know that I'm getting the absolute best mobile x86 performance per watt/dollar on the market. Either way, it's good to see that the Intel/Nvidia duopoly is finally being broken in a meaningful way.

I do have one request - it would be nice to get a separate article with a little more analysis on Tiger Lake in shipping devices vs. the preview device they sent you. Your preview model appears to absolutely annihilate its own very close retail cousin here, and I'd love to see some informed thoughts on how and why that happens. I really don't like the fact that Intel seeded reviewers with something that, in retrospect, appears to significantly over-represent the performance of actually shipping products. It would be good to know whether that's a fluke or something you can replicate consistently - and, if it's the latter, for that to be called out more prominently.

Regardless, thanks for the efforts. It's good to see AMD maintaining good pace. When they get around to slapping RDNA 2 into a future APU, I might finally go ahead and replace my media centre with something that can game!