AMD Zen 3 Ryzen Deep Dive Review: 5950X, 5900X, 5800X and 5600X Tested

by Dr. Ian Cutress on November 5, 2020 9:01 AM EST

New and Improved Instructions

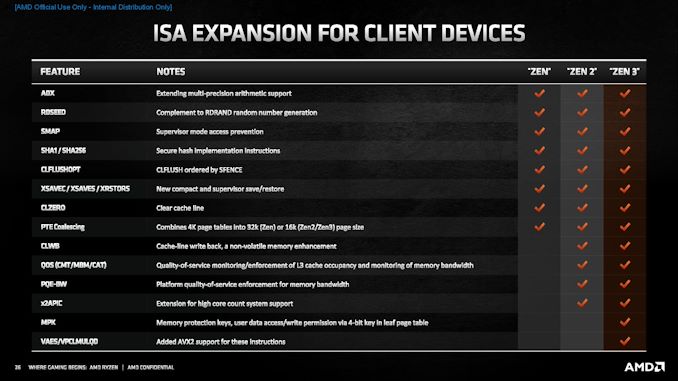

When it comes to instruction improvements, moving to a brand new ground-up core enables a lot more flexibility in how instructions are processed compared to just a core update. Aside from adding new security functionality, being able to rearchitect the decoder/micro-op cache, the execution units, and the number of execution units allows for a variety of new features and hopefully faster throughput.

As part of the microarchitecture deep-dive disclosures from AMD, we naturally get AMD’s messaging on the improvements in this area: we were told of the highlights, such as the improved FMAC and new AVX2/AVX256 expansions. There’s also Control-Flow Enforcement Technology (CET), which enables a shadow stack to protect against ret/ROP attacks. However, after getting our hands on the chip, there’s a trove of improvements to dive through.

Let’s cover AMD’s own highlights first.

The headline item is the improved Fused Multiply-Accumulate (FMA), which is a frequently used operation in a number of high-performance compute workloads as well as machine learning, neural networks, scientific compute and enterprise workloads.

In Zen 2, a single FMA took 5 cycles with a throughput of 2/clock.

In Zen 3, a single FMA takes 4 cycles with a throughput of 2/clock.

This means that AMD’s FMAs are now on parity with Intel; however, this update is going to be most used in AMD’s EPYC processors. As we scale up this improvement to the 64 cores of the current generation EPYC Rome, any compute-limited workload on Rome should be freed up on its Zen 3 successor, Milan. Combine that with the larger L3 cache and improved load/store, and some workloads should expect some good speed ups.
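To see why a one-cycle latency cut matters when throughput is unchanged, it helps to count how many independent FMA chains a loop needs in flight to keep both pipes busy. The sketch below is a simplified model using only the figures quoted above; the function names are illustrative, and real scheduling involves more than this product.

```python
def chains_to_saturate(latency_cycles: int, per_clock: int) -> int:
    """Independent dependency chains needed to keep every FMA pipe busy.

    Simplified model: each pipe accepts one FMA per cycle, and a chain can
    only issue its next FMA once the previous result is ready.
    """
    return latency_cycles * per_clock


def cycles_for_dependent_chain(n_ops: int, latency_cycles: int) -> int:
    """Cycles for n_ops FMAs where each one waits on the previous result."""
    return n_ops * latency_cycles


zen2_chains = chains_to_saturate(latency_cycles=5, per_clock=2)
zen3_chains = chains_to_saturate(latency_cycles=4, per_clock=2)

print(f"Zen 2 needs {zen2_chains} independent chains, Zen 3 needs {zen3_chains}")
print(f"1000 dependent FMAs: {cycles_for_dependent_chain(1000, 5)} cycles on Zen 2, "
      f"{cycles_for_dependent_chain(1000, 4)} cycles on Zen 3")
```

Under this model, code with a single long dependency chain (a naive dot-product accumulator, for example) gains the full 20%, while code that already unrolls across many accumulators gains little.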

The other main update is with cryptography and ciphers. In Zen 2, vector-based AES and PCLMULQDQ operations were limited to AVX / 128-bit execution, whereas in Zen 3 they are upgraded to AVX2 / 256-bit execution.

This means that VAES has a latency of 4 cycles with a throughput of 2/clock.

This means that VPCLMULQDQ has a latency of 4 cycles, with a throughput of 0.5/clock.

AMD also mentioned to a certain extent that it has increased its ability to process repeated MOV instructions on short strings – what used to not be so good for short copies is now good for both small and large copies. We detected that the new core performs better REP MOV instruction elimination at the decode stage, leveraging the micro-op cache better.

Now here’s the stuff that AMD didn’t talk about.

Integer

Sticking with instruction elimination, a lot of instructions and zeroing idioms that Zen 2 used to decode but then skip execution are now detected and eliminated at the decode stage.

- NOP (90h) up to 5x 66h

- LNOP3/4/5 (Looped NOP)

- (V)MOVAPS/MOVAPD/MOVUPS/MOVUPD vec1, vec1 : Move (Un)Aligned Packed FP32/FP64

- VANDNPS/VANDNPD vec1, vec1, vec1 : Vector bitwise logical AND NOT Packed FP32/FP64

- VXORPS/VXORPD vec1, vec1, vec1 : Vector bitwise logical XOR Packed FP32/FP64

- VPANDN/VPXOR vec1, vec1, vec1 : Vector bitwise logical (AND NOT)/XOR

- VPCMPGTB/W/D/Q vec1, vec1, vec1 : Vector compare packed integers greater than

- VPSUBB/W/D/Q vec1, vec1, vec1 : Vector subtract packed integers

- VZEROUPPER : Zero upper bits of YMM

- CLC : Clear Carry Flag
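What makes the zeroing idioms in this list safe to eliminate is that their result does not depend on the register's contents when both sources are the same register. The sketch below checks that property on random 32-bit values; the dictionary keys are shorthand labels rather than exact assembler syntax, and modelling a single 32-bit lane is an assumption made for brevity.

```python
import random

MASK32 = 0xFFFFFFFF

# Same-register forms of the zeroing idioms listed above, modelled on one 32-bit lane.
IDIOMS = {
    "XOR   vec1, vec1": lambda x: x ^ x,
    "ANDN  vec1, vec1": lambda x: (~x & MASK32) & x,   # (NOT x) AND x
    "SUB   vec1, vec1": lambda x: (x - x) & MASK32,
    "CMPGT vec1, vec1": lambda x: MASK32 if x > x else 0,  # all-ones only when true
}


def always_zero(op, trials: int = 1000) -> bool:
    """True if op yields zero for every random 32-bit input tried."""
    return all(op(random.getrandbits(32)) == 0 for _ in range(trials))


for name, op in IDIOMS.items():
    print(f"{name}: input-independent zero = {always_zero(op)}")
```

Because the output is known without reading the input, the core can resolve these early rather than spend an execution slot on them. The same-register moves in the list are a different case: they leave the register unchanged instead of zeroing it.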

As for direct performance adjustments, we detected the following:

Zen 3 Updates (1): Integer Instructions (AnandTech)

| Instruction | Description | Zen 2 | Zen 3 |
|---|---|---|---|
| XCHG | Exchange Register/Memory with Register | 17 cycle latency | 7 cycle latency |
| LOCK (ALU) | Assert LOCK# Signal | 17 cycle latency | 7 cycle latency |
| ALU r16/r32/r64 imm | ALU on constant | 2.4 per cycle | 4 per cycle |
| SHLD/SHRD | Double Precision Shift Left/Right | 4 cycle latency, 0.33 per cycle | 2 cycle latency, 0.66 per cycle |
| LEA [r+r*i] | Load Effective Address | 2 cycle latency, 2 per cycle | 1 cycle latency, 4 per cycle |
| IDIV r8 | Signed Integer Division | 16 cycle latency, 1/16 per cycle | 10 cycle latency, 1/10 per cycle |
| DIV r8 | Unsigned Integer Division | 17 cycle latency, 1/17 per cycle | 10 cycle latency, 1/10 per cycle |
| IDIV r16 | Signed Integer Division | 21 cycle latency, 1/21 per cycle | 12 cycle latency, 1/12 per cycle |
| DIV r16 | Unsigned Integer Division | 22 cycle latency, 1/22 per cycle | 12 cycle latency, 1/12 per cycle |
| IDIV r32 | Signed Integer Division | 29 cycle latency, 1/29 per cycle | 14 cycle latency, 1/14 per cycle |
| DIV r32 | Unsigned Integer Division | 30 cycle latency, 1/30 per cycle | 14 cycle latency, 1/14 per cycle |
| IDIV r64 | Signed Integer Division | 45 cycle latency, 1/45 per cycle | 19 cycle latency, 1/19 per cycle |
| DIV r64 | Unsigned Integer Division | 46 cycle latency, 1/46 per cycle | 20 cycle latency, 1/20 per cycle |
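The division rows are easier to compare as ratios. The short sketch below computes the latency speedup for the signed IDIV rows only, using the Zen 2 and Zen 3 figures from the table; the dictionary layout and function name are illustrative.

```python
# (Zen 2 latency, Zen 3 latency) in cycles, copied from the IDIV rows of the table.
IDIV_LATENCY = {
    "r8": (16, 10),
    "r16": (21, 12),
    "r32": (29, 14),
    "r64": (45, 19),
}


def speedup(old_cycles: int, new_cycles: int) -> float:
    """How many times faster the new figure is than the old one."""
    return old_cycles / new_cycles


for width, (zen2, zen3) in IDIV_LATENCY.items():
    print(f"IDIV {width}: {zen2} -> {zen3} cycles, {speedup(zen2, zen3):.2f}x")
```

The gain grows with operand width, from 1.6x for 8-bit operands to roughly 2.4x for 64-bit operands. Since each division is listed with a throughput of one per its latency, the same ratios apply to throughput.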

Zen 3 Updates (2): Integer Instructions (AnandTech)

| Instruction | Description | Zen 2 | Zen 3 |
|---|---|---|---|
| LAHF | Load Status Flags into AH Register | 2 cycle latency, 0.5 per cycle | 1 cycle latency, 1 per cycle |
| PUSH reg | Push Register Onto Stack | 1 per cycle | 2 per cycle |
| POP reg | Pop Value from Stack Into Register | 2 per cycle | 3 per cycle |
| POPCNT | Count Bits | 3 per cycle | 4 per cycle |
| LZCNT | Count Leading Zero Bits | 3 per cycle | 4 per cycle |
| ANDN | Logical AND NOT | 3 per cycle | 4 per cycle |
| PREFETCH* | Prefetch | 2 per cycle | 3 per cycle |
| PDEP/PEXT | Parallel Bits Deposit/Extract | 300 cycle latency, 1 per 250 cycles | 3 cycle latency, 1 per cycle |

It’s worth highlighting those last two instructions. Software that helps the prefetchers, due to how AMD has arranged the branch predictors, can now process three prefetch instructions per cycle. The other element is the introduction of a hardware accelerator for parallel bit deposit and extract: latency is reduced 99% and throughput is up 250x. If anyone asks why we ever need extra transistors for modern CPUs, it’s for things like this.
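For readers unfamiliar with PDEP and PEXT, the sketch below is a bit-by-bit software model of what the two instructions compute, followed by the arithmetic behind the 99% and 250x figures. It is a reference model for illustration, not how the hardware implements them; the Python function names simply mirror the mnemonics.

```python
def pext(src: int, mask: int) -> int:
    """Parallel bits extract: pack the bits of src selected by mask into the low bits."""
    result, out_bit = 0, 0
    while mask:
        lowest = mask & -mask        # isolate the lowest set bit of the mask
        if src & lowest:
            result |= 1 << out_bit
        out_bit += 1
        mask &= mask - 1             # clear that bit and move to the next one
    return result


def pdep(src: int, mask: int) -> int:
    """Parallel bits deposit: scatter the low bits of src to the set positions of mask."""
    result, in_bit = 0, 0
    while mask:
        lowest = mask & -mask
        if (src >> in_bit) & 1:
            result |= lowest
        in_bit += 1
        mask &= mask - 1
    return result


# Pull the high nibble out of a byte, then put it back where it came from.
packed = pext(0b10110110, 0b11110000)
restored = pdep(packed, 0b11110000)
print(bin(packed), bin(restored))

# Figures from the table: 300 -> 3 cycles latency, 1 per 250 cycles -> 1 per cycle.
latency_reduction = (300 - 3) / 300
throughput_gain = 250 / 1
print(f"latency down {latency_reduction:.0%}, throughput up {throughput_gain:.0f}x")
```

The loop runs once per set mask bit, which hints at why an implementation without dedicated hardware can take hundreds of cycles for an operation that Zen 3 now completes in three.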

There are also some regressions:

Zen 3 Updates (3): Slower Instructions (AnandTech)

| Instruction | Description | Zen 2 | Zen 3 |
|---|---|---|---|
| CMPXCHG8B | Compare and Exchange 8 Byte/64-bit | 9 cycle latency, 0.167 per cycle | 11 cycle latency, 0.167 per cycle |
| BEXTR | Bit Field Extract | 3 per cycle | 2 per cycle |
| BZHI | Zero High Bits with Position | 3 per cycle | 2 per cycle |
| RORX | Rotate Right Logical Without Flags | 3 per cycle | 2 per cycle |
| SHLX / SHRX | Shift Left/Right Without Flags | 3 per cycle | 2 per cycle |

As always, there are trade offs.

x87

For anyone using older mathematics software, it might be riddled with a lot of x87 code. x87 was originally meant to be an extension of x86 for floating point operations, but based on other improvements to the instruction set, x87 is somewhat deprecated, and we often see regressed performance generation on generation.

But not on Zen 3. Among the regressions, we’re also seeing some improvements. Some.

Zen 3 Updates (4): x87 Instructions (AnandTech)

| Instruction | Description | Zen 2 | Zen 3 |
|---|---|---|---|
| FXCH | Exchange Registers | 2 per cycle | 4 per cycle |
| FADD | Floating Point Add | 5 cycle latency, 1 per cycle | 6.5 cycle latency, 2 per cycle |
| FMUL | Floating Point Multiply | 5 cycle latency, 1 per cycle | 6.5 cycle latency, 2 per cycle |
| FDIV32 | Floating Point Division | 10 cycle latency, 0.285 per cycle | 10.5 cycle latency, 0.800 per cycle |
| FDIV64 | Floating Point Division | 13 cycle latency, 0.200 per cycle | 13.5 cycle latency, 0.235 per cycle |
| FDIV80 | Floating Point Division | 15 cycle latency, 0.167 per cycle | 15.5 cycle latency, 0.200 per cycle |
| FSQRT32 | Floating Point Square Root | 14 cycle latency, 0.181 per cycle | 14.5 cycle latency, 0.200 per cycle |
| FSQRT64 | Floating Point Square Root | 20 cycle latency, 0.111 per cycle | 20.5 cycle latency, 0.105 per cycle |
| FSQRT80 | Floating Point Square Root | 22 cycle latency, 0.105 per cycle | 22.5 cycle latency, 0.091 per cycle |
| FCOS 0.739079 | cos X = X | 117 cycle latency, 0.27 per cycle | 149 cycle latency, 0.28 per cycle |

The FADD and FMUL improvements mean the most here, but as stated, using x87 is not recommended. So why is it even mentioned here? The answer lies in older software. Software stacks built upon decades-old Fortran still use these instructions, and more often than not in high performance math codes. Increasing throughput for FADD/FMUL should provide a good speed up there.

Vector Integers

All of the vector integer improvements fall into two main categories. Aside from latency improvements, some of these improvements are execution port specific – due to the way the execution ports have changed this time around, throughput has improved for large numbers of instructions.

Zen 3 Updates (5): Port Vector Integer Instructions (AnandTech)

| Port / Group | Instructions | Vector ISA | Zen 2 | Zen 3 |
|---|---|---|---|---|
| FP013 -> FP0123 | ALU, BLENDI, PCMP, MIN/MAX | MMX, SSE, AVX, AVX2 | 3 per cycle | 4 per cycle |
| FP2 Non-Variable Shift | PSHIFT | MMX, SSE, AVX, AVX2 | 1 per clock | 2 per clock |
| FP1 | VPSRLVD/Q, VPSLLVD/Q | AVX2 | 3 cycle latency, 0.5 per clock | 1 cycle latency, 2 per clock |
| DWORD FP0 | MUL/SAD | MMX, SSE, AVX, AVX2 | 3 cycle latency, 1 per clock | 3 cycle latency, 2 per cycle |
| DWORD FP0 | PMULLD | SSE, AVX, AVX2 | 4 cycle latency, 0.25 per clock | 3 cycle latency, 2 per clock |
| WORD FP0 int MUL | PMULHW, PMULHUW, PMULLW | MMX, SSE, AVX, AVX2 | 3 cycle latency, 1 per clock | 3 cycle latency, 0.6 per clock |
| FP0 int | PMADD, PMADDUBSW | MMX, SSE, AVX, AVX2 | 4 cycle latency, 1 per clock | 3 cycle latency, 2 per clock |
| FP1 insts | (V)PERMILPS/D, PHMINPOSUW, EXTRQ, INSERTQ | SSE4a | 3 cycle latency, 0.25 per clock | 3 cycle latency, 2 per clock |

There are a few others not FP specific.

Zen 3 Updates (6): Vector Integer Instructions (AnandTech)

| Instruction | Operands | Description | Zen 2 | Zen 3 |
|---|---|---|---|---|
| VPBLENDVB | xmm/ymm | Variable Blend Packed Bytes | 1 cycle latency, 1 per cycle | 1 cycle latency, 2 per cycle |
| VPBROADCAST B/W/D/SS | ymm<-xmm | Load and Broadcast | 4 cycle latency, 1 per cycle | 2 cycle latency, 1 per cycle |
| VPBROADCAST Q/SD | ymm<-xmm | Load and Broadcast | 1 cycle latency, 1 per cycle | 2 cycle latency, 1 per cycle |
| VINSERTI128, VINSERTF128 | ymm<-xmm | Insert Packed Values | 1 cycle latency, 1 per cycle | 2 cycle latency, 1 per cycle |
| SHA1RNDS4 | | Four Rounds of SHA1 | 6 cycle latency, 0.25 per cycle | 6 cycle latency, 0.5 per cycle |
| SHA1NEXTE | | Calculate SHA1 State | 1 cycle latency, 1 per cycle | 1 cycle latency, 2 per cycle |
| SHA256RNDS2 | | Two Rounds of SHA256 | 4 cycle latency, 0.5 per cycle | 4 cycle latency, 1 per cycle |

These last three are important for SHA cryptography. AMD, unlike Intel, accelerates SHA in hardware, so doubling the throughput of these instructions should push it even further ahead. Rather than going for hardware accelerated SHA256, Intel instead prefers to use its AVX-512 unit, which unfortunately is a lot more power hungry and less efficient.

Vector Floats

We’ve already covered the improvements to the FMA latency, but there are also other improvements.

Zen 3 Updates (7): Vector Float Instructions (AnandTech)

| Instruction | Operands | Description | Zen 2 | Zen 3 |
|---|---|---|---|---|
| DIVSS/PS | xmm, ymm | Divide FP32 Scalar/Packed | 10 cycle latency, 0.286 per cycle | 10.5 cycle latency, 0.444 per cycle |
| DIVSD/PD | xmm, ymm | Divide FP64 Scalar/Packed | 13 cycle latency, 0.200 per cycle | 13.5 cycle latency, 0.235 per cycle |
| SQRTSS/PS | xmm, ymm | Square Root FP32 Scalar/Packed | 14 cycle latency, 0.181 per cycle | 14.5 cycle latency, 0.273 per cycle |
| SQRTSD/PD | xmm, ymm | Square Root FP64 Scalar/Packed | 20 cycle latency, 0.111 per cycle | 20.5 cycle latency, 0.118 per cycle |
| RCPSS/PS | xmm, ymm | Reciprocal FP32 Scalar/Packed | 5 cycle latency, 2 per cycle | 3 cycle latency, 2 per cycle |
| RSQRTSS/PS | xmm, ymm | Reciprocal FP32 SQRT Scalar/Packed | 5 cycle latency, 2 per cycle | 3 cycle latency, 2 per cycle |
| VCVT* | xmm<-xmm | Convert | 3 cycle latency, 1 per cycle | 3 cycle latency, 2 per cycle |
| VCVT* | xmm<-ymm, ymm<-xmm | Convert | 4 cycle latency, 1 per cycle | 4 cycle latency, 2 per cycle |
| ROUND* | xmm, ymm | Round FP32/FP64 Scalar/Packed | 3 cycle latency, 1 per cycle | 3 cycle latency, 2 per cycle |
| GATHER | 4x32 | Gather | 19 cycle latency, 0.111 per cycle | 15 cycle latency, 0.250 per cycle |
| GATHER | 8x32 | Gather | 23 cycle latency, 0.063 per cycle | 19 cycle latency, 0.111 per cycle |
| GATHER | 4x64 | Gather | 18 cycle latency, 0.167 per cycle | 13 cycle latency, 0.333 per cycle |
| GATHER | 8x64 | Gather | 19 cycle latency, 0.111 per cycle | 15 cycle latency, 0.250 per cycle |

Along with these, store-to-load latencies have increased by a clock. AMD is promoting that it has improved store-to-load bandwidth with the new core, but that comes at additional latency.
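As a reminder of what the GATHER rows in the table above are measuring, the sketch below models a masked gather in plain Python: each output lane loads from its own index, and lanes with the mask cleared keep their previous value. The function and parameter names are hypothetical, and the model ignores address scaling and fault behavior.

```python
from typing import List, Sequence


def gather(base: Sequence[float],
           indices: Sequence[int],
           mask: Sequence[bool],
           previous: Sequence[float]) -> List[float]:
    """Per-lane indexed load: lane i reads base[indices[i]] when mask[i] is set,
    otherwise it keeps previous[i]."""
    return [base[i] if m else p for i, m, p in zip(indices, mask, previous)]


table = [10.0, 20.0, 30.0, 40.0]
lanes = gather(table, indices=[3, 0, 2, 1],
               mask=[True, False, True, True],
               previous=[-1.0, -1.0, -1.0, -1.0])
print(lanes)
```

Each lane is effectively an independent load, which is why gathers have much higher latency and lower throughput than the contiguous vector loads they replace.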

Compared to some of the recent CPU launches, this is a lot of changes!


TheinsanegamerN - Tuesday, November 10, 2020 - link

There is no x590 chipset coming. X570 is ryzen 5000s chipset.

There's also this miracle fo technology, if you have a micro atx or full atx board, you can put in ADD IN CARDS. Amazing, right? So even if your board does not natively support 2.5G LAN you can add it for a low price, because 2.5G cards are relatively cheap.

TheinsanegamerN - Tuesday, November 10, 2020 - link

the x570 aorus master and msi x570 unify also have 2.5G lan. And surely there will be newer models next year with newer features and names, gotta keep the model churn going!

alhopper - Sunday, November 8, 2020 - link

Ian and Andrei - 1,000 Thank Yous for this awesome article and you fine technical journalism. You guys did amazing work and we (the community) are fortunate to be the benefactors.

Thanks again and keep up the Good Work (TM).

Rekaputra - Sunday, November 8, 2020 - link

Wow this article it so comprehensive. Glad i always check anandtech for my reference in computing. I wonder how it stack againt threadripper on database or excel compute workload. I know these are desktop proc. But there is possibility use it for mini workstation for office stuff like accounting and development RDBMS as it is cheaper.

SkyBill40 - Sunday, November 8, 2020 - link

Once some availability comes back into play... my old and trusty FX 8350 is going to be retired. I've been waiting to rebuild for a long time now and the wait has clearly paid off regardless of how the is the end of the line for AM4 or well Ryzen 4 does next year. I could wait... but nah.

jcromano - Friday, November 13, 2020 - link

I'm in a similar boat. I'm still running an i5-2500k from early 2011 (coming up on ten years, yikes), and I'll build a new rig, probably 5600X, when the processors become available. I fret a bit over whether I should wait for the next socket to arrive before taking the plunge, but given the infrequency with which I upgrade, I think it's likely that the next socket would also be obsolete by the time it mattered.

evilpaul666 - Sunday, November 8, 2020 - link

I'd love to see some PS3 emulation testing added.

abufrejoval - Monday, November 9, 2020 - link

Control flow integrity (or enforcement) seem to be in, and that was for me a major criterion for getting one (5800X scheduled to arrive tomorrow).

But what about SEV or per-VM-encryption? From the hints I see this seems enabled in Intel's Tiger Lake and I guess the hardware would be there on all Zen 3 chiplets, but is AMD going to enable it for "consumer" platforms?

With 8 or more cores around, there is plenty of reasons why people would want to run a couple of VMs on pretty much anything, from a notebook to a home entertainment/control system, even a gaming rig. And some of those VMs we'd rather have secure from phishing and trojans, right?

Keeping this an EPIC-only or Pro-only feature would be a real mistake IMHO.

BTW ordered ECC DDR4-3200 to go with it, because this box will run 24x7 and pushes a Xeon E3-1276 v3 into cold backup.

lmcd - Monday, November 9, 2020 - link

Starting to feel like the platform is way too constrained just for the sake of all 6 APUs AMD has released (all with mediocre graphics and most with mediocre CPUs, no less). I hope AMD bifuricates and comes up with an in-between platform that supports ~32-40 CPU PCIe lanes and drops APUs. If APUs can't be on-time with everything else there's so little point.

29a - Monday, November 9, 2020 - link

"Firstly, because we need an AI benchmark, and a bad one is still better than not having one at all."

Can't say I agree with that.