A Broadwell Retrospective Review in 2020: Is eDRAM Still Worth It?

by Dr. Ian Cutress on November 2, 2020 11:00 AM EST

Broadwell with eDRAM: Still Has Gaming Legs

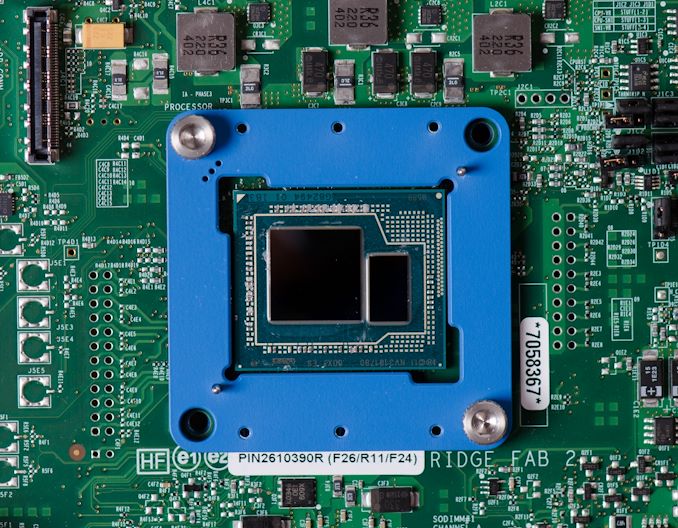

As we cross over into the 2020s, DRAM alone now gives us more memory bandwidth than a Broadwell processor had from its eDRAM in 2015. Intel's Broadwell processors were advertised as having 128 MiB of 'eDRAM', which enabled 50 GB/s of bidirectional bandwidth at a lower latency than main memory, which at the time offered only 25.6 GB/s (dual-channel DDR3-1600). Modern processors have access to DDR4-3200, which is 51.2 GB/s in dual channel, and future processors are looking at 65 GB/s or higher.
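The DRAM figures above follow from transfer rate × bytes per transfer × channel count. A minimal sketch, assuming standard 64-bit (8-byte) channels in a dual-channel configuration; the results come out in decimal gigabytes per second (10^9 bytes), which is why 51.2 GB/s corresponds to only about 47.7 GiB/s.

```python
# Peak theoretical DRAM bandwidth = transfers/s x bytes per transfer x channels.
# Assumes 64-bit (8-byte) channels, as used by DDR3/DDR4 DIMMs.

BYTES_PER_TRANSFER = 8  # one 64-bit channel


def peak_bandwidth_gbps(mega_transfers_per_s: int, channels: int = 2) -> float:
    """Return peak bandwidth in decimal GB/s (1 GB = 10**9 bytes)."""
    bytes_per_s = mega_transfers_per_s * 1_000_000 * BYTES_PER_TRANSFER * channels
    return bytes_per_s / 1e9


def gb_to_gib(gb: float) -> float:
    """Convert decimal GB to binary GiB (1 GiB = 2**30 bytes)."""
    return gb * 1e9 / 2**30


for name, rate in [("DDR3-1600", 1600), ("DDR4-3200", 3200)]:
    gb = peak_bandwidth_gbps(rate)
    print(f"{name} dual-channel: {gb:.1f} GB/s ({gb_to_gib(gb):.1f} GiB/s)")
```

This prints 25.6 GB/s (23.8 GiB/s) for DDR3-1600 and 51.2 GB/s (47.7 GiB/s) for DDR4-3200. These are theoretical peaks; sustained bandwidth in practice is lower.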

At this point, it is perhaps pertinent to take a step back and understand the beauty of having 128 MiB of dedicated silicon for a single task.

Intel’s eDRAM-enabled Broadwell processors accelerated a significant number of bandwidth- and latency-sensitive workloads, in particular gaming. What eDRAM has enabled in our testing, even if we set aside the now-antiquated CPU performance, is surprisingly good gaming performance. Most of our CPU gaming tests are designed to create a CPU-limited scenario, which is exactly where Broadwell plays best. Our final CPU gaming test is a 1080p Max scenario where the CPU matters less, but there still appear to be good benefits from having on-package DRAM with much lower latency all the way out to 128 MiB.
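Why a large, lower-latency cache helps in CPU-limited games can be illustrated with the textbook average memory access time (AMAT) model. A minimal sketch, assuming a serial L4 lookup before DRAM; the latencies and hit rates are hypothetical placeholders, not measurements from this review.

```python
from typing import Optional

# Hypothetical latencies (ns) for an access that has already missed the L3.
DRAM_NS = 80.0
L4_NS = 40.0


def amat_ns(dram_ns: float, l4_ns: Optional[float] = None, l4_hit_rate: float = 0.0) -> float:
    """Average latency past the L3, assuming a serial L4 lookup before DRAM.

    No L4: every access pays dram_ns.
    With L4: every access pays l4_ns; misses additionally pay dram_ns.
    """
    if l4_ns is None:
        return dram_ns
    return l4_ns + (1.0 - l4_hit_rate) * dram_ns


def break_even_hit_rate(dram_ns: float, l4_ns: float) -> float:
    """Hit rate at which the L4 neither helps nor hurts average latency."""
    # Solve l4_ns + (1 - h) * dram_ns == dram_ns for h.
    return l4_ns / dram_ns


print(f"no L4: {amat_ns(DRAM_NS):.1f} ns")
for hit in (0.3, 0.5, 0.7, 0.9):
    print(f"L4 hit rate {hit:.0%}: {amat_ns(DRAM_NS, L4_NS, hit):.1f} ns")
print(f"break-even hit rate: {break_even_hit_rate(DRAM_NS, L4_NS):.0%}")
```

With these made-up numbers, a 30% hit rate makes things worse (96 ns), while 70% brings the average down to 64 ns. The takeaway is that capacity matters because it pushes the hit rate past break-even; the serial-lookup model itself is a simplification.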

There have always been questions about exactly what 128 MiB of eDRAM cost Intel to produce and supply to a generation of processors. At launch, Intel priced the eDRAM versions of 14 nm Broadwell processors $60 above the non-eDRAM versions of 22 nm Haswell equivalents. There are arguments that it directly cost Intel somewhere south of $10 per processor to build and enable, but market segmentation meant Intel could not charge that little. Remember that the eDRAM was built on a mature 22 nm SoC process at the time.

As we move into an era where AMD is showcasing its new ‘double’ 32 MiB L3 cache on Zen 3 as a key part of its improved gaming performance, we already had 128 MiB of gaming acceleration in 2015. It was enabled through a very specific piece of hardware built into the package. If we could do it in 2015, why can’t we do it in 2020?

What about HBM-enabled eDRAM for 2021?

Fast forward to 2020, and we now have mature 14 nm and 7 nm processes, as well as a cavalcade of packaging and eDRAM opportunities. Adding 1-2 GiB of eDRAM to a package with high-bandwidth connectivity could be done using either Intel’s Embedded Multi-die Interconnect Bridge (EMIB) or TSMC’s 3DFabric technologies.

Doing that today would arguably be no more complex than adding 128 MiB was back in 2015, because we now have extensive EDA and packaging tools for chiplet designs and multi-die environments.

So consider: at a time when high-performance consumer processors range from $300 up to $500-$800, would customers pay $60 more for a modern high-end processor with 2 GiB of intermediate L4 cache? It would extend AMD’s idea of a high-performance gaming cache well beyond the 32 MiB of Zen 3, or perhaps add a new dimension to Intel’s future processor portfolio.

As we move into a more chiplet-enabled environment, some of those chiplets could provide an extra cache layer. However, some perspective is needed:

- Broadwell's 128 MiB of eDRAM was (and still is) built on Intel's 22nm IO process and used 77 mm² of die area.

- AMD's new RX 6000 GPUs use '128 MiB' of 7nm Infinity Cache SRAM. At an estimated 6.4 billion transistors, or 24% of the 26.8 billion transistors on a ~510-530 mm² die, this cache requires a substantial amount of die area, even on 7nm.
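The 6.4 billion transistor estimate is consistent with a conventional six-transistor (6T) SRAM cell per bit. A rough sketch of that arithmetic, assuming 6T cells and ignoring tags, ECC, and peripheral circuitry:

```python
MIB = 2**20  # bytes in one MiB
TRANSISTORS_PER_BIT_6T = 6  # classic 6T SRAM cell (assumption)


def sram_cell_transistors(capacity_mib: int) -> int:
    """Transistors in the bit cells alone for a given SRAM capacity."""
    bits = capacity_mib * MIB * 8
    return bits * TRANSISTORS_PER_BIT_6T


cache = sram_cell_transistors(128)
total = 26.8e9  # transistor count quoted above for the RX 6000 die
print(f"{cache / 1e9:.2f} billion transistors, {cache / total:.0%} of the die")
```

This prints 6.44 billion transistors, 24% of the die. Note that a transistor share is not the same as an area share, since SRAM arrays pack transistors more densely than logic.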

This suggests that for future products to integrate large amounts of cache or eDRAM, layered (stacked) solutions will be required. These will demand a large investment in design and packaging, especially in thermal control.
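A naive extrapolation from Broadwell's figures shows why stacking looks necessary. This back-of-the-envelope sketch assumes eDRAM density stays at 128 MiB per 77 mm², as on the 22 nm die, with no improvement from newer processes, so it overstates the area real products would need.

```python
EDRAM_MIB = 128    # Broadwell eDRAM capacity
EDRAM_MM2 = 77.0   # eDRAM die area quoted above (22 nm)


def naive_edram_area_mm2(capacity_mib: int) -> float:
    """Area if density stayed at Broadwell's 22 nm level (assumption)."""
    return capacity_mib / EDRAM_MIB * EDRAM_MM2


for gib in (1, 2):
    print(f"{gib} GiB: ~{naive_edram_area_mm2(gib * 1024):.0f} mm^2")
```

At 22 nm density, 1 GiB would need about 616 mm² and 2 GiB about 1232 mm², more than an entire large GPU die.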

Many thanks to Dylan522p for some minor updates on die size and for pointing out that the same 22nm eDRAM chip is still in use today in Apple's 2020 base MacBook Pro 13.

120 Comments


Khenglish - Monday, November 2, 2020

The Infinity Cache is SRAM, which will be faster but much lower density. Only IBM ever integrated DRAM on the same die as a processor. The DRAM capacitor takes up the space where you want to put all your CPU wiring.

Quantumz0d - Monday, November 2, 2020

Always wondered why Intel was so fucking foolish in making that shitty iGPU die instead of putting eDRAM on the chip. It would have given a massive boost to all their CPUs. A big missed opportunity. AMD had this "Game Cache" on Zen 2, and now with RDNA2, "Infinity Cache" again.

jospoortvliet - Wednesday, November 4, 2020

I guess they did the math on cost and power. They always had better memory controllers and prefetchers, so they didn't benefit as much from cache; they also have the memory controller on-die, unlike AMD with their I/O die. So Intel would benefit waaaay less than AMD does, in almost every way.

dragosmp - Monday, November 2, 2020

"...the same 22nm eDRAM chip is still in use today with Apple's 2020 base Macbook Pro 13"

Ahem, what? Is that CPU an off-the-roadmap Tiger Lake?

Jorgp2 - Monday, November 2, 2020

Tiger Lake doesn't have the hardware for an L4. It's probably the Skylake version.

colinisation - Monday, November 2, 2020

Do the part numbers on Intel CPUs mean anything? I picked up a 5775C a week ago and have not installed it yet, but the part number starts with "L523". I just assume it is a later batch than the one in the review.

ilt24 - Tuesday, November 3, 2020

@colinisation That L523 is the first 4 characters of the Finished Process Order, or Batch#.

The L says it was packaged in Malaysia

The 5 says it was packaged in 2015

The 23 says it was packaged in the 23rd week

Digits 5-8 are the specific lot number of the wafer the die came from
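Going by ilt24's description, the batch code can be split mechanically. A minimal sketch: only the 'L' (Malaysia) site letter is mapped because it is the only one described in the thread, the single year digit is assumed to mean 201x, and the full code "L523A123" is hypothetical (only "L523" comes from the thread).

```python
from dataclasses import dataclass

# Site letters: only 'L' is described in the thread; others are unknown here.
SITE_LETTERS = {"L": "Malaysia"}


@dataclass
class BatchCode:
    site: str
    year: int
    week: int
    lot: str


def decode_batch(code: str) -> BatchCode:
    """Split a batch code as described: site letter, year digit,
    two-digit work week, then characters 5-8 as the wafer lot number."""
    if len(code) < 8:
        raise ValueError("expected at least 8 characters")
    site = SITE_LETTERS.get(code[0], "unknown")
    year = 2010 + int(code[1])  # assumption: single digit means 201x
    week = int(code[2:4])
    lot = code[4:8]
    return BatchCode(site, year, week, lot)


print(decode_batch("L523A123"))  # hypothetical full code
```

This prints BatchCode(site='Malaysia', year=2015, week=23, lot='A123').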

colinisation - Tuesday, November 3, 2020

@ilt24 - Thank you very much

Mday - Monday, November 2, 2020

I expected more eDRAM implementations from Intel and AMD on the CPU side after Broadwell, as a low-latency, high-"capacity" cache, particularly after the launch of HBM. It made me wonder why Intel even bothered, or what shifts in strategy moved them to and then away from eDRAM.

ichaya - Monday, November 2, 2020

This is really the first desktop part I'm hearing of. Weren't most of these "Iris Pro" chips sold in Apple laptops, with maybe a small minority sold by other laptop OEMs? I believe so.