A Broadwell Retrospective Review in 2020: Is eDRAM Still Worth It?

by Dr. Ian Cutress on November 2, 2020 11:00 AM EST

Broadwell with eDRAM: Still Has Gaming Legs

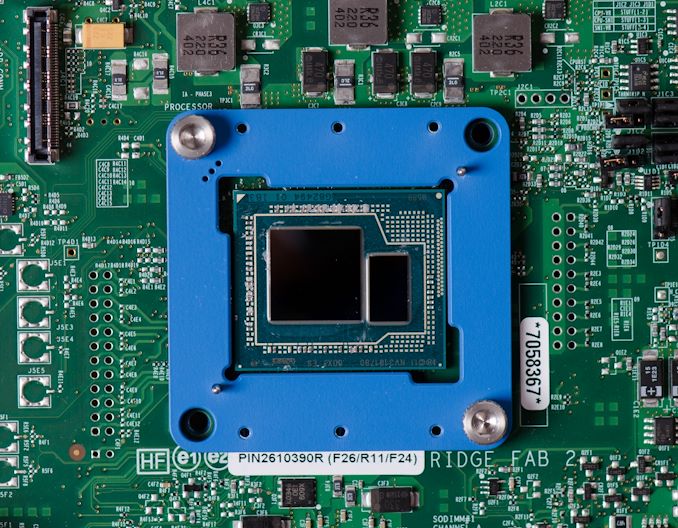

As we cross over into the 2020s, we now get more memory bandwidth from main-memory DRAM than a 2015 processor got from its eDRAM. Intel's Broadwell processors were advertised as having 128 megabytes of 'eDRAM', which enabled 50 GiB/s of bidirectional bandwidth at a lower latency than main memory, which ran at only 25.6 GiB/s. Modern processors have access to DDR4-3200, which is 51.2 GiB/s, and future processors are looking at 65 GiB/s or higher.
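The main-memory figures above follow from simple peak-bandwidth arithmetic. As a rough sketch, assuming dual-channel operation with a 64-bit bus per channel (a typical desktop configuration, not something stated here), the numbers can be reproduced as follows. The function name `peak_bandwidth_gb_s` is invented for illustration, and the results are in decimal gigabytes per second.

```python
def peak_bandwidth_gb_s(transfer_rate_mt_s: int,
                        channels: int = 2,
                        bus_width_bits: int = 64) -> float:
    """Theoretical peak DRAM bandwidth in decimal GB/s.

    Assumes every transfer moves a full bus width of data on every channel,
    which real workloads never quite achieve.
    """
    bytes_per_transfer = bus_width_bits // 8
    # MT/s * bytes per transfer * channels gives MB/s; divide by 1000 for GB/s.
    return transfer_rate_mt_s * bytes_per_transfer * channels / 1000


# Assumed configurations: dual-channel DDR3-1600 for the Broadwell platform,
# dual-channel DDR4-3200 for a modern desktop.
for name, rate in [("DDR3-1600", 1600), ("DDR4-3200", 3200)]:
    print(f"{name}: {peak_bandwidth_gb_s(rate):.1f} GB/s")
```

Under these assumptions the two results come out at 25.6 and 51.2, matching the main-memory figures quoted above. These are theoretical peaks; sustained bandwidth in practice is lower.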

At this time, it is perhaps poignant to take a step back and understand the beauty of having 128 MiB of dedicated silicon for a singular task.

Intel’s eDRAM-enabled Broadwell processors accelerated a significant number of workloads that are sensitive to memory bandwidth and memory latency, gaming in particular. What eDRAM has enabled in our testing, even if we set aside the now antiquated CPU performance, is surprisingly good gaming performance. Most of our CPU gaming tests are designed to create a CPU-limited scenario, which is exactly where Broadwell can play best. Our final CPU gaming test is a 1080p Max scenario where the CPU matters less, but there still appear to be good benefits from having on-package DRAM and that much lower latency all the way out to 128 MiB.

There have always been questions around exactly what 128 MiB of eDRAM cost Intel to produce and supply to a generation of processors. At launch, Intel priced the eDRAM versions of 14 nm Broadwell processors at $60 above the non-eDRAM versions of their 22 nm Haswell equivalents. There are arguments to say that it cost Intel directly somewhere south of $10 per processor to build and enable, but Intel couldn’t charge that little because of market segmentation. Remember that the eDRAM was built on what was, at the time, a mature 22 nm SoC process.

As we move into an era where AMD is showcasing its new ‘double’ 32 MiB L3 cache on Zen 3 as a key part of its improved gaming performance, we already had 128 MiB of gaming acceleration in 2015. It was enabled through a very specific piece of hardware built into the chip. If we could do it in 2015, why can’t we do it in 2020?

What about HBM-enabled eDRAM for 2021?

Fast forward to 2020, and we now have mature 14 nm and 7 nm processes, as well as a cavalcade of packaging and eDRAM opportunities. We might consider that adding 1-2 GiB of eDRAM to a package could be done with high bandwidth connectivity, using either Intel’s Embedded Multi-die Interconnect Bridge (EMIB) technology or TSMC’s 3DFabric technology.

If we did that today, it would arguably be about as complex as adding 128 MiB was back in 2015. We now have extensive EDA and packaging tools to deal with chiplet designs and multi-die environments.

So consider: at a time when high performance consumer processors are in the realm of $300 up to $500-$800, would customers consider paying $60 more for a modern high-end processor with 2 gigabytes of intermediate L4 cache? It would extend AMD’s idea of high-performance gaming cache well beyond the 32 MiB of Zen 3, or perhaps give Intel a different dynamic to its future processor portfolio.

As we move into a more chiplet-enabled environment, some of those chiplets could be an extra cache layer. However, to put some of this into perspective:

- Broadwell's 128 MiB of eDRAM was built (and is still built) on Intel's 22nm IO process and used 77 mm² of die area.

- AMD's new RX 6000 GPUs use '128 MiB' of 7nm Infinity Cache SRAM. At an estimated 6.4 billion transistors, or 24% of the 26.8 billion transistors and ~510-530 mm² die, this cache requires a substantial amount of die area, even on 7nm.

This would suggest that for future products to integrate large amounts of cache or eDRAM, layered solutions will be required. That will need a large investment in design and packaging, especially for thermal control.
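To see where an estimate like 6.4 billion transistors plausibly comes from, here is a back-of-the-envelope sketch. It assumes a conventional six-transistor (6T) SRAM cell and ignores tags, redundancy, and peripheral logic. It also assumes, crudely, that the cache's share of die area equals its share of transistors. None of these assumptions come from AMD, and the function name is invented for illustration.

```python
MIB = 1024 * 1024  # bytes per MiB


def sram_transistors(capacity_mib: int, transistors_per_bit: int = 6) -> int:
    """Transistors in the data array alone, assuming a 6T SRAM cell."""
    return capacity_mib * MIB * 8 * transistors_per_bit


# 128 MiB Infinity Cache against the 26.8 billion transistor total quoted above.
cache_transistors = sram_transistors(128)
share = cache_transistors / 26.8e9

# Crude assumption: area share equals transistor share. SRAM arrays are
# usually denser than logic, so the real area is likely smaller than this.
area_low_mm2 = share * 510
area_high_mm2 = share * 530

# Broadwell's eDRAM for comparison: 128 MiB in 77 mm2 on 22nm.
edram_mib_per_mm2 = 128 / 77

print(f"SRAM transistors: {cache_transistors / 1e9:.2f} billion ({share:.0%} of die)")
print(f"Naive 7nm cache area: {area_low_mm2:.0f}-{area_high_mm2:.0f} mm2")
print(f"22nm eDRAM density: {edram_mib_per_mm2:.2f} MiB/mm2")
```

The 6T assumption lands at roughly 6.44 billion transistors, or about 24% of the total, in line with the estimate in the list above. The area range is only an upper-bound style guess, since it treats every transistor on the die as taking the same space.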

Many thanks to Dylan522p for some minor updates on die size and for pointing out that the same 22nm eDRAM chip is still in use today in Apple's 2020 base MacBook Pro 13.

120 Comments

dsplover - Tuesday, November 3, 2020 - link

For Digital Audio applications the i7-5775C @ 3.3GHz was incredible when disabling the Iris GFX, turning the cache over to audio, then running a discrete GFX card. Bested my i7 4790k’s.

Tried OC’ing but even with the kick-butt Supermicro H70 it was unstable as the Ring Bus/L4 would also clock up and choked @ 2050MHz.

This rig allowed really tight low latency timings and I prayed they would release future designs with a larger cache.

AMD beat them to it w/Matisse which was good for 8 core only.

The new 5000s are going to be Digital Audio dreams @ low wattage.

Intel just keeps lagging behind.

ironicom - Tuesday, November 3, 2020 - link

fps is irrelevant in civ; turn time and load time are what matter.

vorsgren - Tuesday, November 3, 2020 - link

Thanks for using my benchmark! Hope it was useful!

Nictron - Wednesday, November 4, 2020 - link

Which benchmark was that?

erotomania - Wednesday, November 4, 2020 - link

Google the username.

vorsgren - Wednesday, November 4, 2020 - link

http://www.bay12forums.com/smf/index.php?topic=173...

Oxford Guy - Thursday, November 5, 2020 - link

"The Intel skew on this site is getting silly its becoming an Intel promo machine!"

Yes. An article that exposes how much Intel was able to get away with sandbagging because of our tech world's lack of adequate competition (seen in MANY tech areas to the point where it's more the norm than the exception): clearly such an article is showing Intel in a good light.

If you were an Intel shareholder.

For everyone else (the majority of the readers), the article condemns Intel for intentionally hobbling Skylake's gaming performance. ArsTechnica produced an article about this five years ago when it became clear that Skylake wasn't going to have EDRAM.

The ridiculousness of the situation (how Intel got away with charging premium prices for horribly hobbled parts — $10 worth of EDRAM missing, no less) really shows the world's economic system particularly poorly. For all the alleged capitalism in tech, there certainly isn't much competition. That's why Intel didn't have to ship Skylake with EDRAM. Monopolization (and near-monopoly) enables companies to do what they want to do more than anything else: sell less for more. As long as regulators are toothless and/or incompetent the situation won't improve much.

erikvanvelzen - Saturday, November 7, 2020 - link

Ever since the Pentium 4 Extreme Edition I've wondered why Intel does not permanently offer a top product with a large L3 or L4 cache.

abufrejoval - Monday, November 9, 2020 - link

Just picked up a NUC8i7BEH last week (quad i7, 48EU GT3e with 128MB eDRAM), because they dropped below €300 including VAT: A pretty incredible value at that price point and extremely compatible with just about any software you can throw at it.

Yes, Tiger Lake NUC11 would be better on paper and I have tried getting a Ryzen 7-4800U (as PN50-BBR748MD), but I've never heard of one actually shipped.

It's my second NUC8i7BEH, I had gotten another a month or two previously, while it was still at €450, but decided to swap that against a hexa-core NUC10i7FNH (24EU no eDRAM) at the same price, before the 14-days zero-cost return period was up. GT3e+quad-core vs. GT2+hexa-core was a tough call to make, but actually both really run mostly server loads anyway. But at €300/quad vs €450/hexa the GT3e is quite simply for free, when the silicon die area for the GT3e/quad is in all likelihood much greater than for the GT2/hexa, even without counting the eDRAM.

My Whiskey-lake has 200MHz less top clock than the Comet-lake, but that doesn't show in single core results, where the L4 seems to put Whiskey consistently into a small lead.

GT3e doesn't quite manage to double graphics performance over GT2, but I am not planning to use either for gaming. Both do fairly well at 4k on anything 2D, even Google Maps' 3D renders do pretty well.

BTW: While Google Earth Pro's Flight simulator actually gives a fairly accurate representation of the area where I live, it doesn't do great on FPS, even with an Nvidia GPU. By contrast Microsoft's latest and greatest is a huge disappointment when it comes to terrain accuracy (buildings are pure fantasy, not related at all to what's actually there), but delivers ok FPS on my RTX2080ti. No, I didn't try FlightSim on the NUCs...

However, the 3D rendering pipeline Google has put into the browser variant of Google Maps, beats the socks off both Google Earth Pro and Microsoft Flight: With Chrome leading over Firefox significantly, the 3D modelled environment is mind-boggling even on the GT2 at 4k resolutions, it's buttery smooth on GT3e. A browser based flight simulator might actually give the best experience overall, quite hard to believe in a way.

It has me appreciate how good even iGPU graphics could be, if code was properly tuned to make do with what's there.

And it exposes just how bad Microsoft Flight is with nothing but Bing map data underneath: Those €120 were a full waste of money, but I just saved those from buying the second NUC8 later.
