A Broadwell Retrospective Review in 2020: Is eDRAM Still Worth It?

by Dr. Ian Cutress on November 2, 2020 11:00 AM EST

Gaming Tests: Civilization 6

Originally penned by Sid Meier and his team, the Civilization series of turn-based strategy games is a cult classic, and many an excuse for an all-nighter trying to get Gandhi to declare war on you due to an integer underflow. Truth be told I never actually played the first version, but I have played every edition from the second to the sixth, including the fourth as voiced by the late Leonard Nimoy, and it is a game that is easy to pick up, but hard to master.

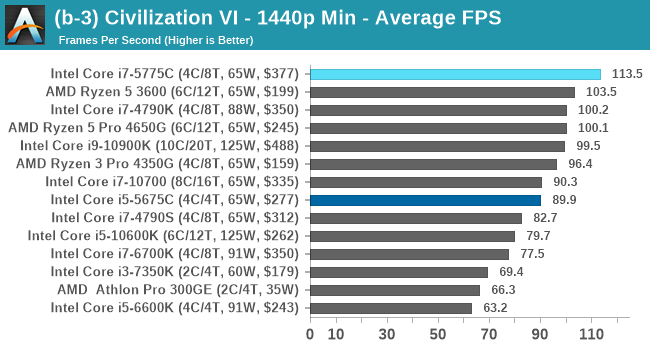

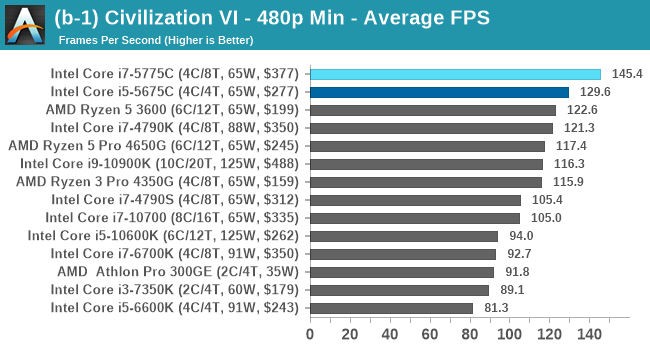

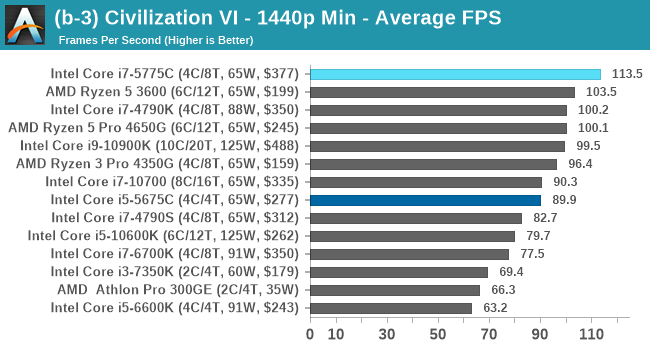

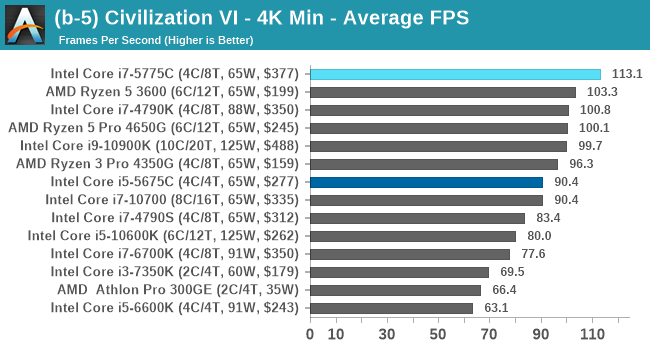

Benchmarking Civilization has always been somewhat of an oxymoron: for a turn-based strategy game, the frame rate is not necessarily the important thing, and in the right mood, something as low as 5 frames per second can be enough. With Civilization 6 however, Firaxis went hardcore on visual fidelity, trying to pull you into the game. As a result, Civilization can be taxing on graphics and CPUs as we crank up the details, especially in DirectX 12.

For this benchmark, we are using the following settings:

- 480p Low, 1440p Low, 4K Low, 1080p Max

For automation, Firaxis supports the in-game automated benchmark from the command line, and outputs a results file with frame times. We do as many runs as possible within 10 minutes per resolution/setting combination, and then take averages and percentiles.
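As a rough illustration of that last step, the sketch below turns per-frame times into an average FPS and a 95th percentile FPS figure. It is a minimal sketch under stated assumptions, not our actual tooling: the function names, the pooling of all runs for one setting, the nearest-rank percentile method, and the sample frame times are all hypothetical, and the real results file format is not shown here.

```python
import math


def average_fps(frame_times_ms):
    """Average FPS = frames rendered / total elapsed seconds."""
    total_seconds = sum(frame_times_ms) / 1000.0
    return len(frame_times_ms) / total_seconds


def percentile_fps(frame_times_ms, pct=95.0):
    """FPS equivalent of the pct-th percentile frame time.

    Assumption: nearest-rank percentile on frame times, so pct percent
    of frames were rendered at least this fast.
    """
    ordered = sorted(frame_times_ms)
    rank = max(1, math.ceil(pct / 100.0 * len(ordered)))
    return 1000.0 / ordered[rank - 1]


def summarize(runs):
    """Pool the frame times of every run for one setting, then summarize."""
    pooled = [t for run in runs for t in run]
    return {
        "average_fps": average_fps(pooled),
        "p95_fps": percentile_fps(pooled),
    }


# Hypothetical frame times in milliseconds for two short runs.
runs = [[10.0, 10.0, 10.0, 20.0], [10.0, 10.0, 10.0, 10.0]]
summary = summarize(runs)
print(summary)
```

Computing the average from total frames over total time, rather than averaging per-frame FPS values, keeps a few very fast frames from inflating the result.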

| AnandTech | Low Res Low Qual | Medium Res Low Qual | High Res Low Qual | Medium Res Max Qual |
|---|---|---|---|---|
| Average FPS |  |  |  |  |
| 95th Percentile |  |  |  |  |

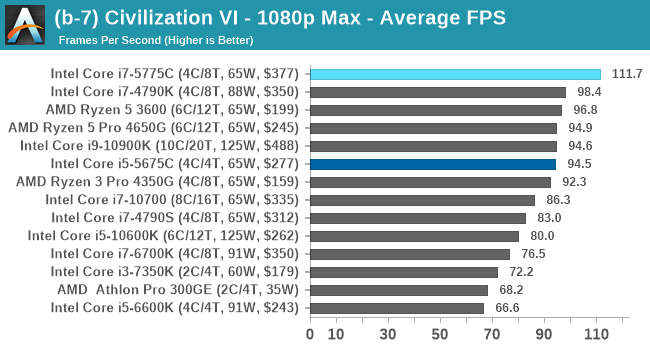

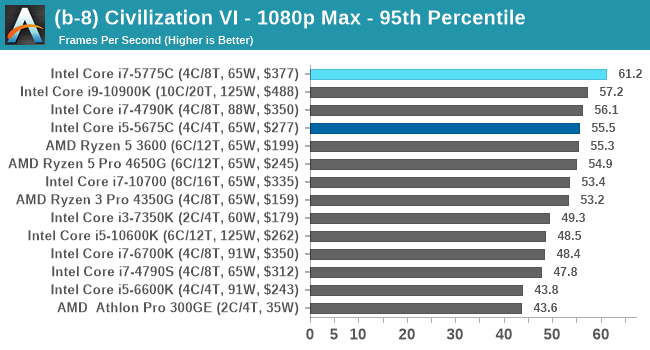

Civ 6 has always been a fan of fast CPU cores and low latency, so perhaps it isn't much of a surprise to see the Core i7 here beat out the latest processors. The Core i7 seems to generate a commanding lead, whereas those behind it seem to fall into a category around 94-96 FPS at 1080p Max settings.

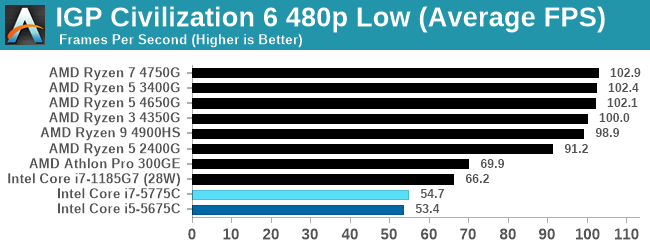

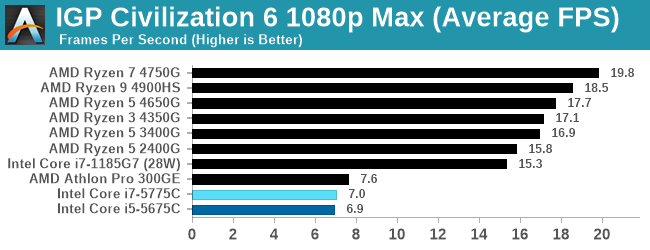

For our Integrated Tests, we run the first and last combination of settings.

When we use the integrated graphics, Broadwell isn't particularly playable here.

All of our benchmark results can also be found in our benchmark engine, Bench.

120 Comments


krowes - Monday, November 2, 2020 - link

CL22 memory for the Ryzen setup? Makes absolutely no sense.

Ian Cutress - Tuesday, November 3, 2020 - link

That's JEDEC standard.

Khenglish - Monday, November 2, 2020 - link

Was anyone else bothered by the fact that Intel's highest performing single thread CPU is the 1185G7, which is only accessible in 28W tiny BGA laptops?

Also the 128mb edram cache does seem to make on average a 10% improvement over the edramless 4790S at the same TDP. I would love to see edram on more cpus. It's so rare to need more than 8 cores. I'd rather have 8 cores with edram than 16+ cores and no edram.

ichaya - Monday, November 2, 2020 - link

There's definitely a cost trade-off involved, but with an I/O die since Zen 2, it seems like AMD could just spin up a different I/O die, and justify the cost easily by selling to HEDT/Workstation/DC.

Notmyusualid - Wednesday, November 4, 2020 - link

Chalk me up as 'bothered'.

zodiacfml - Monday, November 2, 2020 - link

Yeah but Intel is about squeezing the last dollar in its products for a couple of years now.

Endymio - Monday, November 2, 2020 - link

CPU register-> 3 levels of cache -> eDRAM -> DRAM -> Optane -> SSD -> Hard Drive.

The human brain gets by with 2 levels of storage. I really don't feel that computers should require 9. The entire approach needs rethinking.

Tomatotech - Tuesday, November 3, 2020 - link

You remember everything without writing down anything? You remarkable person.

The rest of us rely on written materials, textbooks, reference libraries, wikipedia, and the internet to remember stuff. If you jot down all the levels of hierarchical storage available to the average degree-educated person, it's probably somewhere around 9 too depending on how you count it.

Not everything you need to find out is on the internet or in books either. Data storage and retrieval also includes things like having to ask your brother for Aunt Jenny's number so you can ring Aunt Jenny and ask her some detail about early family life, and of course Aunt Jenny will tell you to go and ring Uncle Jonny, but she doesn't have Jonny's number, wait a moment while she asks Max for it and so on.

eastcoast_pete - Tuesday, November 3, 2020 - link

You realize that the closer the cache is to actual processor speed, the more demanding the manufacturing gets and the more die area it eats. That's why there aren't any (consumer) CPUs with 1 or more MB of L1 Cache. Also, as Tomatotech wrote, we humans use mnemonic assists all the time, so the analogy short-term/long-term memory is incomplete. Writing and even drawing was invented to allow for longer-term storage and easier distribution of information. Lastly, at least IMO, it boils down to cost vs. benefit/performance as to how many levels of memory storage are best, and depends on the usage scenario.

Oxford Guy - Monday, November 2, 2020 - link

Peter Bright of Ars in 2015:

"Intel’s Skylake lineup is robbing us of the performance king we deserve. The one Skylake processor I want is the one that Intel isn't selling.

in games the performance was remarkable. The 65W 3.3-3.7GHz i7-5775C beat the 91W 4-4.2GHz Skylake i7-6700K. The Skylake processor has a higher clock speed, it has a higher power budget, and its improved core means that it executes more instructions per cycle, but that enormous L4 cache meant that the Broadwell could offset its disadvantages and then some. In CPU-bound games such as Project Cars and Civilization: Beyond Earth, the older chip managed to pull ahead of its newer successor.

in memory-intensive workloads, such as some games and scientific applications, the cache is better than 21 percent more clock speed and 40 percent more power. That's the kind of gain that doesn't come along very often in our dismal post-Moore's law world.

Those 5775C results tantalized us with the prospect of a comparable Skylake part. Pair that ginormous cache with Intel's latest-and-greatest core and raise the speed limit on the clock speed by giving it a 90-odd W power envelope, and one can't help but imagine that the result would be a fine processor for gaming and workstations alike. But imagine is all we can do because Intel isn't releasing such a chip. There won't be socketed, desktop-oriented eDRAM parts because, well, who knows why.

Intel could have had a Skylake processor that was exciting to gamers and anyone else with performance-critical workloads. For the right task, that extra memory can do the work of a 20 percent overclock, without running anything out of spec. It would have been the must-have part for enthusiasts everywhere. And I'm tremendously disappointed that the company isn't going to make it."

In addition to Bright's comments I remember Anandtech's article that showed the 5675C beating or equalling the 5775C in one or more gaming tests, apparently largely due to the throttling due to Intel's decision to hobble Broadwell with such a low TDP.