A Broadwell Retrospective Review in 2020: Is eDRAM Still Worth It?

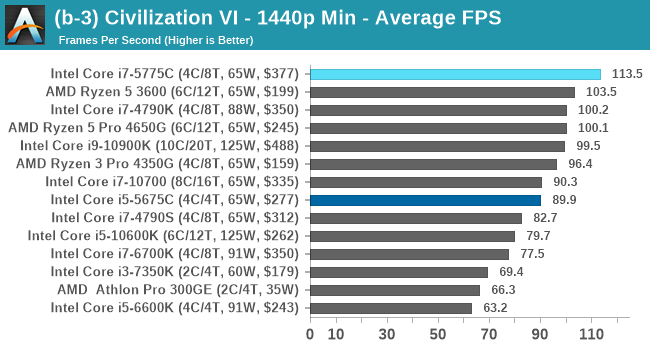

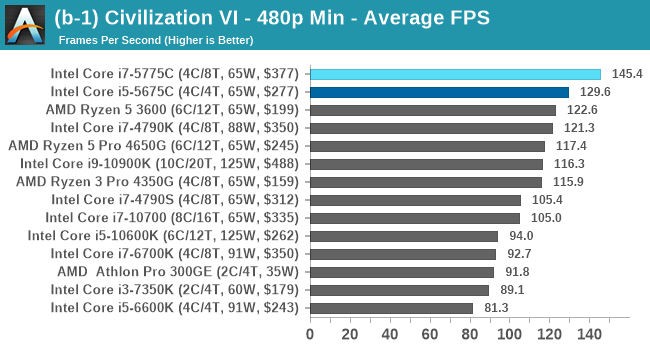

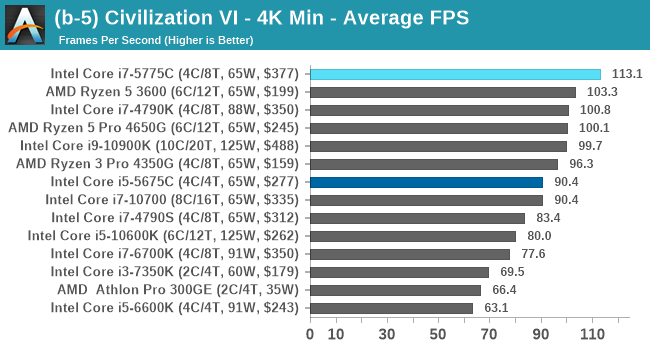

by Dr. Ian Cutress on November 2, 2020 11:00 AM EST

Gaming Tests: Civilization 6

Originally penned by Sid Meier and his team, the Civilization series of turn-based strategy games is a cult classic, and many an excuse for an all-nighter trying to get Gandhi to declare war on you due to an integer underflow. Truth be told I never actually played the first version, but I have played every edition from the second to the sixth, including the fourth as voiced by the late Leonard Nimoy, and it is a game that is easy to pick up, but hard to master.

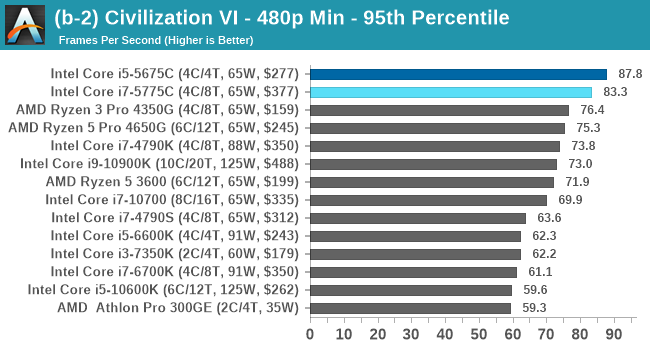

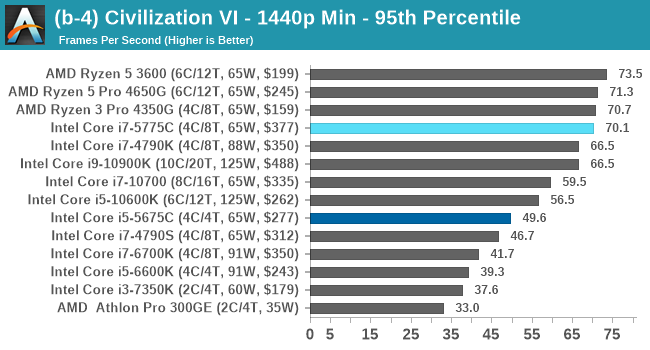

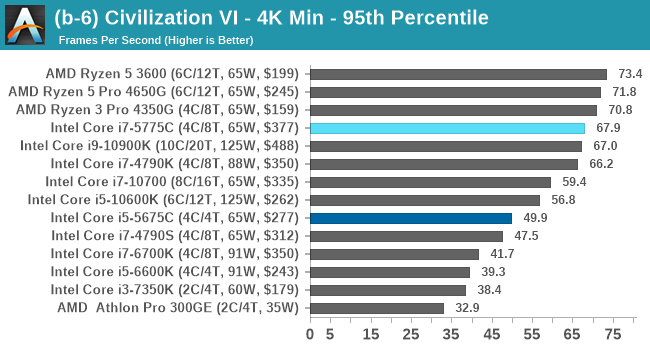

Benchmarking Civilization has always been somewhat of an oxymoron – for a turn-based strategy game, the frame rate is not necessarily the important thing here and even in the right mood, something as low as 5 frames per second can be enough. With Civilization 6 however, Firaxis went hardcore on visual fidelity, trying to pull you into the game. As a result, Civilization can be taxing on graphics and CPUs as we crank up the details, especially in DirectX 12.

For this benchmark, we are using the following settings:

- 480p Low, 1440p Low, 4K Low, 1080p Max

For automation, Firaxis supports the in-game automated benchmark from the command line, and outputs a results file with frame times. We do as many runs as possible within 10 minutes per resolution/setting combination, and then take averages and percentiles.
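As a rough illustration of the "averages and percentiles" step, the sketch below turns a list of frame times into an average FPS and a 95th percentile FPS figure. It is not the actual test harness: the function name `summarize_frame_times`, the assumption that frame times are in milliseconds, and the choice to report the 95th percentile frame time converted to FPS are all assumptions made for the example, and the layout of the Firaxis results file is not modeled.

```python
from statistics import quantiles


def summarize_frame_times(frame_times_ms):
    """Return (average_fps, p95_fps) for per-frame times given in milliseconds.

    Hypothetical helper: assumes the frame times have already been read
    from the benchmark's results file into a list of floats.
    """
    if len(frame_times_ms) < 2:
        raise ValueError("need at least two frame times")

    # Average FPS is total frames divided by total elapsed time,
    # not the mean of per-frame FPS values.
    total_seconds = sum(frame_times_ms) / 1000.0
    average_fps = len(frame_times_ms) / total_seconds

    # quantiles(n=100) returns 99 cut points; index 94 is the 95th percentile.
    # A high frame time is a slow frame, so this is a "worst 5%" boundary.
    p95_frame_time_ms = quantiles(frame_times_ms, n=100, method="inclusive")[94]
    p95_fps = 1000.0 / p95_frame_time_ms

    return average_fps, p95_fps


if __name__ == "__main__":
    # Made-up frame times for demonstration only, not measured data.
    sample = [10.0, 10.5, 9.8, 11.2, 30.0, 10.1, 10.3, 9.9]
    avg, p95 = summarize_frame_times(sample)
    print(f"Average FPS: {avg:.1f}, 95th percentile FPS: {p95:.1f}")
```

Computing the percentile on frame times rather than on instantaneous FPS keeps slow frames at the top of the distribution, which is why the percentile figure comes out lower than the average whenever a run has occasional stutter.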

| AnandTech | Low Res Low Qual | Medium Res Low Qual | High Res Low Qual | Medium Res Max Qual |
|---|---|---|---|---|
| Average FPS |  |  |  |  |
| 95th Percentile |  |  |  |  |

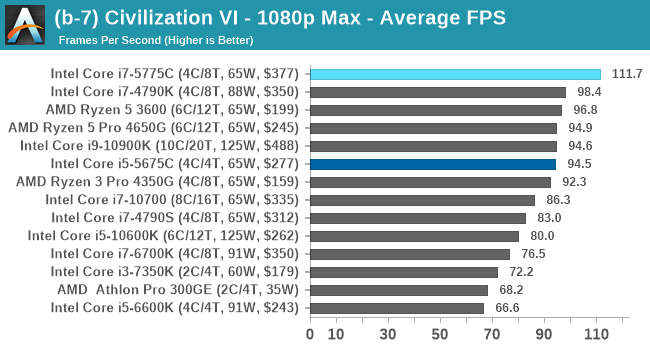

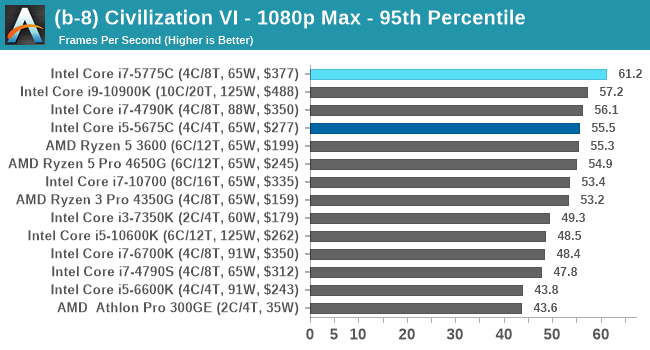

Civ 6 has always been a fan of fast CPU cores and low latency, so perhaps it isn't much of a surprise to see the Core i7 here beat out the latest processors. The Core i7 seems to generate a commanding lead, whereas those behind it seem to fall into a category around 94-96 FPS at 1080p Max settings.

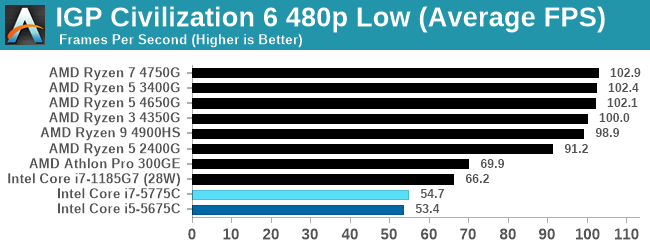

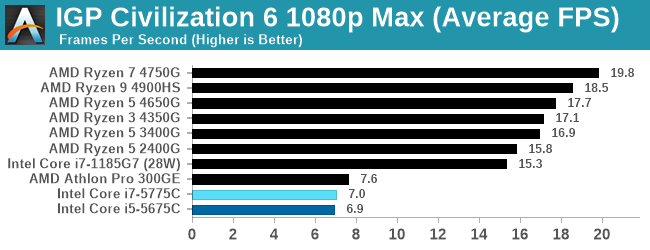

For our Integrated Tests, we run the first and last combination of settings.

When we use the integrated graphics, Broadwell isn't particularly playable here.

All of our benchmark results can also be found in our benchmark engine, Bench.

120 Comments


dotjaz - Saturday, November 7, 2020 - link

*serves

Samus - Monday, November 9, 2020 - link

That's not true. There were numerous requests from OEMs for Intel to make iGPU-enabled XEONs for the specific purpose of QuickSync, so there are indeed various applications other than ML where an iGPU in a server environment is desirable.

erikvanvelzen - Saturday, November 7, 2020 - link

Ever since the Pentium 4 Extreme Edition I've wondered why Intel does not permanently offer a top product with a large L3 or L4 cache.

lemmemakethis - Thursday, December 3, 2020 - link

Great blog post for better understanding

plonk420 - Monday, November 2, 2020 - link

been waiting for this to happen ...since the Fury/Fury X. would gladly pay the $230ish they want for a 6 core Zen 2 APU but even with "just" 4c8t + Vega 8 (but preferably 11) + HBM(2)

ichaya - Monday, November 2, 2020 - link

With the RDNA2 infinitycache announcement and the increase (~2x) in effective BW from it, and we know Zen has always done better with more memory BW, so it's just dead obvious now that an L4 cache on the I/O die would increase performance (especially in workloads like gaming) more than its power cost.

I really should have said waiting since Zen 2, since that was when the I/O die was introduced, but I'll settle for eDRAM or SRAM L4 on the I/O die as that would be easier than a CCX with HBM2 as cache. Some HBM2 APUs would be nice though.

throAU - Monday, November 2, 2020 - link

I think very soon for consumer focused parts, on package HBM won't necessarily be cache, but they'll be main memory. End users don't need massive amounts of RAM in end user devices, especially as more workload moves to cloud.

8 GB of HBM would be enough for the majority of end user devices for some time to come and using only HBM instead of some multi-level caching architecture would be simpler - and much smaller.

Spunjji - Monday, November 2, 2020 - link

Really liking the level of detail from this new format! Fascinated to see how the Broadwell secret sauce has stood up to the test of time, too.

Hopefully the new gaming CPU benchmarks will finally put most of the benchmark bitching to bed - for sure it goes to show (at quite some length) that the ranking under artificially CPU-limited scenarios doesn't really correspond to the ranking in a realistic scenario, where the CPU is one constraint amongst many.

Good work all-round 👍👍

lemurbutton - Monday, November 2, 2020 - link

Anandtech: We're going to review a product from 2015 but we're not going to review the RTX 3080, RTX 3090, nor the RTX 3070.

If I were management, I'd fire every one of the editors.

e36Jeff - Monday, November 2, 2020 - link

The guy that tests GPUs was affected by the Cali wildfires. Ian wouldn't be writing a GPU review regardless, he does CPUs.