Imagination Announces A-Series GPU Architecture: "Most Important Launch in 15 Years"

by Andrei Frumusanu on December 2, 2019 8:00 PM EST

HyperLane Technology

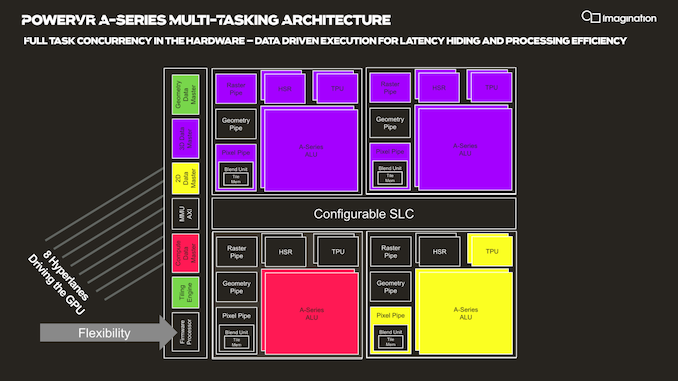

Another new addition to the A-Series GPU is Imagination's “HyperLane” technology, which promises to vastly expand the flexibility of the architecture in terms of multi-tasking as well as security. Imagination GPUs have had virtualization abilities for some time now, and this has given them an advantage in focus areas such as automotive designs.

The new HyperLane technology is said to be an extension to virtualization, going beyond it in terms of separation of tasks executed by a single GPU.

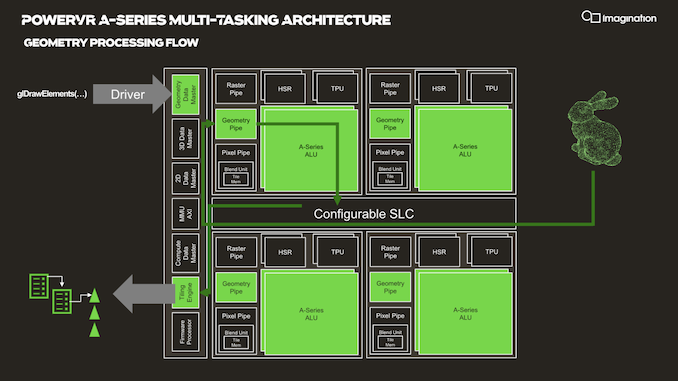

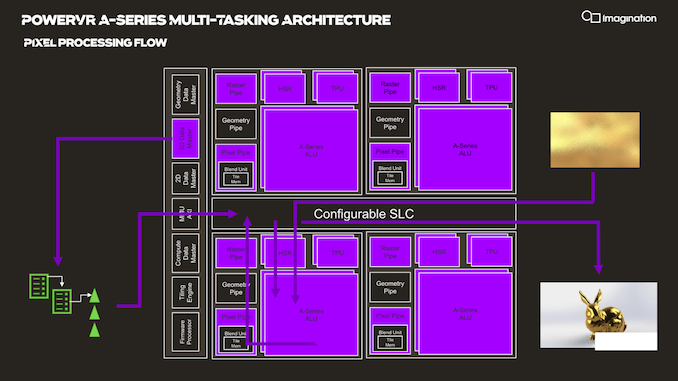

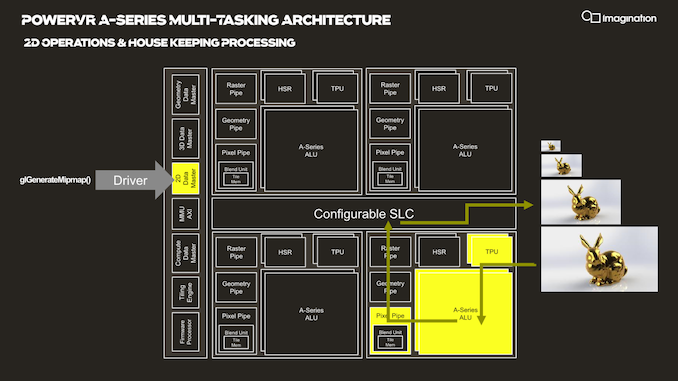

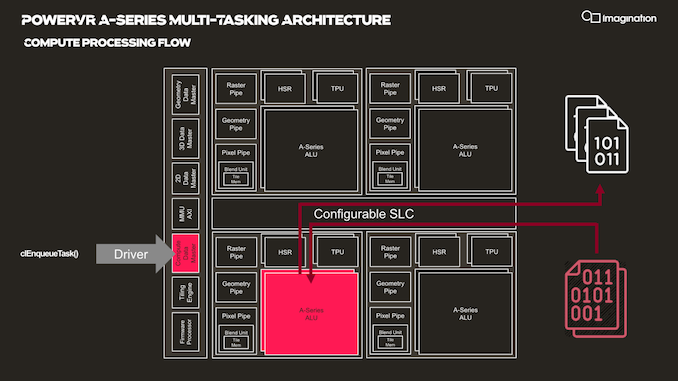

In your usual rendering flows, there are different kinds of “master” controllers, each handling the dispatching of workloads to the GPU: geometry is handled by the geometry data master, pixel processing and shading by the 3D data master, 2D operations by the 2D data master, and compute workloads by, you guessed it, the compute data master.

In each of these processing flows various blocks of the GPU are active for a given task, while other blocks remain idle.

HyperLane technology is said to enable full task concurrency on the GPU hardware, with multiple data masters able to be active simultaneously and executing work dynamically across the GPU’s hardware resources. In essence, the whole GPU becomes multi-tasking capable, receiving different task submissions from up to 8 sources (hence 8 HyperLanes).

The new feature sounded to me like a hardware-based scheduler for task submissions, although when I brought up this description the Imagination spokespeople were rather dismissive of the simplification, saying that HyperLanes go far deeper into the hardware architecture. For example, each HyperLane can be configured with its own virtual memory space (or can share arbitrary memory spaces with other HyperLanes).

Splitting GPU resources can happen on a block level concurrently with other tasks, or resources can be shared in the time domain, with time-slices divided between HyperLanes. Priority can be given to individual HyperLanes, such as prioritizing graphics while a possible background AI task uses the remaining free resources.
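To make the priority idea a bit more concrete, here is a purely illustrative toy model in Python. It is not Imagination's implementation: the `Lane` fields, the notion of abstract "units", and the strict highest-priority-first policy are all assumptions made for the sake of the sketch. The only detail taken from the announcement is the limit of 8 HyperLanes.

```python
from dataclasses import dataclass

MAX_HYPERLANES = 8  # the announcement mentions up to 8 HyperLanes


@dataclass
class Lane:
    name: str
    priority: int  # hypothetical: a higher number is served first
    demand: int    # hypothetical: abstract execution units wanted this time-slice


def allocate(lanes, total_units):
    """Grant units in priority order; lower-priority lanes get what is left."""
    if len(lanes) > MAX_HYPERLANES:
        raise ValueError("at most 8 HyperLanes")
    grants = {}
    remaining = total_units
    for lane in sorted(lanes, key=lambda l: l.priority, reverse=True):
        granted = min(lane.demand, remaining)
        grants[lane.name] = granted
        remaining -= granted
    return grants


lanes = [
    Lane("graphics", priority=2, demand=12),
    Lane("background_ai", priority=1, demand=10),
]
grants = allocate(lanes, total_units=16)
print(grants)
```

In this toy setup the graphics lane receives everything it asks for and the background AI lane is limited to the leftover units, which mirrors the "graphics over background AI" example given by the company.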

The security advantages of such a technology also seem advanced, with the company citing use-cases such as isolation for protected content and rights management.

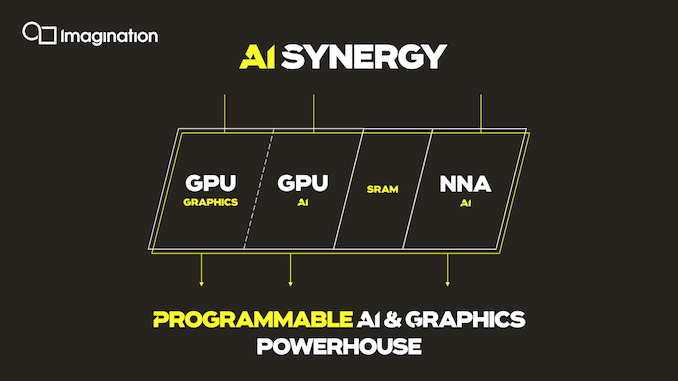

An interesting application of the technology is the synergy it allows between an A-Series GPU and the company’s in-house neural network accelerator IP. It would be able to share AI workloads between the two IP blocks, with the GPU for example handling the more programmable layers of a model while still taking advantage of the NNA’s efficiency for fixed-function processing of fully connected layers.
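As a rough illustration of that kind of split, the sketch below assigns each layer of a made-up model to either the GPU or the NNA. Everything here is hypothetical: the layer names, the `NNA_LAYER_KINDS` set and the `partition` helper are invented for the example, and the actual partitioning logic in Imagination's software stack has not been disclosed.

```python
# Hypothetical: layer kinds assumed to suit the fixed-function NNA.
# The article only names fully connected layers as an example.
NNA_LAYER_KINDS = {"fully_connected"}


def partition(layers):
    """Map each (name, kind) layer to "NNA" or "GPU" based on its kind."""
    return [
        (name, "NNA" if kind in NNA_LAYER_KINDS else "GPU")
        for name, kind in layers
    ]


model = [
    ("custom_activation", "custom"),
    ("fc1", "fully_connected"),
    ("custom_norm", "custom"),
    ("fc2", "fully_connected"),
]
plan = partition(model)
for name, target in plan:
    print(f"{name} -> {target}")
```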

Three Dozen Other Microarchitectural Improvements

The A-Series also comes with numerous other microarchitectural advancements that are said to be advantageous to the GPU IP.

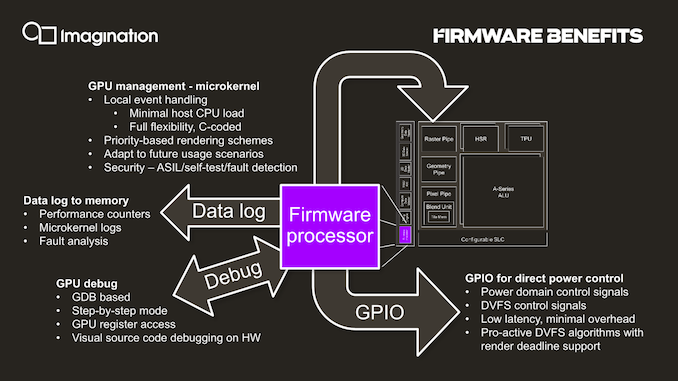

One such existing feature is the integration of a small dedicated CPU (which we understand to be RISC-V based) acting as a firmware processor, handling GPU management tasks that in other architectures might still be handled by drivers on the host system CPU. The firmware processor approach is said to achieve more performant and efficient handling of various housekeeping tasks such as debugging, data logging, GPIO handling and even DVFS algorithms. In contrast, DVFS for Arm Mali GPUs, for example, is still handled by the kernel GPU driver on the host CPUs.

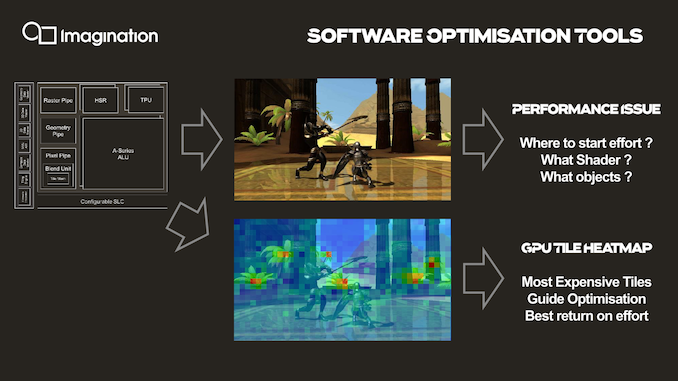

An interesting new development feature, enabled by profiling the GPU’s hardware counters through the firmware processor, is the creation of tile heatmaps of execution resources used. This seems relatively banal, but it isn’t something that’s readily available to software developers, and it could be extremely useful for quick debugging and optimization of 3D workloads thanks to a more visual approach.
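For readers unfamiliar with the idea, a tile heatmap is simply a per-tile aggregation of some cost metric. The following Python sketch shows the concept with made-up data; the `(tile_x, tile_y, cycles)` sample format and the text rendering are assumptions for illustration and do not reflect Imagination's tooling or counter layout.

```python
def build_heatmap(samples, tiles_x, tiles_y):
    """Sum the cycle counts of all samples that fall on each tile."""
    grid = [[0] * tiles_x for _ in range(tiles_y)]
    for x, y, cycles in samples:
        grid[y][x] += cycles
    return grid


def render(grid, ramp=" .:*#"):
    """Draw the grid as text, scaling each tile against the busiest tile."""
    peak = max(max(row) for row in grid) or 1  # avoid dividing by zero
    return "\n".join(
        "".join(ramp[value * (len(ramp) - 1) // peak] for value in row)
        for row in grid
    )


# Hypothetical per-tile counter samples: (tile_x, tile_y, cycles)
samples = [(0, 0, 10), (1, 0, 40), (1, 1, 80), (1, 1, 20), (2, 1, 5)]
grid = build_heatmap(samples, tiles_x=3, tiles_y=2)
print(render(grid))
```

The busiest tile is drawn with the densest character, so hot spots in a frame stand out at a glance, which is the appeal of the visual approach over raw counter dumps.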

143 Comments

vladx - Wednesday, December 4, 2019 - link

Lol taking the words of a shady company like Apple as a fact, good one melgrosss.

s.yu - Wednesday, December 4, 2019 - link

Championing a shady company like Huawei, good one vlad.

name99 - Tuesday, December 3, 2019 - link

Andrei is as close to correct as one can hope to be.

All we ACTUALLY know is that PowerVR has NEVER sued Apple. They said some pissed off things in a Press Release, then mostly took them back in a later Press Release.

And Apple said it would cease to pay *royalties* to Imagination within a period of 15 months to two years.

It is possible that Apple created something de novo that's free and clear of IMG and that's that.

It's also possible that Apple paid (and even continues to pay?) IMG large sums of money that are not "royalties" but something else (IP licensing? patent purchase? ...).

We have no idea which is the case.

Apple is large enough that the money paid out could be hidden in pretty much any accounting line item. IMG is private so have no obligation to tell us where their money comes from.

mode_13h - Wednesday, December 4, 2019 - link

Yeah, it could've been a one-time lump sum payment. That's not technically "royalties".

lucam - Tuesday, December 3, 2019 - link

Very true. If you were to run GFXBENCH on the new iPhone 11PRO with the A13, you will see that the gldrivers are all powervrs and IMGtc just the previous A12. Exactly the same. So in my mind the A13 still has some form of PowerVR design in it.

vladx - Wednesday, December 4, 2019 - link

Don't kid yourself, Apple tried to buy IMG Tech on the cheap and I'm glad Imagination refused their insulting offer.

lucam - Wednesday, December 4, 2019 - link

To be honest I think Apple still has some PowerVR design into the current chips, even in the A13, otherwise I can't explain why it appears the GLdrivers (PowerVR, img texture text etc) when I run the GFX bench....I think Apple and Imagination still have in somehow some form of good relationship behind...

s.yu - Tuesday, December 3, 2019 - link

"ISO-area and process node comparison"

Goddamn. So refreshing to see a comparison under such standard conditions yet still showing up in footnotes. This is the industry that I recognize, not Huawei's deceitful comparison during the launch of GPU Turbo with age old design and age old architecture *without* due clarification for months.

Sychonut - Tuesday, December 3, 2019 - link

Now imagine this, but on 14+++++++. A beauty.

mode_13h - Tuesday, December 3, 2019 - link

> There are very few companies in the world able to claim a history in the graphics market dating back to the “golden age” of the 90’s. Among the handful of survivors of that era are of course NVIDIA and AMD (formerly ATI), but there is also Imagination Technologies.

Well... if you're going to count ATI, who got gobbled up by AMD, then you might also count Qualcomm's Adreno, which came from BitBoys Oy and passed through ATI/AMD.