The AMD Ryzen 9 3950X Review: 16 Cores on 7nm with PCIe 4.0

by Dr. Ian Cutress on November 14, 2019 9:00 AM EST

Gaming: Grand Theft Auto V

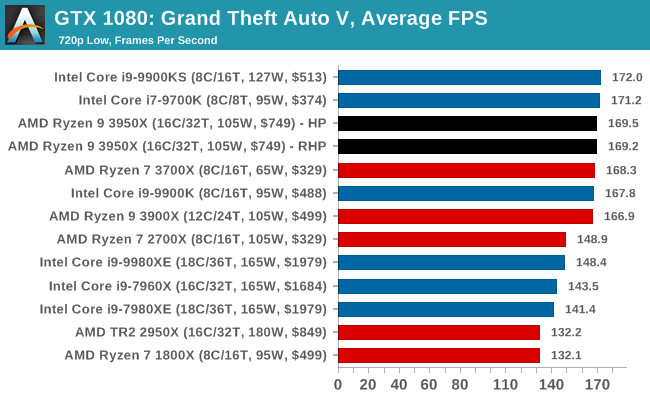

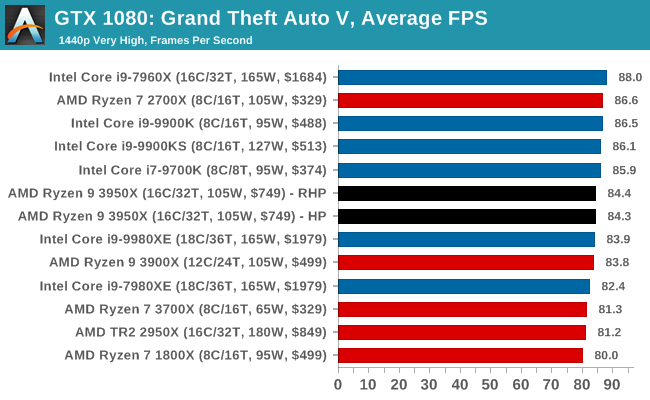

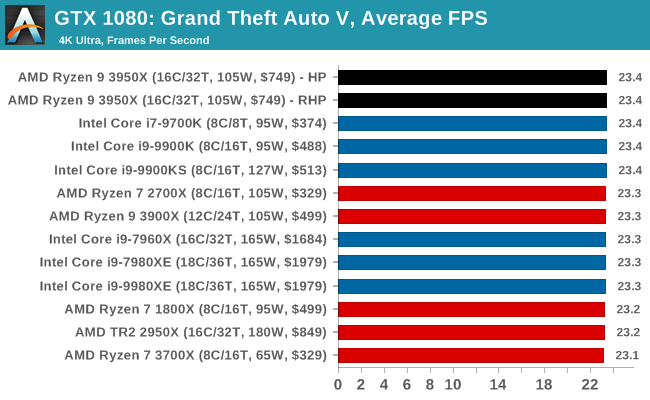

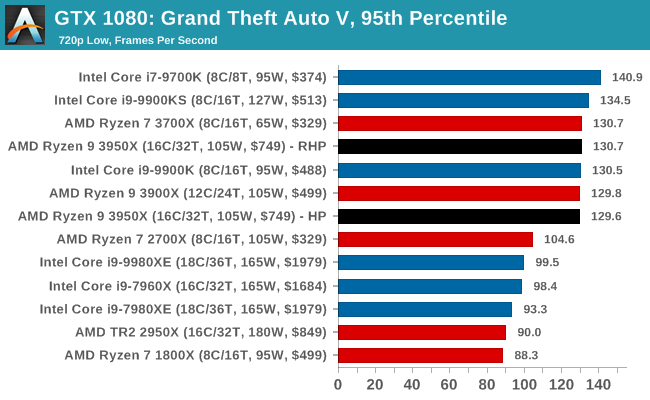

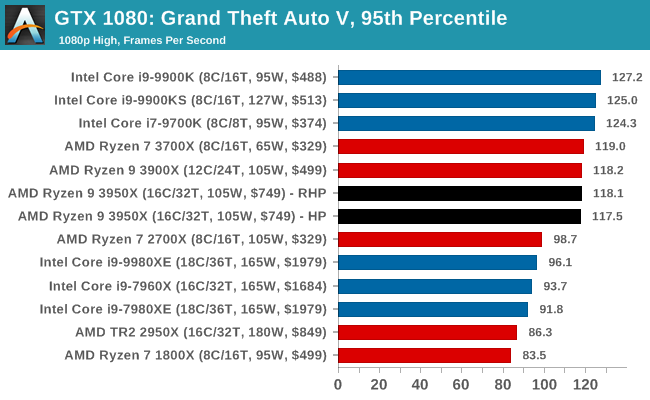

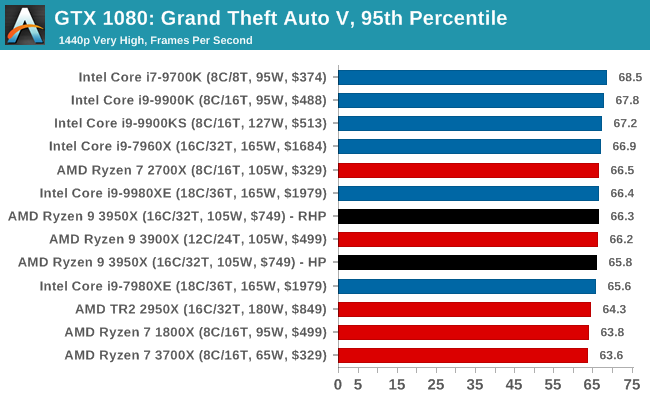

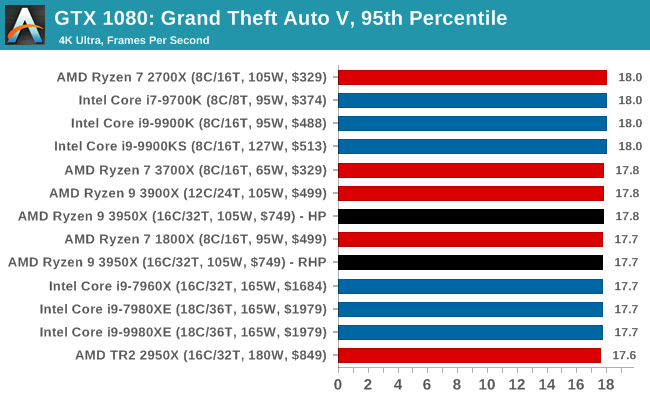

The highly anticipated iteration of the Grand Theft Auto franchise hit the shelves on April 14th 2015, with both AMD and NVIDIA in tow to help optimize the title. GTA doesn’t provide graphical presets, but opens up the options to users and pushes even the most powerful systems to the limit using Rockstar’s Advanced Game Engine under DirectX 11. Whether the user is flying high in the mountains with long draw distances or dealing with assorted trash in the city, the game cranked up to maximum creates stunning visuals but hard work for both the CPU and the GPU.

For our test we have scripted a version of the in-game benchmark. The in-game benchmark consists of five scenarios: four short panning shots with varying lighting and weather effects, and a fifth action sequence that lasts around 90 seconds. We use only the final part of the benchmark, which combines a flight scene in a jet with an inner city drive-by through several intersections, ending with ramming a tanker that explodes and causes other cars to explode as well. This is a mix of distance rendering followed by a detailed near-rendering action sequence, and the title thankfully spits out frame time data.
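To illustrate how frame time data of this kind can be turned into the two figures reported below (average FPS and 95th percentile), here is a minimal sketch. It is not the script used for this review: the function name `summarize_frame_times`, the assumption that frame times arrive as a list of milliseconds, and the nearest-rank percentile method are all illustrative choices.

```python
def summarize_frame_times(frame_times_ms):
    """Return (average_fps, p95_fps) for per-frame render times in milliseconds.

    Assumptions (not taken from the review): input is a plain list of frame
    times in ms, and the 95th percentile uses the nearest-rank method.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")

    n = len(frame_times_ms)

    # Average FPS is total frames divided by total elapsed time,
    # not the mean of the per-frame FPS values.
    average_fps = 1000.0 * n / sum(frame_times_ms)

    # Nearest-rank 95th percentile of frame time: the smallest frame time
    # that at least 95% of frames are at or below. Integer arithmetic
    # computes ceil(0.95 * n) without floating-point rounding surprises.
    ordered = sorted(frame_times_ms)
    rank = (95 * n + 99) // 100
    p95_frame_time_ms = ordered[rank - 1]

    # Express that slow-frame threshold as a frame rate.
    p95_fps = 1000.0 / p95_frame_time_ms
    return average_fps, p95_fps


if __name__ == "__main__":
    # Hypothetical run: mostly 10 ms frames with a couple of 20 ms stutters.
    sample = [10.0] * 18 + [20.0] * 2
    avg, p95 = summarize_frame_times(sample)
    print(f"Average FPS: {avg:.1f}, 95th percentile FPS: {p95:.1f}")
```

Dividing frame count by total time weights long frames correctly, while the percentile figure shows how low the frame rate drops during the slowest 5% of frames, which an average alone hides.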

There are no presets for the graphics options in GTA. Instead, the user adjusts options such as population density and distance scaling on sliders, and others such as texture/shadow/shader/water quality from Low to Very High. Other options include MSAA, soft shadows, post effects, shadow resolution and extended draw distance options. There is a handy option at the top which shows how much video memory the options are expected to consume, with obvious repercussions if a user requests more video memory than is present on the card (although there’s no obvious indication if you have a low end GPU with lots of GPU memory, like an R7 240 4GB).

All of our benchmark results can also be found in our benchmark engine, Bench.

| AnandTech | IGP | Low | Medium | High |
| --- | --- | --- | --- | --- |
| Average FPS | | | | |
| 95th Percentile | | | | |

206 Comments


eva02langley - Thursday, November 14, 2019 - link

Yeah, because the average Joe is owning a 2080 TI to play at 1080p...

blppt - Thursday, November 14, 2019 - link

Believe it or not, you need a 2080Ti to play 1080p at max settings smoothly in RDR2 at the moment. My oc'd 1080ti (FTW3) chokes on that game at 1080p/max settings.

itproflorida - Thursday, November 14, 2019 - link

Not.. 1440p 78 fps avg for RDR2 Benchmark and in game 72 fps avg maxed settings @ 1440p, 2080ti and 9700k@5Ghz.

blppt - Thursday, November 14, 2019 - link

The 2080ti and other 2xxx series cards do MUCH better in RDR2 than their equivalent 10-series cards. Look at the benchmarks---we have Vega 64s challenging 1080tis in this game. That should not happen.

https://www.guru3d.com/articles_pages/red_dead_red...

Ian Cutress - Thursday, November 14, 2019 - link

I have 2080 Ti units standing by, but my current benchmark run is with 1080s until I do a full benchmark reset. Probably Q1 next year, when I'm back at home for longer than 5 days. Supercomputing, Tech Summit, IEDM, and CES are in my next few weeks.

Dusk_Star - Thursday, November 14, 2019 - link

> In our Ryzen 7 3700X review, with the 12-core processor

Pretty sure the 3700X is 8 cores.

Lux88 - Thursday, November 14, 2019 - link

Not a single compilation benchmark...

Ian Cutress - Thursday, November 14, 2019 - link

Having issues getting the benchmark to work on Win 10 1909, didn't have time to debug. Hoping to fix it for the next benchmark suite update.

Lux88 - Thursday, November 14, 2019 - link

Thanks!

stux - Thursday, November 14, 2019 - link

Sad,

Desperately want to know if the 3950x will make a good developer workstation. 64GB of Ram and a fast nvme, or is it going to be memory bandwidth bottlenecked... and I’ll need to step up to TR3.