Intel's Xeon Cascade Lake vs. NVIDIA Turing: An Analysis in AI

by Johan De Gelas on July 29, 2019 8:30 AM EST

Testing Notes

As the market stands, it is clear that alongside AMD and ARM, NVIDIA's professional offerings are a real threat to Intel's dominance in the datacenter and beyond. So for our testing today, we're going to focus on machine learning, and see just how Intel's new DL Boosted wares fare against the competition in the ML space.

On the Intel side of matters, of course, we're looking at the company's new Cascade Lake Xeon Scalable CPUs. The company provided two of their 28 core models, with the 165 Watt Xeon Platinum 8176, as well as the even faster 205 Watt Xeon Platinum 8280.

As for Cascade Lake's GPU competition, we've tapped NVIDIA's latest "Turing" Titan RTX card. While these aren't truly datacenter cards, the fact that they're based on Turing means that they offer NVIDIA's very latest features. At the university that I work for, our deep learning researchers use these GPUs for training AI models, as the Titan cards are affordable and have a lot of GPU memory available.

As an added bonus, Titan RTX cards can be used for both training (hybrid FP32/FP16) and inference (FP16 and INT8). The current Tesla is still based on NVIDIA's Volta architecture, which does not have INT8 available for inference.

Finally, not to be excluded, we've also included AMD's first-generation EPYC platform in all of our testing. AMD doesn't have a hardware strategy quite like Intel – or specific instructions like VNNI – but as of late the company has offered all sorts of surprises.

Benchmark Configuration and Methodology

All of our testing was conducted on Ubuntu Server 18.04 LTS. You will notice that the DRAM capacity varies among our server configurations. This is of course a result of the fact that Xeons have access to six memory channels while EPYC CPUs have eight channels. As far as we know, all of our tests fit in 128 GB, so DRAM capacity should not have much influence on performance. But it will have an impact on total energy consumption, which we will discuss.
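The link between memory channels and installed capacity can be checked with a little arithmetic. The sketch below assumes one 32 GB DIMM per channel in the two dual-socket servers, which is inferred from the DIMM counts in the configuration listings rather than stated outright; the `MemoryConfig` class and its field names are purely illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MemoryConfig:
    """Hypothetical helper describing how a server's DIMM slots are populated."""
    sockets: int
    channels_per_socket: int
    dimms_per_channel: int  # assumption: one DIMM per channel
    dimm_gb: int

    @property
    def dimm_count(self) -> int:
        # Total number of DIMMs across all sockets and channels.
        return self.sockets * self.channels_per_socket * self.dimms_per_channel

    @property
    def capacity_gb(self) -> int:
        # Total installed DRAM in GB.
        return self.dimm_count * self.dimm_gb


# Six channels per Xeon socket, eight per EPYC socket (from the text above).
xeon = MemoryConfig(sockets=2, channels_per_socket=6, dimms_per_channel=1, dimm_gb=32)
epyc = MemoryConfig(sockets=2, channels_per_socket=8, dimms_per_channel=1, dimm_gb=32)

for name, cfg in (("Xeon", xeon), ("EPYC", epyc)):
    print(f"{name}: {cfg.dimm_count} x {cfg.dimm_gb} GB = {cfg.capacity_gb} GB")
```

Under these assumptions, populating every channel with the same DIMM size necessarily gives the EPYC system a third more DRAM than the Xeon system, which is why the capacities differ.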

Last but not least, we want to note how the performance graphs have been color-coded. Orange is AMD's EPYC, dark blue is Intel's best (Cascade Lake/Skylake-SP), and light blue is the previous generation Xeons (Xeon E5-v4). Gray has been used for the soon-to-be-replaced Xeon v1.

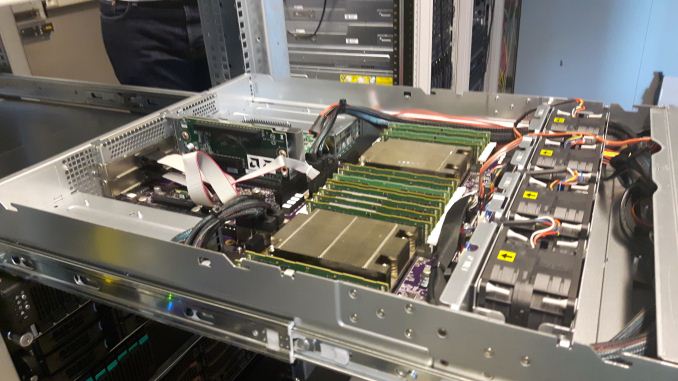

Intel's Xeon "Purley" Server – S2P2SY3Q (2U Chassis)

| Component | Specification |
| --- | --- |
| CPU | Two Intel Xeon Platinum 8280 (2.7 GHz, 28c, 38.5MB L3, 205W); Two Intel Xeon Platinum 8176 (2.1 GHz, 28c, 38.5MB L3, 165W) |
| RAM | 384 GB (12x32 GB) Hynix DDR4-2666 |
| Internal Disks | SAMSUNG MZ7LM240 (bootdisk); Intel SSD3710 800 GB (data) |
| Motherboard | Intel S2600WF (Wolf Pass baseboard) |
| Chipset | Intel Wellsburg B0 |
| PSU | 1100W PSU (80+ Platinum) |

We enabled hyper-threading and Intel virtualization acceleration.

Xeon - NVIDIA Titan RTX Workstation

With some diplomacy, our AI researcher Pieter Bovijn at MCT was kind enough to test his deep learning workstation. Below you can find the specs.

| Component | Specification |
| --- | --- |
| CPU | Intel Xeon Gold 6152 (2.1 GHz, 22c, 30.25MB L3, 140W) |
| RAM | 192 GB (6x32 GB) Samsung DDR4-2666 |
| Internal Disks | SAMSUNG MZ7LM240 (bootdisk); Intel SSD3710 800 GB (data) |
| Motherboard | Supermicro SYS-7049A-T (Intel C621 chipset) |
| GPU | PNY TITAN RTX 24 GB GDDR6 |
| PSU | PWS-865-PQ |

This is the only server in the test with a discrete GPU.

AMD EPYC 7601 (2U Chassis)

| Component | Specification |
| --- | --- |
| CPU | Two EPYC 7601 (2.2 GHz, 32c, 8x8MB L3, 180W) |
| RAM | 512 GB (16x32 GB) Samsung DDR4-2666 @2400 |
| Internal Disks | SAMSUNG MZ7LM240 (bootdisk); Intel SSD3710 800 GB (data) |
| Motherboard | AMD Speedway |
| PSU | 1100W PSU (80+ Platinum) |

Other Notes

Both servers are fed by a standard European 230V (16 Amps max.) power line. The room temperature is monitored and kept at 23°C by our Airwell CRACs.
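As a rough sanity check on the power budget, the sketch below compares the upper bound of such a line with the 1100W PSU rating listed for the 2U servers. It assumes a single-phase circuit and ignores power factor, breaker derating, and PSU efficiency, none of which are specified here; the constant names are illustrative.

```python
LINE_VOLTAGE_V = 230   # from the text
MAX_CURRENT_A = 16     # from the text
PSU_RATING_W = 1100    # rated PSU output listed for the 2U servers

# Simplified upper bound on what one line can deliver: P = V * I.
line_limit_w = LINE_VOLTAGE_V * MAX_CURRENT_A

# Headroom left if one server drew its full PSU rating (simplified).
headroom_w = line_limit_w - PSU_RATING_W

print(f"Line limit: {line_limit_w} W")
print(f"Headroom with one {PSU_RATING_W} W PSU at full load: {headroom_w} W")
```

Even under these simplifications, a single 1100W supply stays well below what the line can provide, so the power feed should not constrain the measurements.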

56 Comments


Drumsticks - Monday, July 29, 2019

It's an interesting, valuable take on the challenges of responding to many of the ML workloads of today with a general purpose CPU, thanks! A third party review of Intel's latest against Nvidia, and even throwing AMD in to the mix, is pretty helpful as the two companies have been going at it for a while now.

Intel has a lot of stuff going that should make the next few years quite interesting. If they manage to follow through on the Nervana Coprocessor/NNP-I that Toms talked about, or on their discrete GPUs, they'll have a potent lineup. The execution definitely isn't guaranteed, especially given the software reliance these products will have, but if Intel really can manage to transform their product stack, and do it in the next few years, they'll be well on their way to competing in a much larger market, and defending their current one.

OTOH, if they fail with all of them, it'll definitely be bad news for their future. They obviously won't go bankrupt (they'll continue to be larger than AMD for the foreseeable future), but it'll be exponentially harder if not impossible to get back into those markets they missed.

JohanAnandtech - Monday, July 29, 2019

Thanks! Indeed, Nervana coprocessors are indeed Intel's most promising technology in this area.

p1esk - Monday, July 29, 2019

No one in their right mind would think "gee, should I get CPU or GPU for my DL app?" More concerning for Intel should be the fact that I bought a Threadripper for my latest DL build.

Smell This - Monday, July 29, 2019

You gotta Radeon VII ?

I'm thinking Intel, and to a lesser extent, nVidia, is waiting for the next shoe(s) to drop in **Big Compute** --- Cascade Lake has been left at the starting gate.

An AMD Radeon Instinct 'cluster' on a dense specialized 'chiplet' server with hundreds of CPU cores/threads is where this train is headed ...

JohanAnandtech - Monday, July 29, 2019

Spinning up a GPU based instance on Amazon is much more expensive than a CPU one. So for development purposes, this question is asked.

p1esk - Tuesday, July 30, 2019

Then you should be answering precisely that question: which instance should I spin up? Your article does not help with that because the CPU you test is more expensive than the GPU.

JohnnyClueless - Monday, July 29, 2019

Really surprised Intel, and to a lesser extent AMD, are even trying to fight this battle with nVidia on these terms. It’s a lot like going to a gun fight and developing an extra sharp samurai sword rather than bringing the usual switchblade knife. The sword may be awesome, but it’s always going to be the wrong tool for the gun fight.

IMO, a better approach to capture market share in DL/AI/HPC might be to develop a low core count (by 2019 standards) CPU that excelled at sequential single threaded performance. Something like 6-10 GHz. That would provide a huge and tangible boost to any workload that is at least partially single core frequency limited, and that is most DL/AI/HPC workloads. Leave the parallel computing to chips and devices designed to excel at such workloads!

Eris_Floralia - Monday, July 29, 2019

Still living in early 2000s?

FunBunny2 - Monday, July 29, 2019

"Something like 6-10 GHz. "

IIRC, all the chip tried to get near that, but couldn't. it's not nice to fool Mother Nature.

Santoval - Monday, July 29, 2019

"Something like 6-10 GHz."

Google "Dennard scaling" (which ended in ~2005) to find out why this is impossible, at least with silicon based MOSFET transistors (including the GAA-FET based ones of the next decade). Wikipedia has a very informative page with multiple links to various sources for even more. The gist of the end of Dennard scaling is that single core clocks higher than ~5 GHz (at a reasonable TDP of up to ~100W) are explicitly forbidden at *any* node.

When Dennard scaling ended -in combination with the slowing down of Moore's Law- there was another, related consequence : Koomey's law started to slow down. Koomey's law is all about power efficiency, i.e. how many computations you can extract from each Wh or kWh.

Before the early 2000s the number of computations per x unit of energy doubled on average every 1.57 years. In 2011 Koomey himself re-evaluated his law and got an average doubling of computations every 2.6 years for the previous decade, a substantial collapse of power efficiency. Since 2011 Koomey's law has obviously slowed down further.

To make a long story short Moore's law puts a limit to the number of transistors we can fit in each mm^2, and that limit is not too far away. Dennard scaling once allowed us to raise clocks with each new node at the same TDP, and this is ancient history in computing terms. Koomey's law, finally, puts a limit to the power efficiency of our CPUs/GPUs, and this continues to slow down due to the slowing down of Moore's Law (when Moore's Law ends Koomey's law will also end, thus all three fundamental computing laws will be "dead").

Unless we ditch silicon (and even CMOS transistors, if required) and adopt a new computing paradigm we will have neither 6 - 10 GHz clocked CPUs in a couple of decades nor will we able to speed up CPUs, GPUs and computers at all.