The NVIDIA GeForce GTX 1650 Review, Feat. Zotac: Fighting Brute Force With Power Efficiency

by Ryan Smith & Nate Oh on May 3, 2019 10:15 AM EST

Battlefield 1 (DX11)

Battlefield 1 returns from the 2017 benchmark suite with a bang, as DICE brought gamers the long-awaited AAA World War 1 shooter a little over a year ago. With detailed maps, environmental effects, and pacy combat, Battlefield 1 provides a generally well-optimized yet demanding graphics workload. The next Battlefield game from DICE, Battlefield V, completes the nostalgia circuit with a return to World War 2 and, more importantly for us, is one of the flagship titles for GeForce RTX real-time ray tracing.

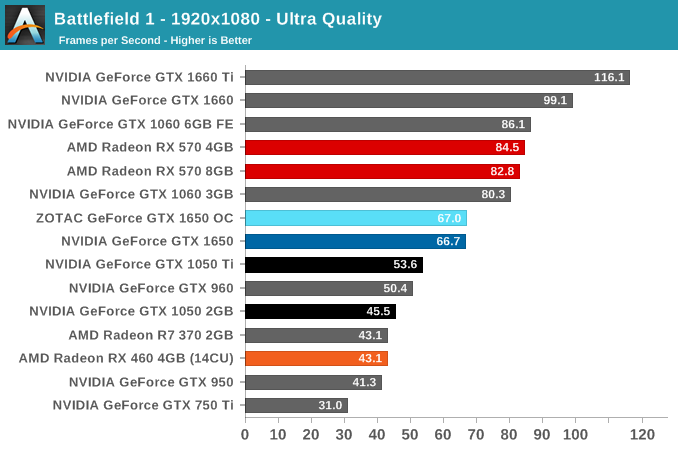

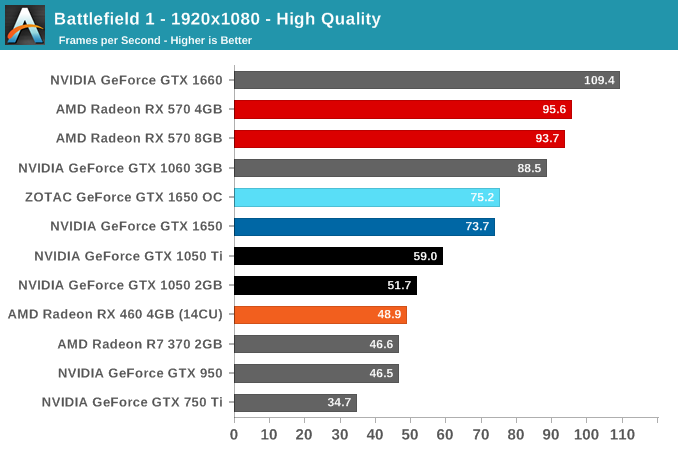

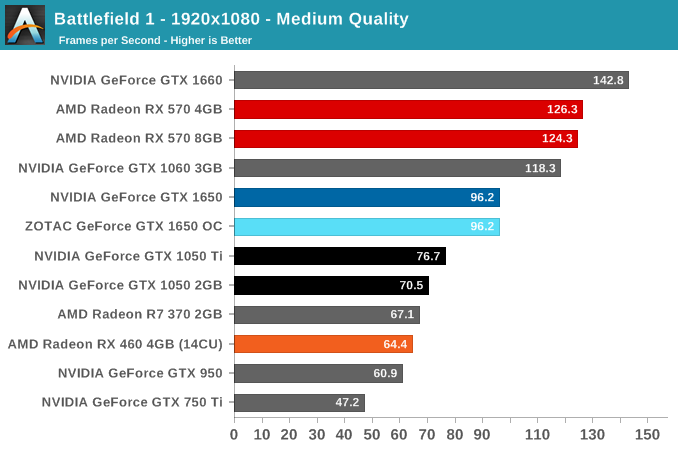

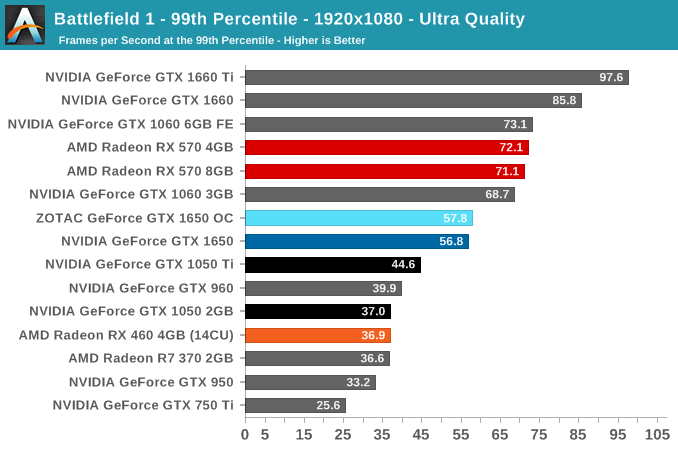

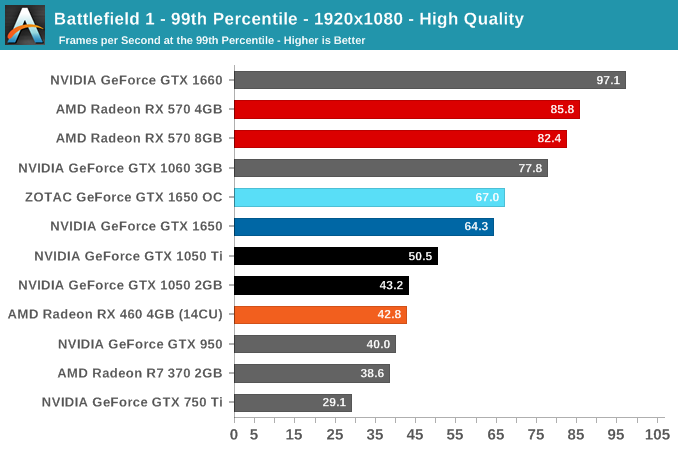

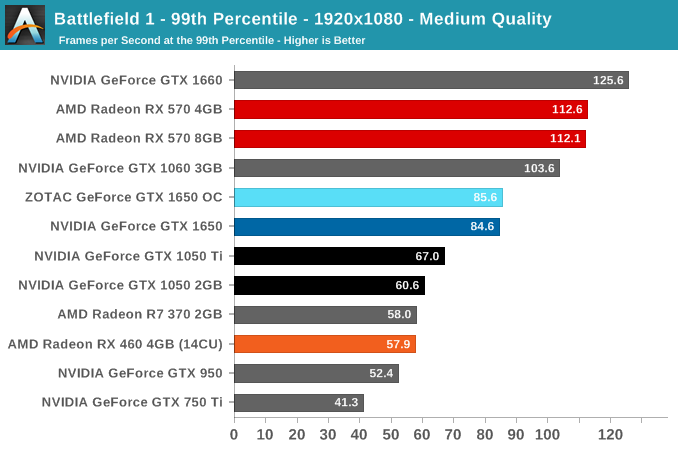

The Ultra, High, and Medium presets are used with no alterations. As these benchmarks are from single player mode, our rule of thumb with multiplayer performance still applies: multiplayer framerates generally dip to half our single player framerates. Battlefield 1 also supports HDR (HDR10, Dolby Vision).

Without a direct competitor in the current generation, the GTX 1650's intended position is somewhat vague, outside of iterating on Pascal's GTX 1050 variants. Looking back to Pascal's line-up, the GTX 1650 splits the difference between the GTX 1050 Ti and GTX 1060 3GB, and remains far from the GTX 1660.

Compared to the RX 570, though, the GTX 1650 is handily outpaced, and Battlefield 1 is where the GTX 1650 is the furthest behind. That being said, the RX 570 wasn't originally in this segment, with price being the common denominator. The RX 460, meanwhile, is well-outclassed, and the additional 2 CUs in the RX 560 would be unlikely to significantly narrow the gap.

As for the ZOTAC card, the 30 MHz is an unnoticeable difference in real-world terms.

126 Comments

PeachNCream - Tuesday, May 7, 2019 - link

Agreed with nevc on this one. When you start discussing higher end and higher cost components, consideration for power consumption comes off the proverbial table to a great extent because priority is naturally assigned moreso to performance than purchase price or electrical consumption and TCO.

eek2121 - Friday, May 3, 2019 - link

Disclaimer, not done reading the article yet, but I saw your comment.

Some people look for low wattage cards that don't require a power connector. These types of cards are particularly suited for MiniITX systems that may sit under the TV. The 750ti was super popular because of this. Having Turing's HEVC video encode/decode is really handy. You can put together a nice small MiniITX with something like the Node 202 and it will handle media duties much better than other solutions.

CptnPenguin - Friday, May 3, 2019 - link

That would be great if it actually had the Turing HVEC encoder - it does not; it retains the Volta encoder for cost saving or some other Nvidia-Alone-Knows reason. (source: Hardware Unboxed and Gamer's Nexus).

Anyone buying a 1650 and expecting to get the Turing video encoding hardware is in for a nasty surprise.

Oxford Guy - Saturday, May 4, 2019 - link

"That would be great if it actually had the Turing HVEC encoder - it does not; it retains the Volta encoder"

Yeah, lack of B support stinks.

JoeyJoJo123 - Friday, May 3, 2019 - link

Or if you're going with a miniITX low wattage system, you can cut out the 75w GPU and just go with a 65w AMD Ryzen 2400G since the integrated Vega GPU is perfectly suitable for an HTPC type system. It'll save you way more money with that logic.

0ldman79 - Sunday, May 19, 2019 - link

What they are going to do though is look at the fast GPU + PSU vs the slower GPU alone.

People with OEM boxes are going to buy one part at a time. Trust me on this, it's frustrating, but it's consistent.

Gich - Friday, May 3, 2019 - link

25$ a year? So 7cents a day?

7cents is more than 1kWh where I live.

Yojimbo - Friday, May 3, 2019 - link

The US average is a bit over 13 cents per kilowatt hour. But I made an error in the calculation and was way off. It's more like $15 over 2 years and not $50. Sorry.

DanNeely - Friday, May 3, 2019 - link

That's for an average of 2h/day gaming. Bump it up to a hard core 6h/day and you get around $50/2 years. Or 2h/day but somewhere with obnoxiously expensive electricity like Hawaii or Germany.

rhysiam - Saturday, May 4, 2019 - link

I'd just like to point out that if you've gamed for an average of 6h per day over 2 years with a 570 instead of a 1650, then you've also been enjoying 10% or so extra performance. That's more than 4000 hours of higher detail settings and/or frame rates. If people are trying to calculate the true "value" of a card, then I would argue that this extra performance over time counts too. Let's not forget the performance benefits!
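
The electricity-cost figures traded in the thread above can be sanity-checked with a few lines of arithmetic. The following is a minimal Python sketch, not anything the commenters posted. The function name `extra_electricity_cost` and the 80 W power gap between the two cards are illustrative assumptions: the wattage is simply a value that roughly reproduces the "$15 over 2 years" estimate and is not a measured number from the thread. The 13 cents/kWh price and the 2h/day and 6h/day usage levels are the ones the commenters cite.

```python
def extra_electricity_cost(extra_watts, hours_per_day, price_per_kwh, days):
    """Cost of drawing `extra_watts` more power for `hours_per_day` over `days` days."""
    extra_kwh = extra_watts / 1000 * hours_per_day * days  # W -> kW, then kWh
    return extra_kwh * price_per_kwh


# ASSUMPTION: an 80 W gap under load; hypothetical, chosen only for illustration.
ASSUMED_EXTRA_WATTS = 80
US_PRICE_PER_KWH = 0.13   # "a bit over 13 cents", per the thread
TWO_YEARS_DAYS = 2 * 365

casual = extra_electricity_cost(ASSUMED_EXTRA_WATTS, 2, US_PRICE_PER_KWH, TWO_YEARS_DAYS)
heavy = extra_electricity_cost(ASSUMED_EXTRA_WATTS, 6, US_PRICE_PER_KWH, TWO_YEARS_DAYS)
heavy_hours = 6 * TWO_YEARS_DAYS  # total gaming hours at 6h/day over two years

print(f"2h/day over 2 years: ${casual:.2f}")
print(f"6h/day over 2 years: ${heavy:.2f} across {heavy_hours} hours")
```

Under that assumed 80 W gap, the sketch gives about $15 for 2h/day and about $46 for 6h/day over two years, in line with the "$15" and "around $50" figures above, and 6h/day works out to 4,380 hours, matching the "more than 4000 hours" remark. A different power gap or local electricity price scales the result linearly.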