Upgrading from an Intel Core i7-2600K: Testing Sandy Bridge in 2019

by Ian Cutress on May 10, 2019 10:30 AM EST- Posted in

- CPUs

- Intel

- Sandy Bridge

- Overclocking

- 7700K

- Coffee Lake

- i7-2600K

- 9700K

Sandy Bridge: Inside the Core Microarchitecture

In the modern era, we are talking about chips roughly 100-200 mm² in size with up to eight high performance cores, built on the latest variants of Intel’s 14nm process, or on GlobalFoundries for AMD, with TSMC upcoming. Back with Sandy Bridge, 32nm was a different beast. The manufacturing process was still planar without FinFETs, implementing Intel’s second generation High-K Metal Gate, and achieving 0.7x scaling compared to the previous, larger 45nm process. The Core i7-2600K was the largest quad core die, at 216 mm² and 1.16 billion transistors; by comparison, the latest Coffee Lake processors on 14nm offer eight cores at ~170 mm² and over 2 billion transistors.

The big leap of the era was in the microarchitecture. Sandy Bridge promised (and delivered) a significant uplift in raw clock-for-clock performance over the previous generation Westmere processors, and forms the base schema for Intel’s latest chips almost a decade later. A number of key innovations were first made available at retail through Sandy Bridge, which have been built upon and iterated over many times to get to the high performance we have today.

Throughout this page, I have largely used Anand’s initial report into the microarchitecture back in 2010 as a base, with additions based on a modern look at this processor design.

A Quick Recap: A Basic Out-of-Order CPU Core

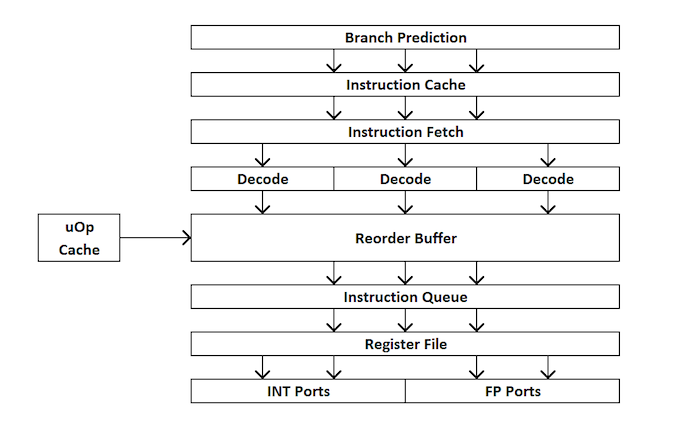

For those new to CPU design, here’s a quick run through of how an out-of-order CPU works. Broadly speaking, a core is divided into the front end and back end, and data first comes into the front end.

In the front end, we have the prefetchers and branch predictors that will predict and pull in instructions from the main memory. The idea here is that if you can predict what data and instructions are needed next before they are needed, then you can save time by having that data close to the core when needed. The instructions are then placed into a decoder, which transforms the byte code instruction into a number of ‘micro-operations’ that the core can then use. There are different types of decoders for simple and complex instructions – simple x86 instructions map easily to one micro-op, whereas more complex instructions can decode to more – the ideal situation is a decode ratio as low as possible, although sometimes instructions can be split into more micro-ops if they can be run in parallel together (instruction level parallelism, or ILP).

If the core has a ‘micro-operation cache’, or uOp cache, then the results from each decoded instruction end up there. The core can detect before an instruction is decoded if that particular instruction has been decoded recently, and use the result from the previous decode rather than doing a full decode, which wastes power.

The uOps are now in an allocation queue, which for modern cores usually means that the core can detect if the instructions are part of a simple loop, or whether it can fuse uOps together to make the whole thing go quicker. The uOps are then fed into the re-order buffer, which marks the start of the ‘back end’ of the core.

In the back end, starting with the re-order buffer, uOps can be rearranged depending on where the data each micro-op needs is. This buffer can rename and allocate uOps depending on where they need to go (integer vs FP), and depending on the core, it can also act as a retire station for complete instructions. After the re-order buffer, uOps are fed into the scheduler in a desired order to ensure data is ready and the uOp throughput is as high as possible.

The scheduler passes the uOps into the execution ports (which do the compute) as required. Some cores have a unified scheduler between all the ports, while others split the scheduler depending on integer operations or vector style operations. Most out-of-order cores can have anywhere from 4 to 10 ports (or more), and these execution ports will do the math required on the data given the instruction passed through the core. Execution ports can take the form of a load unit (load from cache), a store unit (store into cache), an integer math unit, a floating point math unit, vector math units, special division units, and a few others for special operations. Once the execution port has completed its work, the data can be held for reuse in a cache or be pushed to main memory, while the instruction feeds into the retire queue and is finally retired.

This brief overview doesn’t touch on some of the mechanisms that modern cores use to help caching and data look up, such as transaction buffers, stream buffers, tagging, etc., some of which get iterative improvements every generation. Usually when we talk about ‘instructions per clock’ as a measure of performance, we aim to get as many instructions as possible through the core (through the front end and back end) – this relies on the decode strength of the front end, the prefetchers, the reorder buffers, and maximising the execution port use, along with retiring as many completed instructions as possible every clock cycle.

With this in mind, hopefully it will give context to some of Anand’s analysis back when Sandy Bridge was launched.

Sandy Bridge: The Front End

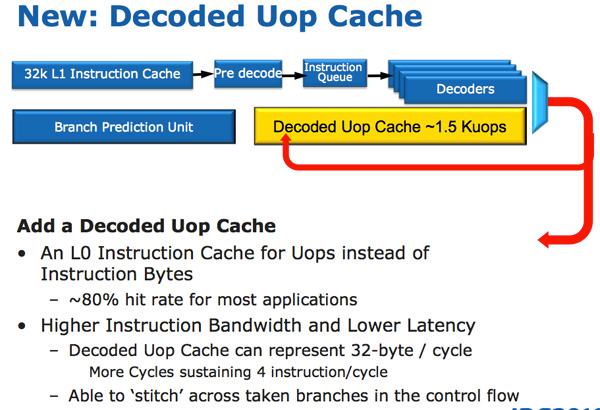

Sandy Bridge’s CPU architecture is evolutionary from a high level viewpoint but far more revolutionary in terms of the number of transistors that have been changed since Nehalem/Westmere. The biggest change for Sandy Bridge (and all microarchitectures since) is the micro-op cache (uOp cache).

In Sandy Bridge, there’s now a micro-op cache that caches instructions as they’re decoded. There’s no sophisticated algorithm here; the cache simply grabs instructions as they’re decoded. When SB’s fetch hardware grabs a new instruction, it first checks to see if the instruction is in the micro-op cache. If it is, then the cache services the rest of the pipeline and the front end is powered down. The decode hardware is a very complex part of the x86 pipeline, so turning it off saves a significant amount of power.

The cache is direct mapped and can store approximately 1.5K micro-ops, which is effectively the equivalent of a 6KB instruction cache. The micro-op cache is fully included in the L1 instruction cache and enjoys approximately an 80% hit rate for most applications. You get slightly higher and more consistent bandwidth from the micro-op cache vs. the instruction cache. The actual L1 instruction and data caches haven’t changed; they’re still 32KB each (for a total of 64KB L1).

All instructions that are fed out of the decoder can be cached by this engine and as I mentioned before, it’s a blind cache - all instructions are cached. Least recently used data is evicted as it runs out of space. This may sound a lot like Pentium 4’s trace cache but with one major difference: it doesn’t cache traces. It really looks like an instruction cache that stores micro-ops instead of macro-ops (x86 instructions).
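To make the "blind cache with least recently used eviction" idea concrete, here is a rough Python sketch. It is a toy model, not Intel's design: the class name `UopCache`, the `decode` callback and the `hit_rate` helper are all hypothetical, and the cache is treated as one fully associative LRU pool purely for simplicity.

```python
from collections import OrderedDict


class UopCache:
    """Toy micro-op cache: maps an instruction address to its decoded micro-ops.

    Assumption for illustration only: one fully associative pool with LRU
    eviction, sized in micro-ops. Real hardware organisation is not modelled.
    """

    def __init__(self, capacity_uops=1536, decode=None):
        self.capacity_uops = capacity_uops
        self.entries = OrderedDict()  # address -> tuple of micro-ops, oldest first
        self.used = 0
        self.hits = 0
        self.misses = 0
        # Hypothetical decoder: by default every instruction becomes one micro-op.
        self.decode = decode or (lambda addr: (f"uop@{addr:#x}",))

    def fetch(self, addr):
        """Return micro-ops for addr, decoding only when the cache misses."""
        if addr in self.entries:
            self.hits += 1
            self.entries.move_to_end(addr)  # mark as most recently used
            return self.entries[addr]

        self.misses += 1
        uops = tuple(self.decode(addr))  # the expensive, power-hungry path
        # Blind fill: everything that is decoded gets cached, evicting LRU entries.
        while self.entries and self.used + len(uops) > self.capacity_uops:
            _, evicted = self.entries.popitem(last=False)
            self.used -= len(evicted)
        if len(uops) <= self.capacity_uops:
            self.entries[addr] = uops
            self.used += len(uops)
        return uops


def hit_rate(cache, addresses):
    """Run an address stream through the cache and return the fraction of hits."""
    for addr in addresses:
        cache.fetch(addr)
    return cache.hits / (cache.hits + cache.misses)
```

In this model a four-instruction loop run ten times through a cache with room for eight micro-ops only decodes on the first pass, so the decoder is skipped for 36 of the 40 fetches. Shrink the capacity below the loop size and LRU eviction makes every fetch miss.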

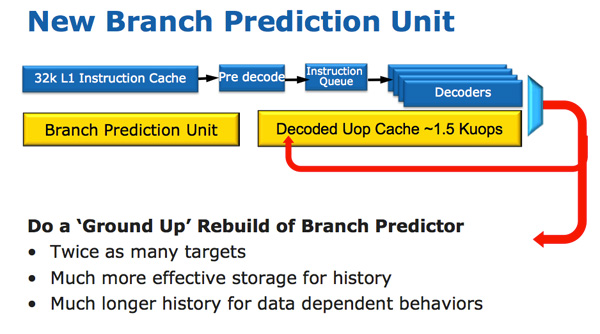

Along with the new micro-op cache, Intel also introduced a completely redesigned branch prediction unit. The new BPU is roughly the same footprint as its predecessor, but is much more accurate. The increase in accuracy is the result of three major innovations.

The standard branch predictor is a 2-bit predictor. Each branch is marked in a table as taken/not taken with an associated confidence (strong/weak). Intel found that nearly all of the branches predicted by this bimodal predictor have a strong confidence. In Sandy Bridge, the bimodal branch predictor uses a single confidence bit for multiple branches rather than using one confidence bit per branch. As a result, you have the same number of bits in your branch history table representing many more branches, which can lead to more accurate predictions in the future.
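For readers who have not seen one, the standard 2-bit predictor described above can be sketched in a few lines of Python. This models only the textbook scheme (one two-bit counter per table entry), not Sandy Bridge's shared confidence bit; the names `TwoBitPredictor` and `run`, and the table size of 1024, are assumptions made for the example.

```python
class TwoBitPredictor:
    """Textbook bimodal predictor: one 2-bit saturating counter per table entry."""

    # Counter states: the top half predicts taken, the extremes are "strong".
    STRONG_NOT_TAKEN, WEAK_NOT_TAKEN, WEAK_TAKEN, STRONG_TAKEN = range(4)

    def __init__(self, table_size=1024):
        self.table_size = table_size
        self.table = [self.WEAK_NOT_TAKEN] * table_size

    def _index(self, branch_addr):
        # Hypothetical indexing: low bits of the branch address.
        return branch_addr % self.table_size

    def predict(self, branch_addr):
        """Return True if the branch is predicted taken."""
        return self.table[self._index(branch_addr)] >= self.WEAK_TAKEN

    def update(self, branch_addr, taken):
        """Move the counter one step towards the actual outcome, saturating."""
        i = self._index(branch_addr)
        if taken:
            self.table[i] = min(self.table[i] + 1, self.STRONG_TAKEN)
        else:
            self.table[i] = max(self.table[i] - 1, self.STRONG_NOT_TAKEN)


def run(predictor, branch_addr, outcomes):
    """Feed a sequence of real outcomes through the predictor, count correct guesses."""
    correct = 0
    for taken in outcomes:
        if predictor.predict(branch_addr) == taken:
            correct += 1
        predictor.update(branch_addr, taken)
    return correct
```

Feeding it a loop branch that is taken nine times and then falls through shows why most entries sit in a strong state: after warm-up the counter only mispredicts the loop exit, and a single exit is not enough to flip the next prediction.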

Branch targets also got an efficiency makeover. In previous architectures there was a single size for branch targets, however it turns out that most targets are relatively close. Rather than storing all branch targets in large structures capable of addressing far away targets, SNB now includes support for multiple branch target sizes. With smaller target sizes there’s less wasted space and now the CPU can keep track of more targets, improving prediction speed.

Finally we have the conventional method of increasing the accuracy of a branch predictor: using more history bits. Unfortunately this only works well for certain types of branches that require looking at long patterns of instructions, and not well for shorter more common branches (e.g. loops, if/else). Sandy Bridge’s BPU partitions branches into those that need a short vs. long history for accurate prediction.

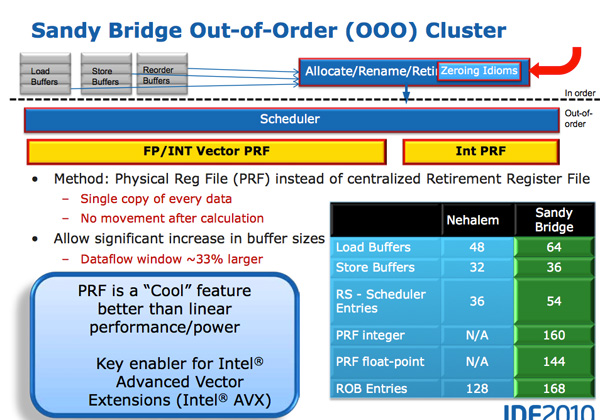

A Physical Register File

Compared to Westmere, Sandy Bridge moves to a physical register file. In Core 2 and Nehalem, every micro-op had a copy of every operand that it needed. This meant the out-of-order execution hardware (scheduler/reorder buffer/associated queues) had to be much larger as it needed to accommodate the micro-ops as well as their associated data. Back in the Core Duo days that was 80-bits of data. When Intel implemented SSE, the burden grew to 128-bits. With AVX however we now have potentially 256-bit operands associated with each instruction, and the amount that the scheduling/reordering hardware would have to grow to support the AVX execution hardware Intel wanted to enable was too much.

A physical register file stores micro-op operands in the register file; as the micro-op travels down the OoO engine it only carries pointers to its operands and not the data itself. This significantly reduces the power of the out of order execution hardware (moving large amounts of data around a chip eats tons of power), and it also reduces die area further down the pipe. The die savings are translated into a larger out of order window.
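A quick back-of-the-envelope calculation shows why carrying pointers instead of data matters. The sketch below is illustrative only: the two-source-operand micro-op and the 144-entry register file are assumptions picked for the example, and the function names are hypothetical.

```python
def payload_bits_with_data(operand_bits, operands_per_uop=2):
    """Bits of operand storage each in-flight micro-op carries when it holds copies."""
    return operand_bits * operands_per_uop


def payload_bits_with_pointers(prf_entries, operands_per_uop=2):
    """Bits each in-flight micro-op carries when it only points into a register file."""
    pointer_bits = (prf_entries - 1).bit_length()  # enough bits to name any entry
    return pointer_bits * operands_per_uop


# Operand widths quoted in the text: x87 (80-bit), SSE (128-bit), AVX (256-bit).
for name, width in (("x87", 80), ("SSE", 128), ("AVX", 256)):
    copied = payload_bits_with_data(width)
    pointed = payload_bits_with_pointers(prf_entries=144)  # assumed size
    print(f"{name}: {copied} bits copied vs {pointed} bits of pointers per micro-op")
```

Under these assumptions the pointer cost stays flat at 16 bits per micro-op while the copied-operand cost grows from 160 to 512 bits, which is the scaling problem the physical register file avoids.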

The die area savings are key as they enable one of Sandy Bridge’s major innovations: AVX performance.

AVX

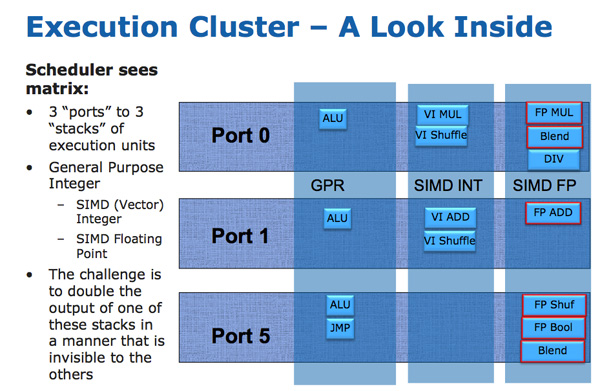

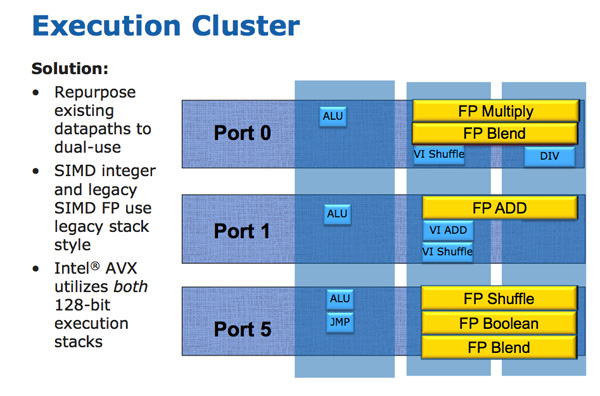

The AVX instructions support 256-bit operands, which as you can guess can eat up quite a bit of die area. The move to a physical register file enabled Intel to increase the OoO buffers to properly feed a higher throughput floating point engine. Intel clearly believes in AVX as it extended all of its SIMD units to 256 bits wide. The extension is done at minimal die expense. Nehalem has three execution ports and three stacks of execution units.

Sandy Bridge allows 256-bit AVX instructions to borrow 128 bits of the integer SIMD datapath. This minimizes the impact of AVX on the execution die area while enabling twice the FP throughput: you get two 256-bit AVX operations per clock (+ one 256-bit AVX load).
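The "twice the FP throughput" figure falls straight out of the vector width. Here is a small Python sketch of the arithmetic, assuming single precision (32-bit) elements and assuming the 128-bit baseline also issues two FP operations per clock; the function name is hypothetical.

```python
def fp_elements_per_clock(vector_bits, ops_per_clock, element_bits=32):
    """Peak floating point elements processed per clock for a given vector width."""
    lanes = vector_bits // element_bits
    return lanes * ops_per_clock


# Assumed baseline: two 128-bit SSE operations per clock.
sse = fp_elements_per_clock(vector_bits=128, ops_per_clock=2)  # 8
# From the text: two 256-bit AVX operations per clock.
avx = fp_elements_per_clock(vector_bits=256, ops_per_clock=2)  # 16

print(f"SSE: {sse} elements/clock, AVX: {avx} elements/clock, ratio {avx / sse:.1f}x")
```

These are peak numbers only; real code gets there only if the loads and the out-of-order window can keep both operations fed every clock.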

Granted you can’t mix 256-bit AVX and 128-bit integer SSE ops, however remember SNB now has larger buffers to help extract more instruction level parallelism (ILP).

Load and Store

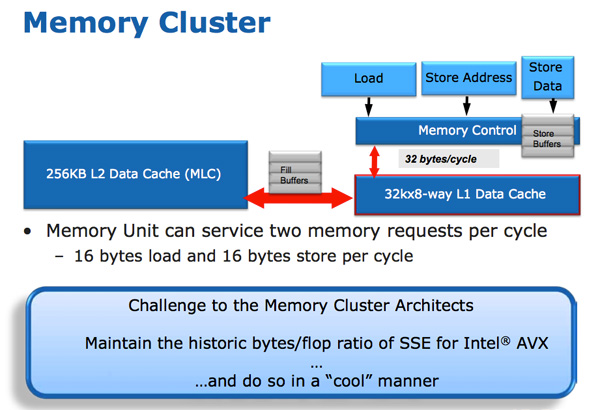

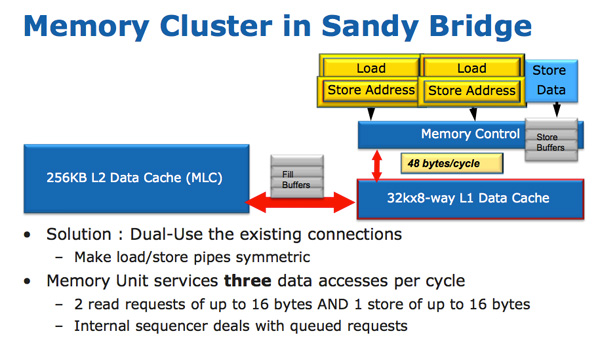

The improvements to Sandy Bridge’s FP performance increase the demands on the load/store units. In Nehalem/Westmere you had three LS ports: load, store address and store data.

In SNB, the load and store address ports are now symmetric so each port can service a load or store address. This doubles the load bandwidth compared to Westmere, which is important as Intel doubled the peak floating point performance in Sandy Bridge.
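The port change can be pictured as a capability table. The Python sketch below encodes only what is described above (three load/store ports, two of which became symmetric in Sandy Bridge); the data layout and function name are hypothetical and port numbering is deliberately left out.

```python
# Each port is described by the set of operations it can service in one clock.
NEHALEM_LS_PORTS = [
    {"load"},
    {"store_address"},
    {"store_data"},
]

SANDY_BRIDGE_LS_PORTS = [
    {"load", "store_address"},  # symmetric port
    {"load", "store_address"},  # symmetric port
    {"store_data"},
]


def max_per_clock(ports, operation):
    """Upper bound on how many of one operation type can issue in a single clock."""
    return sum(1 for capabilities in ports if operation in capabilities)


for name, ports in (("Nehalem/Westmere", NEHALEM_LS_PORTS),
                    ("Sandy Bridge", SANDY_BRIDGE_LS_PORTS)):
    print(f"{name}: up to {max_per_clock(ports, 'load')} load(s) per clock")
```

The table also shows the trade-off: the two symmetric ports are shared, so a clock that issues two loads has no port left over for a store address.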

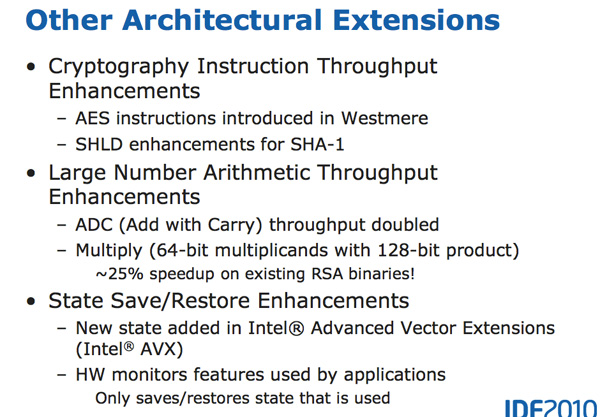

There are some integer execution improvements in Sandy Bridge, although they are more limited. Add with carry (ADC) instruction throughput is doubled, while large scale multiplies (64 * 64) see a ~25% speedup.

213 Comments

Midwayman - Monday, May 13, 2019 - link

I think the biggest thing I noticed moving to an 8700k from a 2600k was the same thing I noticed moving from a core 2 duo to a 2600k. Less weird pauses. The 2600k would get weird hitches in games. System processes would pop up and tank the frame rate for an instant, or just an explosion would trigger a physics event that would make it stutter. I see that a lot less with a couple extra cores and some performance overhead.

tmanini - Monday, May 13, 2019 - link

I agree, the user experience is definitely improved in those ways. Granted, many of us think our time is a bit more important than it likely really is. (does waiting 3 seconds really ruin my day?)

ochadd - Monday, May 13, 2019 - link

Enjoyed the article very much.

Magnus101 - Monday, May 13, 2019 - link

You get about 3X performance when going from an overclocked 2600k@4.5GHz to an 8700k@4.5GHz when working in DAWs (Digital Audio Workstations), i.e. running dozens and dozens of virtual instruments and plugins when making music.

The thing is that it is a combination of applications that:

1. Use all the SSE/AVX or whatever streaming extensions make parallel floating point calculations go much faster. DAW is all about floating point calculations.

2. Are extremely real-time dependent to get ultra low latency (milliseconds in single digits).

This makes even the 7700K about double in performance in some scenarios when compared to an equally clocked 2600k.

mikato - Monday, May 13, 2019 - link

"and Intel’s final quad-core with HyperThreading chip for desktop, the 7700K"

"the Core i7-7700K, Intel’s final quad-core with HyperThreading processor"

Did I miss some big news?

mapesdhs - Monday, May 13, 2019 - link

"... the best chips managed 5.0 GHz or 5.1 GHz in a daily system."

Worth noting that with the refined 2700K, *all* of them run fine at 5GHz in a daily system, sensible temps, a TRUE and one fan is plenty for cooling. Threaded performance is identical to a stock 6700K, IPC is identical to a stock 2700X (880 and 177 for CB R15 Nt/1t resp.)

Also, various P67/Z68 mbds support NVMe boot via modded BIOS files. The ROG forum has a selection for ASUS, search for "ASUS bolts4breakfast"; he's added support for the M4E and M4EZ, and I think others asked the same for the Pro Gen3, etc. I'm sure there are equivalent BIOS mod threads for Gigabyte, MSI, etc. My 5GHz 2700K on an M4E has a 1TB SM961 and a 1TB 970 EVO Plus (photo/video archive), though the C-drive is still a venerable Vector 256GB which holds up well even today.

Also, RAM support runs fine with 2133 CL9 on the M4E, which is pretty good (16GB GSkill TridentX, two modules).

However, after using this for a great many years, I do find myself wanting better performance for processing images & video, so I'll likely be stepping up to a Ryzen 3000 system, at least 8 cores.

mapesdhs - Monday, May 13, 2019 - link

Forgot to mention, something else interesting about SB is the low cost of the sibling SB-E. Would be a laugh to see how all those tests pan out with a 3930K stock/oc'd thrown into the mix. It's a pity good X79 boards are hard to find now given how cheap one can get 3930Ks for these days. If stock performance is ok though, there are some cheap Chinese boards which work pretty well, and some of them do support NVMe boot.

tezcan - Monday, May 13, 2019 - link

I am still running 3930k, prices for it are still very high ~$500. Not much cheaper than what I paid for it in 2011. I am yet to really test my GTX 680's in SLI. Kind of a waste, but they are driving many displays throughout my house. There was an article where some Australian bloke runs an 8 core Sandy Bridge-E (server chip) vs all modern Intel 8 core chips. It actually had the lowest latency so was best for pro gamers, lagged a little behind on everything else, but definitely good enough.

dad_at - Tuesday, May 14, 2019 - link

I run 3960X at ~ 4 GHz on X79 ASUS P9X79 and have nvme boot drive with modified BIOS. So it is really interesting to compare 2011/2012 6c/12t to 8700K or 9900K. I guess it's about 7700K stock, so modern 4c/8t is like old 6c/12t. Per core perf is about 20-30% up on average and this includes higher frequency ... So IPC is only about 15% up: not impressive. Of course in some loads like AVX2 heavy apps IPC could be 50% up, but such cases are not common.

martixy - Monday, May 13, 2019 - link

Oh man... I just upgraded my 2600K to a 9900K and a couple days later this article drops...

The timing is impeccable!

If I ever had a shred of buyer's remorse, the article conclusion eradicated it thoroughly. Give me more FPS.

I saw a screenshot of StarCraft 2. On a mission which I, again, coincidentally (this is uncanny) played today. I can now report that the 9900K can FINALLY feed my graphics card in SC2 properly. With the 2600K I'd be around 20-60 FPS depending on load and intensity of the action. With the new processors, it barely ever drops below 60 and usually hovers around 90FPS. Ingame cinematics also finally run above the "cinematic" 30 FPS I saw on my trusty old 2600K.