Upgrading from an Intel Core i7-2600K: Testing Sandy Bridge in 2019

by Ian Cutress on May 10, 2019 10:30 AM EST

Posted in: CPUs, Intel, Sandy Bridge, Overclocking, 7700K, Coffee Lake, i7-2600K, 9700K

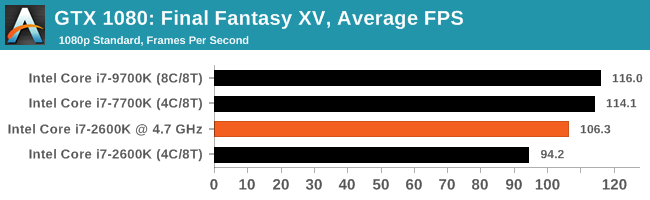

Gaming: Final Fantasy XV

When it arrived on PC in March 2018, Final Fantasy XV: Windows Edition was given a graphical overhaul as it was ported over from console, the fruit of Square Enix's successful partnership with NVIDIA, with hardly any hint of the troubles during Final Fantasy XV's original production and development.

In preparation for the launch, Square Enix opted to release a standalone benchmark that they have since updated. Using the Final Fantasy XV standalone benchmark gives us a lengthy standardized sequence to record, although it should be noted that its heavy use of NVIDIA technology means that the Maximum setting has problems - it renders items off screen. To get around this, we use the standard preset which does not have these issues.

Square Enix has patched the benchmark with custom graphics settings and bugfixes so that it profiles in-game performance and graphical options much more accurately. For our testing, we run the standard benchmark with a FRAPS overlay, taking a six-minute recording of the test.
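The results for this test are reported as Average FPS and 95th Percentile figures. As a rough sketch of how such figures can be derived from a recording, the Python below takes a list of per-frame durations in milliseconds and computes both. The function name `summarize_frame_times`, the input format, and the nearest-rank percentile definition are assumptions made for illustration; the article does not describe the exact calculation used.

```python
def summarize_frame_times(frame_times_ms):
    """Return (average_fps, percentile_95_fps) for per-frame durations in ms.

    Assumption: the 95th percentile figure is the frame rate implied by the
    95th percentile frame time, so 95% of frames were at least this fast.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")

    # Average FPS: total frames divided by total elapsed seconds.
    total_seconds = sum(frame_times_ms) / 1000.0
    average_fps = len(frame_times_ms) / total_seconds

    # Nearest-rank 95th percentile of frame time, using integer
    # arithmetic for ceil(0.95 * n) to avoid floating-point rounding.
    ordered = sorted(frame_times_ms)
    rank = (95 * len(ordered) + 99) // 100
    slow_frame_ms = ordered[rank - 1]
    percentile_95_fps = 1000.0 / slow_frame_ms

    return average_fps, percentile_95_fps


if __name__ == "__main__":
    # Hypothetical data: 94 frames at 10 ms and 6 slow frames at 20 ms.
    sample = [10.0] * 94 + [20.0] * 6
    avg, p95 = summarize_frame_times(sample)
    print(f"Average FPS: {avg:.1f}, 95th percentile FPS: {p95:.1f}")
```

Averaging over total elapsed time, rather than averaging per-frame FPS values, keeps a handful of very fast frames from inflating the result.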

AnandTech CPU Gaming 2019 Game List

| Game | Genre | Release Date | API | IGP | Low | Med | High |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Final Fantasy XV | JRPG | Mar 2018 | DX11 | 720p Standard | 1080p Standard | 4K Standard | 8K Standard |

All of our benchmark results can also be found in our benchmark engine, Bench.

Results are reported as Average FPS and 95th Percentile at each of the IGP, Low, Medium, and High settings.

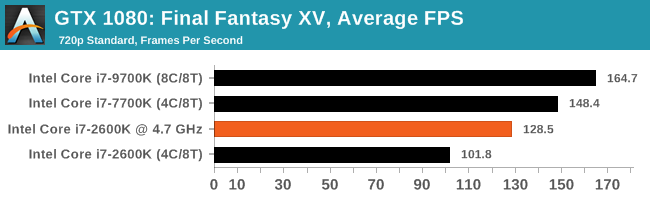

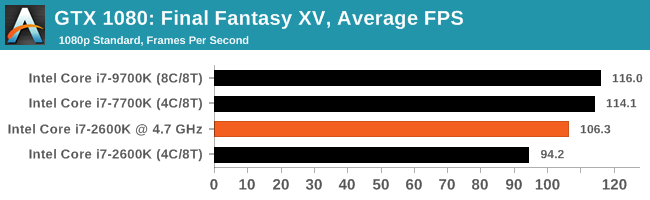

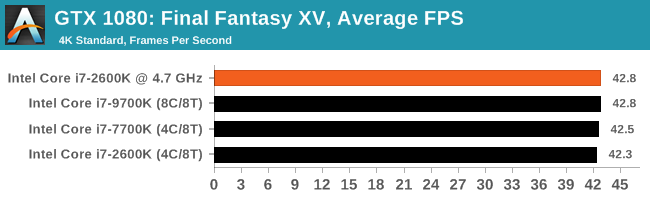

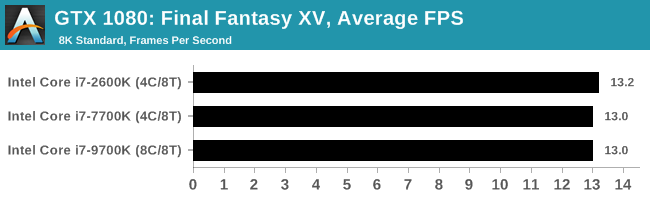

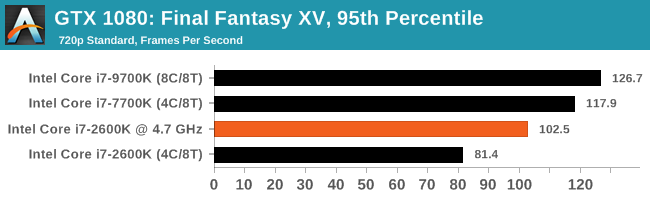

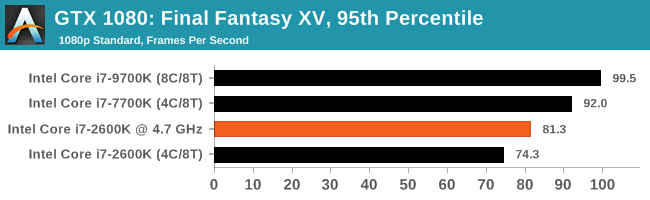

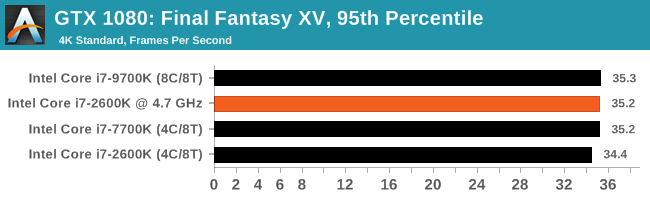

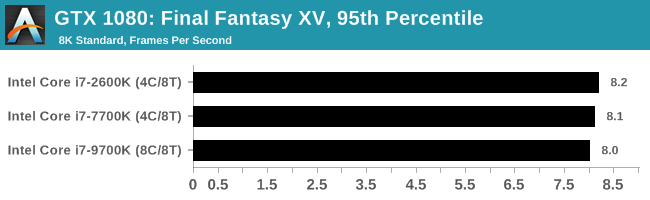

For Final Fantasy, all chips performed essentially the same from 4K upwards (the OC run failed at 8K for some reason), but at 1080p the overclocked chip still sits comfortably between the stock 2600K and the stock 7700K, roughly in the middle.

213 Comments


kgardas - Friday, May 10, 2019

Indeed, it's sad that it took ~8 years to have double performance kind of while in '90 we get that every 2-3 years. And look at the office tests, we're not there yet and we will probably never ever be as single-thread perf. increases are basically dead. Chromium compile suggests that it makes a sense to update at all -- for developers, but for office users it's nonsense if you consider just the CPU itself.

chekk - Friday, May 10, 2019

Thanks for the article, Ian. I like your summation: impressive and depressing.

I'll be waiting to see what Zen 2 offers before upgrading my 2500K.

AshlayW - Friday, May 10, 2019

Such great innovation and progress and cost-effectiveness advances from Intel between 2011 and 2017. /s

Yes AMD didn't do much here either, but it wasn't for lack of trying. Intel deliberately stagnated the market to bleed consumers from every single cent, and then Ryzen turns up and you get the 6 and now 8 core mainstream CPUs.

Would have liked to see 2600K versus Ryzen honestly. Ryzen 1st gen is around Ivy/Haswell performance per core in most games and second gen is haswell/broadwell. But as many games get more threaded, Ryzen's advantage will ever increase.

I owned a 2600K and it was the last product from Intel that I ever owned that I truly felt was worth its price. Even now I just can't justify spending £350-400 quid on a hexa core or octa with HT disabled when the competition has unlocked 16 threads for less money.

29a - Friday, May 10, 2019

"Yes AMD didn't do much here either"

I really don't understand that statement at all.

thesavvymage - Friday, May 10, 2019

Theyre saying AMD didnt do much to push the price/performance envelope between 2011 and 2017. Which they didnt, since their architecture until Zen was terrible.

eva02langley - Friday, May 10, 2019

Yeah, you are right... it is AMD fault and not Intel who wanted to make a dime on your back selling you quadcore for life.

wilsonkf - Friday, May 10, 2019

Would be more interesting to add 8150/8350 to the benchmark. I run my 8350 at 4.7Ghz for five years. It's a great room heater.

MDD1963 - Saturday, May 11, 2019

I don't think AMD would have sold as many of the 8350s and 9590s as they did had people known that i3's and i5's outperformed them in pretty much all games, and, at lower clock speeds, no less. Many people probably bought the FX8350 because it 'sounded faster' at 4.7 GHz than did the 2600K at 'only' 3.8 GHz' , or so I speculate, anyway... (sort of like the Florida Broward county votes in 2000!)

Targon - Tuesday, May 14, 2019

Not everyone looks at games as the primary use of a computer. The AMD FX chips were not great when it came to IPC, in the same way that the Pentium 4 was terrible from an IPC basis. Still, the 8350 was a lot faster than the Phenom 2 processors, that's for sure.

artk2219 - Wednesday, May 15, 2019

I got my FX 8320 because I preferred threads over single core performance. I was much more likely to notice a lack of computing resources and multi tasking ability vs how long something took to open or run. The funny part is that even though people shit all over them, they were, and honestly still are valid chips for certain use cases. They'll still game, they can be small cheap vhosts, nas servers, you name it. The biggest problem recently is finding a decent AM3+ board to put them in.