The NVIDIA GeForce GTX 1660 Ti Review, Feat. EVGA XC GAMING: Turing Sheds RTX for the Mainstream Market

by Ryan Smith & Nate Oh on February 22, 2019 9:00 AM EST

Power, Temperature, and Noise

As always, we'll take a look at power, temperature, and noise of the GTX 1660 Ti, though as a pure custom launch we aren't expecting anything out of the ordinary. As mentioned earlier, the XC Black board has already revealed itself in its RTX 2060 guise.

As this is a new GPU, we will quickly review the GeForce GTX 1660 Ti's stock voltages and clockspeeds as well.

NVIDIA GeForce Video Card Voltages

| Model | Boost | Idle |
|---|---|---|
| GeForce GTX 1660 Ti | 1.037V | 0.656V |
| GeForce RTX 2060 | 1.025V | 0.725V |
| GeForce GTX 1060 6GB | 1.043V | 0.625V |

The voltages are naturally similar to those of the 16nm GTX 1060, and compared to pre-FinFET generations they are much lower, a consequence of the FinFET process. We went over this in detail in our GTX 1080 and 1070 Founders Edition review. As we said then, 16nm FinFET requires these low voltages where previous planar nodes did not, which can be limiting in scenarios that call for a lot of power and voltage, i.e. high clockspeeds and overclocking. For Turing (along with Volta, Xavier, and NVSwitch), NVIDIA moved from 16nm to TSMC's 12nm "FFN" process, and caps the voltage at 1.063V.

GeForce Video Card Average Clockspeeds

| Game | GTX 1660 Ti | EVGA GTX 1660 Ti XC | RTX 2060 | GTX 1060 6GB |
|---|---|---|---|---|
| Max Boost Clock | 2160MHz | 2160MHz | 2160MHz | 1898MHz |
| Boost Clock | 1770MHz | 1770MHz | 1680MHz | 1708MHz |
| Battlefield 1 | 1888MHz | 1901MHz | 1877MHz | 1855MHz |
| Far Cry 5 | 1903MHz | 1912MHz | 1878MHz | 1855MHz |
| Ashes: Escalation | 1871MHz | 1880MHz | 1848MHz | 1837MHz |
| Wolfenstein II | 1825MHz | 1861MHz | 1796MHz | 1835MHz |
| Final Fantasy XV | 1855MHz | 1882MHz | 1843MHz | 1850MHz |
| GTA V | 1901MHz | 1903MHz | 1898MHz | 1872MHz |
| Shadow of War | 1860MHz | 1880MHz | 1832MHz | 1861MHz |
| F1 2018 | 1877MHz | 1884MHz | 1866MHz | 1865MHz |
| Total War: Warhammer II | 1908MHz | 1911MHz | 1879MHz | 1875MHz |
| FurMark | 1594MHz | 1655MHz | 1565MHz | 1626MHz |

Looking at clockspeeds, a few things are clear. The most obvious is that the very similar results of the reference-clocked GTX 1660 Ti and the EVGA GTX 1660 Ti XC are reflected in their virtually identical clockspeeds. As is typically the case in our testing, the GeForce cards boost higher than their advertised boost clocks. All told, NVIDIA's formal estimates still run a bit low, especially in our properly ventilated testing chassis, so we won't complain about the extra performance.

On that note, it's interesting that while the GTX 1660 Ti should have a roughly 60MHz average boost advantage over the GTX 1060 6GB going by the official specs, in practice the two cards end up within half that span of each other. This hints that NVIDIA's official average boost clock is more accurately grounded for the GTX 1660 Ti than it was for the GTX 1060.
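Both observations can be checked directly against the average clockspeed table. The sketch below is a simple worked calculation, not part of our testing methodology: it averages each card's nine in-game clocks (FurMark is deliberately left out, since it is a power virus rather than a game), compares that average to the rated boost clock, and then compares the spec-sheet gap between the GTX 1660 Ti and GTX 1060 6GB to the measured one. The names `GAME_CLOCKS`, `RATED_BOOST`, and `boost_margin` are purely illustrative.

```python
# Per-game average clocks (MHz) from the clockspeed table, in the order
# BF1, Far Cry 5, Ashes, Wolfenstein II, FFXV, GTA V, Shadow of War,
# F1 2018, Total War: Warhammer II. FurMark is left out on purpose.
GAME_CLOCKS = {
    "GTX 1660 Ti": [1888, 1903, 1871, 1825, 1855, 1901, 1860, 1877, 1908],
    "EVGA GTX 1660 Ti XC": [1901, 1912, 1880, 1861, 1882, 1903, 1880, 1884, 1911],
    "RTX 2060": [1877, 1878, 1848, 1796, 1843, 1898, 1832, 1866, 1879],
    "GTX 1060 6GB": [1855, 1855, 1837, 1835, 1850, 1872, 1861, 1865, 1875],
}
# Official (rated) boost clocks in MHz, from the "Boost Clock" row.
RATED_BOOST = {
    "GTX 1660 Ti": 1770,
    "EVGA GTX 1660 Ti XC": 1770,
    "RTX 2060": 1680,
    "GTX 1060 6GB": 1708,
}


def mean(xs):
    return sum(xs) / len(xs)


def boost_margin(card):
    """Return (mean observed clock, MHz above rated boost, % above rated boost)."""
    avg = mean(GAME_CLOCKS[card])
    rated = RATED_BOOST[card]
    return avg, avg - rated, 100 * (avg - rated) / rated


for card in GAME_CLOCKS:
    avg, delta, pct = boost_margin(card)
    print(f"{card}: {avg:.0f}MHz average, {delta:+.0f}MHz ({pct:+.1f}%) vs. rated boost")

# Spec-sheet gap vs. measured gap between the GTX 1660 Ti and GTX 1060 6GB.
rated_gap = RATED_BOOST["GTX 1660 Ti"] - RATED_BOOST["GTX 1060 6GB"]
observed_gap = mean(GAME_CLOCKS["GTX 1660 Ti"]) - mean(GAME_CLOCKS["GTX 1060 6GB"])
print(f"Rated gap: {rated_gap}MHz, observed gap: {observed_gap:.1f}MHz "
      f"({observed_gap / rated_gap:.0%} of the rated gap)")
```

With these numbers every card averages above its rated boost clock, and the measured GTX 1660 Ti lead over the GTX 1060 6GB works out to roughly 20MHz, about a third of the 62MHz spec-sheet gap. A straight arithmetic mean weights every game equally, which is a simplification; it is only meant to summarize the table.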

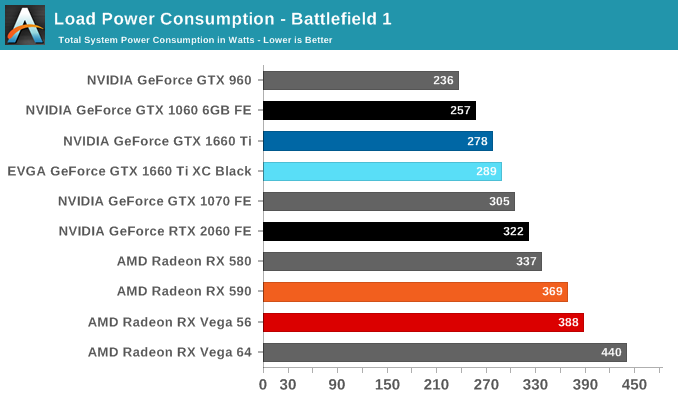

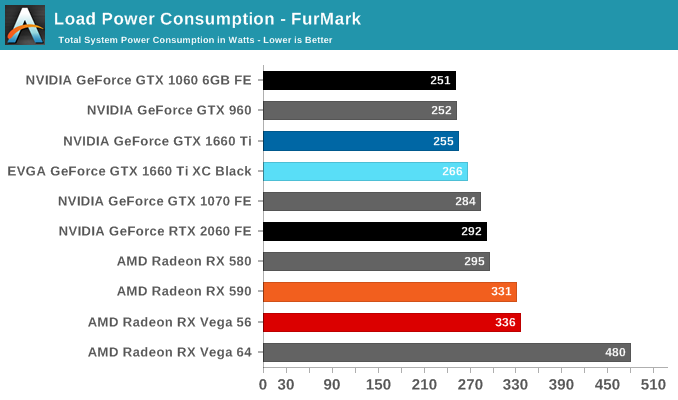

Power Consumption

Even though NVIDIA's prices for its xx60 cards have drifted up over the years, the same cannot be said for their power consumption. NVIDIA has set the reference spec for the card at 120W, and relative to their other cards this is exactly what we see. Under FurMark, our favorite pathological workload that's guaranteed to push a video card to its maximum TDP, the GTX 960, GTX 1060, and GTX 1660 Ti are all within 4 watts of each other, exactly what we'd expect from a trio of 120W cards. Only in Battlefield 1 do these cards pull apart in terms of total system load, and this is due to the greater CPU workload from the higher framerates the GTX 1660 Ti delivers, rather than a difference at the card level itself.

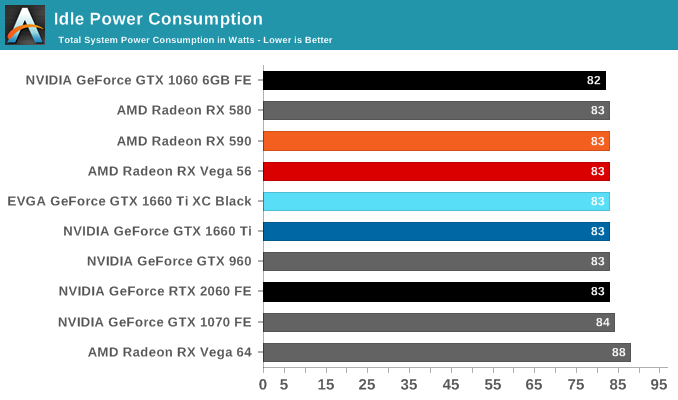

Meanwhile, when it comes to idle power consumption, the GTX 1660 Ti falls in line with everything else at 83W for the whole system. With contemporary desktop cards, idle power has reached the point where nothing short of low-level testing can expose what the cards themselves are drawing.

As for the EVGA card in its natural state, we see it draw almost exactly 10W more. I'm actually a bit surprised to see this under Battlefield 1 as well, since the framerate difference between it and the reference-clocked card is barely 1%. But as higher clockspeeds get increasingly expensive in terms of power consumption, it's not far-fetched to see a small power difference translate into an even smaller performance difference.
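The reason higher clockspeeds get disproportionately expensive can be sketched with the standard first-order model of CMOS dynamic power, where α is the activity factor, C the switched capacitance, V the supply voltage, and f the clock frequency. This is a textbook approximation rather than anything we measured on these cards, and the 1% figures in the worked example are assumptions chosen for illustration (we did not record the EVGA card's voltage):

```latex
P_{\mathrm{dyn}} \approx \alpha C V^{2} f
\quad\Longrightarrow\quad
\frac{P'_{\mathrm{dyn}}}{P_{\mathrm{dyn}}}
  = \left(\frac{V'}{V}\right)^{2} \frac{f'}{f}
  = (1.01)^{2}\,(1.01) \approx 1.030
```

In other words, if a 1% higher clock also needs about 1% more voltage, dynamic power rises by roughly 3% while performance grows by at most about 1%. Leakage power, which also climbs with voltage, only widens that gap.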

All told, NVIDIA has very good and very consistent power control here, and it remains one of their key advantages over AMD, as well as a key strength in keeping their OEM customers happy.

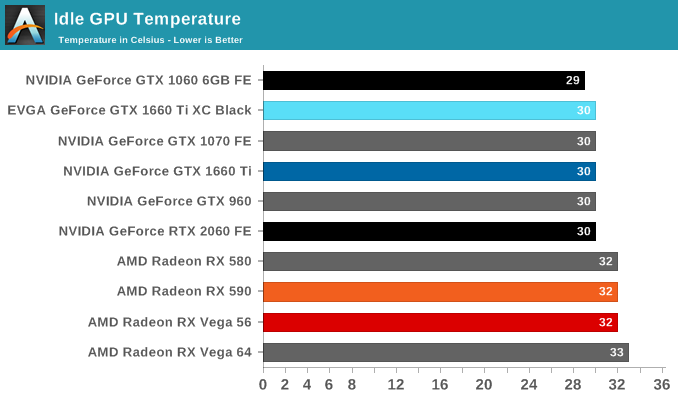

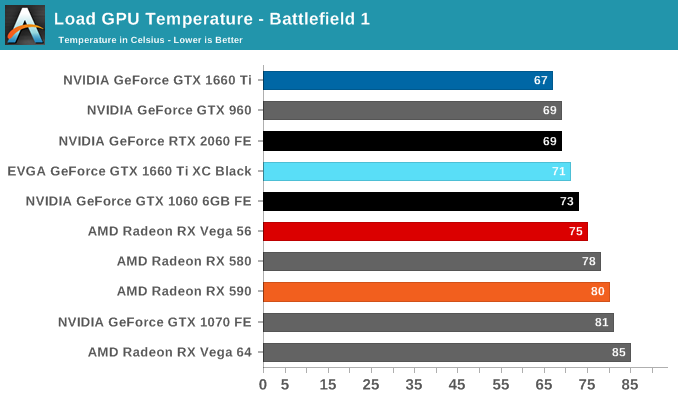

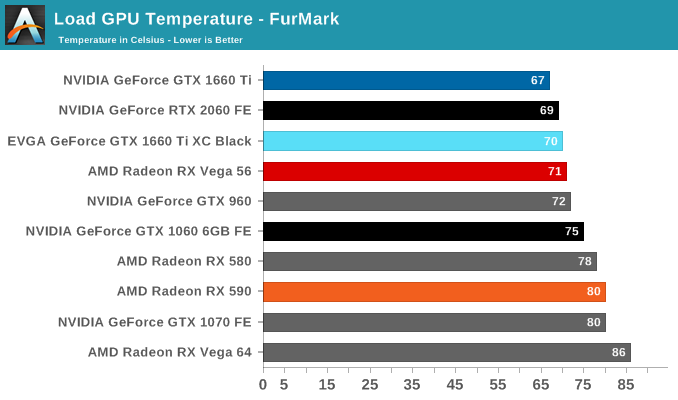

Temperature

Looking at temperatures, there are no big surprises here. EVGA seems to have tuned their card for high-performance cooling, and as a result the large, 2.75-slot card reports some of the lowest numbers in our charts, including 67C under FurMark when the card is capped at the reference GTX 1660 Ti's 120W limit.

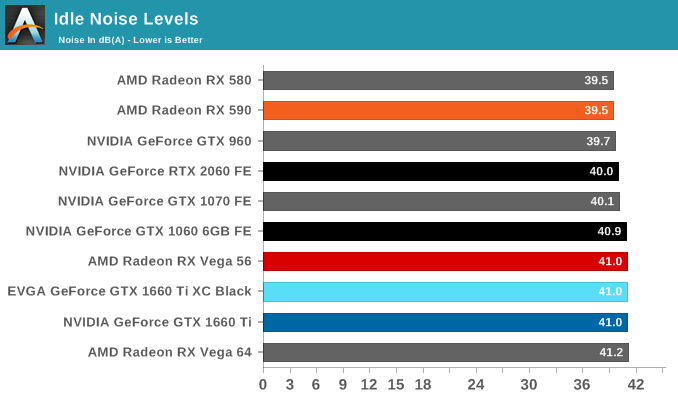

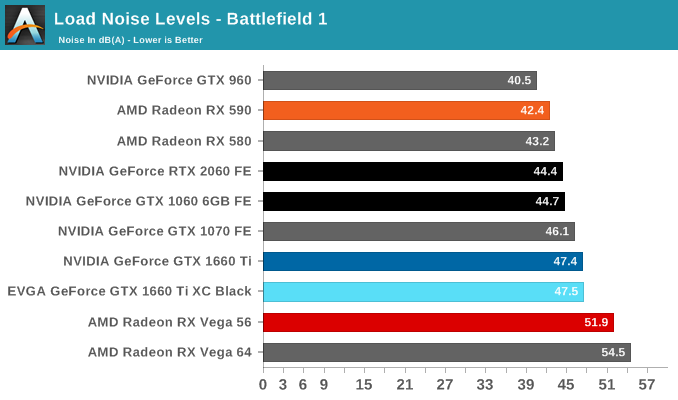

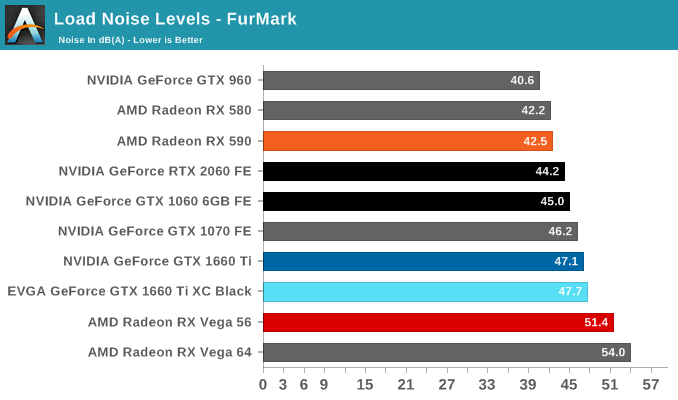

Noise

Turning again to EVGA's card, despite being a custom open-air design, the GTX 1660 Ti XC Black doesn't come with 0dB idle capabilities, and it features a single, smaller but higher-RPM fan. The default fan curve puts the minimum at 33%, which indicates that EVGA has tuned the card for cooling over acoustics. That's not an unreasonable tradeoff to make, but it's something I'd consider more appropriate for a factory-overclocked card. For their reference-clocked XC card, EVGA could very well have gone with a less aggressive fan curve and still easily maintained sub-80C temperatures while reducing noise levels.
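To make the 33% floor concrete, here is a minimal sketch of a fan curve expressed as temperature/duty points with linear interpolation between them, a common way such curves are defined. Only the 33% minimum comes from our testing; every other point in `AGGRESSIVE_CURVE`, and the whole of `QUIETER_CURVE`, is hypothetical and is not EVGA's actual curve.

```python
# Hypothetical fan curves as (GPU temperature in C, fan duty in %) points,
# sorted by temperature. Only the 33% floor of the first curve comes from
# the review; all other points are invented for illustration.
AGGRESSIVE_CURVE = [(30, 33), (60, 50), (70, 70), (80, 100)]
QUIETER_CURVE = [(30, 20), (65, 35), (75, 60), (85, 100)]


def fan_duty(curve, temp_c):
    """Linearly interpolate fan duty (%) at temp_c, clamping below the first
    point and above the last one."""
    if temp_c <= curve[0][0]:
        return float(curve[0][1])
    for (t0, d0), (t1, d1) in zip(curve, curve[1:]):
        if temp_c <= t1:
            return d0 + (d1 - d0) * (temp_c - t0) / (t1 - t0)
    return float(curve[-1][1])


for temp in (35, 45, 60, 75):
    print(f"{temp}C: aggressive {fan_duty(AGGRESSIVE_CURVE, temp):.1f}%, "
          f"quieter {fan_duty(QUIETER_CURVE, temp):.1f}%")
```

With these made-up points, at 45C the aggressive curve already asks for 41.5% duty versus about 26% for the quieter one. Because the floor clamps everything below the first point, a 33% minimum means the fan never spins slower than that, even at idle.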

157 Comments


GreenReaper - Friday, February 22, 2019

Your point is a lie, though, as you clearly didn't buy it on his recommendation. How can we believe anything you say after that?

Questor - Wednesday, March 6, 2019

Not criticizing, simply adding: several times in the past, honest review sites compared electricity costs in several places around the States and a few other countries for brand A's video card at a lower power draw versus brand B's video card. The idea was to calculate a reasonable overall cost for the extra power draw and whether it was worth worrying about, or worth specifically buying the lower-draw card. In each case the additional power cost in dollars (or whatever currency) was negligible. A lot of these great sites have died out or been bought out and are gone now. It's a darned shame. We used to get actually useful information about products and what all these values mean to the user/customer/consumer. We used to see the same for power supplies too. I haven't seen anything like that in years now. Too bad. It proved how little a lot of the numbers mattered in real life to real bill-paying consumers.

Icehawk - Friday, February 22, 2019

Man this sucks, clearly this card isn't enough for 4K and I'm not willing to spend on an RTX 2070. Can I hope for a GTX 1170 at like $399? 8GB of RAM please. I'm not buying a new card until it's $400 or less and has 8GB+. My 970 runs 1440p maxed or close to it in almost all AAA games and even 4K in some (like Overwatch), so I'm not going for a small improvement; after 2 gens I should be looking at close to double the performance, but it sure doesn't look like that's happening currently.

eva02langley - Friday, February 22, 2019

Navi is your only hope.

CiccioB - Friday, February 22, 2019

And I think he will be even more disappointed if he's looking for a 4K card that is able to play **modern** games. BTW: no 1170 will be made. This card is the top Turing without RT+TC, so it's the best performance you can get at the lowest price. Other Turing cards without RT+TC will be slower (though probably cheaper, but you are not looking for just a cheap card, you are looking for 2x the performance of your current one).

catavalon21 - Sunday, February 24, 2019

I am curious, what are you basing "no 1170" on?

CiccioB - Monday, February 25, 2019

Huh, let's see...designing a new chip costs a lot of money, especially when it is not that tiny.

A chip bigger than this TU116 would be just faster than the 2060, whose 445mm² die has to be sold with some margin (unlike AMD, which sells Vega GPU+HBM at the price of bread slices and at the end of the quarter reports earnings that are a fraction of NVIDIA's; but that's good for AMD fans, it is good that the company loses money to make them happy with oversized hardware that performs like the competition's mainstream parts).

So creating a 1170 simply means killing the 2060 (and probably the 2070), defeating the original purpose of these cards as the first lower-end (possibly mainstream) hardware capable of RT.

Unless you suppose NVIDIA is going to completely scrap its idea that RT is the future and that its support will be expanded in future generations, there's no valid, rational reason for them to create a new GPU that would replace the cut-down version of TU106.

All this without considering that AMD is probably not going to compete on 7nm: with that process they will probably manage to reach Pascal performance, while at 7nm NVIDIA is going to blow any AMD solution away in terms of absolute performance, performance per watt, and performance per mm² (despite the addition of new computational units that will find more and more use in the future; no one has yet thought of using tensor cores for advanced AI, for example).

So, no, there will be no 1170 unless it is a further cut of TU106 that in the end performs just like TU116 and is merely a recycling of broken silicon.

Now, let me hear what makes you believe that a 1170 will be created.

catavalon21 - Tuesday, February 26, 2019

I do not know if they will create an 1170 or not; to be fair, I am surprised they even created the 1160. You have a very good point: upon reflection, it is quite likely such a product would impact RTX sales. I was just curious what had you thinking that way. Thank you for the response.

Oxford Guy - Saturday, February 23, 2019

Our only hope is capitalism. That's not going to happen, though.

Instead, we get duopoly/quasi-monopoly.

douglashowitzer - Friday, February 22, 2019

Hey not sure if you're opposed to used GPUs... but you can get a used, overclocked, 3rd party GTX 1080 with 8GB vram on eBay for about $365-$400. In my opinion it's an amazing deal and I can tell you from experience that it would satisfy the performance jump that you're looking for. It's actually the exact situation I was in back in June of 2016 when I upgraded my 970 to a 1080. Being a proper geek, I maintained a spreadsheet of my benchmark performance improvements and the LOWEST improvement was an 80% gain. The highest was a 122% gain in Rise of the Tomb Raider (likely VRAM related but impressive nonetheless). Honestly I don't believe I've ever experienced a performance improvement that felt so "game changing" as when I went from my 970 to the 1080. Maybe waaay back when I upgraded my AMD 6950 to a GTX 670 :). If "used" doesn't turn you off, the upgrade of your dreams is waiting for you. Good luck to you!