The NVIDIA GeForce GTX 1660 Ti Review, Feat. EVGA XC GAMING: Turing Sheds RTX for the Mainstream Market

by Ryan Smith & Nate Oh on February 22, 2019 9:00 AM EST

Final Words

We’re now four GPUs into the NVIDIA Turing architecture product stack, and while NVIDIA’s latest processor has pitched us a bit of a curve ball in terms of feature support, by and large NVIDIA is holding to a pretty consistent pattern with regards to product performance, positioning, and pricing. Which is to say that the company has a very specific product stack in mind for this generation, and thus far they’ve been delivering on it with the kind of clockwork efficiency that NVIDIA has come to be known for.

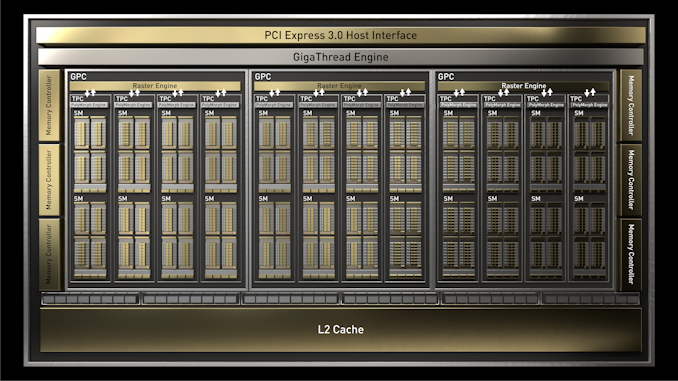

With the launch of the GeForce GTX 1660 Ti and the TU116 GPU underpinning it, we’re finally seeing NVIDIA shift gears a bit in how they’re building their cards. Whereas the four RTX 20 series cards are all loosely collected under the umbrella of “premium features for a premium price”, the GTX 1660 Ti goes in the other direction, dropping NVIDIA’s shiny RTX suite of effects for a product that is leaner and cheaper to produce. As a result, the new card offers a bigger improvement on a price/performance basis (in current games) than any of the other Turing cards, and with a sub-$300 price tag, is likely to be more warmly received than the other cards.

Looking at the numbers, the GeForce GTX 1660 Ti delivers around 37% more performance than the GTX 1060 6GB at 1440p, and a very similar 36% gain at 1080p. So consistent with the other Turing cards, this is not quite a major generational leap in performance; and to be fair to NVIDIA they aren’t really claiming otherwise. Instead, NVIDIA is mostly looking to sell this card to current GTX 960 and R9 380 users; people who skipped the Pascal generation and are still on 28nm parts. In which case, the GTX 1660 Ti offers well over 2x the performance of these cards, with performance frequently ending up neck-and-neck with what was the GTX 1070.

Meanwhile, taking a look at power efficiency, it’s interesting to note that for the GTX 1660 Ti NVIDIA has been able to hold the line on power consumption: performance has gone up versus the GTX 1060 6GB, but card power consumption hasn’t. Thanks to this, the GTX 1660 Ti is not just 36% faster, it’s 36% more efficient as well. The other Turing cards have seen their own efficiency gains, but with their TDPs all drifting up, this is the largest (and purest) efficiency gain we’ve seen to date, and probably the best metric thus far for evaluating Turing’s power efficiency against Pascal’s.
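As a rough illustration of the arithmetic behind that efficiency figure, here is a minimal Python sketch. It assumes card power is exactly flat versus the GTX 1060 6GB (a power ratio of 1.00), which is a simplification of the measured results; the function name and the 10% power increase in the second case are purely hypothetical and only there to show why a rising TDP dilutes the gain.

```python
def efficiency_gain_pct(perf_ratio: float, power_ratio: float) -> float:
    """Percentage change in performance per watt relative to the older card."""
    return (perf_ratio / power_ratio - 1) * 100

# GTX 1660 Ti vs GTX 1060 6GB: 36% faster, power assumed flat (ratio 1.00).
flat_power = efficiency_gain_pct(1.36, 1.00)

# Hypothetical case, not a measured result: the same speedup with 10% more power.
higher_power = efficiency_gain_pct(1.36, 1.10)

print(f"Flat power:      {flat_power:+.0f}% perf-per-watt")
print(f"10% more power:  {higher_power:+.0f}% perf-per-watt")
```

With power held constant, the efficiency gain equals the performance gain; any increase in power consumption eats directly into it.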

The end result of these improvements in performance and power efficiency is that NVIDIA has once again put together a very solid Turing-based video card. And while its performance gains don’t make the likes of the GTX 1060 6GB and Radeon RX 590 obsolete overnight, it’s a clear case of out with the old and in with the new for the mainstream video card market. The GTX 1060 is well on its way out, and meanwhile AMD is going to have to significantly reposition the $279 RX 590. The GTX 1660 Ti cleanly beats it in performance and power efficiency, delivering 25% better performance for a bit over half the power consumption.

If anything, having cleared its immediate competitors with superior technology, the only real challenge NVIDIA will face is convincing consumers to pay $279 for an xx60 class card, one which performs like a $379 card from two years ago. In this respect the GTX 1660 Ti is a much better value proposition than the RTX 2060 above it, but it’s also more expensive than the GTX 1060 6GB it replaces, so it runs the risk of drifting out of the mainstream market entirely. Thankfully pricing here is a lot more grounded than the RTX 20 series cards, but the mainstream market is admittedly more price sensitive to begin with.

This also means that AMD remains a wildcard factor; they have the option of playing the value spoiler with cheap RX 590 cards, and I’m curious to see how serious they really are about bringing the RX Vega 56 in to compete with NVIDIA’s newest card. Our testing shows that RX Vega 56 is still around 5% faster on average, so AMD could still play a new version of the RX 590 gambit (fight on performance and price, damn the power consumption).

Perhaps the most surprising part about any of this is that despite the fact that the GTX 1660 Ti very notably omits NVIDIA’s RTX functionality, I’m not convinced RTX alone is going to sway any buyers one way or another. Since the RTX 2060 is both a faster and more expensive card, I quickly tabulated the performance and price increases for all of the Turing cards launched thus far.

| GeForce: Turing versus Pascal | List Price (Turing) | Relative Performance | Relative Price | Relative Perf-Per-Dollar |
|---|---|---|---|---|
| RTX 2080 Ti vs GTX 1080 Ti | $999 | +32% | +42% | -7% |
| RTX 2080 vs GTX 1080 | $699 | +35% | +40% | -4% |
| RTX 2070 vs GTX 1070 | $499 | +35% | +32% | +2% |
| RTX 2060 vs GTX 1060 6GB | $349 | +59% | +40% | +14% |
| GTX 1660 Ti vs GTX 1060 6GB | $279 | +36% | +12% | +21% |
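The perf-per-dollar figures follow directly from the performance and price columns: divide the performance ratio by the price ratio. The short Python sketch below reproduces that calculation using the percentages from the table; the function and variable names are illustrative only, and the results are rounded to whole percentages.

```python
def perf_per_dollar_gain(perf_gain_pct: float, price_gain_pct: float) -> float:
    """Percentage change in performance per dollar versus the predecessor."""
    perf_ratio = 1 + perf_gain_pct / 100
    price_ratio = 1 + price_gain_pct / 100
    return (perf_ratio / price_ratio - 1) * 100

# (relative performance gain %, relative price gain %), taken from the table.
comparisons = {
    "RTX 2080 Ti vs GTX 1080 Ti": (32, 42),
    "RTX 2080 vs GTX 1080": (35, 40),
    "RTX 2070 vs GTX 1070": (35, 32),
    "RTX 2060 vs GTX 1060 6GB": (59, 40),
    "GTX 1660 Ti vs GTX 1060 6GB": (36, 12),
}

for name, (perf, price) in comparisons.items():
    print(f"{name}: {perf_per_dollar_gain(perf, price):+.0f}%")
```

A card only improves on value when its performance gain outpaces its price increase, which is why the two top-end cards come out negative despite being more than 30% faster.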

The long and short of matters is that with the cheapest RTX card costing an additional $80, there’s a much stronger rationale to act based on pricing than feature sets. In fact considering just how amazingly consistent the performance gains are on a generation-by-generation basis, there’s ample evidence that NVIDIA has always planned it this way. Earlier I mentioned that NVIDIA acts with clockwork efficiency, and with nearly every Turing card improving over its predecessor by roughly 35% (save the RTX 2060 with no direct predecessor), it’s amazing just how consistent NVIDIA’s product positioning is here. If the next GTX 16 series card isn’t also 35% faster than its predecessor, then I’m going to be amazed.

In any case, this makes a potentially complex situation for card buyers pretty simple: buy the card you can afford – or at least, the card with the performance you’re after – and don’t worry about whether it’s RTX or GTX. And while it’s unfortunate that NVIDIA didn’t include their RTX functionality top-to-bottom in the Turing family, there’s also a good argument to be had that the high-performance cost means that it wouldn’t make sense on a mainstream card anyhow. At least, not for this generation.

Last, but not least, we have the matter of EVGA’s GeForce GTX 1660 Ti XC Black GAMING. As this is a launch without reference cards, we’re going to see NVIDIA’s board partners hit the ground running with their custom cards. And in true EVGA tradition, their XC Black GAMING is a solid example of what to expect for a $279 baseline GTX 1660 Ti card.

Since this isn’t a factory overclocked card, I’m a bit surprised that EVGA bothered to ship it with an increased 130W TDP. But I’m also glad they did, as the fact that it only improves performance by around 1% versus the same card at 120W is a very clear indicator that the GTX 1660 Ti is not meaningfully TDP limited. Overclocking will be another matter of course, but at stock this means that NVIDIA hasn’t had to significantly clamp down on power consumption to hit their power targets.

As for EVGA’s card design, I have to admit a triple-slot cooler is an odd choice for a 130W card – a standard double-wide card would have been more than sufficient for that kind of TDP – but in a market that’s going to be full of single and dual fan cards it definitely stands out from the crowd; and quite literally so, in the case of NVIDIA’s own promotional photos. Meanwhile I’m not sure there’s much to be said about EVGA’s software that we haven’t said a dozen times before: EVGA Precision remains some of the best overclocking software on the market. And with such a beefy cooler on this card, it’s certainly begging to be overclocked.

157 Comments

Psycho_McCrazy - Tuesday, February 26, 2019

Given that 21:9 monitors are also making great inroads into the gamer's purchase lists, can benchmark resolutions also include 2560.1080p, 3440.1440p and (my wishlist) 3840.1600p benchies??

eddman - Tuesday, February 26, 2019

2560x1080, 3440x1440 and 3840x1600

That's how you write it, and the "p" should not be used when stating the full resolution, since it's only supposed to be used for denoting video format resolution.

P.S. using 1080p, etc. for display resolutions isn't technically correct either, but it's too late for that.

Ginpo236 - Tuesday, February 26, 2019

a 3-slot ITX-sized graphics card. What ITX case can support this? 0.

bajs11 - Tuesday, February 26, 2019

Why can't they just make a GTX 2080Ti with the same performance as RTX 2080Ti but without useless RT and dlss and charge something like 899 usd (still 100 bucks more than gtx 1080ti)?

i bet it will sell like hotcakes or at least better than their overpriced RTX2080ti

peevee - Tuesday, February 26, 2019

Do I understand correctly that this thing does not have PCIe4?

CiccioB - Thursday, February 28, 2019

No, they have not a PCIe4 bus.

Do you think they should have?

Questor - Wednesday, February 27, 2019

Why do I feel like this was a panic plan in an attempt to bandage the bleed from RTX failure? No support at launch and months later still abysmal support on a non-game changing and insanely expensive technology.

I am not falling for it.

CiccioB - Thursday, February 28, 2019

Yes, a "panic plan" that required about 3 years to create the chips.

3 years ago they already know that they would have panicked at the RTX cards launch and so they made the RT-less chip as well. They didn't know that the RT could not be supported in performance with the low number of CUDA core low level cards have.

They didn't know that the concurrent would have played with the only weapon it was left to it to battle, that is price, as they could not think that the concurrent was not ready with a beefed up architecture capable of the same functionalities.

So, yes, they panicked for sure. They were not prepared to anything of what is happening.

Korguz - Friday, March 1, 2019

" that required about 3 years to create the chips. 3 years ago they already know that they would have panicked at the RTX cards launch and so they made the RT-less chip as well. They didn't know that the RT could not be supported in performance with the low number of CUDA core low level cards have. "

and where did you read this ? you do understand, and realize... it IS possible to either disable, or remove parts of an IC without having to spend " about 3 years " to create the product, right ? intel does it with their IGP in their cpus, amd did it back in the Phenom days with chips like the Phenom X4 and X3....

CiccioB - Tuesday, March 5, 2019

So they created a TU116, a completely new die without RT and Tensor Core, to reduce the size of the die and lose about 15% of performance with respect to the 2060 all in 3 months because they panicked?

You probably have no idea of what are the efforts to create a 280mm^2 new die.

Well, by this and your previous posts you don't have idea of what you are talking about at all.