The AMD Radeon VII Review: An Unexpected Shot At The High-End

by Nate Oh on February 7, 2019 9:00 AM EST

Compute Performance

Shifting gears, we'll look at the compute aspects of the Radeon VII. Though it is fundamentally similar to first-generation Vega, Vega 20 places an emphasis on improved compute, and we may see that reflected in the results here.

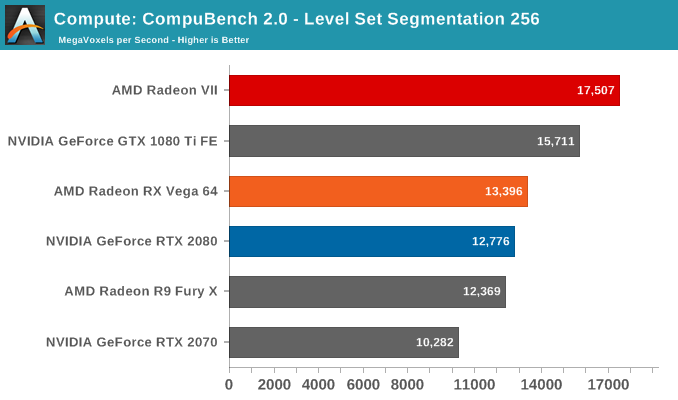

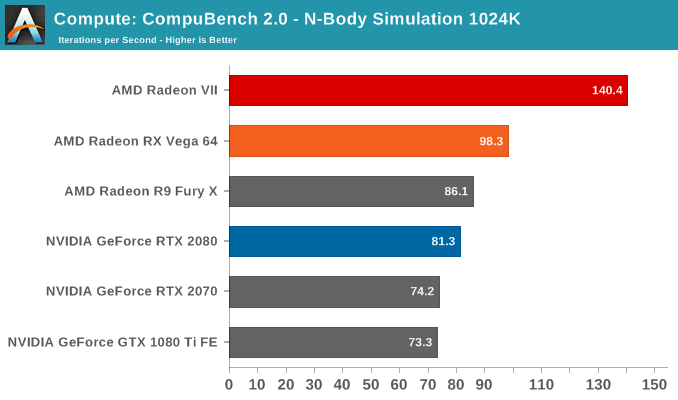

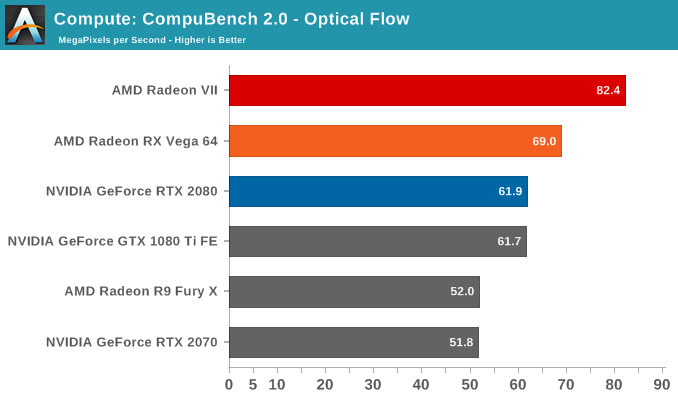

We begin with CompuBench 2.0, the latest iteration of Kishonti's GPU compute benchmark suite. It offers a wide array of practical compute workloads, and we’ve decided to focus on level set segmentation, optical flow modeling, and N-Body physics simulations.

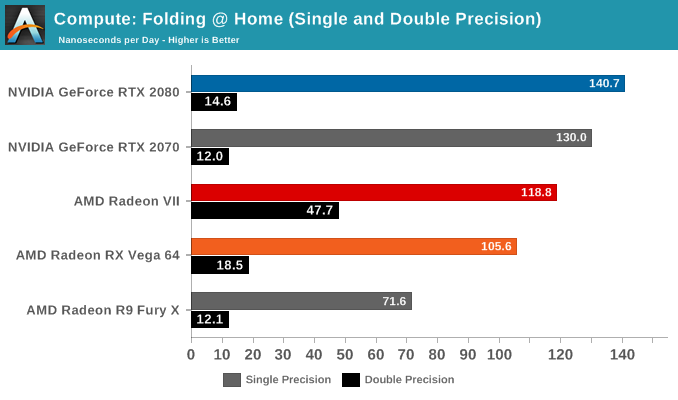

Moving on, we'll also look at single precision floating point performance with FAHBench, the official Folding @ Home benchmark. Folding @ Home is the popular Stanford-backed research and distributed computing initiative that has work distributed to millions of volunteer computers over the internet, each of which is responsible for a tiny slice of a protein folding simulation. FAHBench can test both single precision and double precision floating point performance, with single precision being the most useful metric for most consumer cards due to their low double precision performance.

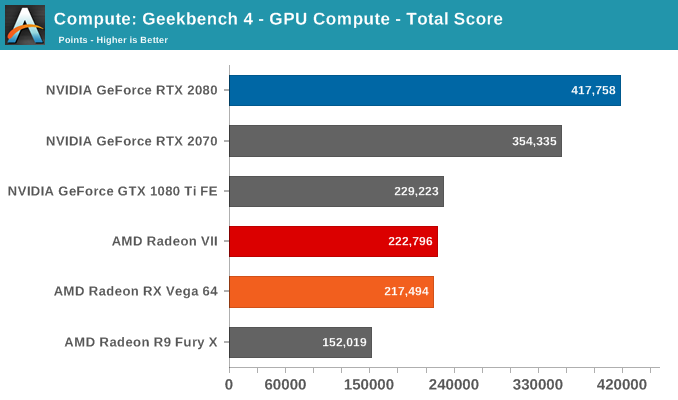

Next is Geekbench 4's GPU compute suite. A multi-faceted test suite, Geekbench 4 runs seven different GPU sub-tests, ranging from face detection to FFTs, and then averages out their scores via their geometric mean. As a result Geekbench 4 isn't testing any one workload, but rather is an average of many different basic workloads.
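To illustrate why a geometric mean behaves differently from a simple average, here is a minimal sketch. The function name and the sub-test scores are hypothetical and chosen only for illustration; this is not Geekbench's actual scoring code, just the general technique the paragraph above describes.

```python
import math


def geometric_mean(scores):
    """Return the geometric mean of a sequence of positive scores."""
    if not scores:
        raise ValueError("at least one score is required")
    if any(s <= 0 for s in scores):
        raise ValueError("scores must be positive")
    # Averaging the logarithms is equivalent to taking the n-th root of the
    # product, and avoids overflow when multiplying many large scores.
    return math.exp(sum(math.log(s) for s in scores) / len(scores))


# Hypothetical sub-test scores (assumed values, not taken from this review).
example_scores = [1000.0, 4000.0, 16000.0]

composite = geometric_mean(example_scores)
arithmetic = sum(example_scores) / len(example_scores)

print(f"geometric mean:  {composite:.1f}")
print(f"arithmetic mean: {arithmetic:.1f}")
```

With these assumed inputs the geometric mean lands well below the arithmetic mean, because a single very high sub-score pulls a geometric mean up less than it would a plain average. That is why a composite built this way reflects broad performance across sub-tests rather than one standout result.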

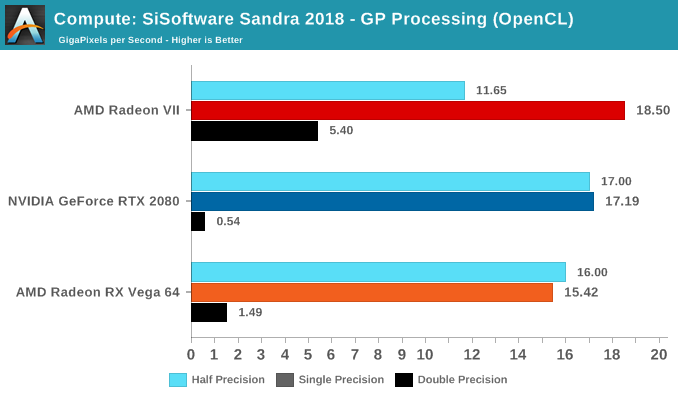

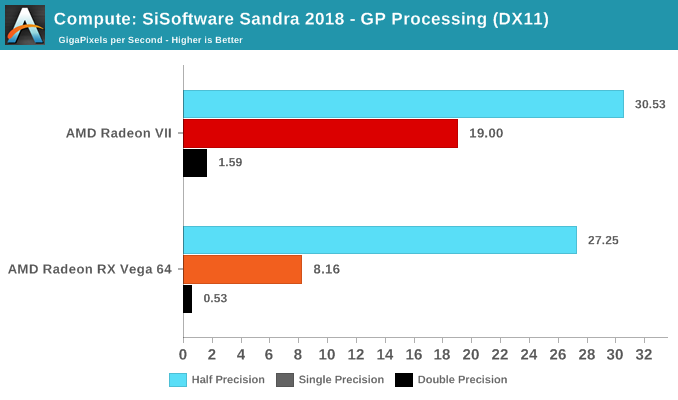

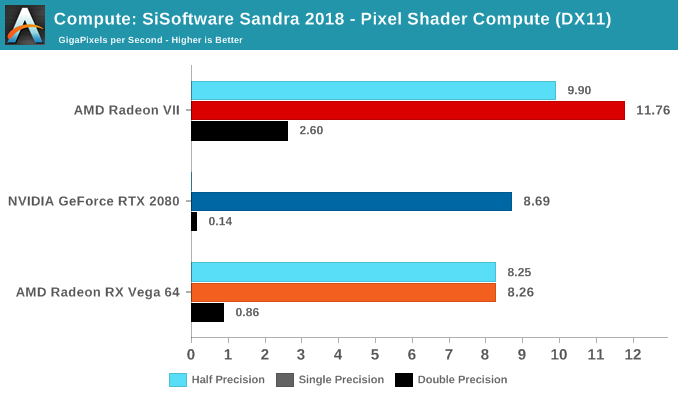

Lastly, we have SiSoftware Sandra, with general compute benchmarks at different precisions.

289 Comments


mapesdhs - Friday, February 8, 2019 - link

It's going to be hilariously funny if Ryzen 3000 series reverses this accepted norm. :)

mkaibear - Saturday, February 9, 2019 - link

I'd not be surprised - given anandtech's love for AMD (take a look at the "best gaming CPUs" article released today...)

Not really "hilariously funny", though. More "logical and methodical"

thesavvymage - Thursday, February 7, 2019 - link

It's not like it'll perform any better though... Intel still has generally better gaming performance. There's no reason to artificially hamstring the card, as it introduces a CPU bottleneck

brokerdavelhr - Thursday, February 7, 2019 - link

Once again - in gaming for the most part....try again with other apps and there is a marked difference. Many of which are in AMD's favor. try again.....

jordanclock - Thursday, February 7, 2019 - link

In every scenario that is worth testing a VIDEO CARD, Intel CPUs offer the best performance.

ballsystemlord - Thursday, February 7, 2019 - link

Their choice of processor is kind of strange. An 8-core Intel on *plain* 14nm, now 2! years old, with rather low clocks at 4.3GHz, is not ideal for a gaming setup. I would have used a 9900K or 2700X personally[1].

For a content creator I'd be using a Threadripper or similar.

Re-testing would be an undertaking for AT though. Probably too much to ask. Maybe next time they'll choose some saner processor.

[1] 9900K is 4.7GHz all cores. The 2700X runs at 4.0GHz turbo, so you'd lose frequency, but then you could use faster RAM.

For citations see:

https://www.intel.com/content/www/us/en/products/p...

https://images.anandtech.com/doci/12625/2nd%20Gen%...

https://images.anandtech.com/doci/13400/9thGenTurb...

ToTTenTranz - Thursday, February 7, 2019 - link

Page 3 table:

- The MI50 uses a Vega 20, not a Vega 10.

Ryan Smith - Thursday, February 7, 2019 - link

Thanks!

FreckledTrout - Thursday, February 7, 2019 - link

I wonder why this card absolutely dominates in the "LuxMark 3.1 - LuxBall and Hotel" HDR test? It's pulling in numbers 1.7x higher than the RTX 2080 on that test. That's a funky outlier.

Targon - Thursday, February 7, 2019 - link

How much video memory is used? That is the key. Since many games and benchmarks are set up to test with a fairly low amount of video memory being needed (so those 3GB 1050 cards can run the test), what happens when you try to load 10-15GB into video memory for rendering? Cards with 8GB and under (the majority) will suddenly look a lot slower in comparison.