The Intel Xeon W-3175X Review: 28 Unlocked Cores, $2999

by Ian Cutress on January 30, 2019 9:00 AM EST

Gaming: Civilization 6 (DX12)

Originally penned by Sid Meier and his team, the Civ series of turn-based strategy games is a cult classic, and many an excuse for an all-nighter trying to get Gandhi to declare war on you due to an integer overflow. Truth be told, I never actually played the first version, but I have played every edition from the second to the sixth, including the fourth as voiced by the late Leonard Nimoy, and it is a game that is easy to pick up, but hard to master.

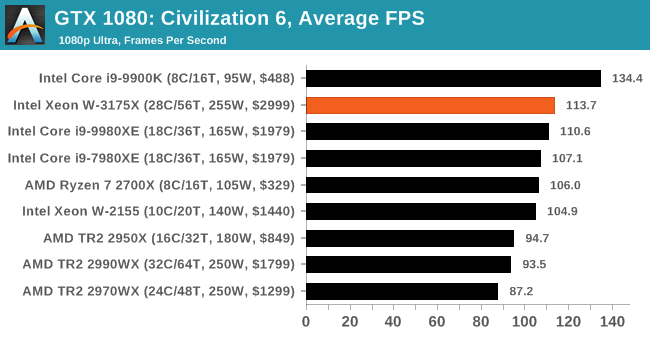

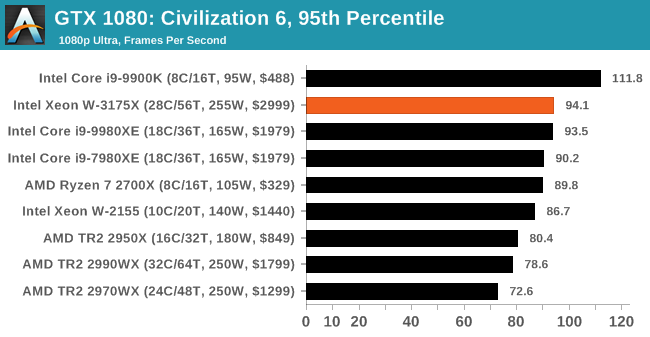

Benchmarking Civilization has always been somewhat of an oxymoron: for a turn-based strategy game, the frame rate is not necessarily the important thing, and in the right mood, something as low as 5 frames per second can be enough. With Civilization 6, however, Firaxis went hardcore on visual fidelity, trying to pull you into the game. As a result, Civilization can be taxing on graphics and CPUs as we crank up the details, especially in DirectX 12.

Perhaps a more poignant benchmark would be during the late game, when in the older versions of Civilization it could take 20 minutes to cycle around the AI players before the human regained control. The new version of Civilization has an integrated ‘AI Benchmark’, although it is not yet part of our benchmark portfolio, due to technical reasons which we are trying to solve. Instead, we run the graphics test, which provides an example of a mid-game setup at our settings.

AnandTech CPU Gaming 2019 Game List

| Game | Genre | Release Date | API | IGP | Low | Med | High |
|---|---|---|---|---|---|---|---|
| Civilization VI | RTS | Oct 2016 | DX12 | 1080p Ultra | 4K Ultra | 8K Ultra | 16K Low |

All of our benchmark results can also be found in our benchmark engine, Bench.

| Civilization VI | IGP |
|---|---|
| Average FPS |  |
| 95th Percentile |  |

We had issues running Civilization beyond the IGP settings; we're looking into exactly why.
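For readers unfamiliar with the two metrics in the results table, here is a minimal sketch of how an average FPS and a 95th percentile figure can be derived from a log of per-frame render times. This is not AnandTech's actual tooling: the function name `summarize_frame_times`, the millisecond input format, and the nearest-rank percentile method are all assumptions made for illustration.

```python
def summarize_frame_times(frame_times_ms):
    """Return (average_fps, percentile_95_fps) from per-frame times in ms.

    Hypothetical helper for illustration; not the review's real pipeline.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")

    # Average FPS: number of frames divided by total elapsed seconds.
    total_seconds = sum(frame_times_ms) / 1000.0
    average_fps = len(frame_times_ms) / total_seconds

    # 95th percentile frame time by the nearest-rank method: the frame
    # time that at least 95% of frames are at or below. Integer math
    # (ceiling of 0.95 * n) avoids floating-point rounding surprises.
    ordered = sorted(frame_times_ms)
    rank = (95 * len(ordered) + 99) // 100
    p95_ms = ordered[rank - 1]

    # Express the percentile as a frame rate so it is comparable to the
    # average; a slower (longer) frame gives a lower FPS figure.
    return average_fps, 1000.0 / p95_ms


if __name__ == "__main__":
    # Mostly smooth run with a handful of slow frames (made-up numbers).
    sample = [10.0] * 94 + [40.0] * 6
    avg, p95 = summarize_frame_times(sample)
    print(f"Average FPS: {avg:.1f}, 95th percentile FPS: {p95:.1f}")
```

The percentile figure is usually the more telling of the two: a few slow frames barely move the average but pull the 95th percentile down sharply, which is what a player perceives as stutter.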

136 Comments


tamalero - Wednesday, January 30, 2019 - link

Aaah yes.. the presenter "forgot" to say it was heavily overclocked..

arh2o - Wednesday, January 30, 2019 - link

Hey Ian, nice review. But you guys really need to stop testing games with an ancient GTX 1080 from 1H 2016...it's almost 3 years old now. You're clearly GPU bottle-necked on a bunch of these games you've benchmarked. At least use a RTX 2080, but if you're really insistent on keeping the GTX 1080, bench at 720p with it instead of your IGP. For example:

Final Fantasy XV: All your CPUs have FPS between 1-4 frames of difference. Easy to spot GPU bottleneck here.

Shadow of War Low: Ditto, all CPUs bench within the 96-100 FPS range. Also, what's the point of even including the medium and high numbers? It's decimal point differences on the FPS, not even a whole number difference. Clearly GPU bottle-necked here even at 1080p unfortunately.

eddman - Wednesday, January 30, 2019 - link

Xeons don't even have an IGP. That IGP in the tables is simply the name they chose for those settings, which include 720p resolution, since it represents a probable use case for an IGP.

Anyway, you are right about the card. They should've used a faster one, although IMO game benchmarks are pointless for such CPUs.

BushLin - Wednesday, January 30, 2019 - link

I'm glad they're using the same card for years so it can be directly compared to previous benchmarks and we can see how performance scales with cores vs clock speed.

Mitch89 - Friday, February 1, 2019 - link

That’s a poor rationale, you wouldn’t pair a top-end CPU with an outdated GPU if you were building a system that needs both CPU and GPU performance.

SH3200 - Wednesday, January 30, 2019 - link

For all the jokes it's getting, doesn't the 7290F actually run at a higher TDP using the same socket? Intel couldn't have just taken the coolers from the Xeon DAP WSes and used those instead?

evernessince - Wednesday, January 30, 2019 - link

How is 3K priced right? You can purchase a 2990WX for half that price and 98% of the performance. $1,500 is a lot of extra money in your wallet.

GreenReaper - Thursday, January 31, 2019 - link

Maybe they thought since it was called the 2990WX it cost $2990...

tygrus - Wednesday, January 30, 2019 - link

1) A few cases showed the 18core Intel CPU beat their 28core. I assume the benchmark and/or OS is contributing to a reduced performance for the 28 core Intel and the 32 core AMD (TR 2950 beats TR 2990 a few times).

2) Do you really want to use 60% more power for <25% increase of performance?

3) This chip is a bit like the 1.13GHz race in terms of such a small release & high cost it should be ignored by most of us as a marketing stunt.

GreenReaper - Thursday, January 31, 2019 - link

Fewer cores may be able to boost faster and have less contention for shared resources such as memory bandwidth. This CPU tends to only win by any significant margin when it can use all of its cores. Heck, you have the 2700X up there in many cases.