The Samsung 860 QVO (1TB, 4TB) SSD Review: First Consumer SATA QLC

by Billy Tallis on November 27, 2018 11:20 AM EST

AnandTech Storage Bench - Heavy

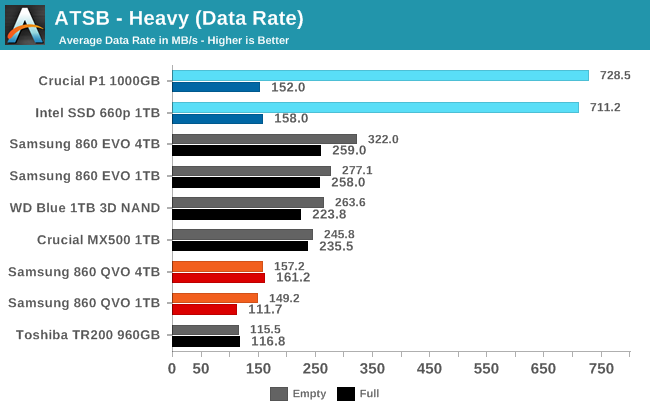

Our Heavy storage benchmark is proportionally more write-heavy than The Destroyer, but much shorter overall. The total writes in the Heavy test aren't enough to fill the drive, so performance never drops down to steady state. This test is far more representative of a power user's day-to-day usage, and is heavily influenced by the drive's peak performance. The Heavy workload test details can be found here. This test is run twice, once on a freshly erased drive and once after filling the drive with sequential writes.

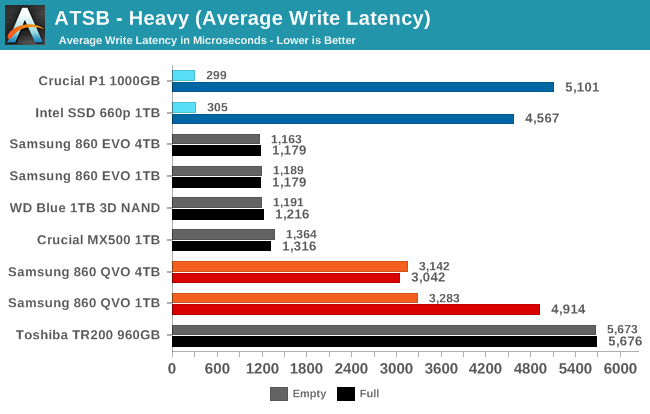

Neither capacity of the Samsung 860 QVO can keep pace with the mainstream TLC drives on the write-intensive Heavy test, but they both outperform the DRAMless TLC drive. The NVMe+QLC drives from Intel and Micron fare much better when the test is run on an empty drive, but when full they too fall behind the mainstream TLC SSDs.

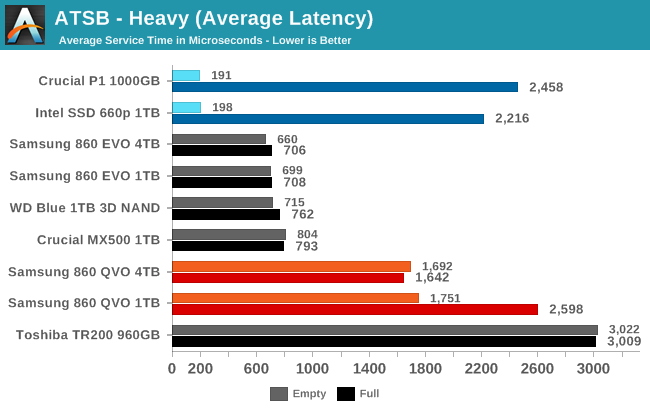

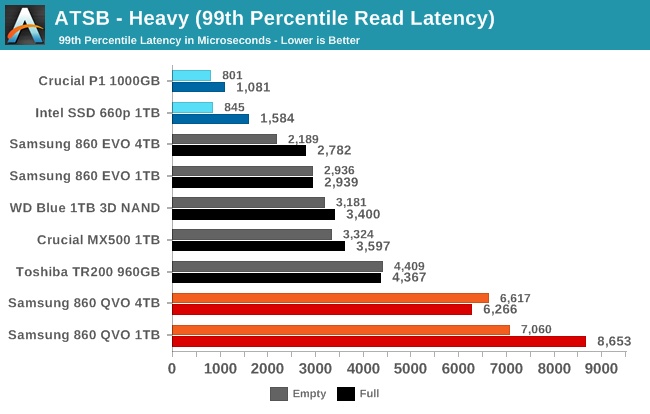

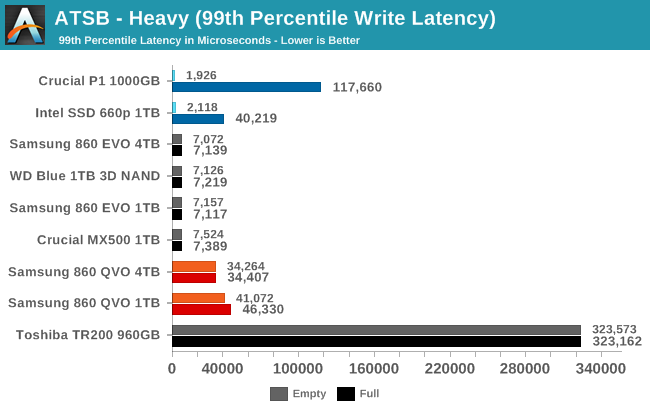

The Samsung 860 QVOs have much worse latency scores than the mainstream TLC drives, and the 99th percentile latency is much worse than even the DRAMless TLC SSD. However, the Samsung QLC drives are a bit better than the Intel/Micron QLC drives at keeping latency under control when the test is run on a full drive.
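To illustrate why the average and 99th percentile scores can tell such different stories, here is a minimal Python sketch. It is not the AnandTech test harness: the function names and the latency samples are hypothetical, and the nearest-rank percentile method is an assumption rather than the method used to produce the charts.

```python
import math

def mean_latency(samples_us):
    """Arithmetic mean of a list of latency samples (microseconds)."""
    return sum(samples_us) / len(samples_us)

def percentile_nearest_rank(samples_us, pct):
    """Nearest-rank percentile: the smallest sample such that at least
    pct percent of all samples are less than or equal to it."""
    ordered = sorted(samples_us)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]

# Hypothetical trace: 98% of I/Os complete in 100 us, 2% stall for 50 ms.
latencies_us = [100] * 980 + [50_000] * 20

average = mean_latency(latencies_us)
p50 = percentile_nearest_rank(latencies_us, 50)
p99 = percentile_nearest_rank(latencies_us, 99)

print(f"average: {average:.0f} us, median: {p50} us, 99th percentile: {p99} us")
```

With this made-up trace the median stays at 100 us, the average rises to about 1.1 ms, and the 99th percentile lands on the 50 ms stalls. A small fraction of slow operations barely moves the typical case but dominates the tail, which is what a 99th percentile score is meant to expose.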

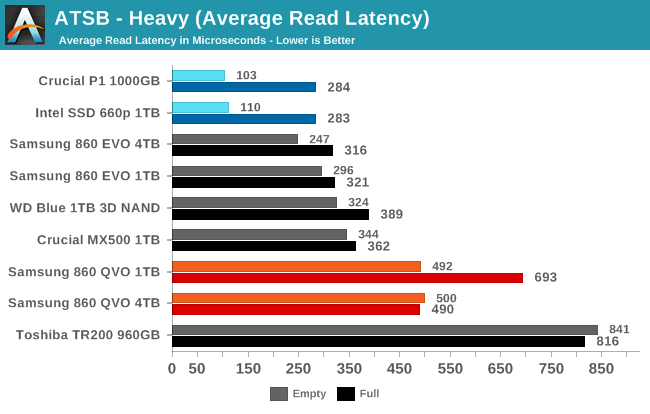

The average read latencies from the Samsung 860 QVOs are only a bit higher than the mainstream TLC drives, but the average write latencies stand out as worse by at least a factor of two.

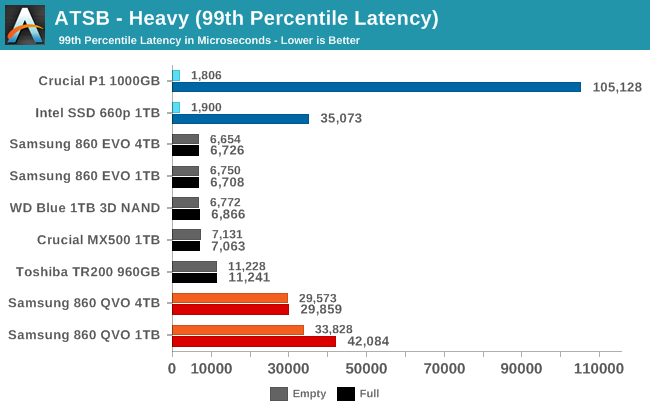

The 99th percentile read and write latency scores from the 860 QVOs are poor, but they at least avoid the horrific write QoS issues that the Toshiba TR200 shows, and are better than the full-drive run on the Crucial P1.

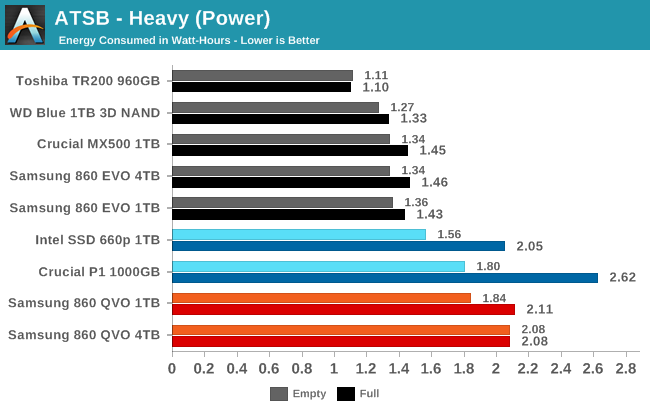

The Samsung 860 QVO uses substantially more energy over the course of the Heavy test than the other SATA drives, and more than the NVMe QLC drives in most cases, too.

109 Comments


boozed - Sunday, March 24, 2019 - link

Recouped

FunBunny2 - Tuesday, November 27, 2018 - link

"moving off of spinning rust and onto SSDs with my bulk storage. "

well... cold storage of NAND ain't all that hot even at SLC. at QLC? not up to bulk storage, if you ask me. and, no, you didn't.

0ldman79 - Tuesday, November 27, 2018 - link

Bulk storage on the PC, not offline storage.

I could use a big SSD myself, but at this price I'm better off picking up an EVO or WD 3D NAND instead.

I'm sure it will drop in a couple of months and we may even see the 1TB hit the $99 mark. Samsung QLC is looking pretty decent, overall endurance isn't much worse than my WD Blue 1TB 3D NAND, though smaller capacities drop off horribly.

azazel1024 - Friday, November 30, 2018 - link

What conditions? SLC lasts a LONGGGgggggg time powered off. Decades.

MLC on a lightly used drive is also years and years. TLC is also measured in years on a lightly used drive under the right conditions.

Yes, an abused TLC drive today, left in a hot car in the summer time is going to start getting corrupt bits in a matter of a few weeks. That isn't most people's use case and for bulk storage, TLC at least kept vaguely room temperature has a storage life of something >1yr even when pushing up near the P/E endurance of the drive. I forget what JEDEC calls for (or whoever the standards body is), but the P/E endurance on TLC IIRC is 9 months at room temperature. IE your drive should typically not lose any stored bits when at room temperature and powered off for 9 months once you have exhausted your P/E endurance (obviously going past it reduces the powered off endurance as well as the potential for other things, like blocks not being able to be written to due to high power requirements or not being able to differentiate voltages, etc.)

I don't know what the endurance on QLC is supposed to be, but IIRC it is still measured in multiple months at the limit of P/E endurance (and typically when new the cold/off endurance of a drive is several times longer than when it is at the end of its life). I wouldn't want to use QLC, TLC or even MLC or SLC as archival storage that is supposed to last decades, but HDDs could be problematic for decadal storage also.

Araemo - Tuesday, November 27, 2018 - link

Honestly? Give me a 4TB for $300 and I start getting tempted. I have an array of 4 3TB spinning rust (5900 RPM even) disks I would love to upgrade to SSDs and at least a moderate size increase. I'd prefer 6TB or 8TB disks, so I can double the array size, but 4TB at a reasonable price gets tempting.

Spunjji - Wednesday, November 28, 2018 - link

Wait. Just wait. Every time the capacity goes up and the price goes down, another poster comes along with a new higher capacity they want at a new lower price. You'll get it one day but right now it's physically impossible.

rpg1966 - Wednesday, November 28, 2018 - link

Ha, I came here to say this. These guys would (supposedly) have jumped at these capacity/price/speed/endurance combinations a year ago, but now, oh no, they're way too expensive. It's a wonder they ever buy anything at all...

azazel1024 - Friday, November 30, 2018 - link

My limit has always been spending $600-800 to replace the storage in my desktop and server. Right now I am utilizing 3.5TiB of the 5.4TiB capacity of my 2x3TB RAID0 arrays. Now that has been creeping up. 12 months ago it was probably 2.8-2.9TiB utilized. At any rate, I need enough performance (minimum 250MB/sec sustained large transfers once SLC cache is exhausted, or if below, it better be very close to that figure) to not feel the need to make a RAID array so I can run JBOD and add disks as my storage pool gets utilized so they don't need to all be matching drives.

4TB would maybe just barely cut it, but it also likely wouldn't leave me enough growth room as I'd be running out to add probably 2TB disks in a few months. 5-6TB would probably be enough to last me 18-24 months before I'd need to add any disks.

Anyway, so you are talking 10TB of total storage (minimum) for no more than about $800. My song hasn't changed on that in the last couple of years. We still aren't there. Though getting close. 8 cents a GB isn't THAT far away. And by the time we get there, my needs might have only creeped up to 12TB for the same no more than $800 (and who knows, maybe in a couple more years I could gin up another $100 or $200 to get it, even if prices haven't gotten down to 6-7 cents a GB).

So if I was looking at 3x2TB drives in JBOD and could just add another 2TB disk to it as my storage got filled up for a measly ~$350 between both machines every couple of years (and hopefully getting cheaper each time I did it, or it is cheaper enough when I need more capacity a couple years after building the SSD storage pools to get a new 4TB disk for each machine or something).

One of the things I don't like about HDDs and RAID (well it would be the same with SSDs and RAID) is really needing matching drives. So if my storage runs low, it means replacing entire arrays instead of just adding a new disk to provide the extra capacity while keeping both higher performance and a unified volume.

Right now my minimum HDD standard once I start hitting capacity/performance limits is probably going to be getting a set of Seagate Barracuda Pro drives for the performance of 7200rpm spindle speeds. That means 2x2x4TB drives (so 4 drives total) which is around $600 right now, and only gets me 1/3rd more capacity...

In other words I'd likely need to replace both arrays again maybe 3 years later (at most). Going to 2x2x6TB drives might make longer term financial sense, but it also has a startup cost of around $1000.

Impulses - Thursday, November 29, 2018 - link

It'll probably take several years for prices to get to that point... Optimistically.

Spunjji - Wednesday, November 28, 2018 - link

"They are trying to milk saps"

There's no evidence whatsoever for that claim. TLC drives weren't cheaper than MLC when they started out, now they (mostly) are - it's how product introductions work. The same will happen here. Enough of the bleating and moving of goalposts to ridiculous locations already.