The Intel Core i9-9980XE CPU Review: Refresh Until it Hertz

by Ian Cutress on November 13, 2018 9:00 AM EST

HEDT Performance: System Tests

Our System Test section focuses on real-world testing and user experience, with a slight nod to throughput. In this section we cover application loading time, image processing, simple scientific physics, emulation, neural simulation, optimized compute, and 3D model development, with a combination of readily available and custom software. The bigger suites such as PCMark do cover some of these tests (we publish those values in our office section), although multiple perspectives are always beneficial. In all our tests we will explain in depth what is being tested, and how we are testing.

All of our benchmark results can also be found in our benchmark engine, Bench.

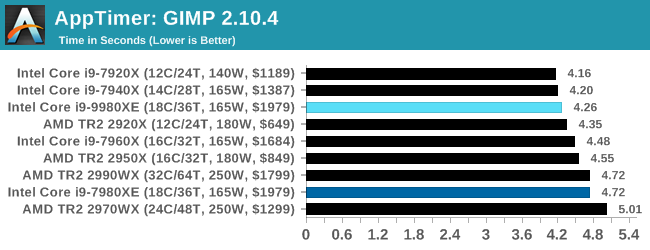

Application Load: GIMP 2.10.4

One of the most important aspects of user experience and workflow is how fast a system responds. A good test of this is to see how long it takes for an application to load. Most applications these days, when on an SSD, load almost instantly; however, some office tools require asset pre-loading before being available. Most operating systems employ caching as well, so when certain software is loaded repeatedly (web browser, office tools), it can be initialized much quicker.

In our last suite, we tested how long it took to load a large PDF in Adobe Acrobat. Unfortunately this test was a nightmare to program for, and didn’t transfer over to Win10 RS3 easily. In the meantime we discovered an application that can automate this test, and we put it up against GIMP, a popular free open-source photo editing tool, and the major alternative to Adobe Photoshop. We set it to load a large 50MB design template, and perform the load 10 times with 10 seconds in-between each. Due to caching, the first 3-5 results are often slower than the rest, and time to cache can be inconsistent, so we take the average of the last five results to show CPU processing on cached loading.
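The averaging step described above can be sketched in a few lines of Python. This is only an illustration of the arithmetic, not our actual test harness: the function name `cached_load_time` and the timing values are hypothetical.

```python
from statistics import mean

def cached_load_time(load_times_s, keep_last=5):
    """Average the final `keep_last` load times, skipping the cache warm-up runs."""
    if len(load_times_s) < keep_last:
        raise ValueError("need at least keep_last measurements")
    return mean(load_times_s[-keep_last:])

# Hypothetical timings in seconds for ten consecutive loads (not measured data).
# The early runs are slower because the template is not yet cached.
example_runs = [6.1, 5.4, 4.9, 4.6, 4.5, 4.4, 4.4, 4.5, 4.4, 4.3]

print(f"cached load time: {cached_load_time(example_runs):.2f} s")
```

Dropping the first five runs means the reported number reflects CPU work on an already cached file rather than storage speed.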

Loading software is usually an Achilles heel of high-core-count processors because of their lower frequencies. The 9980XE pushes above and beyond the 7980XE in this regard, given it has better turbo performance across the board.

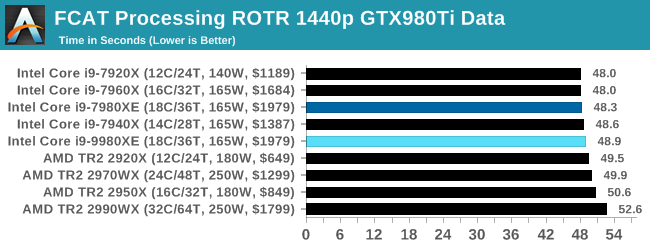

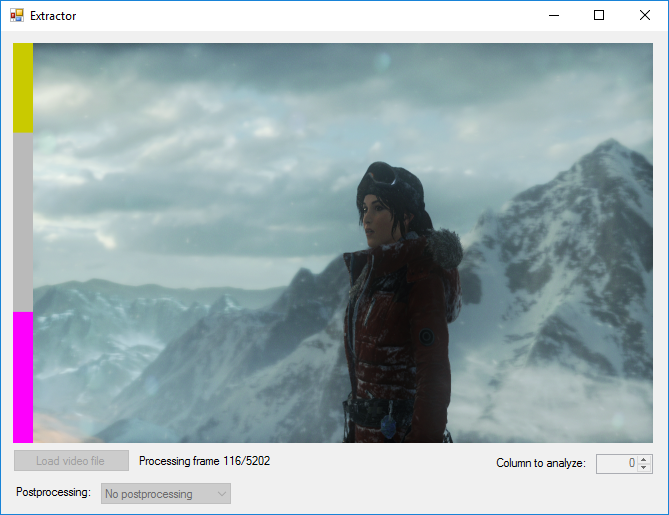

FCAT: Image Processing

The FCAT software was developed to help detect microstuttering, dropped frames, and runt frames in graphics benchmarks when two accelerators were paired together to render a scene. Due to game engines and graphics drivers, not all GPU combinations performed ideally, so the software applies a fixed color to each rendered frame, and the raw output is recorded using a video capture device.

The FCAT software takes that recorded video, which in our case is 90 seconds of a 1440p run of Rise of the Tomb Raider, and processes that color data into frame time data so the system can plot an ‘observed’ frame rate, and correlate that to the power consumption of the accelerators. This test, by virtue of how quickly it was put together, is single threaded. We run the process and report the time to completion.

Despite the 9980XE having a higher frequency than the 7980XE, they both fall in the same region, as all these HEDT processors seem to be trending towards 48 seconds. For context, the 5.0 GHz Core i9-9900K scores 44.7 seconds, another 8% or so faster.

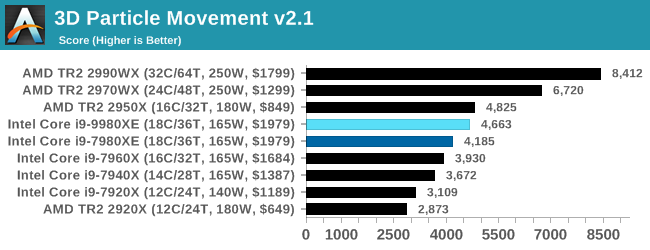

3D Particle Movement v2.1: Brownian Motion

Our 3DPM test is a custom built benchmark designed to simulate six different particle movement algorithms of points in a 3D space. The algorithms were developed as part of my PhD, and while they ultimately perform best on a GPU, they provide a good idea of how instruction streams are interpreted by different microarchitectures.

A key part of the algorithms is the random number generation – we use relatively fast generation which ends up implementing dependency chains in the code. The upgrade over the naïve first version of this code solved for false sharing in the caches, a major bottleneck. We are also looking at AVX2 and AVX512 versions of this benchmark for future reviews.
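To illustrate what a dependency chain in a fast random number generator looks like, here is a minimal sketch using Marsaglia's xorshift32. This generator is an assumption chosen for illustration only; the text above does not say which generator 3DPM uses, and the helper `random_stream` is hypothetical.

```python
MASK32 = 0xFFFFFFFF

def xorshift32(state):
    """One step of Marsaglia's xorshift32; returns the next non-zero 32-bit state."""
    state ^= (state << 13) & MASK32
    state ^= state >> 17
    state ^= (state << 5) & MASK32
    return state & MASK32

def random_stream(seed, count):
    """Generate `count` values in [0, 1) from a non-zero 32-bit seed."""
    values = []
    state = seed
    for _ in range(count):
        # Each new state needs the previous one, so these steps cannot
        # overlap in time: this is the dependency chain.
        state = xorshift32(state)
        values.append(state / 2**32)
    return values

print(random_stream(seed=1, count=3))
```

Because every output depends on the one before it, a single stream cannot be split across execution units. One common way around this is to give each thread or vector lane its own independent state.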

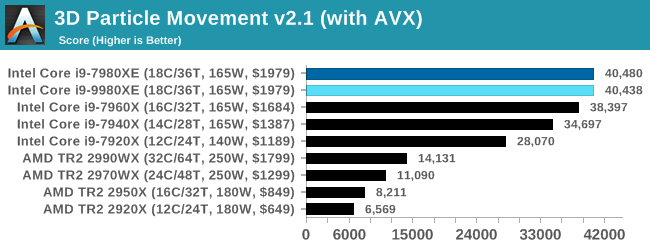

For this test, we run a stock particle set over the six algorithms for 20 seconds apiece, with 10 second pauses, and report the total rate of particle movement, in millions of operations (movements) per second. We have a non-AVX version and an AVX version, with the latter implementing AVX512 and AVX2 where possible.
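As a rough sketch of how such a score could be assembled, the snippet below converts per-algorithm movement counts into millions of movements per second and sums them. Treating the total as a straight sum of the six per-algorithm rates is an assumption, and the function names and counts are hypothetical.

```python
def movement_rate_mops(movements, seconds=20.0):
    """Convert a raw movement count over a timed run into millions per second."""
    return movements / seconds / 1e6

def total_score(per_algorithm_movements, seconds=20.0):
    """Sum the rates of all algorithms (assumed aggregation, see the note above)."""
    return sum(movement_rate_mops(m, seconds) for m in per_algorithm_movements)

# Hypothetical counts for the six algorithms over their 20-second runs.
counts = [2.0e9] * 6
print(f"{total_score(counts):.1f} million movements per second")
```

The 10 second pauses between algorithms do not enter the calculation; only the 20 seconds of active run time count.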

3DPM v2.1 can be downloaded from our server: 3DPMv2.1.rar (13.0 MB)

Without any AVX code, our 3DPM test shows that even with fewer cores, AMD's 16-core Threadripper actually beats both the 7980XE and the 9980XE. The higher core count AMD parts blitz the field.

When we add AVX2 / AVX512, the Intel HEDT systems go above and beyond. This is the benefit of hand-tuned AVX512 code. Interestingly the 9980XE scores about the same as the 7980XE - I have a feeling that the AVX512 turbo tables for both chips are identical.

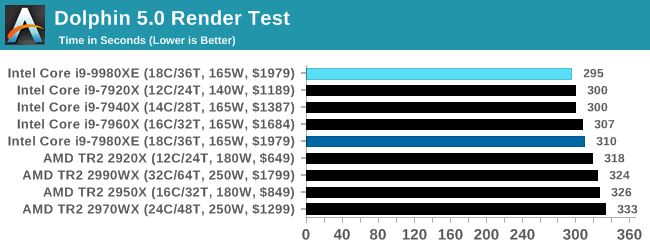

Dolphin 5.0: Console Emulation

One of the most requested tests in our suite is console emulation. Being able to pick up a game from an older system and run it as expected depends on the overhead of the emulator: it takes a significantly more powerful x86 system to be able to accurately emulate an older non-x86 console, especially if code for that console was made to abuse certain physical bugs in the hardware.

For our test, we use the popular Dolphin emulation software, and run a compute project through it to determine how closely our processors can emulate a standard console system. In this test, a Nintendo Wii would take around 1050 seconds.
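To put a result in context against the console itself, the run time can be divided into the roughly 1050 second Wii figure quoted above. This is a small sketch of that arithmetic; the function name and the 300 second example are hypothetical.

```python
WII_BASELINE_S = 1050.0  # approximate time a Nintendo Wii takes, from the text

def speed_vs_wii(run_time_s):
    """How many times faster than the Wii baseline a given run time is."""
    if run_time_s <= 0:
        raise ValueError("run time must be positive")
    return WII_BASELINE_S / run_time_s

# Hypothetical result: a CPU that finishes the project in 300 seconds.
print(f"{speed_vs_wii(300.0):.2f}x the speed of a Wii")
```

A value above 1.0 means the emulated run finishes sooner than it would on the original hardware.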

The latest version of Dolphin can be downloaded from https://dolphin-emu.org/

Dolphin enjoys single thread frequency, so at 4.5 GHz we see the 9980XE getting a small bump over the 7980XE.

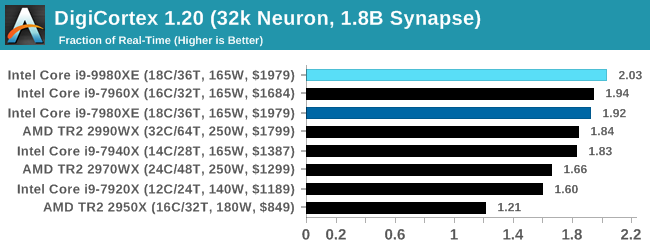

DigiCortex 1.20: Sea Slug Brain Simulation

This benchmark was originally designed for simulation and visualization of neuron and synapse activity, as is commonly found in the brain. The software comes with a variety of benchmark modes, and we take the small benchmark which runs a 32k neuron / 1.8B synapse simulation, equivalent to a Sea Slug.

Example of a 2.1B neuron simulation

We report the results as the ability to simulate the data as a fraction of real-time, so anything above a ‘one’ is suitable for real-time work. Out of the two modes, a ‘non-firing’ mode which is DRAM heavy and a ‘firing’ mode which has CPU work, we choose the latter. Despite this, the benchmark is still affected by DRAM speed a fair amount.
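The real-time fraction can be thought of as simulated time divided by wall-clock time. The sketch below assumes that definition; the function names and numbers are hypothetical and are not taken from DigiCortex itself.

```python
def real_time_fraction(simulated_s, wall_clock_s):
    """Simulated seconds produced per second of wall-clock time."""
    if wall_clock_s <= 0:
        raise ValueError("wall-clock time must be positive")
    return simulated_s / wall_clock_s

def is_real_time(fraction):
    """A fraction of one or more means the simulation keeps pace with real time."""
    return fraction >= 1.0

# Hypothetical run: 10 simulated seconds computed in 8 seconds of wall-clock time.
fraction = real_time_fraction(10.0, 8.0)
print(fraction, is_real_time(fraction))
```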

DigiCortex can be downloaded from http://www.digicortex.net/

DigiCortex requires a good memory subsystem as well as cores and frequency. We get a small bump for the new 9980XE here.

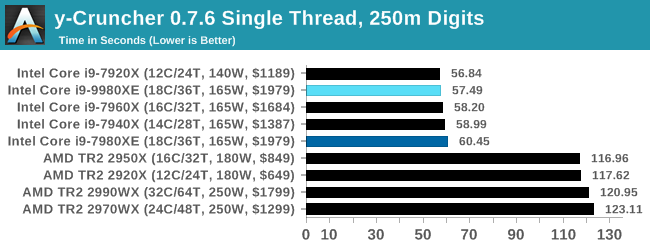

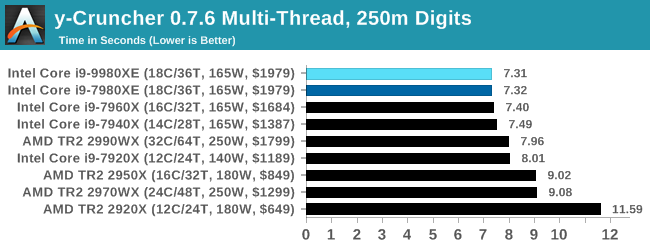

y-Cruncher v0.7.6: Microarchitecture Optimized Compute

I’ve known about y-cruncher for a while as a tool to help compute various mathematical constants. It wasn’t until I began talking with its developer, Alex Yee, a researcher from NWU and now software optimization developer, that I realized he has optimized the software like crazy to get the best performance. Naturally, any simulation that can take 20+ days can benefit from a 1% performance increase! Alex started y-cruncher as a high-school project, but it is now at a state where Alex is keeping it up to date to take advantage of the latest instruction sets before they are even made available in hardware.

For our test we run y-cruncher v0.7.6 through all the different optimized variants of the binary, including the AVX-512 optimized binaries. The test is to calculate 250 million digits of Pi, and we run it in both single threaded and multi-threaded modes.
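For readers who want a feel for what computing digits of Pi involves, here is a minimal sketch using Machin's formula with integer arithmetic. This is not y-cruncher's method and is far slower than what y-cruncher does; the function names and the guard-digit count are choices made for this illustration only.

```python
def arctan_inv(x, unity):
    """Return arctan(1/x) scaled by `unity`, using the alternating Taylor series."""
    term = unity // x
    total = term
    x_squared = x * x
    n = 3
    sign = -1
    while term:
        term //= x_squared           # unity / x^n for the next odd n
        total += sign * (term // n)
        n += 2
        sign = -sign
    return total

def pi_digits(decimals, guard=10):
    """Pi as an integer with `decimals` digits after the leading 3."""
    unity = 10 ** (decimals + guard)  # guard digits absorb truncation error
    # Machin's formula: pi = 16*arctan(1/5) - 4*arctan(1/239)
    pi_scaled = 4 * (4 * arctan_inv(5, unity) - arctan_inv(239, unity))
    return pi_scaled // 10 ** guard

print(pi_digits(20))
```

Even this simple version shows why the workload scales poorly: every step is big-integer arithmetic, and the cost of those operations grows with the number of digits requested.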

Users can download y-cruncher from Alex’s website: http://www.numberworld.org/y-cruncher/

In another one of our AVX2/AVX512 tests, the Skylake-X parts win in both single threaded and multi-threaded modes.

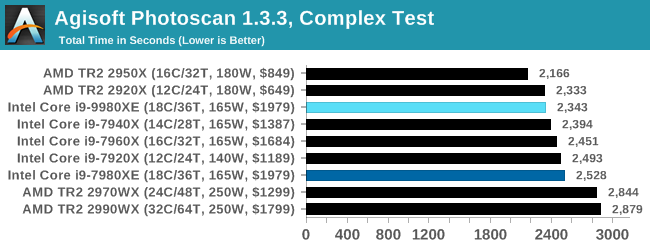

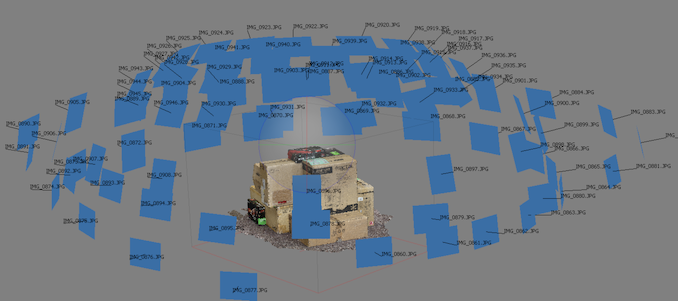

Agisoft Photoscan 1.3.3: 2D Image to 3D Model Conversion

One of the ISVs that we have worked with for a number of years is Agisoft, who develop software called PhotoScan that transforms a number of 2D images into a 3D model. This is an important tool in model development and archiving, and relies on a number of single threaded and multi-threaded algorithms to go from one side of the computation to the other.

In our test, we take v1.3.3 of the software with a good sized data set of 84 x 18 megapixel photos and push it through a reasonably fast variant of the algorithms, one that is still more stringent than our 2017 test. We report the total time to complete the process.

Agisoft’s Photoscan website can be found here: http://www.agisoft.com/

Photoscan is a mix of parallel compute and single threaded work, and the 9980XE does give another 7-8% performance over the 7980XE. The AMD dual-die TR2 parts still have the edge, however.

143 Comments

TEAMSWITCHER - Tuesday, November 13, 2018 - link

I never said that we didn't have external monitors, keyboards, and mice for desktop work. However, from 25 years of personal experience in this industry I can tell you emphatically .. productivity isn't related to the number of pixels on your display.

HStewart - Tuesday, November 13, 2018 - link

Exactly - I work with 15 in IBM Thinkpad 530 that screen is never used - but I have 2 24in 1980p monitors on my desk at home - if I need to go home office - hook it up another monitor - always with external monitor.

It is really not the number of pixels but size of work space. I have 4k Dell XPS 15 2in1 and I barely use the 4k on laptop - I mostly use it hook to LG 38U88 Ultrawide. I have option to go to 4k on laptop screen but in reality - I don't need it.

Atari2600 - Tuesday, November 13, 2018 - link

I'd agree if you are talking about going from 15" 1080p laptop screen to 15" 4k laptop screen.

But, if you don't see significant changes in going from a single laptop screen to a 40" 4k or even just dual SD monitors - any arrangement that lets you put up multiple information streams at once, whatever you are doing isn't very complicated.

twtech - Thursday, November 15, 2018 - link

Maybe not necessarily the number of pixels. I don't think you'd be a whole lot more productive with a 4k screen than a 2k screen. But screen area on the other hand does matter.

From simple things like being able to have the .cpp, the .h, and some other relevant code file all open at the same time without needing to switch windows, to doing 3-way merges, even just being able to see the progress of your compile while you check your email. Why wouldn't you want to have more screen space?

If you're going to sit at a desk anyway, and you're going to be paid pretty well - which most developers are - why sacrifice even 20, 10, even 5% productivity if you don't have to? And personally I think it's at the higher end of that scale - at least 20%. Every time I don't have to lose my train of thought because I'm fiddling with Visual Studio tabs - that matters.

Kilnk - Tuesday, November 13, 2018 - link

You're assuming that everyone who needs to use a computer for work needs power and dual monitors. That just isn't the case. The only person kidding themselves here is you.

PeachNCream - Tuesday, November 13, 2018 - link

Resolution and the presence or absence of a second screen are things that are not directly linked to increased productivity in all situations. There are a few workflows that might benefit, but a second screen or a specific resolution, 4k for instance versus 1080, doesn't automatically make a workplace "serious" or...well whatever the opposite of serious is in the context in which you're using it.

steven4570 - Tuesday, November 13, 2018 - link

"I wouldn't call them very "professional" when they are sacrificing 50+% productivity for mobility."

This is quite honestly, a very stupid statement without any real practical views in the real world.

Atari2600 - Wednesday, November 14, 2018 - link

Not really.

The idiocy is thinking that working off a laptop screen is you being as productive as you can be.

The threshold for seeing tangible benefit from more visible workspace (when so restricted) is very low.

I can accept if folks say they dock their laptops and work on large/multiple monitors - but absolutely do not accept the premise that working off the laptop screen should be considered effective working. If you believe otherwise, you've either never worked with multiple/large screens or simply aren't working fast enough or on something complicated enough to have a worthwhile opinion in the matter! [IMO it really is that stark and it boils my piss seeing folks grappling with 2x crap 20" screens in engineering workplaces and their managers squeezing to make them more productive and not seeing the problem right in front of them.]

jospoortvliet - Thursday, November 15, 2018 - link

Dude it depends entirely on what you are doing. A writer (from books to marketing) needs nothing beyond a 11" screen... I'm in marketing in a startup and for half my tasks my laptop is fine, writing in particular. And yes as soon as I need to work on a web page or graphics design, I need my two screens and 6 virtual desktops at home.

I have my XPS 13 for travel and yes I take a productivity hit from the portability, but only when forced to use it for a week. Working from a cafe once or twice a week I simply plan tasks where a laptop screen isn't limiting and people who do such tasks all day (plenty) don't NEED a bigger screen at all.

Hell, I know people who do 80% of their work on a freaking PHONE. Sales folks probably NEVER need anything beyond a 15" screen, and that only for 20% of their work...

Atari2600 - Thursday, November 15, 2018 - link

I never said the non-complicated things need anything more than 1 small screen!