The Intel Core i9-9980XE CPU Review: Refresh Until it Hertz

by Ian Cutress on November 13, 2018 9:00 AM EST

HEDT Performance: Web and Legacy Tests

Web-based benchmarks are more the focus of low-end and small form factor systems, and they are notoriously difficult to standardize. Modern web browsers are frequently updated, with no recourse to disable those updates, which makes it difficult to keep a common platform. The fast-paced nature of browser development means that version numbers (and performance) can change from week to week. Despite this, web tests are often a good measure of user experience: a lot of office work today revolves around web applications, particularly email and office apps, but also interfaces and development environments. Our web tests include some of the industry standard tests, as well as a few popular but older tests.

We have also included our legacy benchmarks in this section, representing a stack of older code for popular benchmarks.

All of our benchmark results can also be found in our benchmark engine, Bench.

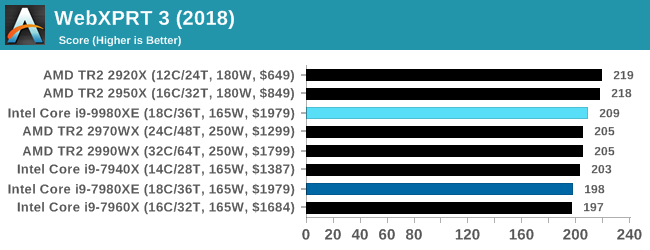

WebXPRT 3: Modern Real-World Web Tasks, including AI

The company behind the XPRT test suites, Principled Technologies, has recently released its latest web test, and rather than attach a year to the name has just called it ‘3’. This latest test (as of when we started the suite) builds upon and develops the ethos of previous tests: user interaction, office compute, graph generation, list sorting, HTML5, and image manipulation, and it even goes as far as some AI testing.

For our benchmark, we run the standard test which goes through the benchmark list seven times and provides a final result. We run this standard test four times, and take an average.
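The averaging step can be sketched in a few lines. This is only an illustration of the arithmetic, assuming the four final scores have already been collected by hand or by a script; the function name and the score values are hypothetical and are not results from this review.

```python
from statistics import mean


def summarize_runs(final_scores):
    """Return the average of the final scores and the run-to-run spread in percent.

    `final_scores` holds one final score per full benchmark run.
    """
    if not final_scores:
        raise ValueError("need at least one run")
    average = mean(final_scores)
    # Spread: difference between best and worst run, relative to the average.
    spread_percent = (max(final_scores) - min(final_scores)) / average * 100
    return average, spread_percent


# Hypothetical final scores from four full runs (illustrative values only).
example_runs = [250.0, 254.0, 248.0, 252.0]
average_score, spread = summarize_runs(example_runs)
print(f"average = {average_score:.1f}, spread = {spread:.1f}%")
```

Reporting the spread alongside the average is an optional extra here: it gives a quick check on whether a browser update or background task disturbed one of the runs.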

Users can access the WebXPRT test at http://principledtechnologies.com/benchmarkxprt/webxprt/

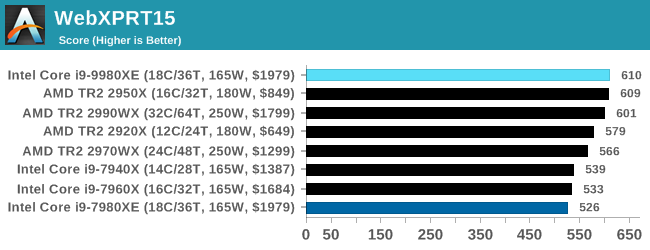

WebXPRT 2015: HTML5 and Javascript Web UX Testing

The older version of WebXPRT is the 2015 edition, which focuses on a slightly different set of web technologies and frameworks that are in use today. This is still a relevant test, especially for users interacting with not-the-latest web applications in the market, of which there are a lot. Web framework development is often very quick but with high turnover: frameworks are quickly developed, built upon, and used, and then developers move on to the next. Adjusting an application to a new framework is an arduous task, especially with rapid development cycles. This leaves a lot of applications ‘fixed-in-time’, and relevant to user experience for many years.

Similar to WebXPRT 3, the main benchmark is a sectional run repeated seven times, with a final score. We repeat the whole thing four times, and average those final scores.

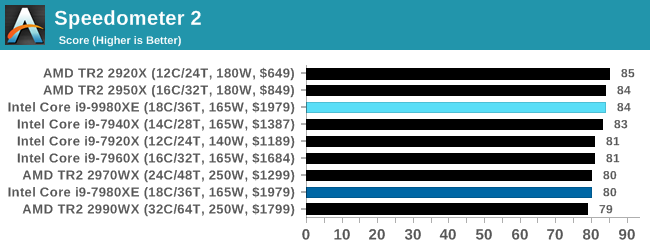

Speedometer 2: JavaScript Frameworks

Our newest web test is Speedometer 2, which is an accrued test over a series of JavaScript frameworks that each do three simple things: build a list, enable each item in the list, and remove the list. All the frameworks implement the same visual cues, but obviously apply them from different coding angles.

Our test goes through the list of frameworks, and produces a final score indicative of ‘rpm’, one of the benchmark's internal metrics. We report this final score.

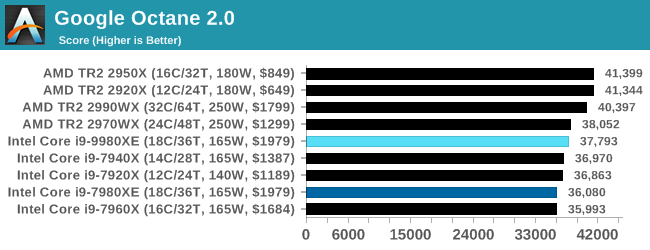

Google Octane 2.0: Core Web Compute

A popular web test for several years, but now no longer being updated, is Octane, developed by Google. Version 2.0 of the test performs the best part of two dozen compute-related tasks, such as regular expressions, cryptography, ray tracing, emulation, and Navier-Stokes physics calculations.

The test gives each sub-test a score and produces a geometric mean of the set as a final result. We run the full benchmark four times, and average the final results.
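As a rough sketch of that scoring, the snippet below takes the geometric mean of the sub-test scores within each run and then averages the per-run results. The function names and the sample scores are hypothetical, and Octane's own scoring code may differ in detail; this only illustrates the arithmetic described above.

```python
import math
from statistics import mean


def geometric_mean(scores):
    """Geometric mean of positive scores, computed via logarithms to avoid overflow."""
    if not scores or any(s <= 0 for s in scores):
        raise ValueError("scores must be a non-empty list of positive numbers")
    return math.exp(sum(math.log(s) for s in scores) / len(scores))


def averaged_result(runs):
    """Arithmetic mean of the per-run geometric means (one list of sub-scores per run)."""
    return mean([geometric_mean(run) for run in runs])


# Hypothetical sub-test scores for two runs (illustrative values only).
example_runs = [
    [100.0, 400.0],
    [200.0, 200.0],
]
print(averaged_result(example_runs))
```

One reason to use a geometric mean for the per-run score is that a single very high or very low sub-test moves it less than it would move an arithmetic mean: the two hypothetical runs above have different arithmetic means but essentially the same geometric mean.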

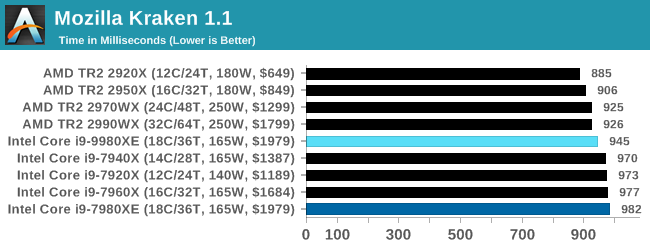

Mozilla Kraken 1.1: Core Web Compute

Even older than Octane is Kraken, this time developed by Mozilla. This is an older test that does similar computational mechanics, such as audio processing or image filtering. Kraken seems to produce a highly variable result depending on the browser version, as it is a test that is keenly optimized for.

The main benchmark runs through each of the sub-tests ten times and produces an average time to completion for each loop, given in milliseconds. We run the full benchmark four times and take an average of the time taken.

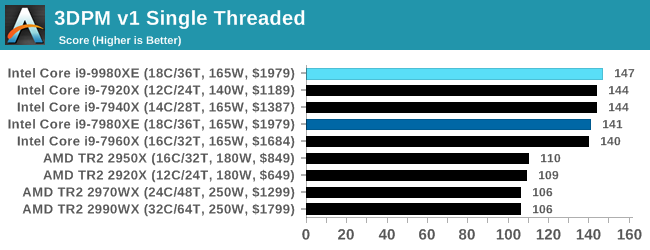

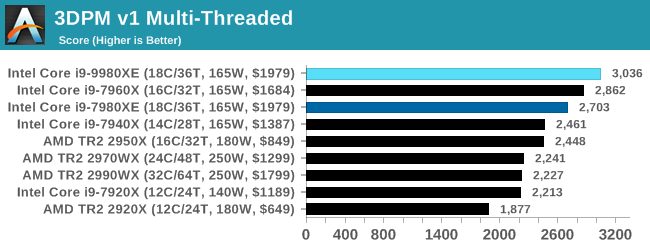

3DPM v1: Naïve Code Variant of 3DPM v2.1

The first legacy test in the suite is the first version of our 3DPM benchmark. This is the ultimate naïve version of the code, as if it were written by a scientist with no knowledge of how computer hardware, compilers, or optimization works (which, in fact, it was at the start). This represents a large body of scientific simulation out in the wild, where getting the answer is more important than it being fast (getting a result in 4 days is acceptable if it’s correct, rather than sending someone away for a year to learn to code and getting the result in 5 minutes).

In this version, the only real optimization was in the compiler flags (-O2, -fp:fast), compiling it in release mode, and enabling OpenMP in the main compute loops. The loops were not configured for function size, and one of the key slowdowns is false sharing in the cache. It also has long dependency chains based on the random number generation, which leads to relatively poor performance on specific compute microarchitectures.
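The dependency chain on random number generation can be illustrated with a toy example. The sketch below is not the 3DPM code: it is a hypothetical one-dimensional random walk driven by a simple linear congruential generator (using the well-known Numerical Recipes constants), chosen only to show why each step has to wait for the previous one.

```python
def lcg_next(state):
    """One step of a 32-bit linear congruential generator (Numerical Recipes constants)."""
    return (1664525 * state + 1013904223) % 2**32


def random_walk_1d(steps, seed=1):
    """Move one particle left or right `steps` times; returns its final position."""
    state = seed
    position = 0
    for _ in range(steps):
        # Loop-carried dependency: the new state needs the previous state,
        # so the iterations of this loop cannot be computed out of order.
        state = lcg_next(state)
        # Use the high bit, since the low bits of an LCG have short periods.
        position += 1 if state & 0x80000000 else -1
    return position


def walk_many(seeds, steps):
    """Each particle owns its generator state, so the chains are independent of each other."""
    return [random_walk_1d(steps, seed) for seed in seeds]


print(walk_many([1, 2, 3, 4], steps=1000))
```

Within one particle the chain is strictly serial, which is the kind of pattern that limits how much work a core can overlap. Across particles the chains do not interact in this sketch, which is an assumption about how such a simulation could be structured rather than a description of the v1 code.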

3DPM v1 can be downloaded with our 3DPM v2 code here: 3DPMv2.1.rar (13.0 MB)

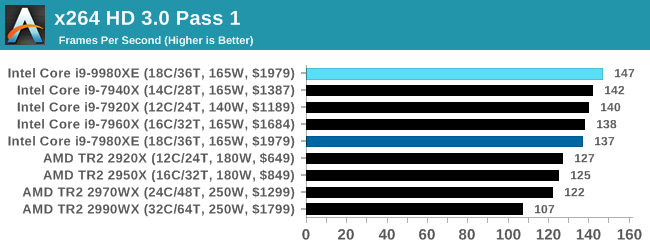

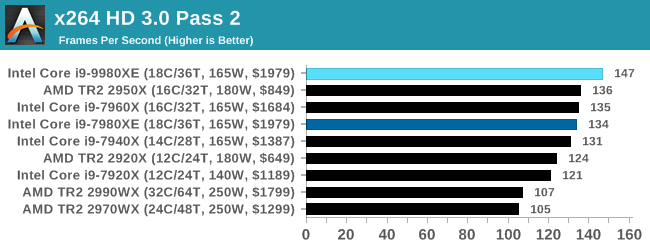

x264 HD 3.0: Older Transcode Test

This transcoding test is super old, and was used by Anand back in the day of Pentium 4 and Athlon II processors. Here a standardized 720p video is transcoded with a two-pass conversion, with the benchmark showing the frames-per-second of each pass. This benchmark is single-threaded, and between some micro-architectures we seem to actually hit an instructions-per-clock wall.

143 Comments


at8750 - Tuesday, November 13, 2018 - link

Hi, Ian.

Did Intel officially announce Skylake-X Refresh be manufactured on 14++ node?

But 9980XE Stepping is the same as 7980XE.

Stepping is 4, there is no change.

SanX - Tuesday, November 13, 2018 - link

Sometimes the advantage of these processors with AVX512 versus usual desktop processors with AVX2 is crazy. The 3D particle tests fly like 500 mph cars. Which other tasks besides 3D particle movement also benefit from AVX512?

How about linear algebra? Does Intel MKL which seems now support these extensions demonstrate similar speedups with AVX512 on solutions Ax=B, say, with the usual dense matrices?

TitovVN1974 - Friday, November 16, 2018 - link

Pray look up linpack results.

SonicAndSmoke - Tuesday, November 13, 2018 - link

@Ian: What's with that paragraph about the Mesh clocks on page 1? Mesh clock is 2.4 GHz stock on SKX, and there is no mesh turbo at all. You can check for yourself with AIDA64 or HwInfo. So does SKX-R have the same 2.4 GHz clock, or higher?

Tamerlin - Tuesday, November 13, 2018 - link

Thorough review as always.

I'd like to request that you consider adding some DaVinci Resolve tests to your suite, as it would be helpful for professional film post production professionals. There is a free license which has enough capability for professional work, and there is free raw footage available from Black Magic's web site and 8K raw footage available from Red's.

Thanks :)

askmedov - Tuesday, November 13, 2018 - link

Intel is playing with fire by doing incremental upgrades over and over again. Look no further than Apple's new iPads - their chips are better than what Intel has to offer in terms of price-power-efficiency. Apple is going to ditch Intel's processors very soon for most of its Mac lineup.

bji - Tuesday, November 13, 2018 - link

Microsoft Windows is known to suck hard when it comes to performance on NUMA architectures and particularly the TR2 processors. See Phoronix for analysis.

Why does Anandtech continue to post Windows-only benchmarks? They are fairly useless; they tell more about the limitations of Microsoft Windows than they do the processors themselves.

Of course, if you're a poor sap stuck running Windows for any task that requires these processors, I guess you care, but you really should be pushing your operating system vendor to use some of their billions of dollars to hire OS developers who know what they are doing.

I just bought a TR2 1950X for my software development workstation (Linux based) and I am fairly confident that for my work loads, it will kick the crap out of these Intel processors. I wouldn't know for sure though because I tend to read Anandtech fairly exclusively for hardware reports, dipping into sites like Phoronix only when necessary to get accurate details for edge cases like the TR2.

It sure would be nice if my site of choice (Anandtech) would start posting relevant results from operating systems designed to take advantage of these high power processors instead of more Windows garbage ... especially Windows gaming benchmarks, as if those are even remotely relevant to this CPU segment!

bji - Tuesday, November 13, 2018 - link

Erp I meant 2950X, sorry typo there.

Shaky156 - Wednesday, November 14, 2018 - link

I have to agree 110%, gaming benchmarks are more gpu/ipc related more than a multicore cpu benchmark.

Somehow seems anandtech could be biased

eva02langley - Wednesday, November 14, 2018 - link

Totally agree, we have come to a time that benchmarks are not even accurately evaluating the product anymore. The big question is how can we depict an accurate picture? Especially if the reviewer is not choosing the right ones properly for real comparison.

Well, seeing disparity from Phoronix is raising major concern to me.