The AMD Threadripper 2 CPU Review: The 24-Core 2970WX and 12-Core 2920X Tested

by Ian Cutress on October 29, 2018 9:00 AM EST

Gaming: Ashes Classic (DX12)

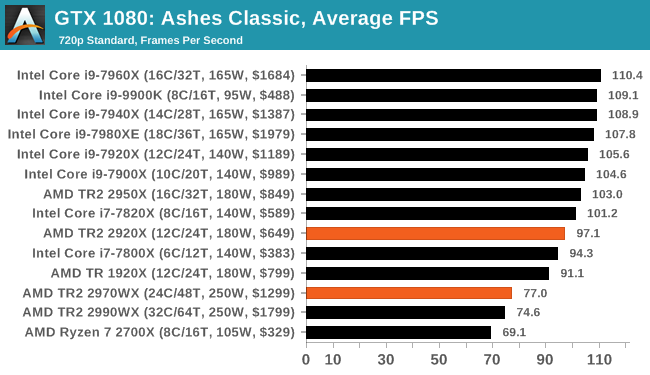

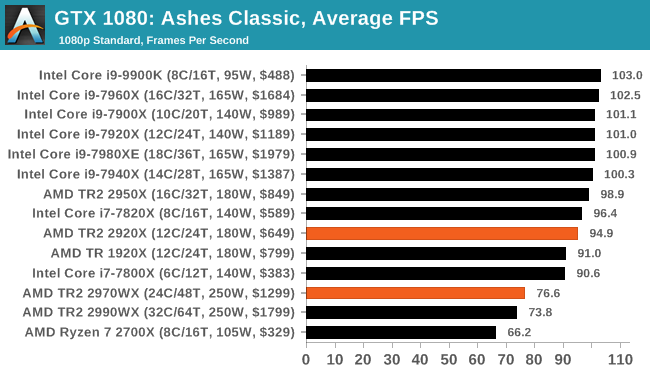

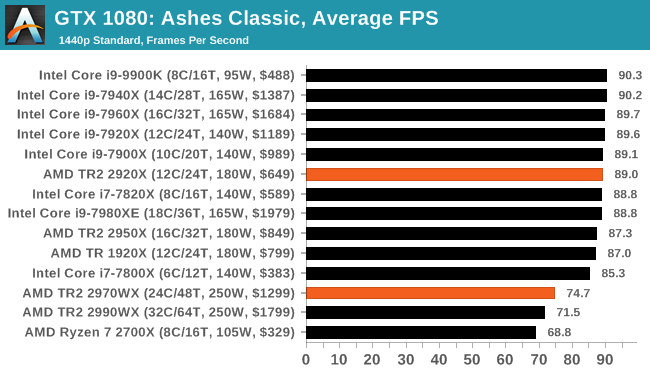

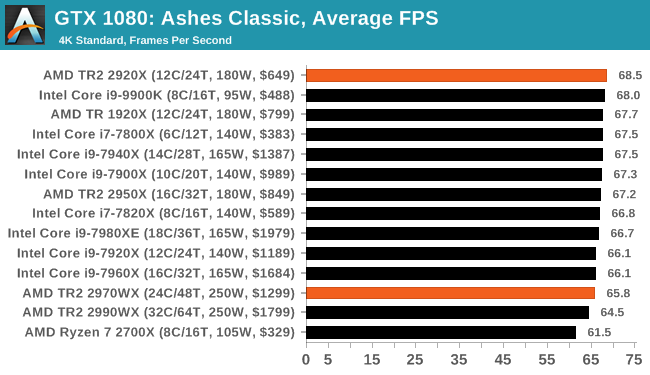

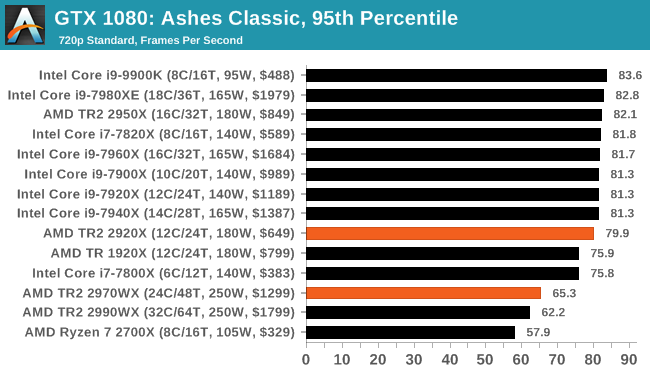

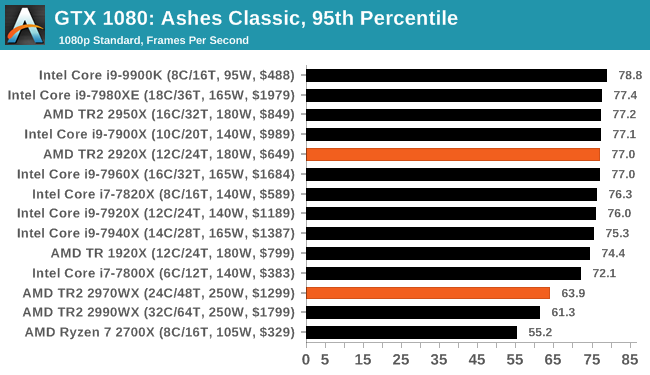

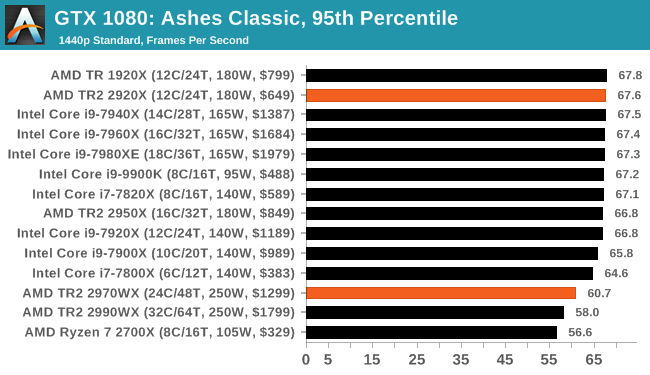

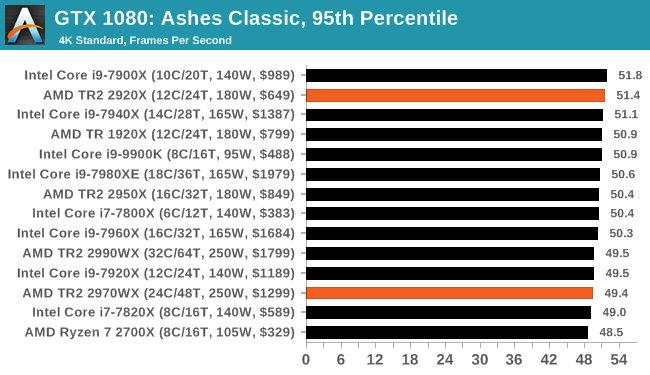

Seen as the holy child of DirectX12, Ashes of the Singularity (AoTS, or just Ashes) was the first title to actively explore as many DirectX12 features as it possibly could. Stardock, the developer behind the Nitrous engine that powers the game, has ensured that the real-time strategy title takes advantage of multiple cores and multiple graphics cards, in as many configurations as possible.

As a real-time strategy title, Ashes is all about responsiveness during both wide-open shots and concentrated battles. With DirectX12 at the helm, the engine can issue more draw calls per second, which lets it handle substantial unit depth and effects that other RTS titles could only achieve by combining draw calls, a workaround that ultimately made some combined unit structures very rigid.

Stardock clearly understand the importance of an in-game benchmark, and ensured that a capable tool was available from day one. With so many additional DX12 features in use, being able to characterize how each one affected the title was important for the developer. The in-game benchmark runs a four-minute, fixed-seed battle environment with a variety of shots, and outputs a vast amount of data to analyze.

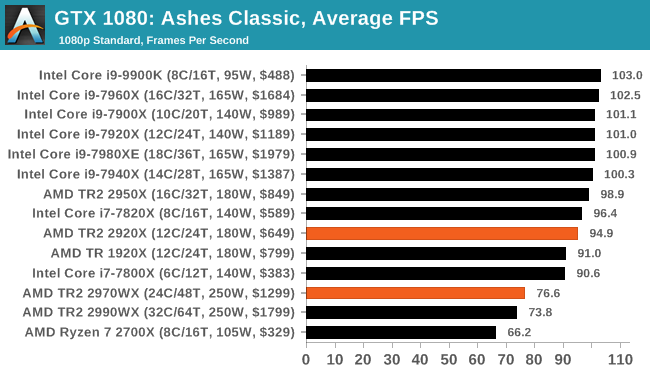

For our benchmark, we run Ashes Classic: an older version of the game from before the Escalation update. We use it because it is easier to automate, with no splash screen, while still offering strong visual fidelity to test.

AnandTech CPU Gaming 2019 Game List

| Game | Genre | Release Date | API | IGP | Low | Med | High |
|---|---|---|---|---|---|---|---|
| Ashes: Classic | RTS | Mar 2016 | DX12 | 720p Standard | 1080p Standard | 1440p Standard | 4K Standard |

Ashes has dropdown options for MSAA, Light Quality, Object Quality, Shading Samples, Shadow Quality, Textures, and separate options for the terrain. There are several presets, from Very Low to Extreme: we run our benchmarks at the above settings, and take the frame-time output for our average and percentile numbers.
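As a rough illustration of how average and percentile figures can be derived from a frame-time log, here is a minimal Python sketch. It assumes the log holds one frame time per rendered frame, in milliseconds, and uses the nearest-rank percentile method; the function names and sample data are hypothetical, and this is not necessarily the exact calculation behind the results on this page.

```python
import math
from typing import Sequence


def average_fps(frame_times_ms: Sequence[float]) -> float:
    """Average FPS as frames rendered divided by total elapsed time.

    This weights every frame by how long it took, rather than averaging
    per-frame FPS values (which would overstate performance).
    """
    if not frame_times_ms:
        raise ValueError("frame-time log is empty")
    total_seconds = sum(frame_times_ms) / 1000.0
    return len(frame_times_ms) / total_seconds


def percentile_fps(frame_times_ms: Sequence[float], pct: float = 95.0) -> float:
    """FPS at the pct-th percentile frame time (nearest-rank method).

    A 95th percentile frame time means 95% of frames finished at least this
    fast; converting it to FPS gives a frame-rate floor that ignores only
    the slowest 5% of frames.
    """
    if not frame_times_ms:
        raise ValueError("frame-time log is empty")
    ordered = sorted(frame_times_ms)
    rank = math.ceil(pct * len(ordered) / 100.0)  # 1-based nearest rank
    slow_frame_ms = ordered[max(rank, 1) - 1]
    return 1000.0 / slow_frame_ms


if __name__ == "__main__":
    # Hypothetical log: 90 frames at ~60 FPS plus 10 stutter frames at ~30 FPS.
    frame_times = [16.7] * 90 + [33.3] * 10
    print(f"Average FPS: {average_fps(frame_times):.1f}")
    print(f"95th percentile FPS: {percentile_fps(frame_times):.1f}")
```

Reporting the percentile alongside the average exposes stutter: in this made-up sample, 10% of frames take twice as long, which drags the 95th percentile down to about 30 FPS while the average stays near 54 FPS.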

All of our benchmark results can also be found in our benchmark engine, Bench.

Ashes: Classic results (charts): Average FPS and 95th Percentile at the IGP, Low, Medium, and High settings.

69 Comments

schujj07 - Monday, October 29, 2018

You would be far too limited by RAM to run 60 VMs on that system. I've got 80 on dual Dell 7425s with dual 24-core Epycs and 512GB RAM, and I'm already RAM limited. Again, I wouldn't install ESXi on these. Use Win 10 and Workstation for your test/dev and you will have a more agile system: if you don't need it for testing that day, you still have Windows. FYI, I'm a VMware admin.

Ratman6161 - Monday, October 29, 2018

It all depends. My home lab environment lets me test things at will and do whatever I want, as opposed to work, where even the lab is more locked down. For me, the Threadrippers would be great... but extreme overkill. I actually use old FX-8320s, which I bought when they were dirt cheap and DDR3 RAM was cheap too. The free version of ESXi works fine for me too. For my purposes the Threadrippers would be really cool, but more expensive than they would be worth.

Icehawk - Monday, October 29, 2018

I would love one of these high-core-count boxes for our test lab. Using W10 and VMs on my desktop is very limiting for me (my work rig is a 7700 with 32GB), and one of these would let me put plenty of resources onboard. Currently my lab runs off a G6 Dell server, which is totally fine, but if I could get myself a new, personal lab I'd want a TR rig, since it can host a lot more RAM than Intel's option.

odrade - Tuesday, October 30, 2018

Hi, I completely agree with you. With security enhancements moving to sandboxes/VMs (Application Guard, sandboxed Defender in 19H1), virtualization scenarios will become more prevalent beyond developer or test use.

One major disappointment is that, 12+ months after GA, there is still no support for nested virtualization on TR/TR2, Ryzen, or Epyc.

This issue seems to be general and not limited to Hyper-V (KVM, etc.).

This is strange, since EPYC has made its way into the Azure and Oracle Cloud catalogs.

During Ignite 2018 there was a demo with an EPYC box (VM or Server).

Regards G.

GreenReaper - Wednesday, October 31, 2018

You could ask for it for Hyper-V over here: https://windowsserver.uservoice.com/forums/295047-...

But such features are often buggy in their initial implementations:

http://www.os2museum.com/wp/vme-broken-on-amd-ryze...

https://www.reddit.com/r/Amd/comments/8ljgph/has_t...

It wouldn't surprise me if they ran into too many problems to want to push out a solution. And Intel has had issues here too - most recently L1 Terminal Fault relating to EPT:

https://www.redhat.com/en/blog/understanding-l1-te...

If people buy enough of them, and there is a performance benefit or it otherwise becomes a feature differentiator, support will doubtless be developed. Chicken and egg, I know.

odrade - Monday, November 5, 2018

Hi, thanks for your input.

This feature is handy if you want to build advanced lab scenarios while preserving your work environment, or to avoid the hassle of dual booting.

Maybe this feature will be enabled with the 2019 Epyc / TR iteration.

And if AMD keeps its socket compatibility promises, I'll refresh my setup and put those extra PCIe lanes to use (upgrading storage as well).

At least the 7nm process should help keep power consumption compatible with the platform.

Regards G.

Blindsay - Monday, October 29, 2018

For the chart on the last page, the "12-core Battle", it would be interesting to see a "similar price battle", like the 9900K vs 7820X vs 2920X. I suspect the 9900K would hold up rather well, especially once it returns to its SRP.

mapesdhs - Monday, October 29, 2018

A battle for what? If it's gaming, get the far cheaper 2700X and use the difference to buy a better GPU, giving better gaming results by default (there are some niche cases at 1080p, but in general the 9900K is a poor-value option for gaming, except for those who've gone the NPC route into high-refresh displays from which there's no way back; ironic, now that NVIDIA has decided to move backwards to sub-60Hz 1080p with RTX).

Blindsay - Monday, October 29, 2018

Definitely not for gaming, lol. It is for a home server (unRAID).

PeachNCream - Tuesday, October 30, 2018

That's a lot of compute for a home server. Home servers (outside of those used for developing professional skills or for testing software away from office usage policies) serve very few useful purposes. They're mainly a solution looking for a problem, or just fun to mess around with. I have an old C2D E8400-powered desktop PC with 8GB of RAM that I recently put online as a local file, media, and internal web server, connected via a cheap TP-Link PCI (non-PCIe) Wi-Fi card. Nothing the kids and I have done with it yet brings it anywhere close to its knees. Even streaming videos from it to three other systems at once is a non-issue, and all of those files are stored on a single 1TB 5400 RPM 2.5-inch mechanical HDD. TR is extreme overkill for a toy server at home. Literally any old scavenged desktop or laptop can act as a home server.