The NVIDIA GeForce RTX 2080 Ti & RTX 2080 Founders Edition Review: Foundations For A Ray Traced Future

by Nate Oh on September 19, 2018 5:15 PM EST - Posted in

- GPUs

- Raytrace

- GeForce

- NVIDIA

- DirectX Raytracing

- Turing

- GeForce RTX

Total War: Warhammer II (DX11)

Last in our 2018 game suite is Total War: Warhammer II, built on the same engine as Total War: Warhammer. While there is a more recent Total War title, Total War Saga: Thrones of Britannia, that game was built on the 32-bit version of the engine. The first TW: Warhammer was a DX11 game that was to some extent developed with DX12 in mind, with preview builds showcasing DX12 performance. In Warhammer II, however, the matter appears to have been dropped: DX12 mode is still marked as beta and also shows a performance regression for both vendors.

It's unfortunate, because Creative Assembly themselves have acknowledged the CPU-bound nature of their games, and with game engines being reused for spin-offs, DX12 optimization would have continued to provide benefits, especially if graphics in RTS-type games lean toward low-level APIs in the future.

There are now three benchmarks with varying graphics and processor loads; we've opted for the Battle benchmark, which appears to be the most graphics-bound.

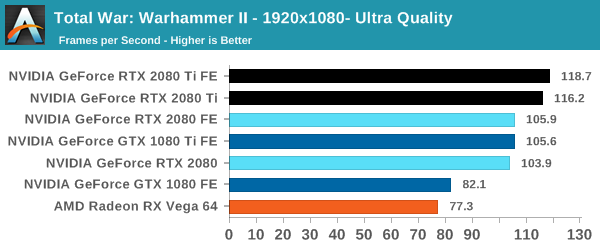

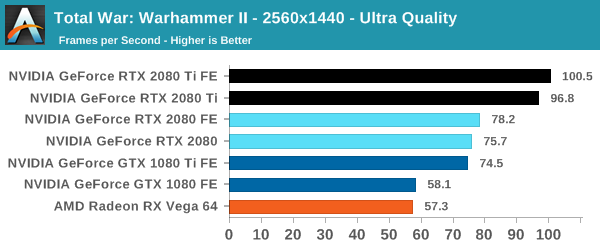

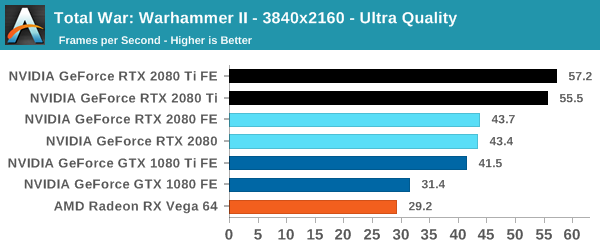

Total War: Warhammer II, Average FPS (1920x1080, 2560x1440, 3840x2160)

At 1080p, the cards quickly run into the CPU bottleneck, which is to be expected with top-tier video cards and the CPU-intensive nature of RTS games. The Founders Edition power and clock tweaks prove less useful here, even at 4K, but the cards otherwise fall into the expected 1-2-3 lineup of 2080 Ti, 2080, and 1080 Ti, with the latter two roughly on par and the 2080 Ti pulling further ahead.

337 Comments


imaheadcase - Wednesday, September 19, 2018

Because Blu-ray players played movies from the start and delivered what they promised from the start, even if they cost a lot? Duh.

PopinFRESH007 - Thursday, September 20, 2018

They played DVDs from the start. Your statement is false.

imaheadcase - Thursday, September 20, 2018

Umm, nope, it's true.

Spunjji - Friday, September 21, 2018

Yeah, there was media available at launch. Also, Blu-ray provided a noticeable jump in both quality AND resolution over DVD. RTX provides maybe the first and definitely not the second.

V900 - Wednesday, September 19, 2018

And it’s clear that you didn’t read the article, or skimmed it at best, if you’re claiming that “the two technologies have not even seen the real light of day”. The tools are out there, developers are working with them, and not only are there many games on the way that support them, there are games out now that use RTX.

Let me quote from the review:

“not only was the feat achieved but implemented, and not with proofs-of-concept but with full-fledged AA and AAA games. Today is a milestone from a purely academic view of computer graphics.”

tamalero - Wednesday, September 19, 2018

Development means nothing unless the games are released. Plans get cancelled, budgets get cut, and technology is replaced or converted/merged into a different standard.

imaheadcase - Wednesday, September 19, 2018

You just proved yourself wrong with your own quote. lol

Guess what? The Python language is out there, let's all develop games with it! All the tools are available! It's so easy! /sarcasm

Ranger1065 - Thursday, September 20, 2018

V900 shillage stench.

PopinFRESH007 - Wednesday, September 19, 2018

Just like those HD-DVD adopters, LaserDisc adopters, and Betamax adopters. V900 is pointing out that early adopters accept a level of risk in adopting new technology to enjoy cutting-edge stuff. This is no different than Blu-ray or DVDs when they came out. People who buy RTX cards have "WORKING TECH" and will have few options to use it, just like the 2nd wave of Blu-ray players. The first Blu-ray player actually never had a movie released for it, and it cost $3800.

"The first consumer device arrived in stores on April 10, 2003: the Sony BDZ-S77, a $3,800 (US) BD-RE recorder that was made available only in Japan.[20] But there was no standard for prerecorded video, and no movies were released for this player."

Even 3 years after that, when there was actually a standard that studios would produce movies for, the players that were out cost over $1000 and there were a whopping 7 titles available. Similar to RTX being the fastest cards available for current technology, those Blu-ray players also played DVDs (gasp).

imaheadcase - Wednesday, September 19, 2018

Again, the point is that Blu-ray WORKED out of the box, even if it was expensive. This doesn't even have any way to test the other stuff. You are literally buying something for an FPS boost over previous gens, and not really a big one at that. It would be a different tune if lots of games already had the tech in hand from NVIDIA and had it in games, just not enabled... but it's not even available to test, which is silly.