The NVIDIA GeForce RTX 2080 Ti & RTX 2080 Founders Edition Review: Foundations For A Ray Traced Future

by Nate Oh on September 19, 2018 5:15 PM EST. Posted in: GPUs, Raytrace, GeForce, NVIDIA, DirectX Raytracing, Turing, GeForce RTX

Total War: Warhammer II (DX11)

Last in our 2018 game suite is Total War: Warhammer II, built on the same engine as Total War: Warhammer. While there is a more recent Total War title, Total War Saga: Thrones of Britannia, that game was built on the 32-bit version of the engine. The first TW: Warhammer was a DX11 game that was to some extent developed with DX12 in mind, with preview builds showcasing DX12 performance. In Warhammer II, however, the matter appears to have been dropped: DX12 mode is still marked as beta, and it also shows a performance regression for both vendors.

It's unfortunate because Creative Assembly themselves have acknowledged the CPU-bound nature of their games, and with game engines being re-used for spin-offs, DX12 optimization would have continued to provide benefits, especially if the future of graphics in RTS-type games leans towards low-level APIs.

There are now three benchmarks with varying graphics and processor loads; we've opted for the Battle benchmark, which appears to be the most graphics-bound.

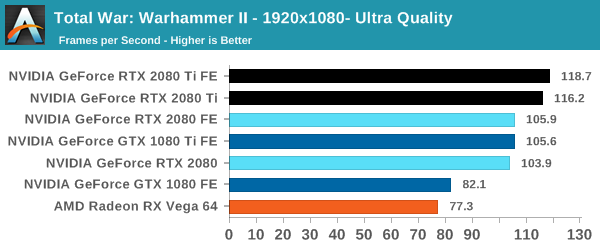

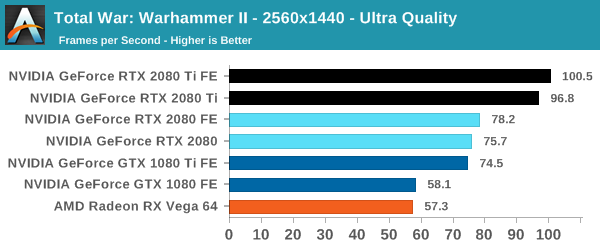

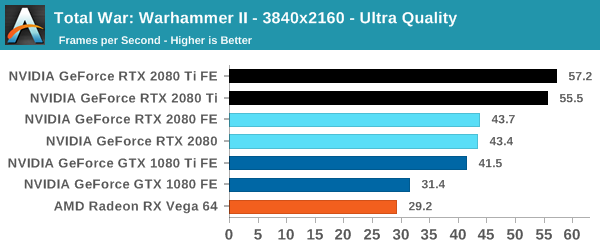

Total War: Warhammer II, Average FPS at 1920x1080, 2560x1440, and 3840x2160

At 1080p, the cards quickly run into the CPU bottleneck, which is to be expected with top-tier video cards and the CPU-intensive nature of RTSes. The Founders Edition power and clock tweaks prove less useful here at 4K, but the models are otherwise in keeping with the expected 1-2-3 lineup of 2080 Ti, 2080, and 1080 Ti, with the latter two roughly on par and the 2080 Ti pushing further.

337 Comments

Billstpor - Friday, September 21, 2018 - link

Wrong. It's already known that the tensor cores have enough juice to run ray-traced effects and DLSS at the same time: https://youtu.be/pgEI4tzh0dc?t=10m55s

Vayra - Monday, September 24, 2018 - link

Wrong, the tensor cores need DLSS to run ray tracing at somewhat bearable FPS - that is, 30 to 60. DLSS is a way to reduce the amount of rays to cast.

Vayra - Monday, September 24, 2018 - link

Hence the non-existent improvement, or even worse position in terms of quality compared to SSAA x4 or better. In other words, running at native 4K is miles sharper and will perform miles better than a DLSS+RTRT combination still.

IUU - Sunday, September 23, 2018 - link

While your argument is solid, these days are just so weird that even $1200 cards seem to make a hell of a lot of sense. This is also valid for similar desktop CPUs. Why? Well, go buy a high-end iPhone or a high-end Android phone... Enough said.

PS. For those who may use arguments like Geekbench and such, it is just insulting, to put it very very kindly!

Gastec - Thursday, September 27, 2018 - link

So basically buying high-priced electronics makes sense because the companies selling them just increase the prices every year to make profits and a certain type of consumer is supporting those companies by purchasing no matter what the price (call them fanbois). The question is: Why, why are those people acting like that? What drives them?

watek - Wednesday, September 19, 2018 - link

Consumers paying these premium prices for features that are not even fully developed or finished is mind boggling!! People are being bent over and screwed by Nvidia hard yet they still pay $1500 to be beta testers until next Gen.

V900 - Wednesday, September 19, 2018 - link

Mindboggling? I suppose it would be for a time traveller visiting from the 19th century, but for everyone else it’s perfectly normal. There is always a price premium for those early adopters who want to live on the cutting edge of technology.

When DVD players came out, they cost over a 1000$ and the selection of movies they could watch was extremely small. When Blu-ray players came out, they also cost well over 1000$ and the entire catalogue of Blu-ray titles was a dozen movies or so.

And keep in mind, that the price that Nvidia charges for joining the early adopter club is really shockingly low.

When OLED or 4K televisions first came out, people paid tens of thousands of dollars for a set, and the selection of 4K entertainment to watch on them was pretty much zero.

With the 2080, early adopters can climb aboard for 600-1000$.

Games that take advantage of DLSS and RTX will be here soon and in the meantime they have the most powerful graphics card on the market that will play pretty much anything you can throw at it, in 4K without breaking a sweat.

It’s not a bad deal at all.

imaheadcase - Wednesday, September 19, 2018 - link

Again, two technologies that have not even seen the real light of day, let alone been proven worth it at all. Early adopters of the other techs you listed at least got WORKING TECH from the start as promised.

V900 - Wednesday, September 19, 2018 - link

Ok, you’re either deliberately spreading untruths and FUD, or you just haven’t paid attention. There are games NOW that support RTX and DLSS. Games like Shadow of the Tomb Raider, Control and PUBG.

And there are more games coming out THIS YEAR with RTX/DLSS support: Battlefield 5 is one of them.

The next Metro is one of many games coming out in early 2019 that also support RTX.

So tell me again how this is different from when Blu-ray players came out?

imaheadcase - Wednesday, September 19, 2018 - link

Those games you listed don't have it now, they are COMING. lol Even then the difference is not even worth it considering the games hardly take a hit on the 1080 Ti. You are the Nvidia shill on here and forums as everyone knows.