The NVIDIA GeForce RTX 2080 Ti & RTX 2080 Founders Edition Review: Foundations For A Ray Traced Future

by Nate Oh on September 19, 2018 5:15 PM EST

Posted in: GPUs, Raytrace, GeForce, NVIDIA, DirectX Raytracing, Turing, GeForce RTX

Ashes of the Singularity: Escalation (DX12)

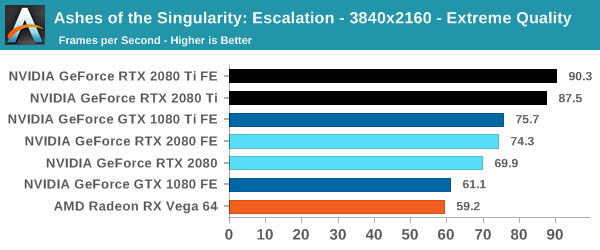

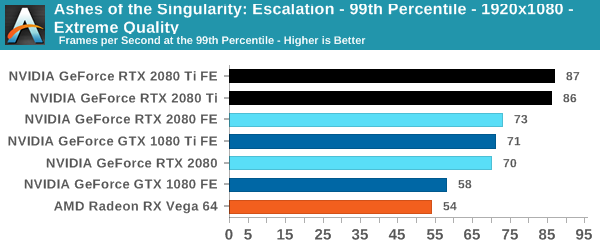

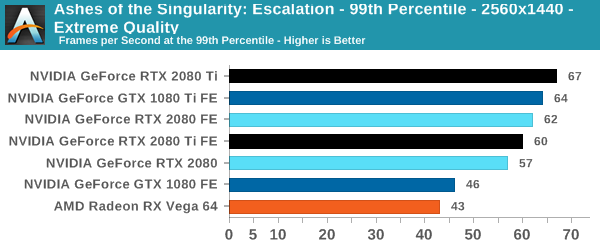

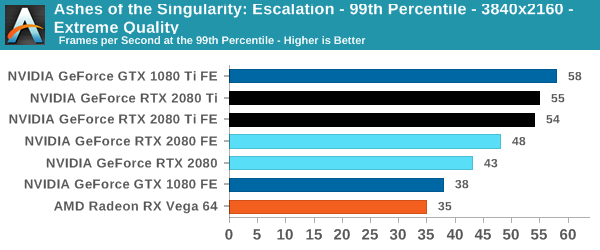

A veteran from both our 2016 and 2017 game lists, Ashes of the Singularity: Escalation remains the DirectX 12 trailblazer, with developer Oxide Games tailoring and designing the Nitrous Engine around such low-level APIs. The game makes the most of DX12's key features, from asynchronous compute to multi-threaded work submission and high batch counts. And with full Vulkan support, Ashes provides a good common ground between the forward-looking APIs of today. Its built-in benchmark tool is still one of the most versatile ways of measuring in-game workloads in terms of output data, automation, and analysis; by offering such a tool publicly and as part-and-parcel of the game, it's an example that other developers should take note of.

Settings and methodology remain identical to those used in the 2016 GPU suite. To note, we are using the original Ashes Extreme graphical preset, which differs from the current one in having MSAA dialed down from x4 to x2 and an adjusted Texture Rank (MipsToRemove in settings.ini).

[Benchmark charts: Ashes, Average FPS and 99th Percentile at 1920x1080, 2560x1440, and 3840x2160]

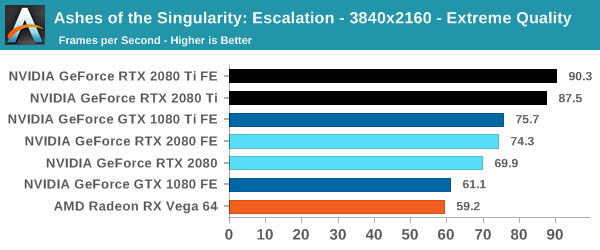

For Ashes, the 20 series fares a little worse in its gains over the 10 series, with an advantage at 4K of around 14 to 22%. Here, the Founders Edition power and clock tweaks are essential in keeping the 2080 FE from outright losing to the 1080 Ti, though our results put the Founders Editions essentially neck-and-neck.
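For readers working from a benchmark's per-frame output, the following is a minimal sketch of how an average FPS figure, a 99th percentile figure, and a percentage advantage could be derived. The nearest-rank percentile method, the function names, and the sample frame times are all assumptions for illustration; they are not the review's documented methodology or its data.

```python
def average_fps(frame_times_ms):
    """Average FPS over a run: total frames divided by total seconds."""
    total_seconds = sum(frame_times_ms) / 1000.0
    return len(frame_times_ms) / total_seconds


def percentile_99_fps(frame_times_ms):
    """FPS equivalent of the 99th-percentile frame time (nearest-rank method)."""
    ordered = sorted(frame_times_ms)
    # 1-based nearest rank: ceil(0.99 * n), computed with integer arithmetic
    rank = -(-99 * len(ordered) // 100)
    return 1000.0 / ordered[rank - 1]


def percent_gain(new_fps, old_fps):
    """Relative advantage of new_fps over old_fps, in percent."""
    return (new_fps / old_fps - 1.0) * 100.0


# Hypothetical frame times in milliseconds (not taken from the review):
# 98 frames at 10 ms and 2 slow frames at 20 ms.
sample = [10.0] * 98 + [20.0] * 2

print(f"Average FPS:     {average_fps(sample):.1f}")
print(f"99th percentile: {percentile_99_fps(sample):.1f}")
print(f"Gain, 61 vs 50:  {percent_gain(61.0, 50.0):.0f}%")
```

In this sketch a handful of slow frames barely moves the average but sets the 99th percentile figure, which is why the two metrics are charted separately.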

337 Comments


whaever85343 - Friday, September 21, 2018 - link

Whatever, this is your new benchmark: https://albertoven.com/2018/08/29/light-lands/

Golgatha777 - Friday, September 21, 2018 - link

I just want to be able to play all my games at 1440p, 60 FPS with all the eye candy turned on. Looks like my overclocked 1080 TI will be good for the immediate future is what I got from this review. The only real upgrade path is to the 2080 TI, and at $1200 that's an extremely hard sell.

vivekvs1992 - Friday, September 21, 2018 - link

Well, the problem is that in India retailers are not willing to reduce the price of the 1080 series. At present the 2080 is cheaper than all models of 1080 Ti. If given the chance I will definitely go for the 2080. Thing is that I will have to invest in a gaming monitor first.

webdoctors - Friday, September 21, 2018 - link

Any mining benchmarks? Can I actually make money buying these cards?

ravyne - Friday, September 21, 2018 - link

I agree these are really for early-adopters of RT, or if you're doing a new build or in need of a new card but want it to last you 3+ years, so you need to catch the RT wave now.

I think the next generation of RT-enabled cards will probably be the optimal entry-point; presumably they'll be able to double (or so) RT performance on a 7nm process, and that means that the next xx70/80 products will actually have enough RT to match the resolution/framerate expectations of a high-end card, and also that the RT core won't be too costly to put into xx50/60 tier SKUs (if we even see a 2060 SKU, I don't think it will include RT cores at all, simply because the performance it could offer won't really be meaningful).

More than a few things are conspiring against the price too -- aside from the specter of tariffs, the high price of all kinds of RAM right now, and that this is a 12nm product rather than 7nm, it looks to me like the large and relatively monolithic nature of the RT core itself is preventing nV from salvaging/binning more dies -- with the cuda/tensor cores I'd imagine they build in some redundant units so they can salvage the SM even if there are minor flaws in the functional units, but since there's only 1 RT core per SM, any flaw there means the whole SM is out -- that explains why the 2080 is based on the same GPU as the TI, and why the 2070 is the only card based on the GPU that would normally serve the xx70 and xx80 SKUs. It's possible they might be holding onto the dies with too many flawed RT cores to re-purpose them for the AI market, but that would compete with existing products.

gglaw - Saturday, September 22, 2018 - link

Is there a graph error for BF1 99th percentile at 4k resolution? The 2080 TI FE is at 90, and the 2080 TI (non founders) is 68. How is it possible to have this gigantic difference when in almost all other benchmarks and games they are neck and neck?

vandamick - Saturday, September 22, 2018 - link

A GTX 980 user. Would the RTX 2080 be a big upgrade? Or should I stick with the 1080 Ti that I had earlier planned? My upgrade cycle is about 3 years.

Inteli - Saturday, September 22, 2018 - link

Are you willing to pay the extra $100-200 for a 2080 over a 1080 Ti for the same performance in current games, in exchange for the new Turing features (ray tracing and DLSS)?

I'm not convinced yet that the 2080 will be able to run ray-traced games at acceptable frame rates, but it is "more future-proof" for the extra money you pay.

mapesdhs - Thursday, September 27, 2018 - link

Thing is, for the features you're talking about, the 2080 _is not fast enough_ at using them. I don't understand why more people aren't taking this on board. NVIDIA's own demos show this to be the case, at least for RT. DLSS is more interesting, but the 2080 has less RAM. Until games exist that make decent use of these new features, buying into this tech now when it's at such a gimped low level is unwise. He's far better off with the 1080 Ti, that'll run his existing games faster straight away.

AshlayW - Saturday, September 22, 2018 - link

They've completely priced me out of the entire RTX series lol. My budget ends at £400 and even that is pushing it :(