The NVIDIA GeForce RTX 2080 Ti & RTX 2080 Founders Edition Review: Foundations For A Ray Traced Future

by Nate Oh on September 19, 2018 5:15 PM EST

Posted in: GPUs, Raytrace, GeForce, NVIDIA, DirectX Raytracing, Turing, GeForce RTX

Meet The New Future of Gaming: Different Than The Old One

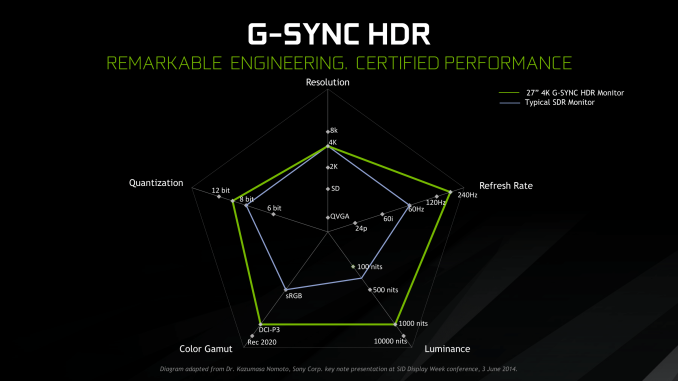

Up until last month, NVIDIA had been pushing a different, more conventional future for gaming and video cards, perhaps best exemplified by their recent launch of 27-inch 4K G-Sync HDR monitors, courtesy of Asus and Acer. The specifications of those displays represented, and still represent, the aspirational capabilities of PC gaming graphics: 4K resolution, a 144 Hz refresh rate with G-Sync variable refresh, and high-quality HDR. The future was maxing out graphics settings on a game with high visual fidelity, enabling HDR, and rendering at 4K with a triple-digit average framerate on a large screen. That target was not achievable with current performance, certainly not by single-GPU cards. In the past, multi-GPU configurations were a stronger option provided that stuttering was not an issue, but recent years have seen both AMD and NVIDIA take a step back from CrossFireX and SLI, respectively.
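As a rough sense of scale for that target, the sketch below compares raw pixel throughput at 4K/144 Hz against 1080p/60 Hz. It assumes every pixel is produced exactly once per refresh, which ignores overdraw, HDR bandwidth, and per-pixel shading cost; the function name `pixels_per_second` is purely illustrative.

```python
def pixels_per_second(width: int, height: int, refresh_hz: int) -> int:
    """Pixels that must be produced each second to fill every refresh."""
    return width * height * refresh_hz


# 4K (3840x2160) at 144 Hz versus 1080p (1920x1080) at 60 Hz.
uhd_144 = pixels_per_second(3840, 2160, 144)
fhd_60 = pixels_per_second(1920, 1080, 60)

# How many times more pixels per second the 4K/144 Hz target demands.
ratio = uhd_144 / fhd_60

print(f"4K @ 144 Hz:    {uhd_144:,} pixels/s")
print(f"1080p @ 60 Hz:  {fhd_60:,} pixels/s")
print(f"ratio:          {ratio:.1f}x")
```

Under these assumptions the 4K/144 Hz target asks for about 9.6 times the pixel rate of 1080p/60 Hz, before any increase in per-pixel work is considered.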

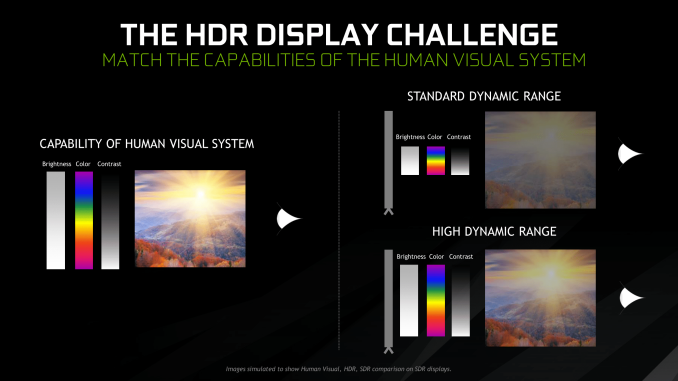

Particularly with HDR, NVIDIA was pitching a qualitative rather than quantitative enhancement in the gaming experience. Faster framerates and higher resolutions were better-known quantities, easily demoed and with more intuitive benefits: higher resolution means more room for detail, and higher, more even framerates mean smoother gameplay and video. Even so, in the past there was the perception of 30fps as cinematic, and 1080p still remains stubbornly popular today. Variable refresh rate technology soon followed, resolving the screen-tearing/V-Sync input lag dilemma, though again it took time to catch on to where it is now: nigh mandatory for a higher-end gaming monitor.

For gaming displays, HDR was substantively different from adding graphical details or allowing smoother gameplay and playback, because it brought a new dimension of ‘more possible colors’ and ‘brighter whites and darker blacks’ to gaming. Because HDR capability required support from the entire graphical chain, as well as a high-quality HDR monitor and HDR content to take full advantage of it, it was harder to showcase. Added to the other aspects of high-end gaming graphics, and pending the further development of VR, this was the future on the horizon for GPUs.

But today NVIDIA is switching gears, going after the fundamental way computer graphics are modelled in games. In the more realistic rendering processes, light is emulated as rays emitted from their respective sources, but computing even a subset of those rays and their interactions (reflection, refraction, etc.) in a bounded space is so intensive that real time rendering was impossible. To get the performance needed to render in real time, games instead rely on rasterization, which essentially boils 3D objects down to 2D representations to simplify the computations, significantly faking the behavior of light.
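To make that cost concrete, here is a minimal sketch of the per-ray work that ray tracing repeats for every ray and every bounce: intersecting a ray with a sphere and computing a mirror reflection. This is a generic textbook illustration, not NVIDIA's implementation or the DXR API; the function names and the tuple-based vectors are assumptions made for the example.

```python
import math


def ray_sphere_hit(origin, direction, center, radius):
    """Distance along a unit-length ray to the nearest hit, or None on a miss.

    Solves |origin + t*direction - center|^2 = radius^2 for t, which is a
    quadratic with leading coefficient 1 because direction has unit length.
    """
    oc = tuple(o - c for o, c in zip(origin, center))
    b = 2.0 * sum(d * x for d, x in zip(direction, oc))
    c = sum(x * x for x in oc) - radius * radius
    disc = b * b - 4.0 * c
    if disc < 0:
        return None  # the ray's line never touches the sphere
    sqrt_disc = math.sqrt(disc)
    # Try the nearer root first; skip hits behind (or at) the ray origin.
    for t in ((-b - sqrt_disc) / 2.0, (-b + sqrt_disc) / 2.0):
        if t > 1e-9:
            return t
    return None


def reflect(direction, normal):
    """Mirror a direction about a unit surface normal: d - 2(d.n)n."""
    d_dot_n = sum(d * n for d, n in zip(direction, normal))
    return tuple(d - 2.0 * d_dot_n * n for d, n in zip(direction, normal))


# A ray looking down -z at a unit sphere centred five units away.
hit = ray_sphere_hit((0, 0, 0), (0, 0, -1), (0, 0, -5), 1.0)
# A ray pointing straight up misses the same sphere.
miss = ray_sphere_hit((0, 0, 0), (0, 1, 0), (0, 0, -5), 1.0)
# Bouncing the first ray off the sphere's front face sends it back along +z.
bounce = reflect((0, 0, -1), (0, 0, 1))
```

A real scene repeats tests like these against many objects for each ray, and each reflection or refraction spawns further rays, which is why the workload grows so quickly compared with rasterizing each triangle once.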

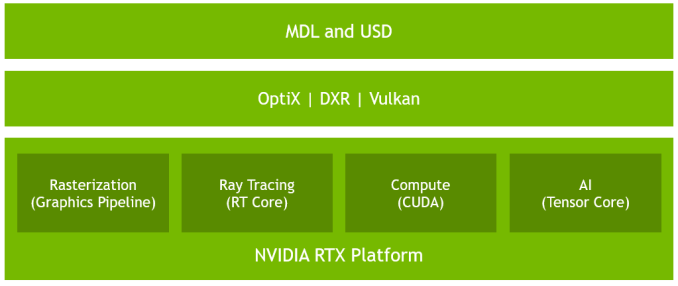

It’s on real time ray tracing that NVIDIA is staking its claim with GeForce RTX and Turing’s RT Cores. As covered in more depth in our architecture article, NVIDIA’s real time ray tracing implementation takes all the shortcuts it can get, incorporating select real time ray tracing effects with significant denoising but keeping rasterization for everything else. Unfortunately, this hybrid rendering isn’t orthogonal to the previous goals; it competes with them for the same GPU performance. Now, the ultimate experience would be hybrid rendered 4K with HDR support at high, steady framerates with variable refresh, even though GPUs didn’t have enough performance to get to that point under traditional rasterization alone.

There’s still a performance cost incurred with real time ray tracing effects, but right now only NVIDIA and developers have a clear idea of what it is. What we can say is that utilizing real time ray tracing effects in games may require sacrificing some or all of high resolution, ultra high framerates, and HDR. HDR is limited by game support more than anything else. But the first two arguably have minimum performance standards when it comes to modern high-end gaming on PC: anything under 1080p is completely unpalatable, and anything under 30fps, or more realistically 45 to 60fps, hurts playability. Variable refresh rate can mitigate the latter and framedrops are temporary, but low resolution is forever.

Ultimately, real time ray tracing support needs to be implemented by developers via a supporting API like DXR (and many have been working hard on doing so), but currently there is no public timeline of application support for real time ray tracing, Tensor Core accelerated AI features, and Turing advanced shading. The list of games with support for Turing features, collectively called the RTX platform, will be available and updated on NVIDIA's site.

337 Comments

beisat - Thursday, September 20, 2018

Very nice review, by far the best one I've read. Thanks for that.

How likely do you think the launch of another generation from Nvidia is in 2019, and/or something competitive from AMD based on 7nm?

I currently have a GTX 970, skipped the Pascal generation and was waiting for Turing. But I don't like being an early adopter and feel that for pure rasterisation, these cards aren't worth it. Yes, they are more powerful than the 10-series I skipped, but they also cost more, so performance per $$$ is similar, and I'm not willing to pay the same amount of $$$ for the same performance as I would have 2 years ago.

Guess I'll just have to stick it out with my 970 at 1080p?

dguy6789 - Thursday, September 20, 2018

RTX 2080 Ti and 2080 are highly disappointing.

V900 - Thursday, September 20, 2018

That’s a rather debatable take that most hardware sites and tech-journalists would disagree with.

But what do they know, amirite?

dguy6789 - Friday, September 21, 2018

Just about every review of these cards states that right now they're disappointing and we need to wait and see how ray tracing games pan out to see if that will change.

We waited this many years to have the smallest generation to generation performance jump we have ever seen. Price went way up too. The cards are hotter and use more power, which makes me question how long they will last before they die.

The weird niche Nvidia "features" these cards have will end up like PhysX.

The performance you get for what you pay for a 2080 or 2080 Ti is simply terrible.

dguy6789 - Friday, September 21, 2018

Not to mention that Nvidia's stock was just downgraded due to the performance of the 2080 and 2080 Ti.

mapesdhs - Thursday, September 27, 2018

V900, you've posted a lot of stuff here that was itself debatable, but that comment was just nonsense. I don't believe for a moment you think most tech sites think these cards are a worthy buy. The vast majority of reviews have been generally or heavily negative. I therefore conclude troll.

hammer256 - Thursday, September 20, 2018

Oof, still on the 12nm process. Frankly, it is quite remarkable how much rasterization performance they were able to squeeze out while putting in the tensor and ray tracing cores. The huge dies are not surprising in that regard. In the end, architectural efficiency can only go so far, and the fundamental limit is still the transistor budget.

With that said, I'm guessing there's going to be a 7nm refresh pretty soon-ish? I would wait...

V900 - Thursday, September 20, 2018

You might have to wait a long time then.

Don’t see a 7nm refresh on the horizon. Maybe in a year, probably not until 2020.

*There isn’t any HP/high density 7nm process available right now. (The only 7nm product shipping right now is the A12, and that’s on a low power/mobile process. The 7nm HP processes are all in various forms of pre-production/research.)

*Price. 7nm processes are going to be expensive. And the Turing dies are gigantic, and already expensive to make on their current node. That means that Nvidia will most likely wait with a 7nm Turing until prices have come down and the process is more mature.

*And then there’s the lack of competition: AMD doesn’t have anything even close to the 2080 right now, and won’t for a good 3 years if Navi is a mid-range GPU. As long as the 2080Ti is the king of performance, there’s no reason for Nvidia to rush to a smaller process.

Zoolook - Thursday, September 27, 2018

Kirin 980 has been shipping for a while and should be in stores in two weeks. We know that at least Vega was sampling in June, so it depends on the allocation at TSMC; it's not 100% Apple.

Antoine. - Thursday, September 20, 2018

The assumption under which this article operates, that the RTX 2080 should be compared to the GTX 1080 and the RTX 2080 Ti to the GTX 1080 Ti, is a disgrace. It allows you to be overly satisfied with performance evolutions between GPUs with vastly different price tags! It just shows that you completely bought the BS renaming of Titans into Tis. Of course the next gen Titan is going to perform better than the previous generation's Ti! Such a gullible take on these new products cannot be by sheer stupidity alone.