The NVIDIA GeForce RTX 2080 Ti & RTX 2080 Founders Edition Review: Foundations For A Ray Traced Future

by Nate Oh on September 19, 2018 5:15 PM EST - Posted in GPUs, Raytrace, GeForce, NVIDIA, DirectX Raytracing, Turing, GeForce RTX

Power, Temperature, and Noise

With a larger chip, more transistors, and more frames, questions inevitably turn to the card's efficiency: overall power consumption, how well the default coolers keep it within its thermal limits, and how loud the fans are under load. Buyers of these cards will be pushing a lot of pixels, which has knock-on effects inside a case. To get the most realistic results for these metrics, we test inside a case.

Power

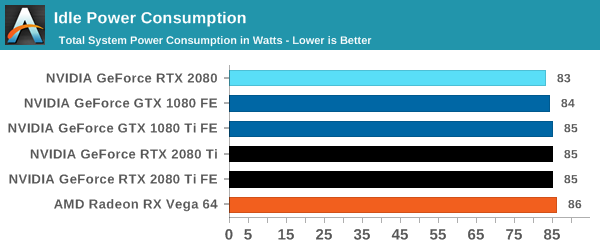

At idle, all of our graphics cards sit in the 83-86W range, though they fall into a clear order: the 2080 is below the 1080, the 2080 Ti sits above the 1080 Ti, and the Vega 64 consumes the most.

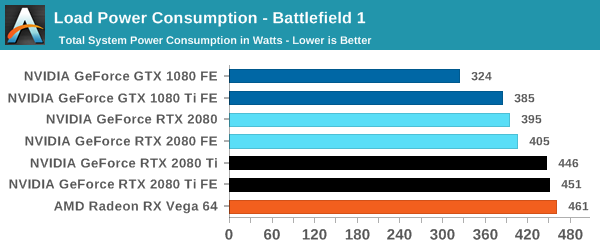

When we crank up a real-world title, all of the RTX 20-series cards are drawing more power. The 2080 consumes 10W more than the previous-generation flagship, the 1080 Ti, and the new 2080 Ti flagship adds another 50W of system power on top of that. That is still not as much as the Vega 64, however.

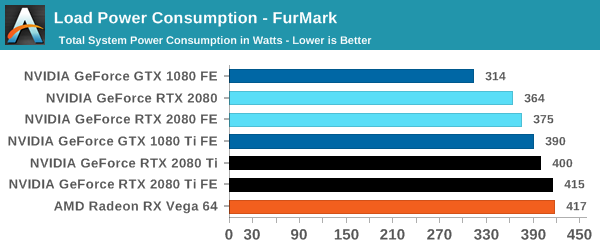

In a synthetic load like Furmark, the RTX 2080 consumes less than the GTX 1080 Ti, although the GTX 1080 draws some 50W less again. The margin between the RTX 2080 FE and RTX 2080 Ti FE is some 40W, which is indicative of the official TDP differences. At the top end, the RTX 2080 Ti FE and RX Vega 64 consume equal power; however, the RTX 2080 Ti FE is pushing through more work.

For power, the overall differences are quite clear: the RTX 2080 Ti is a step above the RTX 2080, while the RTX 2080 is similar to the previous-generation 1080/1080 Ti.

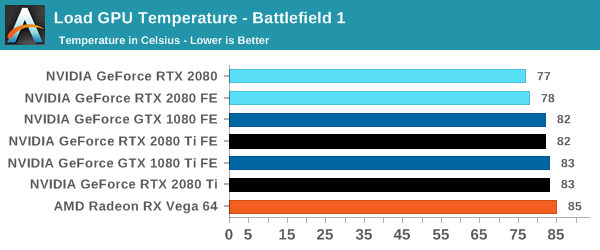

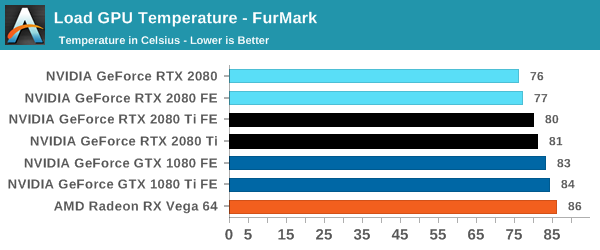

Temperature

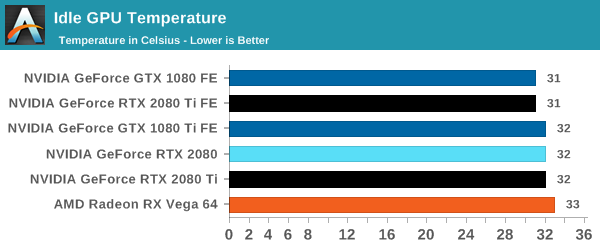

Straight off the bat, moving from the blower cooler to the dual fan coolers, we see that the RTX 2080 holds its temperature a lot better than the previous generation GTX 1080 and GTX 1080 Ti.

In every load scenario, the RTX 2080 is several degrees cooler than both the previous generation and the RTX 2080 Ti. The 2080 Ti fares well in Furmark, coming in at a lower temperature than the 10-series, but trades blows in Battlefield. This is a win for the dual fan cooler over the blower.

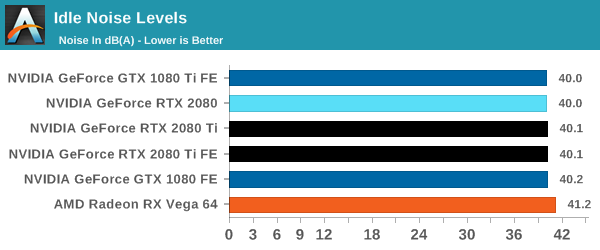

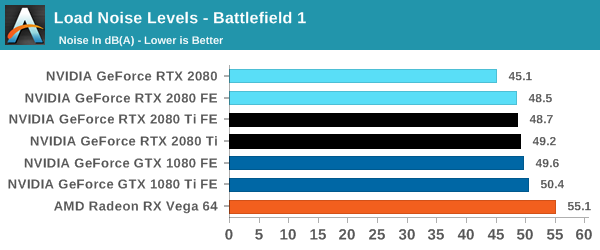

Noise

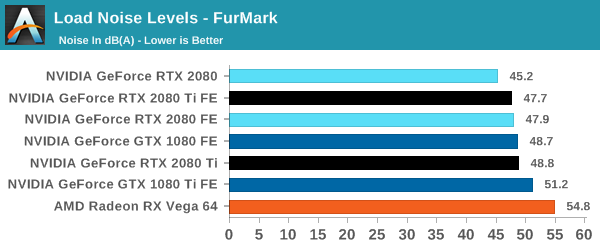

As with temperature, moving from a single blower to two larger fans means that the new RTX cards can be quieter than the previous generation: the RTX 2080 wins here, coming in 3-5 dB(A) lower than the 10-series while performing similarly. The added power needed for the RTX 2080 Ti means that it is still competing with the GTX 1080 on noise, but it always beats the GTX 1080 Ti.
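To put the 3-5 dB(A) figure quoted above in perspective, the decibel scale is logarithmic, so a small numeric gap corresponds to a large ratio. The sketch below is illustrative only: it assumes the readings are A-weighted sound pressure levels taken at the same distance, and the helper name `sound_power_ratio` is hypothetical rather than anything from the review's test methodology.

```python
def sound_power_ratio(delta_db: float) -> float:
    """Ratio of sound power implied by a level change of delta_db decibels.

    Uses the standard definition: level difference = 10 * log10(power ratio).
    """
    return 10 ** (delta_db / 10)


# The review quotes the RTX 2080 as 3-5 dB(A) lower than the 10-series cards.
for drop in (3, 5):
    ratio = sound_power_ratio(-drop)
    print(f"{drop} dB lower -> {ratio:.2f}x the sound power")
```

A 3 dB reduction works out to roughly half the sound power. Perceived loudness does not scale the same way, so this should be read as a physical comparison and not as "half as loud".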

337 Comments


Hixbot - Friday, September 21, 2018 - link

I'm not sure how midrange 2070/2060 cards will sell if they're not a significant value in performance/price compared to 1070/1060 cards. If AMD offer no competition, Nvidia should still compete with itself

Wwhat - Saturday, September 22, 2018 - link

It's interesting that every comment I've seen says a similar thing and that nobody thinks of uses outside of gaming.

I would think that for real raytracers and Adobe's graphics and video software, for instance, the tensor and RT cores would be very interesting.

I wonder though if open source software will be able to successfully use that new hardware or that Nvidia is too closed for it to get the advantages you might expect.

And apart from raytracers and such there is also the software science students use too.

And with the interest in AI currently by students and developers it might also be an interesting offering.

Although that again relies on Nvidia playing ball a bit.

michaelrw - Wednesday, September 19, 2018 - link

"where paying 43% or 50% more gets you 27-28% more performance"

1080 Ti can be bought in the $600 range, whereas the 2080 Ti is $1200, so I'd say that's more than a 43-50% price increase. At a minimum we're talking a 71% increase, at worst 100% (launch MSRP for 1080 Ti was $699).
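The percentages in the comment above can be checked with a few lines of arithmetic. This is a minimal sketch using only the prices the commenter quotes ($699 launch MSRP and roughly $600 street price for the 1080 Ti, $1200 for the 2080 Ti); the function name `percent_increase` is an illustrative choice.

```python
def percent_increase(old_price: float, new_price: float) -> float:
    """Relative price increase from old_price to new_price, in percent."""
    return (new_price - old_price) / old_price * 100


PRICE_2080_TI = 1200       # price quoted in the comment
PRICE_1080_TI_MSRP = 699   # launch MSRP quoted in the comment
PRICE_1080_TI_STREET = 600 # "the $600 range" quoted in the comment

print(f"vs. launch MSRP:  {percent_increase(PRICE_1080_TI_MSRP, PRICE_2080_TI):.1f}%")
print(f"vs. street price: {percent_increase(PRICE_1080_TI_STREET, PRICE_2080_TI):.1f}%")
```

Against the launch MSRP the increase is about 71.7%, and against a $600 street price it is exactly 100%, which matches the range given in the comment.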

V900 - Wednesday, September 19, 2018 - link

Which is the wrong way of looking at it.

NVIDIA didn’t just increase the price for shit and giggles; the Turing GPUs are much more expensive to fab, since you’re talking about almost 20 BILLION transistors squeezed into a few hundred mm².

Regardless: Comparing the 2080 with the 1080, and claiming there is a 70% price increase, is bogus logic in the first place, since the 2080 brings a number of things to the table that the 1080 isn’t even capable of.

Find me a 1080ti with DLSS and that is also capable of raytracing, and then we can compare prices and figure out if there’s a price increase or not.

imaheadcase - Wednesday, September 19, 2018 - link

It brings it to the table... on paper, more like it. You literally listed the two things that are not really shown AT ALL.

mscsniperx - Wednesday, September 19, 2018 - link

No, actually YOUR logic is bogus. Find me a DLSS or Raytracing game to bench.. You can't. There is a reason for that. Raytracing will require a Massive FPS hit, Nvidia knows this and is delaying you from seeing that as damage control.

Yojimbo - Wednesday, September 19, 2018 - link

There are no ray tracing games because the technology is new, not because NVIDIA is "delaying them". As far as DLSS, I think those games will appear faster than ray tracing.

Andrew LB - Thursday, September 20, 2018 - link

Coming soon:

Darksiders III from Gunfire Games / THQ Nordic

Deliver Us The Moon: Fortuna from KeokeN Interactive

Fear The Wolves from Vostok Games / Focus Home Interactive

Hellblade: Senua's Sacrifice from Ninja Theory

KINETIK from Hero Machine Studios

Outpost Zero from Symmetric Games / tinyBuild Games

Overkill's The Walking Dead from Overkill Software / Starbreeze Studios

SCUM from Gamepires / Devolver Digital

Stormdivers from Housemarque

Ark: Survival Evolved from Studio Wildcard

Atomic Heart from Mundfish

Dauntless from Phoenix Labs

Final Fantasy XV: Windows Edition from Square Enix

Fractured Lands from Unbroken Studios

Hitman 2 from IO Interactive / Warner Bros.

Islands of Nyne from Define Human Studios

Justice from NetEase

JX3 from Kingsoft

Mechwarrior 5: Mercenaries from Piranha Games

PlayerUnknown’s Battlegrounds from PUBG Corp.

Remnant: From The Ashes from Arc Games

Serious Sam 4: Planet Badass from Croteam / Devolver Digital

Shadow of the Tomb Raider from Square Enix / Eidos-Montréal / Crystal Dynamics / Nixxes

The Forge Arena from Freezing Raccoon Studios

We Happy Few from Compulsion Games / Gearbox

Funny how the same people who praised AMD for being the first to bring full DX12 support yet only 15 games in the first two years used it, are the same people sh*tting on nVidia for bringing a far more revolutionary technology that's going to be in far more games in a shorter time span.

jordanclock - Thursday, September 20, 2018 - link

Considering AMD was the first to bring support to an API that all GPUs could have support for, DLSS is not a comparison. DLSS is an Nvidia-only feature and Nvidia couldn't manage to have even ONE game on launch day with DLSS.

Manch - Thursday, September 20, 2018 - link

AMD spawned Mantle, which then turned into Vulkan. Also pushed MS to dev DX12 as it was in both their interests. These APIs can be used by all.

DLSS, while potentially very cool, is, as Jordan said, proprietary. Like HairWorks and other crap, it will get light support, but devs, when it comes to feature sets, will spend most of their effort building to common ground. With consoles being AMD GPU based, guess where that will be.

It will be interesting to see how AMD will ultimately respond, i.e. G-Sync/FreeSync, CUDA/OpenCL, etc.

As Nvidia has stated, these features are designed to work with how current game engines already function so they (the devs) don't have to reinvent the wheel. Ultimately this means the integration won't be very deep, at least not for a while.

For consumers the end goal is always better graphics at the same price point when new releases happen.

Not that these are bad cards, just expensive, and two very key features are unavailable, and that sucks. Hopefully the situation will change sooner rather than later.